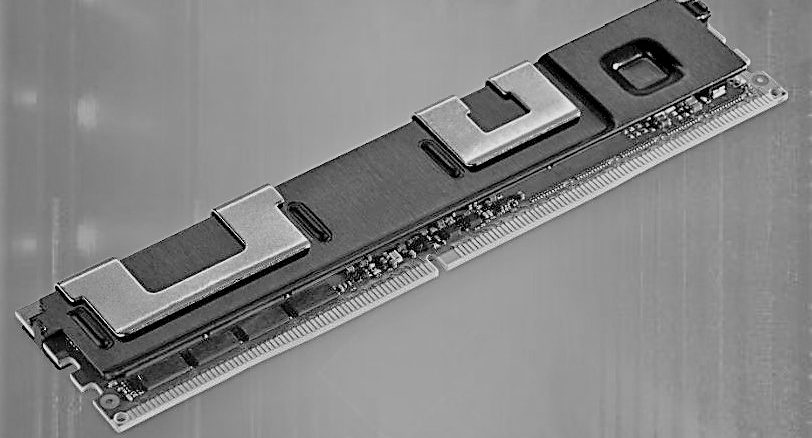

Now that Optane Persistent Memory Modules, based on bit-addressable 3D XPoint memory, are finally available from Intel, everybody in the storage business is trying to figure out how to best make use of them. Another startup, called MemVerge, has just dropped out of stealth mode and is weaving together DDR4 main memory, Optane PMMs, and regular NVM-Express flash drives to create what it calls memory converged infrastructure, which pools the two memory types into a clustered storage system that is a lot zippier than a cluster of all-flash arrays.

Putting all of this hardware together is the easy part. The tricky bit was coming up with a memory hypervisor that lets the Optane PMMs be used in the two different modes Intel has created – Memory Mode and App Direct Mode – in a way that is transparent to the applications on the system. But that is what Charles Fan, co-founder and chief executive officer at MemVerge, tells The Next Platform its Distributed Memory Objects hypervisor can do.

The different modes for Optane PMMs are a bit tricky, as we explained last summer when talking about the “Cascade Lake” Xeon SP processors, announced this week and the first processors from Intel that support Optane PMMs. The different modes of use of Optane PMMs required some changes to the memory controllers on the Xeon SP processors, which is why they are not backwards compatible with the first generation “Skylake” Xeon SP chips.

The first way to use the Optane PMMs is to use them literally as main memory in the system, which is called Memory Mode, logically enough. But here’s the fun bit about Memory Mode: The DDR4 memory is used exclusively as a cache for the Optane PMMs and operating systems and applications don’t see the DDR4 capacity and, funnily enough, the Optane memory is no longer persistent. (There was a reason we kept trying to call these Optane DIMMs, even though Intel doesn’t.) According to Fan, thanks to the DDR4 caching (for both reads and especially writes), which is necessary because CPUs talk to DDR memory controllers a lot faster than Optane can handle in native bit-addressable protocols, the performance of the Optane PMMs inside of a server is very close to DDR4-only systems. “But this is just bigger, slightly cheaper, and slower DRAM,” as Fan puts it.

In another mode of use, called App Direct Mode, DDR4 stays as main memory and the Optane PMMs are persistent, but applications have to be tweaked to make use of them. This will be a pain in the neck for a while as application developers sort out the Optane software development kit, tweak applications to run in App Direct Mode, and ascertain the performance and operational implications of using Optane PMMs in this fashion.
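To give a feel for what "tweaking" an application for App Direct Mode involves, here is a minimal sketch of the load/store-through-a-mapping pattern that persistent memory programming generally relies on. It is not Intel's SDK and not MemVerge's code: it uses Python's standard `mmap` module on an ordinary temporary file as a stand-in for a file on a persistent-memory-backed filesystem, and the names `write_record`, `read_record`, `demo`, and `RECORD_SIZE` are hypothetical.

```python
import mmap
import os
import tempfile

RECORD_SIZE = 64  # bytes reserved for one record (illustrative value)


def write_record(path: str, payload: bytes) -> None:
    """Store payload in a memory-mapped file using plain byte stores."""
    if len(payload) > RECORD_SIZE:
        raise ValueError("payload too large for the mapped region")
    with open(path, "r+b") as f:
        with mmap.mmap(f.fileno(), RECORD_SIZE) as region:
            region[:len(payload)] = payload  # write through the mapping
            region.flush()                   # ask the OS to push it to the backing store


def read_record(path: str) -> bytes:
    """Map the file again and read the record back, dropping zero padding."""
    with open(path, "rb") as f:
        with mmap.mmap(f.fileno(), RECORD_SIZE, access=mmap.ACCESS_READ) as region:
            return bytes(region[:RECORD_SIZE]).rstrip(b"\x00")


def demo() -> bytes:
    # Assumption: a temp file stands in for a file on persistent memory.
    fd, path = tempfile.mkstemp()
    try:
        os.ftruncate(fd, RECORD_SIZE)  # size the backing file before mapping it
        os.close(fd)
        write_record(path, b"checkpoint-0001")
        return read_record(path)
    finally:
        os.remove(path)
```

The point of the sketch is the shape of the change: the application stops issuing read and write calls against a block device and instead treats a mapped region as memory, with an explicit flush where durability matters. That is the kind of rewrite existing applications would have to go through.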

Intel doesn’t talk about this, but there is another mode in which you can address Optane PMMs, according to Fan. And that is to use it as a very fast block device that looks and smells like an Optane SSD, but it is just riding on the memory bus instead of the PCI-Express peripheral bus and it is using Optane PMMs. In this case, there is no change necessary to applications running on the server, but you are giving up memory semantics.

The MemVerge team knows all of this, and the confusion it is going to create. And that is why MemVerge is focusing on embedding Optane PMMs inside of a clustered flash array. In this way, the problem can be removed from the server node and masked inside of the storage cluster, allowing the storage hypervisor to make use of the Optane PMMs in any of these three modes in a way that is utterly transparent to the applications running on servers on the other side of the wire.

The Distributed Memory Objects hypervisor does all of the work of abstracting the two Optane PMM addressability modes inside of the cluster, deciding which method is suitable for any given workload and doing so transparently to users. This is, perhaps, exactly the kind of software layer that needs to be embedded inside of Windows Server and Linux to make this all a lot easier. All that MemVerge knows is that by mixing and converging these two types of memory together, with both modes supported through a single API, it can get a fast and persistent storage system that accelerates all kinds of workloads.

Looking Forward to 3D XPoint For A Long Time

MemVerge was incorporated back in 2017, but the company’s founders have been thinking about persistent memory for a lot longer than this.

Fan studied at California Institute of Technology, and had Jehoshua Bruck, currently professor of computation and neural systems and electrical engineering, as his PhD advisor. Back in 1998, the two co-founded Rainfinity, which created a hypervisor to virtualize network attached storage; the company was acquired by EMC (now part of Dell) in 2005 for just under $100 million. Bruck went on to be one of the co-founders of all-flash array innovator XtremIO, which EMC bought in 2012 for $430 million, and Fan moved over to VMware (when it was still separate from EMC and Dell) to run its Virtual SAN storage software business unit. Both Bruck and Fan were privy to the briefings on what Intel and Micron Technology, who co-developed 3D XPoint memory, were working on way back in 2012, and they have watched as it came along. In 2017, when the Optane SSDs came out and Amazon Web Services went into production with them and Intel was talking up how it could deliver Optane modules that would plug into DDR memory slots, Bruck and Fan figured that Optane PMMs were real and set about to create MemVerge and build a better storage mousetrap. The two enlisted the help of Yue Li, a post-doc at Caltech, as chief technology officer of the new company, with Bruck as chairman and Fan as chief executive officer. MemVerge today has 25 employees, with all of them being engineers, and it has raised $24.5 million in Series A funding from Gaorong, JVP, LDVP, Lightspeed, and Northern Light.

MemVerge has been developing its memory converged infrastructure for two years, and has been testing it with early adopter customers for the past year. This week, as it is dropping out of stealth, it is getting set to open up a beta testing program in June to put its storage through a wider set of paces.

What MemVerge is trying to address are some of the concerns of companies wanting to adopt Optane PMMs, according to Fan. “What distributed storage software are customers supposed to use with this storage? Optane PMMs have a latency on the order of 100 nanoseconds. All of the previous generations of distributed storage software were written for media that has a latency of roughly 100 microseconds – three orders of magnitude slower. The faster NVM-Express SSDs from Intel and Samsung can get down to 10 microseconds, and it is still two orders of magnitude away. So if you are using HDFS, Ceph, or Gluster, then 95 percent of the latency will be in the storage software and the stack becomes the bottleneck. That is going to slow down the whole storage system and remove the benefits of the hardware. So a new software stack has to be created to take full advantage of this new media.”
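Fan's arithmetic is easy to check. The sketch below uses the three media latencies he quotes; the 2 microsecond software overhead is purely a hypothetical figure chosen for illustration, not a number from MemVerge or from any measurement of HDFS, Ceph, or Gluster.

```python
import math

# Media latencies quoted by Fan, in nanoseconds.
PMM_NS = 100          # Optane PMM
LEGACY_NS = 100_000   # media that earlier distributed storage software targeted
NVME_NS = 10_000      # the faster NVM-Express SSDs

# Hypothetical fixed cost of the storage software stack, in nanoseconds.
# This value is an assumption for illustration only.
SOFTWARE_NS = 2_000


def orders_of_magnitude(slow_ns: int, fast_ns: int) -> int:
    """Whole powers of ten separating two latencies."""
    return round(math.log10(slow_ns / fast_ns))


def software_share(software_ns: int, media_ns: int) -> float:
    """Fraction of end-to-end latency spent in software rather than media."""
    return software_ns / (software_ns + media_ns)


gap_legacy = orders_of_magnitude(LEGACY_NS, PMM_NS)   # 100 us vs 100 ns
gap_nvme = orders_of_magnitude(NVME_NS, PMM_NS)       # 10 us vs 100 ns
share_on_legacy = software_share(SOFTWARE_NS, LEGACY_NS)
share_on_pmm = software_share(SOFTWARE_NS, PMM_NS)
```

With that assumed overhead, the software accounts for about 2 percent of total latency on 100 microsecond media but a little over 95 percent on 100 nanosecond media. The stack has not changed, only the media underneath it, which is the gist of Fan's argument.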

The other issue is that the fatter memory that comes from using Optane PMMs inside of a node is great, but what happens when a dataset goes beyond even those bounds? If you buy an eight socket server, you can get a larger main memory footprint, but an eight socket server also costs a lot more. Fan quips it is 10X more, and it is probably not that bad, but those high end Xeon SP SKUs with lots of cores and the ability to scale to eight sockets are easily five to ten times as expensive as those that have more modest core counts and scale only to two sockets in a node. But the big issue is that companies will want to use Optane PMMs as bigger memory and faster storage, at the same time, and they do not want to rewrite their applications to do this.

The Distributed Memory Objects hypervisor does this, using Remote Direct Memory Access (RDMA) protocols over Ethernet or InfiniBand to lash the memories together in each node in the storage cluster. This is accomplished by taking the App Direct Mode and creating memory and block drivers inside this hypervisor, so both types of uses for Optane PMMs – volatile storage and block storage – can be done at the same time within a single storage cluster. By the way, the architecture of this hypervisor and its MemVerge Distributed File System, which tiers data off to flash devices, are not dependent on Optane PMMs, so as soon as other kinds of persistent memory or non-volatile storage are available, MemVerge can snap these into its appliances.

At the moment, the MemVerge storage cluster can scale across 128 nodes and up to 768 TB of main memory. The storage node has 6 TB of Optane PMM capacity, and the DDR4 cache can range in size from 128 GB to 768 GB. A typical system will have 384 GB or 512 GB of DDR4 memory, and some of the capacity will be used as plain DRAM in the system and some will be used as cache for the Optane PMMs. The nodes also have 360 TB of QLC SSDs, which is where data is tiered to by MVDFS. The Optane PMMs are used to store metadata for the storage cluster and its distributed file system, as a write cache, and to expand node main memory. MemVerge is saying that it can get 72 GB/sec into and out of the storage nodes and deliver access latency of under 1 microsecond to the data in its distributed file system.
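The cluster-level memory figure follows directly from the per-node numbers, as this back-of-the-envelope sketch shows. The constant and function names are ours, and the flash total assumes the 360 TB of QLC capacity is a per-node figure, which the description implies but does not state outright.

```python
NODES_MAX = 128        # maximum cluster size quoted by MemVerge
PMM_TB_PER_NODE = 6    # Optane PMM capacity per storage node
QLC_TB_PER_NODE = 360  # assumption: the 360 TB QLC figure is per node


def cluster_total(per_node: int, nodes: int = NODES_MAX) -> int:
    """Aggregate capacity across the cluster, in the unit of per_node."""
    return per_node * nodes


pmm_total_tb = cluster_total(PMM_TB_PER_NODE)   # matches the 768 TB quoted
qlc_total_tb = cluster_total(QLC_TB_PER_NODE)   # 46,080 TB if per node
```

So the 768 TB of "main memory" is the Optane PMM capacity summed across a full 128-node cluster, and under the per-node assumption the flash tier behind it would come to roughly 46 PB.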

By the way, because this is a hyperconverged architecture, you can run applications atop the storage cluster rather than have them running on external servers.

MemVerge has some results back from alpha customers who have been trying out its storage cluster appliances.

The first scenario is for machine learning training, where a customer has an HDFS cluster feeding into training systems. This customer has very large training sets, with data from hundreds of millions of users. The slower I/O in disk-based HDFS slows down the training, even with shuffle operations and scratch being cached on local SSDs in the HDFS nodes. The initial customer setup also restricts how frequently training runs can be checkpointed to back up their intermediate data during a training session. Loading the data from HDFS into the training nodes also takes a long time because it is moving millions of small files. By shifting the local shuffle and scratch spaces over to the remote MVDFS running on the MemVerge appliances, which were linked with 100 Gb/sec Ethernet switches that supported RoCE, the training speed increased by 6X, the data import was 350X faster, and checkpointing was instantaneous and a lot more frequent.

In scenario two, the customer put ten MemVerge storage appliances beside an existing 2,000-node Spark in-memory data warehouse cluster, with 25 Gb/sec Ethernet with RoCE support providing the fabric on both Spark and storage clusters. Just by offloading storage to the MemVerge appliances and making use of their memory to expand the capacity of the existing Spark nodes, the TeraSort benchmark run on this system ran 5X faster and the Resilient Distributed Datasets (RDD) caches in Spark ran 7X faster. And once again, intermediate data could be checkpointed out to the MemVerge appliances fairly instantaneously.

With the MemVerge stack only going into beta testing in June, pricing is not available now. And given Fan’s experience at VMware, we can expect MemVerge to price to value and charge for its appliances based on the value they deliver. It will be up to MemVerge to show that return on investment in case after case to justify whatever price it commands, and it is safe to say that VMware was a master at this and Fan knows full well how to do this.
