It is hard to remember that for decades, whether a system was large or small, its storage was intricately and inescapably linked to its compute.

Network attached storage, as pioneered by NetApp, helped break those links between compute and storage in the enterprise at the file level. But it was the advent of storage area networks that let storage remain reasonably tightly coupled to servers and work at the lower block level, below the file system, while at the same time allowing that storage to scale independently of the number of disks you might jam into a single box.

SANs were a kind of precursor to the disaggregated and composable systems that we often talk about here at The Next Platform. And they are still a very big business in the enterprise, even if hyperscalers and cloud builders do not use such storage except, perhaps ironically, in the back office systems where they count their money and pay their bills. (You don’t think Google and Microsoft and Facebook and Amazon write their own code for that or co-design systems to support these functions, do you? They most certainly do not.) But with the advent of clustered storage, the rise of unstructured data, and the object storage that not only holds that data but can also underpin block and file storage, the era of the SAN has, as you might expect, long since peaked, even if it will take a long, long time before the last SAN and its Fibre Channel switching are unplugged in the glass house.

Some kinds of change come to the datacenter explosively, some at the pace of molasses in the winter. The ascent of InfiniBand and now Ethernet as storage fabrics and the decline of Fibre Channel are measured in decades, not years. But it looks like the enterprise will build storage that resembles what is seen in HPC centers and at hyperscalers and cloud builders, with their parallel and clustered file systems and high speed, lossless InfiniBand or Ethernet networks, and that looks a lot less like the massive aggregations of spinning rust, now sometimes front ended by flash to speed up accesses and still using Fibre Channel switching, that have dominated the datacenter.

A Walk Down History Lane

At this point in the history of networking, Fibre Channel, Ethernet, and InfiniBand are on parallel tracks that seem to overlap, with InfiniBand usually out on the bow wave of bandwidth expansion and Fibre Channel riding on the tail. But it wasn’t always this way. Fibre Channel once held the bandwidth lead, which is why it became the interconnect of choice for attaching high performance SAN arrays to servers, and its popularity and the vast installed base of SAN storage in the enterprise are why it persists today, even as it is, in some cases, being supplanted by Ethernet and InfiniBand.

Back in the mid-1990s, when decoupling storage from servers and aggregating it in a SAN first took off, Fibre Channel had a serious bandwidth advantage with its blazing 1 Gb/sec speed, and it quickly moved up to 2 Gb/sec because it did not have to go through the Ethernet standards process to blaze ahead. At the time, Ethernet was humming along at 100 Mb/sec, which was fine for corporate networks and maybe for NAS devices linked at the file system layer where performance was not critical. But that was not good enough for linking storage arrays to servers at the block level, where databases and then virtual machines – yes, proprietary and Unix machines had hypervisors a decade before the X86 platform went crazy for them – needed more oomph.

InfiniBand would not even be invented until the late 1990s, and even though it was created to be a universal fabric for linking servers to each other, to storage, and to clients, that never really happened. InfiniBand was largely relegated to being a high speed interconnect for HPC clusters until fairly recently, when it has also been used as the interconnect fabric for clustered storage systems as well as in niche data analytics roles.

Perhaps more importantly for the emergence of Fibre Channel, the parallel SCSI interface could only attach a handful of disk drives because of its limited fan out. To scale out SCSI, the interface was serialized, and the SCSI protocol was put on a wire and became Fibre Channel. So not only did Fibre Channel have more bandwidth, but it allowed many more devices to hang off a channel. In effect, it made distant disks look local and allowed more of them to be allocated to a single server. That SAN was more an aggregation of independent, logical storage arrays than a shared storage utility, mind you, but the beautiful thing was that all of the operating system and application software could speak the same SCSI block storage protocol and it would work over the Fibre Channel network. And unlike Ethernet-attached storage, Fibre Channel SANs ran over a lossless fabric, a feature that has only been added to Ethernet in recent years – Data Center Bridging is a decade old, but has only recently matured and taken off. The Fibre Channel protocol was also absorbed into Ethernet, starting with Fibre Channel over Ethernet (FCoE) on the Unified Computing System servers from Cisco Systems back in early 2009.
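To make “the SCSI protocol was put on a wire” concrete, here is a simplified Python sketch of a SCSI READ(10) command carried as a Fibre Channel Protocol (FCP) command behind a 24-byte Fibre Channel frame header. It is an illustration, not a conformant encoder: the start-of-frame and end-of-frame delimiters and the CRC are left out, F_CTL and the sequence fields are zeroed, and the port addresses, exchange ID, and helper function names are made up for the example.

```python
import struct

# Hypothetical sketch: a SCSI READ(10) wrapped for Fibre Channel.
# Only R_CTL, TYPE, and the SCSI opcode carry real meaning; other fields are zeroed.
R_CTL_FCP_CMND = 0x06  # routing control: FC-4 device data, unsolicited command
TYPE_FCP = 0x08        # FC-4 TYPE code for SCSI over Fibre Channel (FCP)
SCSI_READ_10 = 0x28    # SCSI opcode for READ(10)


def read10_cdb(lba: int, blocks: int) -> bytes:
    """Build a 10-byte SCSI READ(10) command descriptor block."""
    # opcode, flags, 32-bit LBA, group number, 16-bit transfer length, control
    return struct.pack(">BBIBHB", SCSI_READ_10, 0, lba, 0, blocks, 0)


def fcp_cmnd_payload(lun: int, cdb: bytes, data_len: int) -> bytes:
    """Wrap a CDB in a simplified 32-byte FCP_CMND information unit."""
    cdb16 = cdb.ljust(16, b"\x00")         # CDB field is 16 bytes, zero padded
    rddata = 0x02                           # bit 1: initiator expects to read data
    return (struct.pack(">Q", lun)          # 8-byte logical unit number
            + bytes([0, 0, 0, rddata])      # CRN, task attribute, task mgmt, flags
            + cdb16
            + struct.pack(">I", data_len))  # FCP_DL: bytes the initiator expects


def fc_header(d_id: int, s_id: int, ox_id: int) -> bytes:
    """Pack a 24-byte Fibre Channel frame header as six big-endian words."""
    words = [
        (R_CTL_FCP_CMND << 24) | (d_id & 0xFFFFFF),  # R_CTL | destination ID
        s_id & 0xFFFFFF,                              # CS_CTL (0) | source ID
        TYPE_FCP << 24,                               # TYPE | F_CTL (left at 0)
        0,                                            # SEQ_ID | DF_CTL | SEQ_CNT
        ((ox_id & 0xFFFF) << 16) | 0xFFFF,            # OX_ID | RX_ID (unassigned)
        0,                                            # parameter field
    ]
    return struct.pack(">6I", *words)


cdb = read10_cdb(lba=2048, blocks=8)
frame = (fc_header(d_id=0x010200, s_id=0x010300, ox_id=0x1234)
         + fcp_cmnd_payload(lun=0, cdb=cdb, data_len=8 * 512))
print(len(cdb), len(frame))  # 10 56
```

The point is the layering: the same SCSI command descriptor block a server would send to a local disk rides inside the Fibre Channel frame, which is why operating systems could treat SAN volumes as ordinary block devices.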

The rise of Fibre Channel was a little more complicated than that, but it was a relatively easy transition that allowed volume economics to come into play. Yes, SANs were more expensive, but they were also a lot more flexible, and the net-net – as is so common in the IT industry – is that a new technology was not really cheaper, but it was better.

That said, even if SAN was better than direct or network attached storage – DAS and NAS – it was not something that could be deployed affordably at hyperscale, which is why you don’t see SANs at Google, Amazon, Microsoft, Facebook, and the like. They had to invent something else that used commodity parts in a different way, and they decided to build massive Ethernet networks that spanned a datacenter of 100,000 server and storage nodes and interconnected the whole shebang, allowing anything to talk to anything else. Now, that disaggregated storage approach, which is most definitely not hyperconverged storage, no matter what people say, is trickling down from on high and it is changing the Ethernet and Fibre Channel markets with it.

The Fibre Channel switch and adapter market is flat to declining slightly, as the market data for port counts from Creehan Research above suggests, but Adarsh Viswanathan, senior manager of datacenter product management at Cisco, says that it has a very, very long tail. Viswanathan adds that among enterprise customers, 80 percent of the flash arrays sold are linked to servers over Fibre Channel switching infrastructure, and for mission critical applications, customers still prefer to deploy their flash arrays or mixed disk and flash arrays in SANs, which are familiar. Moreover, as much as Cisco loves hyperconverged storage, and recently bought Springpath to have its own offering here, Viswanathan says that hyperconverged is not going to replace SANs for Tier 0 or Tier 1 mission critical applications because companies want the rock solid accuracy and predictability of Fibre Channel. Flash and other kinds of persistent storage are driving up the bandwidth needed between server and storage, and they are pushing Fibre Channel just as hard as they are pushing Ethernet and InfiniBand.

To that end, back in April, Cisco announced 32 Gb/sec Fibre Channel cards for its MDS 9700 storage switches and matching 32 Gb/sec host bus adapters for its UCS C Series rack servers. At the moment, the virtual network interface cards on the UCS B Series blade servers top out at 40 Gb/sec of total bandwidth, and only 8 Gb/sec and 16 Gb/sec FCoE ports are supported out to storage across the integrated switch, but you can expect the UCS iron to be updated to support 32 Gb/sec FCoE ports. Just for a sense of scale, 16 Gb/sec Fibre Channel was rolled out in late 2013 and ramped in 2014, so the speed bumps here are not fast and furious.

The 32G Fibre Channel module for the MDS 9700 has 48 ports running at 32 Gb/sec, for about 1.5 Tb/sec of bandwidth per module, and the chassis supports up to 768 ports running at line rate, with support for NVM-Express over Fibre Channel coming as a seamless upgrade in the future. The 32G adapters that go into the C Series machines come from Broadcom (which bought Emulex) and Cavium (which bought QLogic). Dell, IBM, Hitachi, and NetApp are big partners for the MDS 9700 line; Hewlett Packard Enterprise rebadges Brocade Fibre Channel switching gear.
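The port figures are easier to compare with some back-of-the-envelope arithmetic. This sketch uses only the nominal 32 Gb/sec per-port rate and ignores encoding and protocol overhead, so treat the results as upper bounds: 48 ports come to about 1.5 Tb/sec per module, and 768 ports at line rate come to roughly 24.6 Tb/sec per direction, or about 3 TB/sec expressed in bytes.

```python
# Nominal line-rate arithmetic for the MDS 9700 port counts in the text.
# Assumption: the marketing "32 Gb/sec" rate per port; encoding overhead ignored.
PORT_GBPS = 32
PORTS_PER_MODULE = 48
MAX_PORTS = 768

module_tbps = PORT_GBPS * PORTS_PER_MODULE / 1000   # 1.536 Tb/sec per module
chassis_tbps = PORT_GBPS * MAX_PORTS / 1000         # 24.576 Tb/sec, one direction
modules_needed = MAX_PORTS // PORTS_PER_MODULE       # 16 modules to reach 768 ports

print(f"{module_tbps:.3f} Tb/sec per module")
print(f"{chassis_tbps:.3f} Tb/sec across {modules_needed} modules")
print(f"{chassis_tbps / 8:.2f} TB/sec in bytes, one direction")
```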

While this is still a big business, the big question is: When does Fibre Channel go away?

“You might say it has already gone away in that there are really no standalone Fibre Channel companies left,” says Kevin Deierling, vice president of marketing at Mellanox. “They have all gotten sucked into larger companies. The innovation is already gone, and we don’t see lots of new protocols. Most vendors are farming this as a cash cow. They will support NVM-Express over Fabrics, maybe a year or two behind, but this is no longer a driving force. That said, people are still buying IBM mainframes thirty years after the end of growth in that market.”

The real issue for large enterprises, says Deierling, is that they are hitting the same limits of scale as the hyperscalers, and those limits are imposed more by their budgets than by any inherent aspect of their storage technology. If their storage is growing at 30 percent a year but their budgets are flat, that quickly becomes a problem. Existing applications might stay on a Fibre Channel platform, but all new applications will end up on disaggregated, converged, or hyperconverged storage, and all three of these drive the adoption of Ethernet fabrics.
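The arithmetic behind that squeeze is compound growth. This sketch takes the 30 percent growth rate and flat budget from Deierling's example and assumes a five-year horizon for illustration; it computes how far the cost per terabyte has to fall just for the same budget to keep buying the capacity.

```python
# Deierling's hypothetical: capacity grows 30 percent a year, budget is flat.
# The five-year horizon is an illustrative assumption.
GROWTH = 0.30
YEARS = 5

# Capacity relative to today, for years 0..YEARS.
capacity = [(1 + GROWTH) ** year for year in range(YEARS + 1)]

# A flat budget only buys that capacity if cost per TB falls to 1/capacity.
allowed_cost_per_tb = [1 / cap for cap in capacity]

# Equivalent steady price decline needed every single year.
annual_decline = 1 - 1 / (1 + GROWTH)

for year, (cap, cost) in enumerate(zip(capacity, allowed_cost_per_tb)):
    print(f"year {year}: {cap:4.2f}x capacity, cost per TB at {cost:4.0%} of today")
print(f"cost per TB must drop about {annual_decline:.0%} every year")
```

After five years the same budget has to buy about 3.7 times the capacity, so cost per terabyte must fall to roughly 27 percent of today's level, a steady decline of about 23 percent a year.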

It is not that companies made the wrong decision to either adopt Fibre Channel SANs or to continue to use them here in the 2010s. These were the best answers for large enterprises that could not, unlike the hyperscalers, engineer their own software-defined storage stacks, and for certain workloads, continuing to invest in SANs is the easiest and most cost-effective strategy. But now, as companies look at adding new platforms and applications, they can buy Ceph object/block/file storage from Red Hat or use the open source software; deploy any number of hyperconverged storage stacks like Nutanix Enterprise Cloud Platform and its several competitors; or go with upstarts like DriveScale, Datrium, Excelero, and myriad other contenders who have their own twists on scale-out storage inspired by the hyperscalers.

As far as Ethernet fabrics are concerned, the goal, says Deierling, is for key features of Fibre Channel (management, automation, zoning, and security) to be exposed on top of Ethernet. “The vision is that everything you can do on Fibre Channel, you will be able to do on Ethernet, only faster and cheaper,” says Deierling, and to put some numbers on that, he adds it will have three times the performance for one third the cost, which works out to nine times the bang for the buck, nearly an order of magnitude better.
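Taking Deierling's round numbers at face value, with $P$ and $C$ standing for the performance and cost of a comparable Fibre Channel setup (symbols introduced here only for illustration), the two factors multiply rather than add:

```latex
\left.\frac{\text{performance}}{\text{cost}}\right|_{\text{Ethernet}}
  = \frac{3P}{C/3}
  = 9\,\frac{P}{C}
```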

You understand now why hyperscalers moved to disaggregated storage linked by the fastest and widest Ethernet they could build.

The beauty of this approach is that all of the goodness of Ethernet, such as accelerators to support VXLAN overlay networks or Open vSwitch virtual switching, just to name two features, now comes to the storage fabric, which presents some interesting possibilities for innovation.

The bandwidth and latency needed to support such innovation are going to be issues. Back in the day, when Fibre Channel was new, a disk drive had an access time of maybe 10 milliseconds, and today, two decades later, the fastest disk drives are still at around 7 milliseconds. That is just the limit of a physical device that has mechanical rather than electrical access.

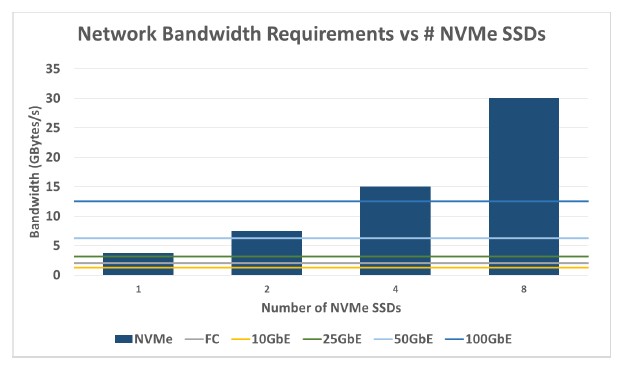

By contrast, a flash-based SSD has an access time on the order of 100 microseconds, and looking forward, there are low latency SSDs, persistent memory, and NVDIMMs that offer access times below 10 microseconds, and the envelope is being pushed down to under 1 microsecond. That 10,000X difference in access time, from 10 milliseconds for an old disk to 1 microsecond at the leading edge, means the network linking compute to storage is key. With Ethernet at 100 Gb/sec today, on track for 200 Gb/sec and 400 Gb/sec shortly, and perhaps hitting 1.6 Tb/sec early in the next decade, the odds are that Fibre Channel is going to lag, just as it does today. Only now is 32 Gb/sec Fibre Channel coming to market, and the gap is going to widen. Today, running NVM-Express over Fabrics on top of Ethernet switching adds only around 10 microseconds of latency compared to an SSD local in the chassis. So even when NVM-Express comes to Fibre Channel, which is great for those still deploying SANs, the combination of Ethernet and software defined storage running on server nodes will have a big advantage.
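A quick latency budget shows why the fabric matters more as media get faster. This sketch uses the round access times from the text and treats the roughly 10 microseconds that NVM-Express over Fabrics adds as a fixed per-access cost, which is a simplifying assumption; real overhead varies with load and configuration. The 10 and 1 microsecond entries are upper bounds taken from "below 10" and "under 1".

```python
# Round-number access times from the text, in microseconds.
MEDIA_US = {
    "disk, mid-1990s": 10_000,
    "disk, fastest today": 7_000,
    "flash SSD": 100,
    "low latency SSD / NVDIMM": 10,
    "leading edge": 1,
}
FABRIC_OVERHEAD_US = 10  # assumed fixed NVMe-oF over Ethernet penalty, per the text


def fabric_share(local_us: float, overhead_us: float = FABRIC_OVERHEAD_US) -> float:
    """Fraction of a remote access's total latency spent crossing the fabric."""
    return overhead_us / (local_us + overhead_us)


for medium, local_us in MEDIA_US.items():
    remote_us = local_us + FABRIC_OVERHEAD_US
    print(f"{medium:26s} local {local_us:>6,} us  remote {remote_us:>6,} us  "
          f"fabric {fabric_share(local_us):6.1%}")

spread = MEDIA_US["disk, mid-1990s"] // MEDIA_US["leading edge"]
print(f"spread between slowest and fastest: {spread:,}x")  # 10,000x
```

Against a 100 microsecond flash read, the fabric accounts for about 9 percent of the total; against a 1 microsecond device it is more than 90 percent, which is why every microsecond of fabric latency, and every bit of fabric bandwidth, starts to matter.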

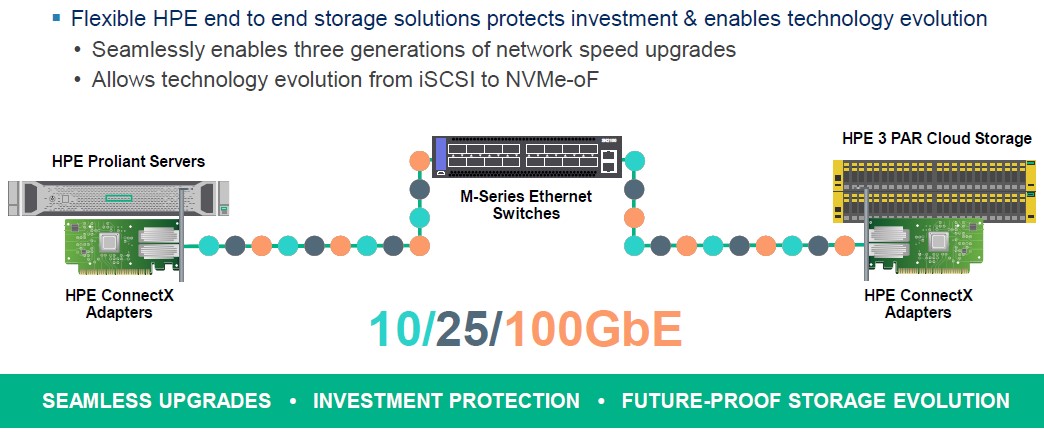

This, in part, is why HPE has partnered with Mellanox to resell its Ethernet switches as storage fabrics, the first of what will probably be a bunch of agreements with OEMs and ODMs.

Three thoughts to complement this excellent historical survey:

1. Auspex pioneered NAS. NetApp (at least spiritually) spun off from Auspex, and won by making NAS really easy to own and operate.

2. The way to think about Fibre Channel is that it is the right fabric to layer a SCSI stack doing block reads and writes of disk sectors over. With all due respect to NVMe over Fabric, it is shared block access which is the legacy technology, not just Fibre Channel. Sharing at the block level requires that the application pass each I/O through the container/VM/OS/interface path, and that access control and the like be done in the semantics and mindset of the operating system stack, not in the semantics of the application. We figured out pretty quickly 25 years ago that block access in a fabric environment has a lot of ways to be fragile; there are some aspects of the way most Ethernet switch ASICs are implemented in this decade which will be more fragile under bursty loads than under the streams of TCP/IP traffic they were architected for.

3. If we are to do storage in the mindset and semantics of the application, then storage will be presented as data structures in shared persistent memory, and it will be read and written in a suitably secure and QoS controlled way from user space. It is this, and not the evolution of block storage, where the future lies…in the 2020s.
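The contrast the commenter draws between block I/O and data structures in persistent memory accessed from user space can be sketched in a few lines of Python. This is an assumption-laden stand-in rather than a persistent memory program: an ordinary memory-mapped file plays the role of persistent memory, there is no security or QoS control, `flush()` (an msync) substitutes for the cache-line flushes and fences real persistent memory would need, and the function names are hypothetical. The block-style path rewrites a whole 4 KiB block to change one 8-byte counter; the mapped path stores just those 8 bytes in place.

```python
import mmap
import os
import struct
import tempfile

# Hypothetical sketch: a memory-mapped file stands in for persistent memory.
BLOCK = 4096
COUNTER = struct.Struct("<Q")  # one little-endian 64-bit counter at offset 0


def bump_block_style(path: str) -> int:
    """Block-style update: read a whole block, modify it, write it all back."""
    with open(path, "r+b") as f:
        buf = bytearray(f.read(BLOCK))
        (value,) = COUNTER.unpack_from(buf, 0)
        COUNTER.pack_into(buf, 0, value + 1)
        f.seek(0)
        f.write(buf)  # 4,096 bytes written for an 8-byte change
    return value + 1


def bump_mapped(path: str) -> int:
    """Load/store-style update: touch only the bytes of the data structure."""
    with open(path, "r+b") as f, mmap.mmap(f.fileno(), BLOCK) as m:
        (value,) = COUNTER.unpack_from(m, 0)
        COUNTER.pack_into(m, 0, value + 1)  # 8 bytes stored in place
        m.flush()  # msync here; real persistent memory needs cache flushes/fences
    return value + 1


with tempfile.TemporaryDirectory() as d:
    path = os.path.join(d, "region")
    with open(path, "wb") as f:
        f.write(b"\x00" * BLOCK)
    print(bump_block_style(path), bump_mapped(path))  # 1 2
```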

Steve, can you elaborate on the ways in which block access over a fabric is fragile? Thanks.

One of the best articles I have read in a long time. Thank you for writing!

-Alex