It is not every day that you see executives from some of the biggest hyperscalers share the same stage, and it is even less likely that companies that pride themselves on having bootstrapped their own infrastructure, because at their massive scale they can do it better than the vendor community, would agree to set standards together.

But Google did just that today when Urs Hölzle, senior vice president of the Technical Infrastructure team at the search engine giant and cloud provider, joined Jay Parikh, vice president of engineering at Facebook, on the stage at the Open Compute Summit 2016 conference and announced that Google was joining the open hardware effort.

There was nothing preventing Google from joining the Open Compute Project at any time in the past six years, except that it views its infrastructure prowess as strategic to the operations and profitability of its businesses. It has also operated at large scale for longer than any of the other hyperscalers, and therefore constantly runs into scale issues long before its peers. But having said all of that, Google knows that it can benefit from cooperation in the industry, driving up innovation and driving down costs, and it is starting at the rack.

Google’s very first rack-scale system, the “Corkboard” cluster of 1,680 machines it ordered custom made in the summer of 1999, was designed by Hölzle and increased its infrastructure footprint by more than 15X, setting the company on the path towards hyperscale as its search engine exploded along with the commercial Internet. (The company now has well in excess of 1 million servers, although it will not say how many.) Like its hyperscale peers, Google is obsessive about taking unnecessary components out of systems and datacenters, and back in 2005, when it first started doing its own custom iron, it put batteries in servers so it could remove uninterruptible power supplies from the datacenter. At last year’s Open Compute Summit, Microsoft was talking about how it had done the same thing in its massive datacenters and cut its datacenter costs by 25 percent, a decade after Google had done it. You can see why Google has not joined up and disclosed its server, storage, and network equipment designs.

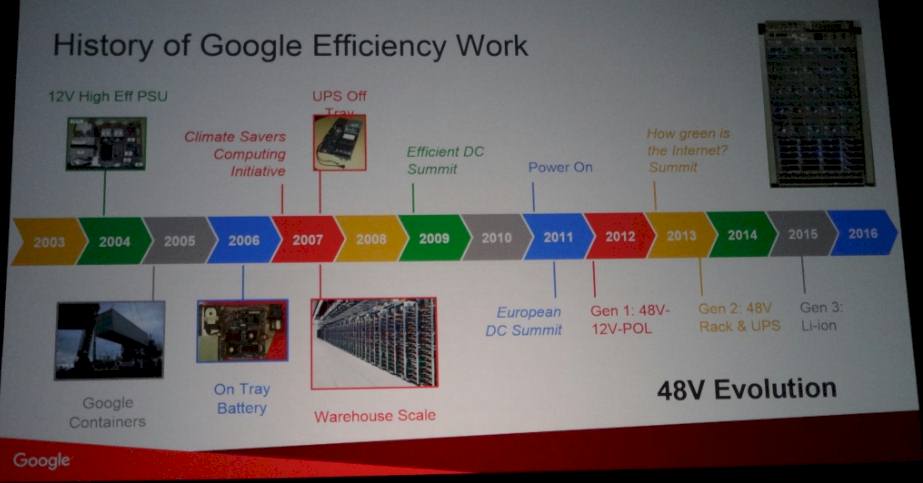

As the top techie at Google, Hölzle is obsessive about driving up efficiency in the company’s datacenters and driving out costs, going back to a talk he gave in 2003 at CERN where he discussed the need for the industry to deliver more efficient power supplies, since those of the time wasted a lot of energy.

In 2004, Google moved to 12 volt power supplies, and in 2006 it integrated 12 volt batteries in the power supplies on a distinct power shelf in its racks to allow servers to share these components as they rode out brownouts. (This inspired Facebook’s own Open Rack design, in fact.) Google has made all kinds of power and cooling innovations in the datacenter since that time, which it generally does not disclose, but Hölzle said that what compelled Google to join the Open Compute effort was its desire to push efficiencies in the last six feet of power distribution inside of a rack and the last ten inches of power distribution on a server motherboard.

Having perfected 12 volt power distribution to gear inside of the rack, Google started to think about how it could push efficiencies even further by bringing higher voltages down the rack. After doing some experiments, the company decided that 48 volt power distribution was the answer, and it worked through a number of different designs. The first had 48 volts at the rack and 12 volts at the server. The current design brings 48 volts into the rack and then converts it directly to the specific voltages needed by elements of the motherboard such as processors, memory, and I/O.
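The electrical reasoning behind bringing a higher voltage down the rack can be sketched with basic circuit arithmetic: for a fixed load, current scales as power divided by voltage, and resistive loss in the conductors scales with the square of that current. The snippet below is only an illustration of that relationship, not a model of Google's rack; the 10 kilowatt load and 1 milliohm bus resistance are assumed values chosen for the example, and the function name is hypothetical.

```python
def distribution_loss_watts(load_watts: float, bus_volts: float,
                            bus_resistance_ohms: float) -> float:
    """Resistive (I^2 * R) loss in the conductors feeding a load.

    Simplification: the load is treated as constant power and the loss
    itself is not fed back into the current calculation.
    """
    current_amps = load_watts / bus_volts
    return current_amps ** 2 * bus_resistance_ohms


# Assumed, illustrative values (not figures from Google or the article).
LOAD_WATTS = 10_000.0          # hypothetical rack load
BUS_RESISTANCE_OHMS = 0.001    # hypothetical busbar/cable resistance

loss_12v = distribution_loss_watts(LOAD_WATTS, 12.0, BUS_RESISTANCE_OHMS)
loss_48v = distribution_loss_watts(LOAD_WATTS, 48.0, BUS_RESISTANCE_OHMS)
ratio = loss_12v / loss_48v

print(f"12 V bus loss: {loss_12v:.1f} W")
print(f"48 V bus loss: {loss_48v:.1f} W")
print(f"12 V loss is {ratio:.0f}x the 48 V loss")
```

Whatever resistance is assumed, quadrupling the voltage cuts the current to a quarter and the conductor loss by a factor of 16 for the same delivered power, which is why the last six feet of distribution matter.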

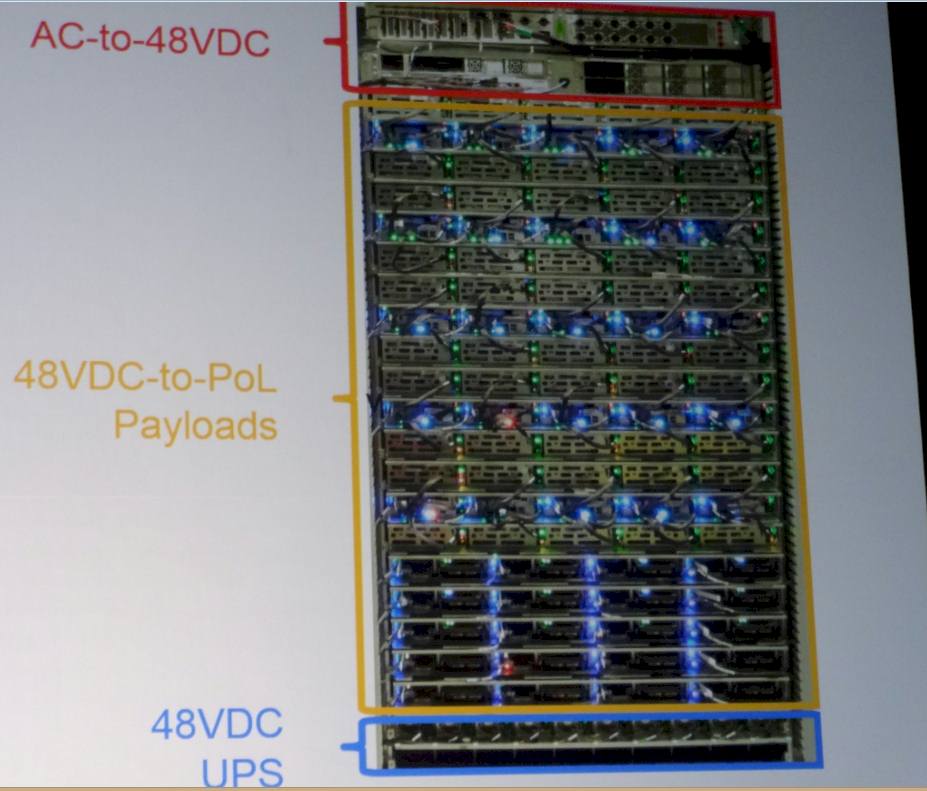

Amazingly, Hölzle actually showed an image of the current Google compute rack:

At the top of the rack is not a switch, but the rectifiers that convert AC power coming into the rack (we don’t know what voltage) to 48 volt DC. At the bottom of the rack is a 48 volt UPS shelf, which is modular and allows for different amounts of capacity depending on the load in the rack. In the middle of the Google rack are trays that can be loaded up with CPUs, GPUs, disks, or flash.

“The key thing that we figured out,” explained Hölzle, “was that to get the efficiency in both cost and power, you have to directly feed the power to the components on the motherboard and you have to convert it in only one DC-to-DC step, down to the CPU at 1 volt and to the memory and the disk at whatever they need. And that happens now in all of these boards and it is something that we have deployed at scale. So we have several years of experience now and have deployed this many thousands of times. This is not an experimental system, this is something that works at scale.”
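The point about converting in only one DC-to-DC step follows from the fact that the efficiencies of cascaded conversion stages multiply. A minimal sketch of that arithmetic is below. The per-stage efficiencies are assumed, illustrative numbers, not measurements from Google or any vendor, and real converter efficiency varies with load and design.

```python
from math import prod


def chain_efficiency(stage_efficiencies: list[float]) -> float:
    """Overall efficiency of conversion stages connected in series."""
    return prod(stage_efficiencies)


# Assumed, illustrative per-stage efficiencies (hypothetical values).
two_step = chain_efficiency([0.96, 0.90])  # 48 V -> 12 V, then 12 V -> ~1 V
one_step = chain_efficiency([0.92])        # 48 V -> ~1 V in a single stage

print(f"two-step chain: {two_step:.3f}")
print(f"one-step chain: {one_step:.3f}")
```

Under these assumptions the single stage comes out ahead even though it is less efficient than either of the individual stages it replaces, because every extra stage takes its own cut of the power passing through it.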

Compared to a highly tuned 12 volt rack, the 48 volt rack that the company currently deploys is 30 percent more energy efficient, which, when you are talking about 25 megawatt datacenters, is a big, big deal.

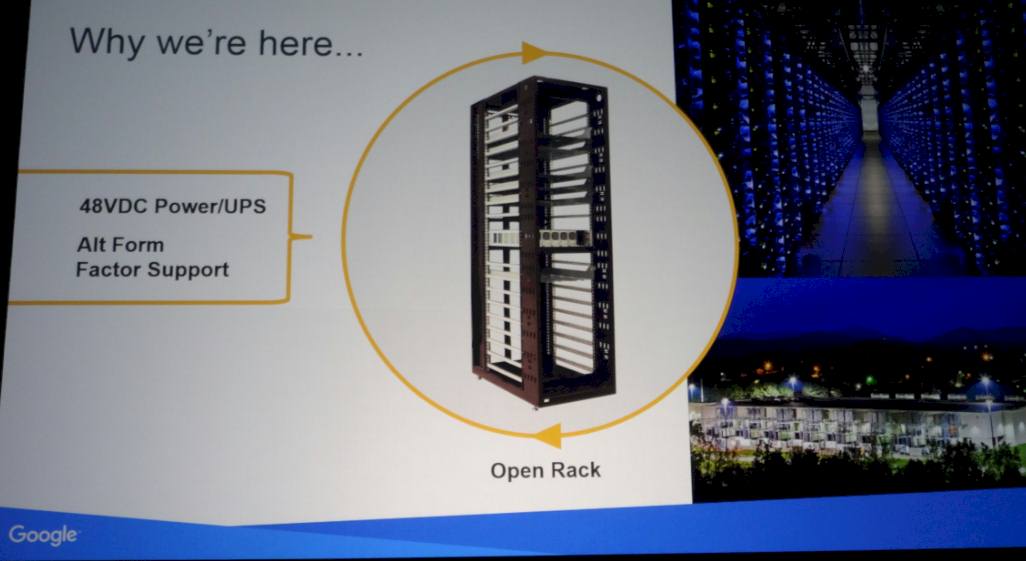

But there is apparently a problem: there is no 48 volt rack standard, and Google wants to help set one along with Facebook and the rest of the Open Compute community. The rack that Google is proposing is shallower than a standard server rack, which could be tricky, but Google wants there to be one standard for 48 volt racks, and the idea is that server designs will be able to work with both the proposed Google rack and the current Open Rack from Facebook.

The upshot is that Google wants the entire industry to move to 48 volt racks and have a common standard that will end up benefitting the hyperscalers most because they are much more dependent on IT infrastructure than other businesses. Google itself sometimes deploys 12 volt server and storage trays inside of 48 volt racks, which requires a step-down converter and reduces efficiency, but offers backwards compatibility.

It is interesting to note that Hölzle did not open source the motherboards or other intellectual property in the motherboards that allows for the 48 volts to be stepped down and fed directly to different components on the motherboard. Google could have done this, and maybe it will someday. But it is possible that Google does not own such intellectual property and that its motherboard suppliers control this.

Looking ahead at potential areas where the search engine giant might contribute to the Open Compute cause, Hölzle observed that the effort was “light on software” and that all of the hyperscalers have “a lot of gizmos” in their datacenters. He said that the SNMP management protocol commonly used to interface with devices is outdated and that this is the time to create a new standard. He added that he was sure that there were more opportunities, but obviously Google is not doing what Microsoft did by designing its own machine and then dropping it all in one big splash, including management software, into the OCP pond. Google could open up its server designs if it thought it needed a broader supply chain, but as the world’s largest server buyer, this is probably less of an issue for Google than it was for Facebook five years ago when it was straining as it made the transition to massive scale.

Hölzle also said that the redesign of the disk drive that the company has recently been advocating could be done through the Open Compute effort, too.
