Hewlett Packard Enterprise is hosting its first big shindig, the Discover Europe customer and partner conference, as a company separated from PCs and printers, and is trotting out a new line of systems, code named “Thunderbird” and sold under the brand HPE Synergy, that are follow-ons to its BladeSystem blade servers. The Thunderbirds are the foundation for what HP and rival Cisco Systems are calling composable infrastructure.

With composable infrastructure, the idea is to take “infrastructure as code” to the same extreme that the hyperscalers do. But as we pointed out when this idea started being bandied about this year, to provide truly composable infrastructure, the tight coupling between the CPU and its main memory (whether it is DRAM, MCDRAM, or 3D XPoint in the case of Intel) must be shattered and then allowed to be configured on the fly. (You cannot do this today. A system board has a set number of processors and a set number of memory slots, and that is that.)

We discussed this idea both with Hewlett-Packard in June and with Cisco Systems in November, and everyone agrees that customers must be able to add memory independently from compute and, equally importantly, be able to support multiple generations of compute and memory within the same complex of racks and rows, for infrastructure to be the fluid resource pools that HP and Cisco are describing. Presumably, the CPUs and memory will all be linked by silicon photonics light pipes.

Until then, what HPE can do with the Thunderbird machines, and what Cisco is doing with its UCS M Series machines, is lay the groundwork for a fully composable infrastructure stack: treat each compute-memory complex as a single programmable unit of capacity rather than as separate compute and memory, and allow the switching fabrics and auxiliary storage to be composed on the fly, independently of each other and of the CPU-memory complex.
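To make the distinction concrete, here is a minimal Python sketch of that halfway-house model, assuming a simple in-memory pool. The classes `ComputeMemoryUnit`, `ResourcePool`, and `ComposedServer` and the `compose` function are hypothetical illustrations, not HPE or Cisco APIs. Compute and memory can only be handed out as one fixed unit, while storage capacity and fabric ports are carved from the pool in whatever amounts a workload asks for.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class ComputeMemoryUnit:
    """Hypothetical CPU-memory complex: allocated whole, never split."""
    name: str
    sockets: int
    memory_gb: int  # fixed by the DIMM slots on the board


@dataclass
class ResourcePool:
    """Hypothetical pool of free capacity in a rack or row."""
    compute: list = field(default_factory=list)
    storage_tb: float = 0.0
    fabric_ports: int = 0


@dataclass(frozen=True)
class ComposedServer:
    unit: ComputeMemoryUnit
    storage_tb: float
    fabric_ports: int


def compose(pool: ResourcePool, min_sockets: int,
            storage_tb: float, fabric_ports: int) -> ComposedServer:
    """Compose a logical server; compute and memory come as a package deal."""
    candidates = [u for u in pool.compute if u.sockets >= min_sockets]
    if not candidates:
        raise RuntimeError("no compute-memory unit has enough sockets")
    if storage_tb > pool.storage_tb or fabric_ports > pool.fabric_ports:
        raise RuntimeError("not enough free storage or fabric capacity")
    # Pick the smallest whole unit that fits; its memory comes along with it.
    unit = min(candidates, key=lambda u: u.sockets)
    pool.compute.remove(unit)
    # Storage and fabric are carved out independently, in any amount.
    pool.storage_tb -= storage_tb
    pool.fabric_ports -= fabric_ports
    return ComposedServer(unit, storage_tb, fabric_ports)


pool = ResourcePool(
    compute=[ComputeMemoryUnit("e5-2s", 2, 256), ComputeMemoryUnit("e5-4s", 4, 512)],
    storage_tb=10.0,
    fabric_ports=8,
)
server = compose(pool, min_sockets=2, storage_tb=2.5, fabric_ports=2)
print(server.unit.name, server.unit.memory_gb, pool.storage_tb, pool.fabric_ports)
```

In this model, asking for more memory means taking a bigger unit with more sockets; that coupling is exactly what full composability would remove.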

What the new Thunderbird iron really amounts to is a modestly updated blade or modular server design whose virtualized networks and storage have multiple personalities, which can be activated depending on the needs of the application assigned to them. Storage nodes in the Thunderbird enclosure can be viewed as local or remote storage, and as file, block, or object storage. Switch fabrics can present Ethernet ports for server-to-server connectivity or Fibre Channel ports for server-to-storage connectivity, as applications require. This is quite a bit more sophisticated than the BladeSystem, to be sure, just as the UCS M Series machines that are ramping up this year are a bit more sophisticated than the original UCS blade servers that date from 2009.
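One way to picture those multiple personalities is as a per-application profile that selects a storage presentation, a storage scope, and a fabric type from the same underlying hardware. The minimal Python sketch below assumes that model. The enum values mirror the options listed above, but `WorkloadProfile`, `profile_for`, and the rule that shared storage is presented as remote are hypothetical, not part of HPE's tooling.

```python
from dataclasses import dataclass
from enum import Enum


class Presentation(Enum):
    FILE = "file"
    BLOCK = "block"
    OBJECT = "object"


class Scope(Enum):
    LOCAL = "local"
    REMOTE = "remote"


class Fabric(Enum):
    ETHERNET = "ethernet"            # server-to-server traffic
    FIBRE_CHANNEL = "fibre_channel"  # server-to-storage traffic


@dataclass(frozen=True)
class WorkloadProfile:
    """Hypothetical record of which personalities to activate for one app."""
    name: str
    presentation: Presentation
    scope: Scope
    fabrics: frozenset


def profile_for(name: str, presentation: Presentation, shared: bool,
                talks_to_peers: bool, talks_to_san: bool) -> WorkloadProfile:
    """Pick storage and fabric personalities from a workload's needs."""
    fabrics = set()
    if talks_to_peers:
        fabrics.add(Fabric.ETHERNET)
    if talks_to_san:
        fabrics.add(Fabric.FIBRE_CHANNEL)
    if not fabrics:
        raise ValueError(f"{name}: a workload needs at least one fabric")
    # Assumption: storage shared with other nodes is presented as remote.
    scope = Scope.REMOTE if shared else Scope.LOCAL
    return WorkloadProfile(name, presentation, scope, frozenset(fabrics))


db = profile_for("oltp-db", Presentation.BLOCK, shared=False,
                 talks_to_peers=False, talks_to_san=True)
lake = profile_for("analytics", Presentation.OBJECT, shared=True,
                   talks_to_peers=True, talks_to_san=False)
print(db)
print(lake)
```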

“What we have constructed is a new system that is built from the ground up for composability,” says Gary Thome, vice president and chief engineer of converged data infrastructure at HPE. It may not be immediately clear why HPE needed a new hardware stack to provide this composable infrastructure, since the automation and orchestration are running on management nodes in the enclosures, just as was the case with blade servers like its BladeSystem designs. But the BladeSystem chassis dates from 2004, with an update a few years back, so it is getting a little long in the tooth. The Thunderbird enclosure is probably best thought of as a follow-on that offers node form factors, midplane bandwidth, and other features suited to modern compute, storage, and switching.

The Thunderbird machines are not fully composable, and they cannot be until CPU chip makers break the memory controllers and main memory free from the CPU complex in some way. Today, processors and their main memory must be upgraded in lockstep, and we probably won’t see composable processing complexes from Intel until the “Skylake” Xeon E5 v5 generation in 2017, which we told you about here back in May. Even that could be optimistic. The new Ultra Path Interconnect (UPI) point-to-point link for Skylake processors (an upgrade to the QuickPath Interconnect, or QPI, links used with Xeons since 2009) and the memory subsystems of those chips may not provide the generic links and memory controllers needed to break the CPU free from its memory. Frankly, we would expect this CPU-memory link to be broken first with a chip like the Xeon D, which is aimed at hyperscalers. And to be truly composable, it should also be possible to fire up NUMA clustering across nodes on demand, scaling compute from one to eight or more sockets on the fly. (Intel would have to add NUMA circuits to all levels of Xeons to do this, and that could be pricey indeed. Alternatively, it could go with a software approach like the one Applied Micro intends to use with its future “Skylark” X-Gene 3 ARM server chips and their X-Tend NUMA interconnect.)

Nonetheless, by launching the Thunderbird machines now, HPE will be ready for virtualized compute and memory when Intel or other CPU chip makers provide it. The important thing for HPE is to get its ecosystem of orchestration and management tools into the field on the Thunderbird iron and get people used to this new stack, which has an embedded version of its OneView system manager at its heart and which hooks into open source application and system orchestration tools such as Puppet and Chef.

In a sense, the HPE Synergy system, as it is currently being delivered, is a multi-chassis blade server with a single management API stack, based largely on OneView, that abstracts every aspect of the underlying infrastructure, much as Google’s Borg, Microsoft’s Autopilot, and Facebook’s Kobold/FBAR do for their own vast clusters. And just like those and other hyperscale orchestration tools, Thunderbird systems will be able to discover, assemble, secure, and orchestrate themselves as workloads require, rather than being statically defined as blade servers and converged infrastructure are today. Ultimately, what customers will see is a pool of bare metal, virtual, or container compute with file, block, or object data storage as needed.
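The difference between self-assembling and statically defined infrastructure comes down to declarative reconciliation: operators state what each workload should have, and software works out the moves. The Python sketch below shows that generic pattern under simplifying assumptions (workloads measured only in whole nodes, one shared free pool). The `reconcile` and `plan` helpers are hypothetical and are not OneView, Borg, Autopilot, or FBAR interfaces.

```python
def reconcile(desired: dict, actual: dict) -> dict:
    """Per-workload change in node count needed to move actual toward desired."""
    changes = {}
    for workload in sorted(desired.keys() | actual.keys()):
        delta = desired.get(workload, 0) - actual.get(workload, 0)
        if delta:
            changes[workload] = delta
    return changes


def plan(desired: dict, actual: dict, free_nodes: int):
    """Return (changes, nodes left free afterwards), or raise if the pool is too small."""
    changes = reconcile(desired, actual)
    # Shrinking workloads hand their nodes back to the free pool first...
    released = -sum(d for d in changes.values() if d < 0)
    # ...and growing workloads draw from that pool.
    needed = sum(d for d in changes.values() if d > 0)
    available = free_nodes + released
    if needed > available:
        raise RuntimeError(f"need {needed} nodes, only {available} available")
    return changes, available - needed


desired = {"web": 4, "analytics": 2}
actual = {"web": 2, "batch": 3}
changes, left = plan(desired, actual, free_nodes=1)
print(changes, left)
```

Running the same plan repeatedly against fresh observations of the cluster is what lets such a system converge on the declared state instead of waiting for someone to reconfigure it by hand.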

HP wants to put new wine in new bottles, and will want its enterprise customers to do so as well. What is not clear is how (or why) these composability features will be withheld from its Apollo HPC and enterprise machines or its Cloudline minimalist servers. Google, Facebook, and Microsoft do not use what are in essence blade servers; they tend to go with rack machines that share some infrastructure, such as power and cooling, at the rack level. So it is possible that HP will offer Synergy extensions to these other machines at some point.

The Thunderbird Synergy System

The Thunderbird machines will start shipping in the second quarter of 2016, and they look very much like a blade or modular system.

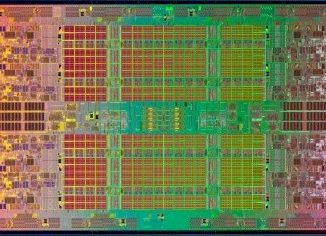

Thome tells The Next Platform that HP will offer two-socket and four-socket compute nodes based on Intel’s Xeon E5 processors and four-socket and eight-socket nodes based on the Xeon E7. The second-quarter 2016 ship date suggests that the initial compute nodes in the Thunderbird machines will be based on Intel’s “Broadwell-EP” Xeon E5 v4 processors, followed by the “Broadwell-EX” Xeon E7 v4. There is no reason why HP could not put ARM processors inside this infrastructure, but there is no suggestion that this will happen.

The Synergy 12000 enclosure has six bays in the front, and a variety of different compute and storage nodes can slide into them. There are half-height and full-height nodes that offer two or four Xeon E5 processors, respectively, and a full-height, single-wide node that offers two Xeon E7s and a double-wide, full-height node that has four Xeon E7 sockets. (There is not an eight-socket Xeon E7 Thunderbird node, but there could be one if HP wanted to make one. It would probably eat up four out of the six bays in the 10U Synergy 12000 enclosure.)

A Thunderbird storage node takes up one of the six bays, which means it is half-height but double wide, and holds forty 2.5-inch disks. Here is what it looks like with its disk bay tongue sticking out:

The Synergy 12000 chassis also has redundant management appliance bays for running the embedded OneView, which is called HPE Composer, and another tool called Image Streamer, which, as the name suggests, is a templating system for throwing software images out onto composed infrastructure stacks within the racks of Thunderbird iron. Image Streamer provisions boot and run volumes on the storage nodes, deploys operating systems onto the compute nodes, and also deploys iSCSI targets for the boot and run volumes on the nodes. Thome says Image Streamer can provision an operating system in under 15 seconds.
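The templating idea can be sketched as stamping one golden image out into many per-node boot volumes. The Python below is a minimal illustration under stated assumptions: `GoldenImage`, `BootVolume`, and `stream_image` are hypothetical, and the `iqn.2016-01.com.example` naming authority is a placeholder. Only the general `iqn.yyyy-mm.reversed-domain:identifier` shape of iSCSI qualified names comes from the iSCSI standard; nothing here reflects how Image Streamer works internally.

```python
from dataclasses import dataclass
from string import Template


@dataclass(frozen=True)
class GoldenImage:
    """Hypothetical template: one OS image plus per-node settings to fill in."""
    name: str
    os: str
    config_template: str  # uses $hostname and $os placeholders


@dataclass(frozen=True)
class BootVolume:
    node: str
    image: str
    config: str
    iscsi_target: str


def stream_image(image: GoldenImage, nodes: list,
                 naming_authority: str = "iqn.2016-01.com.example") -> list:
    """Create one personalized boot volume, and its iSCSI target name, per node."""
    volumes = []
    for node in nodes:
        # Fill in node-specific settings; substitute() raises KeyError on a missing key.
        config = Template(image.config_template).substitute(hostname=node, os=image.os)
        # iSCSI qualified name: placeholder naming authority, then a unique suffix.
        target = f"{naming_authority}:{image.name}.{node}"
        volumes.append(BootVolume(node, image.name, config, target))
    return volumes


web = GoldenImage("linux-web", "Linux", "hostname=$hostname\nos=$os\n")
for vol in stream_image(web, ["bay1", "bay2"]):
    print(vol.iscsi_target)
```

Keeping the image itself immutable and pushing all per-node differences into a small filled-in template is what makes this kind of provisioning fast and repeatable.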

In the back of the chassis, there is room for ten fan modules and six interconnect modules, which can be configured in a 3×3 redundancy setup so that a single module failure does not knock out connectivity. At the moment, HP offers two kinds of interconnect module. The first is the Virtual Connect SE 40 Gb F8 module, which supplies 40 Gb/sec uplinks that can each be split into four 10 Gb/sec ports; its downlinks, which run internally on the midplane, can run at either 10 Gb/sec or 20 Gb/sec. Customers can run Fibre Channel over Ethernet on the 40 Gb/sec switch if they want to, linking out to storage over Ethernet and doing away with dedicated Fibre Channel links. Customers who want native Fibre Channel can use the Virtual Connect SE 16 Gb FC module, which provides connectivity to external SAN storage.
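The port arithmetic is simple enough to check in a few lines. The Python sketch below splits a 40 Gb/sec uplink into four 10 Gb/sec ports and checks downlink speeds against the 10 Gb/sec and 20 Gb/sec options cited above. The `oversubscription` helper and the port counts in the example are hypothetical, chosen only to show the calculation; they are not Virtual Connect SE 40 Gb F8 specifications.

```python
DOWNLINK_SPEEDS_GBPS = {10, 20}  # midplane downlink speeds cited for the module


def breakout(uplink_gbps: int, lanes: int = 4) -> list:
    """Split one uplink into equal-speed ports, e.g. 40 Gb/sec into 4 x 10 Gb/sec."""
    if uplink_gbps % lanes:
        raise ValueError("uplink speed must divide evenly across lanes")
    return [uplink_gbps // lanes] * lanes


def oversubscription(downlinks: int, downlink_gbps: int,
                     uplinks: int, uplink_gbps: int) -> float:
    """Ratio of server-facing bandwidth to uplink bandwidth (1.0 = non-blocking)."""
    if downlink_gbps not in DOWNLINK_SPEEDS_GBPS:
        raise ValueError(f"unsupported downlink speed: {downlink_gbps} Gb/sec")
    return (downlinks * downlink_gbps) / (uplinks * uplink_gbps)


print(breakout(40))
# Hypothetical counts: 12 downlinks at 20 Gb/sec against 6 uplinks at 40 Gb/sec.
print(oversubscription(12, 20, 6, 40))
```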

We were wondering why HPE is not trotting out support for 25 Gb/sec, 50 Gb/sec, and 100 Gb/sec switching in the new iron, which would seem logical, and all Thome could tell us is that HPE is in talks with multiple ASIC suppliers to broaden the interconnect options.

There are also two frame link module slots for hooking adjacent Thunderbird enclosures to each other for management purposes, and up to 20 frames can be linked together under a single management domain.
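The 20-frame limit amounts to a simple capacity rule for a management domain. The Python sketch below encodes it, assuming each frame contributes the six front bays described earlier; `ManagementDomain` and its methods are hypothetical and say nothing about how HPE's frame link modules actually discover or chain enclosures.

```python
MAX_FRAMES = 20      # frames per management domain, per the article
BAYS_PER_FRAME = 6   # front bays in a Synergy 12000 enclosure, per the article


class ManagementDomain:
    """Hypothetical model of frames linked under one management domain."""

    def __init__(self) -> None:
        self.frames: list = []

    def link(self, frame_id: str) -> None:
        """Add a frame to the domain, enforcing uniqueness and the frame cap."""
        if frame_id in self.frames:
            raise ValueError(f"{frame_id} is already linked")
        if len(self.frames) >= MAX_FRAMES:
            raise RuntimeError(f"a domain holds at most {MAX_FRAMES} frames")
        self.frames.append(frame_id)

    def total_bays(self) -> int:
        return len(self.frames) * BAYS_PER_FRAME


domain = ManagementDomain()
for i in range(MAX_FRAMES):
    domain.link(f"frame-{i:02d}")
print(domain.total_bays())
```

At the full 20 frames, that works out to 120 front bays under one management domain.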

The chassis has six power supplies with a whopping 7,950 watts of fully redundant power for the components in the system.

Thome says that, out of the gate, the Thunderbird compute nodes will support the popular Linux variants and Windows Server as operating systems, as well as VMware’s ESXi hypervisor and vSphere tools for virtualization. Chef has been integrated with the embedded OneView that makes up HPE Composer, and the company is now working to integrate Puppet management tools with this variant of OneView.

Pricing for the Thunderbird systems was not divulged; it will be available when the machines ship in the second quarter.

The Synergy machines are aimed at two distinct sets of customers or application stacks within a single customer, says Thome.

The first is what HPE is calling traditional enterprise applications, which are relatively stable, are updated maybe once or twice a year, and run on systems optimized for them; these can run on bare metal but are often highly virtualized, with many applications running side-by-side on each node in a cluster. The focus here is on getting enough performance to run these applications well and providing stability while cutting hardware costs over time.

At the other end of the spectrum are new applications, typically data analytics, mobile front-ends for applications, or whole new mobile apps that get changed every couple of months. These are native to the cloud, meaning that they are not only virtualized but also tend to make use of various orchestration tools to optimize the workflow of the different components of the application stacks. The focus for these applications is on chasing new revenue streams and new opportunities, so cost is less of a factor.
