As we have pointed out before, large enterprises have to deal with a different kind of scale issue than the hyperscalers, and in many ways, the hyperscalers have it easier.

The hyperscalers have dozens of core applications that they have to run at massive data scale – pushing up to exabytes of data across millions of servers – to support hundreds of millions to billions of users for those applications. They have to make a few things big. Insanely big. And they need to scale capacity. But enterprises have to deal more with scope than they do with capacity. They have hundreds to thousands to sometimes even tens of thousands of applications, and hundreds to thousands of different file systems, datastores, and databases that support those applications. And they are scattered all over the place.

This is why data warehouses from Teradata, Netezza, and others first took off for systems of record and their structured data several decades ago. And when unstructured data, from operational systems and web applications, were used to create sophisticated systems of engagement, it was natural that Hadoop technologies created by hyperscalers to store and analyze their massive amounts of unstructured data would be adopted in the enterprise.

But both data warehouses running on traditional parallel SQL databases and what has evolved into data lakes running atop Hadoop and other data storage engines have a big problem: You have to move all of this data to them, and you have to keep doing it as new data is generated. Data extraction, transformation, and loading becomes the new bottleneck, and a very big headache that compounds as the number of data sources and data types increases – as is the normal state of data at large enterprises.

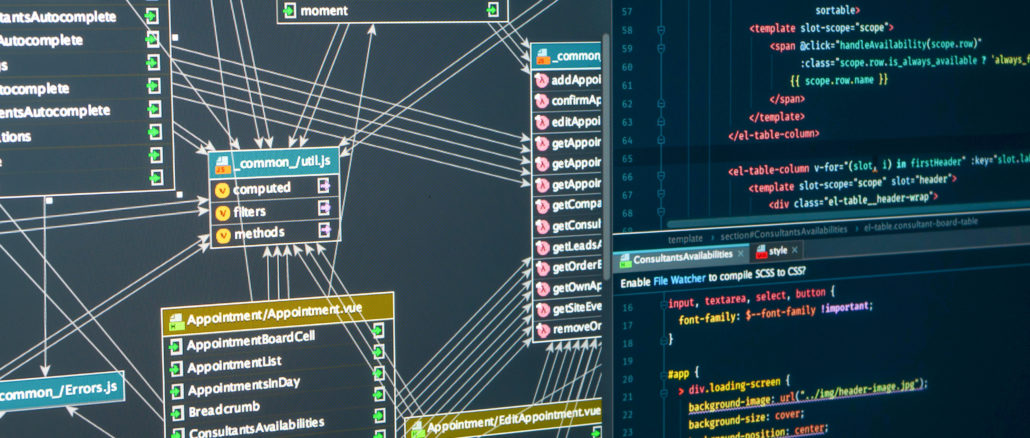

That is why Facebook created the Presto distributed SQL engine to span its own databases and datastores, which Facebook first started talking about back in November 2013 but had been using for almost two years at that point. Presto is an example of a federated database, which we discussed here at length, with the idea of running complex queries across collections of databases and datastores where they are and aggregating the answers within the Presto query engine rather than trying to pull all of the data out of disparate data sources and putting it in one place to do complex queries. The queries do not run as fast – you have an Amdahl’s Law problem in that any query will be only as fast as the query on its slowest data source – but then again, you don’t have to do days, weeks, or months of ETL work to get set up to run a query, either.
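To make the federated idea concrete, here is a minimal sketch in Python. It is not Presto or Trino code. It assumes two in-memory SQLite databases standing in for separate data sources, and the function names (`make_source`, `partial_totals`, `federated_totals`, `federated_latency`) and the `orders` table are hypothetical. Each source runs its own aggregation where the data lives, only the small partial results move, and the "engine" merges them. When the sources are queried in parallel, the wall-clock time is bounded below by the slowest source.

```python
import sqlite3
from concurrent.futures import ThreadPoolExecutor


def make_source(rows):
    """Create an independent in-memory database standing in for one data source."""
    # check_same_thread=False lets a worker thread use this connection later.
    conn = sqlite3.connect(":memory:", check_same_thread=False)
    conn.execute("CREATE TABLE orders (region TEXT, amount REAL)")
    conn.executemany("INSERT INTO orders VALUES (?, ?)", rows)
    conn.commit()
    return conn


def partial_totals(conn):
    """Run the aggregation at the source, so only partial results leave it."""
    query = "SELECT region, SUM(amount) FROM orders GROUP BY region"
    return conn.execute(query).fetchall()


def federated_totals(sources):
    """Query every source in parallel and merge the partial results centrally."""
    merged = {}
    with ThreadPoolExecutor(max_workers=len(sources)) as pool:
        for partial in pool.map(partial_totals, sources):
            for region, total in partial:
                merged[region] = merged.get(region, 0.0) + total
    return merged


def federated_latency(source_latencies):
    """With parallel fan-out, the slowest source sets the floor on query time."""
    return max(source_latencies)


if __name__ == "__main__":
    crm = make_source([("east", 10.0), ("west", 5.0)])
    erp = make_source([("east", 2.5), ("north", 4.0)])
    print(federated_totals([crm, erp]))
    print(federated_latency([0.2, 1.5, 0.4]))
```

A real federated engine does far more (query planning, predicate pushdown, connectors, distributed joins), but the shape is the same: no bulk copy of the source tables into a central store before the query can run.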

In any event, soon after Facebook started talking about Presto, the social network open sourced the Presto code, which was great. But then things got a little weird five years later.

In January 2019, the three original creators of Presto within Facebook – Martin Traverso, Dain Sundstrom, and David Phillips – created the Presto Software Foundation to steer development of Presto, creating a variant called PrestoSQL, which has a commercial entity supporting it called Starburst where these three people have shared the chief technology officer role. Nine months later, in September 2019, Facebook, Twitter, Uber, and Alibaba, who were not pleased with the input they had in the Presto Software Foundation set up by Traverso, Sundstrom, and Phillips, created the Presto Foundation and slid it into the Linux Foundation to use its governance model. As we talked about nearly two years ago, a company called Ahana aims to be the commercializer of the PrestoDB variant of the Presto database engine, which has Facebook, Twitter, Uber, and Alibaba contributing their code changes. In the wake of Ahana’s launch in June 2020 and some legal wrangling with the Linux Foundation over the Presto brand, the PrestoSQL database project was renamed Trino. And it is Trino that Starburst is now packaging up and selling as Starburst Enterprise for an on-premises database engine that runs atop existing databases and datastores and as Starburst Galaxy as a SaaS implementation that spans the Amazon Web Services, Microsoft Azure, and Google Cloud clouds.

(We are trying to break the habit of saying “public clouds,” since these are not regulated public utilities but rather absolutely proprietary ones. And so we will just say clouds.)

And now the race is afoot between Ahana and Starburst to build the next database platform having the Facebook code as its foundation and based on federated techniques.

This week, Starburst is hosting its annual Datanova user conference and making a lot of noise, including an announcement of a massive $250 million Series D funding round that brings its total venture capital take to $414 million. This fourth round of funding was led by Alkeon Capital and also included Altimeter Capital and B Capital Group as new investors; Andreessen Horowitz, Coatue Management, Index Ventures, and Salesforce Ventures, who have all previously invested in Starburst, kicked in some more dough.

Everybody with any venture money is looking for the next Snowflake, which is the darling of the database world because it has created a giant data warehouse in the sky, raised $1.4 billion in a stunning seven rounds of venture capital, and went public in September 2020 to raise another $3.4 billion. Today Snowflake has more than 5,400 customers, cranks through 1.34 billion database queries a day against a combined 250 PB of data it stores on the cloud for its customers, has just broken through $1 billion in trailing twelve-month revenue in the quarter ended in September 2021, and has a market capitalization of $89.9 billion as we go to press.

That investment in Starburst by Salesforce is significant, Justin Borgman, chairman and chief executive officer of Starburst, tells The Next Platform, and it also points out one of the problems with Snowflake and other data warehouses in the cloud.

“As it turns out, extracting data from Salesforce for analytic purposes is one of the top use cases – if not the top use case – for Snowflake,” says Borgman. “And that means a ton of people are taking data out of their CRM and loading it into Snowflake just to run analytics. Well, what if you never had to do that? What if you could just query that directly and incorporate that in your analysis? Part of what led us to form Starburst a few years ago was the fact that we were seeing more and more companies of various shapes and sizes deploying the Presto, now Trino, technology to access data anywhere. This is data warehousing analytics without the data warehouse. What we are doing is literally the opposite of Snowflake. Snowflake is a really interesting, incremental step in data warehouse history, which goes from Teradata on prem to Snowflake in the cloud. But all of those models require you to extract data out of your different database systems and load it into this one single source of truth. And Starburst is the opposite of that. We can query the data where it lives, which is turning the data warehousing model on its head and gaining a lot of traction.”

Borgman should know, because he has been trying to transform the data warehousing market for most of his career since getting his bachelor’s in computer science from the University of Massachusetts in 2002 and his master’s in business administration from Yale University a few years later. Borgman was a software engineer at military contractor Raytheon for a few years, and then was a senior software engineer at the vaunted Lincoln Laboratory at MIT that has brought so much good information technology into the world. In 2010, after being exposed to the ideas of Yale researcher Daniel Abadi about merging distributed relational databases and Hadoop into a single platform, Borgman and Abadi formed Hadapt, a commercialization of the HadoopDB research done at Yale. In 2014, Teradata acquired Hadapt for $50 million, and Borgman stayed on at Teradata for three years running the Hadoop business at the data warehousing pioneer. In 2017, Borgman hooked up with Traverso, Sundstrom, and Phillips to found Starburst to commercialize PrestoSQL, now Trino.

So far, PrestoSQL and Trino have a combined 5 million downloads, which is a good indicator of interest in federated database query engines, and so is the explosive growth that Starburst has seen. The company has more than tripled its employee count to 350 in the past year, has tripled its paying customer base to roughly 200 large enterprises in the past year, and has tripled its revenues in the past year, too. In fact, says Borgman, the company has tripled its revenues in each of the past three years – 2019, 2020, and 2021 – and not surprisingly, between its Series C and Series D funding, the valuation on Starburst has nearly tripled from $1.2 billion to $3.35 billion.

One of the things we always want to know is how distributed database systems like Hadapt, Impala, and other SQL layers atop Hadoop, as well as new databases like Snowflake and Starburst, perform in the wild. Borgman says there have been “remarkable performance improvements” on many fronts, not the least of which are the Parquet, ORC, and RCFile column-oriented storage formats that have been added to Hadoop and other datastores and databases to speed up SQL access to unstructured data (well, to create semi-structured data from unstructured data, to be more precise). At this point, if you have data stored in a Hadoop data lake in these formats and you run a federated SQL query against it using Trino or Starburst, the performance “is actually pretty close” to what you can get out of Teradata. And you don’t have to do any of that ETL nonsense.

“There are certainly going to be some workloads where Teradata is going to be faster,” says Borgman. “But again, Teradata controls its own underlying storage and you have to do all that ETL even before you start the query. For a broad array of analytics, federated queries are close enough and that means we are bringing tremendous good value, then.”

We would love to see more benchmarking of real-world scenarios, with the kinds of data, databases, datastores, and SQL queries that large enterprises really run, to measure what the benefits of the federated database approach are compared to old-school data warehousing like Teradata or the new school running Snowflake in the cloud.

I think Teradata is rediscovering itself. The Vantage platform is a prime example of it. I think, when it comes to performance for Enterprise Analytics, Teradata is really the king.

To auto scale up / out you need to manage the infrastructure and have it ready and available

You typically end up needing to throw a lot of memory resources to make queries faster

Lots of moving pieces (Starburst engine + Ranger + Storage + infrastructure, etc.)

The TCO becomes prohibitive and more complicated as you increase usage given all the pieces needed.

Egress costs on each query depending on where the data is located vs the Starburst layer.

What a load of old polemic nonsense.

Very nice article. I would like to read more similar stories to know how the industry is moving or focusing to add value to its customers.

What is this article? It makes no sense, jumps from one un-related topic to another, has no connection to the title, and why are we talking about Hadoop in 2022?

Nice article. It will be great to see more from you on data management.