At this point in supercomputing, it’s becoming an anomaly to see an upcoming double-digit petaflops system not using AMD for CPU and GPU, but the National Renewable Energy Laboratory will be taking a more traditional route for the “Kestrel” machine.

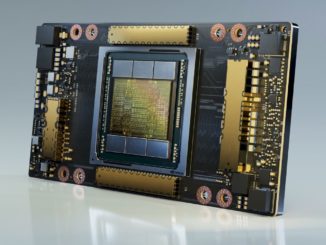

The newly announced system will be capable of 44 petaflops peak performance when it goes live in 2023. Even though we might expect the HPE Cray EX supercomputer to sport a future Intel Xeon processor like “Granite Rapids,” NREL and HPE confirmed today that Intel “Sapphire Rapids” will be the host CPU, with acceleration coming from a forthcoming Nvidia GPU called the A100NEXT Tensor Core GPU.

This is the first we have heard of the term A100NEXT, so we have to assume this will be one of the GPUs in Nvidia’s next round of improvements, which will likely be announced formally next year. Nvidia CEO Jensen Huang says the company will unveil new GPUs, CPUs, and networks every two years, and it could very well be that this A100NEXT is that news in advance, albeit without much detail.

If we were to speculate on the A100NEXT Tensor Core GPU, it will probably be a die shrink of the A100 to 5nm with more compute units, perhaps doubling them via a chiplet design. After all, Nvidia is already at 54 billion transistors on the A100, which starts to get tricky yield-wise. By splitting a roughly doubled transistor budget across four chiplets of about 25 billion transistors each, the odds of getting good silicon go up, just in time for real-world exascale and ever-growing AI training.
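To put rough numbers on the yield argument, here is a minimal sketch using the simple Poisson yield model, in which the chance of a die having zero defects falls off exponentially with its area. The die areas and defect density below are illustrative assumptions, not Nvidia or foundry figures, and nothing here is known about the actual A100NEXT design.

```python
import math


def poisson_yield(die_area_mm2: float, defects_per_cm2: float) -> float:
    """Poisson yield model: probability that a die has zero defects."""
    area_cm2 = die_area_mm2 / 100.0
    return math.exp(-area_cm2 * defects_per_cm2)


# Hypothetical inputs, chosen only for illustration.
MONOLITHIC_AREA_MM2 = 800.0   # one very large die, near the reticle limit
CHIPLET_COUNT = 4             # same total silicon split four ways
DEFECTS_PER_CM2 = 0.1         # assumed defect density

monolithic = poisson_yield(MONOLITHIC_AREA_MM2, DEFECTS_PER_CM2)
chiplet = poisson_yield(MONOLITHIC_AREA_MM2 / CHIPLET_COUNT, DEFECTS_PER_CM2)

# Under this model the chance that four untested chiplets are all good
# equals the monolithic yield. The gain comes from testing chiplets
# individually: a defect scraps one small die rather than the whole large one,
# so the fraction of fabricated silicon that is usable tracks the chiplet yield.
print(f"monolithic die yield: {monolithic:.1%}")
print(f"single chiplet yield: {chiplet:.1%}")
```

Real yield models are more involved, and chiplet packaging adds its own costs and losses, so this only shows the direction of the effect.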

Aside from NREL using what will be an “older” CPU via the Sapphire Rapids choice, we also have to wonder why NREL did not decide on Nvidia’s “Grace” CPU, which will be available in the 2023 timeframe. It is likely a matter of guaranteeing delivery of the system, which does real mission-critical work and can’t be subject to the kind of Intel-driven delays that hit the “Aurora” supercomputer at Argonne. Further, while an Arm-based supercomputer of this magnitude would be quite the story, it could be that no one wants to go first, or at least no one with such a clear practical science mission as NREL has.

Even though Huang says large-scale supercomputers only drive around one percent of the company’s datacenter business, these are still important symbolically for the company that created the concept of GPU supercomputing over a decade back. AMD is a credible threat to Nvidia’s GPU dominance at the upper end of HPC and Intel has a strong enough story to keep that two-year cadence a driving force.

NREL chooses to spend its innovation points, so to speak, less on novel compute and more on energy efficiency, which is no surprise given its mission within the US Department of Energy. This has meant a firm commitment to vendors including Intel and HPE, although the introduction of Nvidia GPUs might mean a new loyalty will build, depending on how many codes can benefit from GPU acceleration.

As for NREL, the Intel tradition is strong. The current 8 petaflops “Eagle” supercomputer, based on Intel Skylake processors, is an all-CPU machine that replaced the “Peregrine” system. The HPE commitment is also strong at NREL, with the previous systems based on HPE designs that paid particular attention to power and cooling efficiency. “Peregrine” was the first supercomputer deployed with HPE’s “Apollo” warm water cooling technology, which captured 97 percent of datacenter heat and used it to keep nearby buildings warm in cold Colorado winters.

While this is not NREL’s first dance with HPE, it is its first foray into the Cray system architecture via HPE’s purchase of Cray. All of this will be delivered via an HPE Cray EX system with Slingshot fabric, which should help NREL handle both data-intensive and AI workloads. NREL went all-HPE/Cray with its storage infrastructure as well, opting for over 75 petabytes in a Cray ClusterStor E1000 system.

The HPE Cray EX system NREL chose is built around liquid cooling, which will lead to an annualized average PUE rating of 1.036 for the datacenter facility. Showcasing high energy efficiency is key for NREL, as is pioneering new research in that area. NREL supports energy research in renewables as well as areas like transportation, manufacturing, materials science, and more.
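For a sense of what a PUE of 1.036 means in practice: power usage effectiveness is total facility energy divided by the energy delivered to the IT equipment, so anything above 1.0 is overhead for cooling, power conversion, and the like. A quick sketch of the arithmetic follows; the 5 megawatt IT load is a hypothetical figure for illustration only, not a published number for Kestrel.

```python
def overhead_fraction(pue: float) -> float:
    """Non-IT energy (cooling, power delivery, etc.) per unit of IT energy."""
    if pue < 1.0:
        raise ValueError("PUE cannot be below 1.0")
    return pue - 1.0


def facility_power_mw(it_power_mw: float, pue: float) -> float:
    """Total facility power implied by a given IT load and PUE."""
    return it_power_mw * pue


KESTREL_PUE = 1.036            # annualized average cited for the facility
HYPOTHETICAL_IT_LOAD_MW = 5.0  # assumption for illustration only

print(f"overhead: {overhead_fraction(KESTREL_PUE):.1%}")  # prints "overhead: 3.6%"
print(f"facility draw: {facility_power_mw(HYPOTHETICAL_IT_LOAD_MW, KESTREL_PUE):.2f} MW")
```

In other words, for every 100 watts reaching the compute hardware, only about 3.6 watts more would go to everything else in the facility.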

With that in mind, the last few years have shown speedy progress in blending HPC and AI. With the addition of advanced GPUs for training, we can expect NREL to blaze some new trails in HPC applications with a deep learning component.

According to HPE, there has already been collaboration with NREL on AI/ML technologies to monitor, automate and improve operational efficiency, including resiliency and energy usage, in datacenters, and an initiative to demonstrate hydrogen fuel cell-powered datacenters to deliver smarter, more energy-conscious computing environments.

“HPE has a long-standing collaboration with the National Renewable Energy Laboratory (NREL) where we have developed joint high performance computing and AI solutions to innovate new approaches that reduce energy consumption and lower operating costs,” said Bill Mannel, vice president and general manager, HPC, at HPE. “We look forward to continuing our relationship with NREL and are honored to have been selected to deliver an advanced supercomputer with Kestrel that will significantly augment the laboratory’s efforts in making breakthrough discoveries of new, affordable energy sources to prepare for a sustainable future.”

For a renewable energy company it seems they chose a higher power use over energy efficiency.

I think one thing overlooked with the AMD GPU offerings, at least journalistically, is that the supercomputing centers at the national labs may want more control of the software stack to ensure the longevity of their software development. In particular, for the expertise levels associated with exascale performance, the fact that AMD ROCm is open source may be a significant advantage, while for petascale configurations much less so.