The first exaflops-capable supercomputers are just around the corner, and to celebrate this milestone-to-be and discuss its ramifications, the US Department of Energy hosted a national “Exascale Day” discussion. If there was a common theme in this feel-good event, it was that the exascale era will be unlike any that came before it.

The panel, moderated by Hyperion Research’s Earl Joseph, was anchored by three key execs at the trio of DOE facilities that will deploy the country’s first exascale supercomputers: Rick Stevens at Argonne National Laboratory, Jeff Nichols at Oak Ridge National Laboratory, and Michel McCoy at Lawrence Livermore National Laboratory. That threesome was bracketed by Doug Kothe, who runs the agency’s Exascale Computing Project (ECP), and Steve Scott, chief technology officer at Cray (now part of HPE), the vendor that will be delivering the three initial exascale machines.

It is something of a cliché to believe that the current era is always the exceptional one, but the five panelists made a pretty good case that the next ten years of HPC will be a watershed, not just for the underlying hardware but for the HPC application landscape itself.

Scott set the stage by observing that going from terascale to petascale was primarily an evolutionary transition. During that time, Moore’s Law was more or less operable and the hardware-software landscape was relatively stable. “This is not going to be an evolutionary decade coming up,” predicted Scott.

The basis of his argument is that the slowdown in Moore’s Law and the related stagnation in energy efficiency have delayed our entry into exascale computing and will continue to shape hardware design going forward. That delay, Scott said, is evident in the fact that the historical ten-year cadence between the attainment of gigaflops, teraflops, and petaflops has not held for exaflops. If it had, an exascale system would be up and running this year.

As a result, systems are getting bigger and more power-hungry. For example, the 1.5-exaflops “Frontier” supercomputer headed to Oak Ridge in 2021 will be the size of two basketball courts, have 90 miles of cabling, and draw 30 MW of power. Its predecessor, the 200-petaflops “Summit” supercomputer, uses a mere 13 MW. Yes, energy efficiency is rising, but as Nichols pointed out, Frontier’s thirst for energy means the lab will have to spend millions of dollars more per year in electricity when it makes the move from petascale to exascale. That’s a trend that can’t go on ad infinitum.
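The Frontier and Summit figures above lend themselves to a quick back-of-the-envelope check. The sketch below uses only the peak performance and power numbers quoted for the two machines to compute performance per watt, then estimates the extra annual electricity bill. The electricity price (`PRICE_PER_KWH`) is a hypothetical value chosen purely for illustration, not a figure from Oak Ridge, and the calculation assumes both machines draw their quoted power around the clock.

```python
# Back-of-the-envelope comparison of Frontier and Summit.
# Performance and power figures are the ones quoted in the article.
FRONTIER_FLOPS = 1.5e18  # 1.5 exaflops (peak)
FRONTIER_WATTS = 30e6    # 30 MW
SUMMIT_FLOPS = 200e15    # 200 petaflops (peak)
SUMMIT_WATTS = 13e6      # 13 MW

HOURS_PER_YEAR = 24 * 365
PRICE_PER_KWH = 0.05     # ASSUMPTION: hypothetical price in USD, not from the article


def gflops_per_watt(flops: float, watts: float) -> float:
    """Energy efficiency in gigaflops per watt."""
    return flops / watts / 1e9


def annual_cost_usd(watts: float, price_per_kwh: float) -> float:
    """Cost of drawing `watts` continuously for a full year."""
    kwh = watts / 1e3 * HOURS_PER_YEAR
    return kwh * price_per_kwh


frontier_eff = gflops_per_watt(FRONTIER_FLOPS, FRONTIER_WATTS)
summit_eff = gflops_per_watt(SUMMIT_FLOPS, SUMMIT_WATTS)
extra_cost = annual_cost_usd(FRONTIER_WATTS - SUMMIT_WATTS, PRICE_PER_KWH)

print(f"Frontier: {frontier_eff:.1f} GF/W")
print(f"Summit:   {summit_eff:.1f} GF/W")
print(f"Extra electricity per year: ${extra_cost / 1e6:.1f}M")
```

Under these assumptions, Frontier delivers about 50 gigaflops per watt versus roughly 15 for Summit, an efficiency gain of more than 3X, yet its absolute power draw still more than doubles. At the assumed price, the extra 17 MW works out to about $7.4 million a year, in line with the “millions of dollars” Nichols described.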

The Moore’s Law and energy efficiency slowdowns are driving HPC toward new technologies and architectures, said Scott. One visible example is that all three of the first US exascale systems (Aurora, Frontier, and El Capitan) will employ GPUs. Although GPUs are less general-purpose than CPUs (and usually more difficult to program), they deliver much better performance and performance per watt. “We’re moving toward an accelerated style of computing,” observed Scott.

Another ramification of slowing processor advancement is the aforementioned growth in system size. That will require proportionally more network bandwidth, and more network infrastructure in general, to handle the increased node counts. Scott also noted that storage is being pulled into the server (mostly in the form of solid-state media) to further alleviate the system network bottleneck. Similarly, memory is being placed onto the processor package, in the form of high bandwidth memory, to save energy and increase processor performance.

But perhaps the biggest development in the exascale era is what will happen on the application front. Argonne’s Rick Stevens believes the confluence of AI with traditional simulations is going to transform the very nature of high performance computing. “That’s the thing that I think is going to be yet another sea change in how we do science,” he said.

Such a conviction is well-known to our readership, given that we’ve been reporting on this trend since we were founded five years ago. But Stevens thinks the AI-simulation mashup will change not only how theoretical science is performed, but how experimental science is done as well. For example, he envisions that AI will enable the creation of automated labs that can be daisy-chained together to drive a new kind of experimental workflow that is much more efficient and powerful than the labor-intensive labs we’ve grown up with.

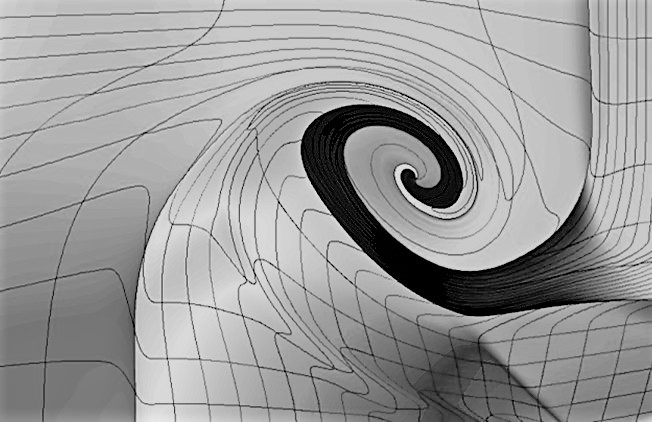

In the theoretical realm, Stevens expects machine learning will increasingly be used to replace portions (sub-models) of traditional simulations based on partial differential equations. This is already being done by developers in areas like quantum chemistry, high energy physics, and materials science. Even early attempts at this have seen sub-model speedups as high as 1,000X and even 10,000X. Stevens predicts that within five years, the kinds of problems that will be accelerated by the merging of AI and simulations will “blow our minds.”

More generally, he expects the combination to be applied to many DOE application domains, in areas like photovoltaics, energy storage materials, and reactor safety. He also thinks it will be relevant to developing new classes of polymers that are environmentally friendly in both their manufacture and their degradation. In a more exotic vein, Stevens thinks AI will be used to design new types of organisms, both to study the inner workings of biology and to harness them for practical purposes. “We’re not doing our grandfather’s HPC here,” he said.

Kothe chimed in that DOE codes aimed at things like turbine engine design, nuclear reactors, subsurface carbon sequestration, biofuels, power grid optimization, seismic hazard risk assessment, and additive manufacturing, as well as a whole host of scientific discovery applications, are going to get a huge boost from exascale computing. The benefits won’t be confined to the exascale realm, though. As Kothe pointed out, the software stack being developed under the ECP effort will apply to future HPC systems of more modest means as well.

Since exascale supercomputers will enable machine learning applications to be run at scale, Stevens believes these systems will become of much greater interest to those outside the traditional HPC community – in particular, web services companies and other internet-based businesses. They may not care much about theoretical science but are very interested in using AI to decipher the behavior of their customers. “It’s going to be a new world as we build out these systems,” he predicted.

At Lawrence Livermore, McCoy is understandably more focused on the stockpile stewardship codes that are used to maintain the country’s nuclear arsenal. But even here, exascale is poised to break new ground. That’s because as nuclear weapons age, they become increasingly difficult to simulate. Therefore, the codes have to become more powerful and predictive than they have been in the past. And that necessitates three-dimensional simulations.

“The nasty truth is that up until now we have basically had to run most of our routine calculations in 2D,” said McCoy, “simply because running in 3D, the turnaround time was so long, the analyst would forget the question before he got the answer.” Although the codes are capable of 3D simulations, today’s machines just don’t have enough computational horsepower to run them at that level for day-to-day work.

On Sierra, the world’s second most powerful supercomputer, they recently ran a high-resolution 3D calculation in less than a day. When El Capitan is delivered to Lawrence Livermore National Lab in late 2022, they’ll be able to run these 3D models much more extensively, running whole series of them while quantifying the uncertainty of the calculations. “In other words, 3D can become the new 2D,” said McCoy, adding that exascale is arriving in just the nick of time to deal with the aging nuclear stockpile.

For his part, Scott concluded that we are unlikely to reach the next HPC milestone, zettaflops, with current silicon-based CMOS technology. For that, we will have to look toward non-von Neumann hardware, such as neuromorphic computers, and other post-exascale technologies. “The next decade is going to be all about having significantly different approaches to how we do computing,” he said.
