Even though the race to exascale computing is based entirely on an arbitrary milestone, it does serve to focus the HPC community’s attention on advancing the state of the art in supercomputer hardware. The software that must run on those supercomputers has to follow suit, or there is no point in the leap ahead.

The US Exascale Computing Project (ECP), which is administered by the Department of Energy, is most often associated with the drive toward exaflops-capable hardware. But the project is also charged with making the software ecosystem exascale-capable, and this is arguably the more complex effort. That’s not only because of the large number of software components involved, but also because the DOE is using this opportunity to improve the interoperability and usability of the entire software stack.

To get some sense of how complex that actually is, you just had to watch Michael Heroux of Sandia National Laboratories describe the various software activities currently taking place across the project. Heroux, who also happens to be the ECP’s director of software technology, delivered this presentation at Hyperion Research’s HPC User Forum earlier this month.

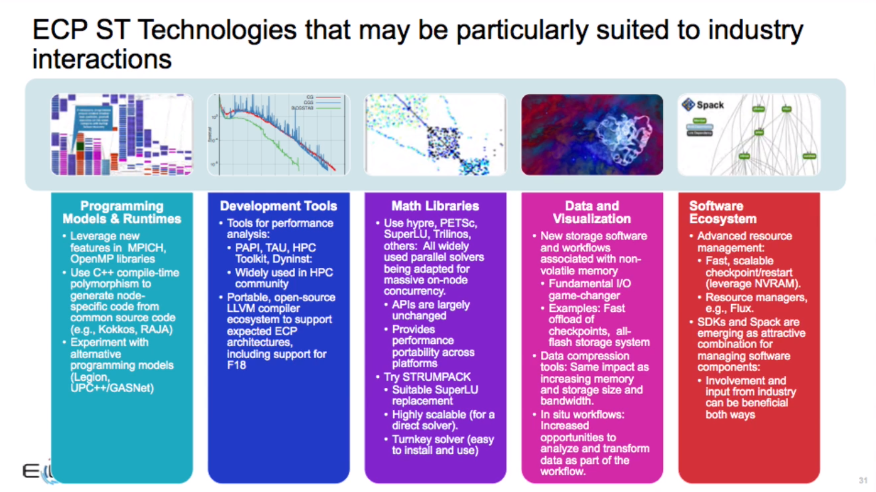

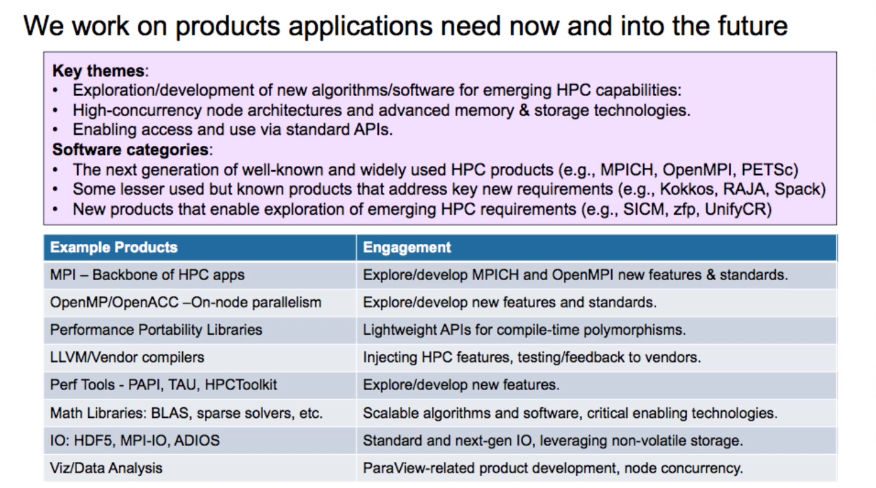

The ECP software technology (ST) work encapsulates the entire HPC software stack and toolset, including development tools, math libraries, programming models and runtimes, and the catch-all of data and visualization. A fifth area that spans the other four is software ecosystem and delivery, which Heroux says is focused on “getting stuff out the door.”

The applications themselves fall outside Heroux’s area of responsibility, but they inform much of the work in his software technology group. That’s a consequence of the need for greater scalability and portability on future exascale platforms, and the need for more comprehensive support for accelerators. Overshadowing these requirements is the more fundamental shift from simple simulations to multiscale, multiphysics codes, which requires greater interoperability across libraries and new software development tools.

The ECP application effort comprises 25 codes relevant to the DOE mission, covering areas like nuclear fission and fusion, turbine wind plants, biofuels, combustion engines and gas turbine design, advanced fossil fuel combustion, power grid planning, and carbon capture. And since the DOE also encompasses the National Nuclear Security Administration (NNSA), the effort also needs to support the stockpile stewardship program, which requires some of the most computationally intensive simulations that the agency does. Plus, since the DOE has become the lead government agency for supercomputing, its mission has expanded to support more basic science as well. That includes applications in earth and climate science, computational chemistry, cosmology, particle and high-energy physics, bioscience, and health care.

To move to exascale, those applications will have to rely to a large degree on what the software technology effort is able to accomplish. At the highest level, the goal of the ST work is to construct a coherent software stack that will enable developers to write applications that run effectively across the exascale target hardware.

Several years ago, when exascale was more of an abstract concept than a reality, there was a lot of talk about exotic new exascale frameworks and programming languages that would deliver transformative software development experiences. But Heroux says the emphasis today is on bringing existing HPC software, like MPI, forward into the exascale realm. Although some of the ST effort is focused on R&D for novel approaches, it’s much less about new programming models and environments than it is about upgrading the old ones for the next generation of hardware.

There’s also a renewed focus on software quality, an area that has largely been neglected in the HPC community. Heroux thinks there’s an opportunity to bring more advanced software practices from other communities to improve HPC software. “We have not historically been rewarded for being good software engineers,” he admits.

But the heart of the ST work is upgrading the HPC software stack itself, which comprises algorithms and application libraries. These will become available as open source components to the DOE labs, as well as to other government agencies like the NSF, NASA, and NOAA, which are collaborating with the DOE on some of this work. Libraries for things like MPI, OpenMP, and OpenACC will be even more widely shared, since many of the open source versions of these packages are used worldwide in both public and private organizations.

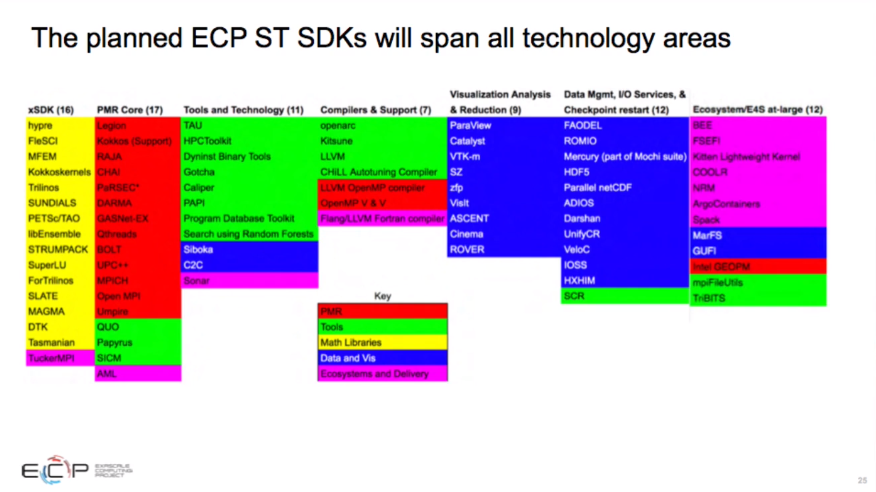

One big thrust is the development of software development kits (SDKs), which in this context are composites of related packages, built that way to make them more interoperable and consistent. The impetus came from developers who were building multiscale, multiphysics codes that often brought together multiple related libraries from different codes. Because of disparities in the data structures being used, a function from one library sometimes interacted with functions from other libraries in an anomalous manner. The creation of a unifying SDK eliminated these inconsistencies. The initial effort brought multiple math libraries under a single SDK, but this will be extended to encapsulate other package collections as well. Heroux says SDKs will become a key software delivery vehicle for ECP products.
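To make the data-structure problem concrete, here is a minimal sketch, not taken from any ECP SDK. It assumes two hypothetical libraries: one that assembles a sparse matrix as a list of (row, column, value) triplets, and one whose matrix-vector product expects compressed sparse row (CSR) storage. The names `CsrMatrix`, `coo_to_csr`, and `csr_matvec` are invented for illustration; the point is only that agreeing on one shared format and one conversion routine removes a class of mismatches.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass
class CsrMatrix:
    """Compressed sparse row storage, the shared format in this sketch."""
    n_rows: int
    row_ptr: List[int]   # row_ptr[i]:row_ptr[i+1] indexes the entries of row i
    col_idx: List[int]
    values: List[float]


def coo_to_csr(n_rows: int, triplets: List[Tuple[int, int, float]]) -> CsrMatrix:
    """Convert (row, col, value) triplets, in any order, into CSR storage."""
    ordered = sorted(triplets, key=lambda t: (t[0], t[1]))
    row_ptr = [0] * (n_rows + 1)
    for row, _, _ in ordered:
        row_ptr[row + 1] += 1          # count entries per row
    for i in range(n_rows):
        row_ptr[i + 1] += row_ptr[i]   # prefix sum gives row start offsets
    return CsrMatrix(
        n_rows=n_rows,
        row_ptr=row_ptr,
        col_idx=[col for _, col, _ in ordered],
        values=[val for _, _, val in ordered],
    )


def csr_matvec(matrix: CsrMatrix, x: List[float]) -> List[float]:
    """Compute y = A x for a CSR matrix (stand-in for the second library)."""
    y = [0.0] * matrix.n_rows
    for row in range(matrix.n_rows):
        for k in range(matrix.row_ptr[row], matrix.row_ptr[row + 1]):
            y[row] += matrix.values[k] * x[matrix.col_idx[k]]
    return y


if __name__ == "__main__":
    # Triplets as the hypothetical assembly library might emit them, unordered.
    triplets = [(1, 0, 3.0), (0, 0, 2.0), (0, 1, 1.0)]
    matrix = coo_to_csr(2, triplets)
    print(csr_matvec(matrix, [1.0, 2.0]))
```

Without the shared conversion step, each pair of libraries would need its own ad hoc glue code, which is where the inconsistencies described above tend to creep in.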

The heart of the software technology effort is upgrading and delivering all the commonly used HPC software packages, which have been aggregated into the “extreme-scale scientific software stack,” or E4S. Heroux describes this collection as “the kitchen sink of HPC software.” It includes everything from OpenMPI, MPICH, OpenMP, and OpenACC, to storage layers like HDF5 and ADIOS, performance tools like PAPI and TAU, math libraries like BLAS and sparse solvers, and visualization tools like ParaView. It also encompasses a number of open source LLVM and vendor compilers. E4S currently includes 46 packages, but that number is expected to grow substantially.

One tool that the ST work has taken to heart is Spack, an open source package manager devised specifically for HPC. It’s designed to make installing scientific software a lot easier and more flexible. Spack has racked up about 150,000 downloads over the past year and currently manages over 2,800 software packages. ECP uses it as a meta-build tool that sits on top of all the software products it will provide. Not surprisingly, the project is also a contributor to the tool.
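As a rough illustration of one job a package manager has to do, the sketch below orders a set of packages so that each is built after the packages it depends on. This is not how Spack works internally and does not use Spack’s API; the dependency map is a simplified, hypothetical one made up for the example, and `build_order` is an invented helper built on Python’s standard `graphlib` module (Python 3.9 or later).

```python
from graphlib import TopologicalSorter

# Hypothetical, simplified dependency map: package -> packages it needs first.
# These edges are illustrative only, not real package metadata.
DEPENDENCIES = {
    "zlib": set(),
    "mpich": set(),
    "hdf5": {"zlib", "mpich"},
    "adios": {"hdf5", "mpich"},
    "paraview": {"hdf5", "mpich"},
}


def build_order(dependencies):
    """Return a list in which every package appears after its dependencies.

    Raises graphlib.CycleError if the dependency map contains a cycle.
    """
    return list(TopologicalSorter(dependencies).static_order())


if __name__ == "__main__":
    for step, name in enumerate(build_order(DEPENDENCIES), start=1):
        print(f"{step}. build {name}")
```

A real HPC package manager also has to choose versions, compilers, and build variants for every package in the graph, which is a much harder problem than the ordering shown here.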

Currently, over 80 software technology products are under development. According to Heroux, his effort is on track, with plans for containerized delivery of software via SDKs and E4S already underway.
