There is a certain level of impatience in the IT industry to create truly composable infrastructure from disaggregated compute, storage, and networking. It seems to be just as hard to break things apart into their respective components as it is to get the technology together to stitch it all back together again with what amounts to a virtual motherboard for a composed server.

The problem that composability solves is easy enough to state, even if it is hard to do. If all of the compute, main memory, and auxiliary storage were networked together and the interconnect fabric was fast enough and had enough bandwidth, then a system or cluster or any size could be created on the fly to look like a big NUMA box or a more generic distributed cluster to run whatever any workload required at the moment. Right now, servers have relatively fixed ratios of compute, memory, storage, networking, and other I/O, and that means you have to plan for the peak loads of any given application stack and buy hardware accordingly. This results in overprovisioning of capacity, and that is wasted money. While virtualization and containers have helped by bin packing more workloads into a given machine, it would be far better to just create the actual machine an application wanted at the time and scale that up and down as needed.

We do not doubt that we will get to the glorious composable future eventually, but a lot of things have to change before they can.

Hewlett Packard Enterprise has been waving the composable banner for a few years now with its Synergy systems, which made their debut back in December 2015 and which now have over 1,600 customers who are kicking the tires on HPE’s second pass on composability. (The “Moonshot” modular systems, launched in 2012 and updated in 2013 with the “Redstone” and “Gemini” systems, were arguably the first pass at composability by HPE.)

Dell, which has become the dominant supplier of servers in the world in recent quarters, has taken a slower approach to composable systems, testing out some ideas with its custom systems for hyperscalers and also doing elements of modularity – a key part of the composability of systems – with its PowerEdge FX line, launched in November 2014. Back in May, Dell lifted the curtain a little bit on its next step towards composable systems aimed at enterprise customers, the PowerEdge MX, which is at the heart of the company’s Kinetic infrastructure initiative. Just as HPE has been focusing on memory-centric architectures – The Machine is a good example of this – to drive composable systems, Dell has been doing much the same thing. But the main memory controllers are on the processors these days and therefore main memory is attached to the CPU in a most uncomposable fashion – something we discussed at length with techies at Dell when it first started talking about the PowerEdge MX machines and the Kinetic strategy. Despite this fact, both HPE and Dell are moving as much of their futuristic systems into the field, knowing full well that it will take interconnect fabrics (almost certainly based on silicon photonics) and protocols like Gen-Z, which HPE helped establish and which Dell is adopting, to glue disaggregated memory into a single space that clusters of compute can chew on to do their work.

Right now, both HPE and Dell are laying the groundwork for truly composable systems, and that means moving away from the blade server architectures of the past decade and ditching approaches that, at the time, seemed like the right thing to do. The difference can be illustrated well by comparing Dell’s PowerEdge M1000e blade server chassis with the new PowerEdge MX enclosure. With the PowerEdge M1000e, the blades with compute, DRAM, and persistent storage slide into the front and the networking slides into the back; they are connected by a midplane that has to be able to support the bandwidth and latency needs for customers for a long time – the M1000e has had one midplane upgrade in ten years – and this relatively static glue between the compute and storage on one side and the networking on the other did not seem like a very big deal given all of the other benefits of blade servers in terms of the ease of networking and sharing peripherals, all of which saved money. But with the PowerEdge MX systems, there is no midplane. Everything is connected to everything else using a standard interconnect fabric, and in this case it is 25 Gb/sec Ethernet switching that has RoCE remote direct memory access capabilities coming off the server and generally feeding up to 100 Gb/sec Ethernet switches that also support RoCE. This is the new baseline for networking among hyperscalers and cloud builders, following on from 10 Gb/sec server uplinks and 40 Gb/sec switch fabrics. Dell uses industry standard connections between the server nodes, storage, network adapters, and switches. It is, in essence, a tiny disaggregated hyperscale datacenter in a box rather than a blade server as we know it, but it makes use of the modularity and form factor density of blade servers just the same. Something that hyperscalers and cloud builders, who by far prefer minimalist rack-mounted servers, so not do.

Actually, the PowerEdge MX will scale across ten enclosures in its initial configuration, which Brian Payne, director of product management and marketing for the PowerEdge business at Dell, tells The Next Platform is a sufficiently large enough deployment size for large enterprises. The scale can grow from there, as needed, but the important thing right now is to get started down the road.

“We have got to take a different approach than the market has to date around composability,” says Payne. “We really need to think about how we can enable full disaggregation of a server to dial up the right resources at the right time for a workload. The idea behind Kinetic infrastructure is to rethink how we put together compute, memory, storage, and I/O for the diverse set of workloads that customers have, and with the right management tools, so they can respond to rapid changes. If you think about composability, it naturally lends itself to modularity, with components completely disaggregated and yet in a fully composable way.”

By the way, this is one example where the hyperscalers and the cloud builders have not, as far as any of us know, already invented. They do not have composable systems as we are talking about them. That’s a wonder, really. We expected them to have created this already. Hmmmm. To their credit, they do have pretty flexible infrastructure, due mostly to the vast Clos fabrics that define a datacenter with 100,000 servers, all accessible as one.

In any event, Dell had been hinting at what the PowerEdge MX systems would be back in May, but now that it is getting ready to ship these partially composable machines, it is giving out some more detail about them ahead of the VMworld conference next week, where it will be showing off the new iron and OpenManage and iDRAC software and middleware that is the core of the Kinetic composability stack at this point.

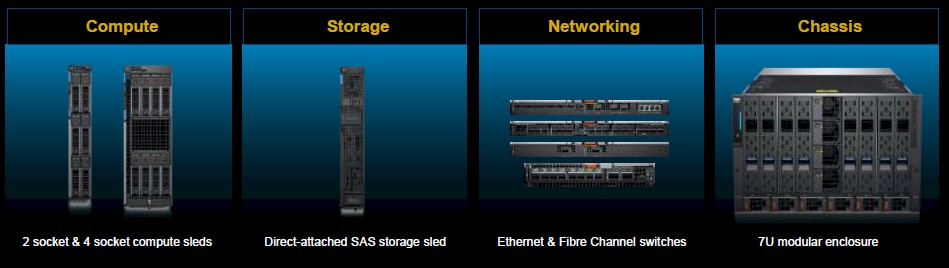

The starting point for the PowerEdge MX composable systems is the MX7000 chassis. It comes in a 7U form factor that has room for eight single-width server nodes or four double-width server nodes. The chassis can hold up to seven storage sleds. As you can see, the sleds are all oriented vertically as with many blade servers in the past, which in theory can simplify the removal of heat form a rack of machines. (This turns out to be harder than it looks, hence the horizontal nature of most server and storage racks at the hyperscalers and cloud builders.) The MX7000 enclosure can support three different I/O fabrics, and has redundancy built in, and Dell is promising to support at least server processor generations and from 400 Gb/sec Ethernet and beyond in the initial MX7000 chassis.

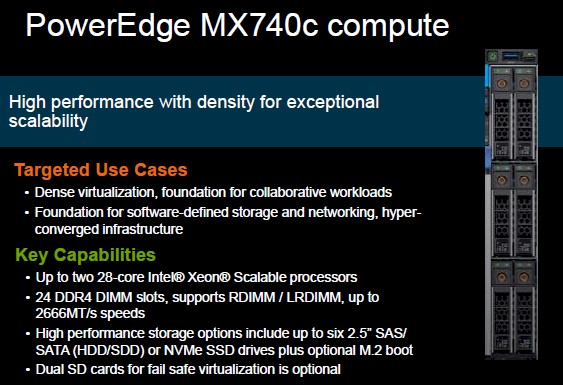

The single-wide server sled, called the PowerEdge MX740c, has room for six 2.5-inch storage devices on the front and has room for two of Intel’s latest “Skylake” Xeon SP processors. The MX740c can use any of the Xeon SP processors, including the top bin 28-core parts that run at 205 watts a pop, and has room for 24 memory sticks across those two processors. Both registered DDR4 and load reduced DDR4 memory can be used, and at speeds of up to 2.67 GHz. Dell has a variety of SAS and SATA drive options based on disks or flash, as well as NVM-Express flash SSD and M.2 boot flash options.

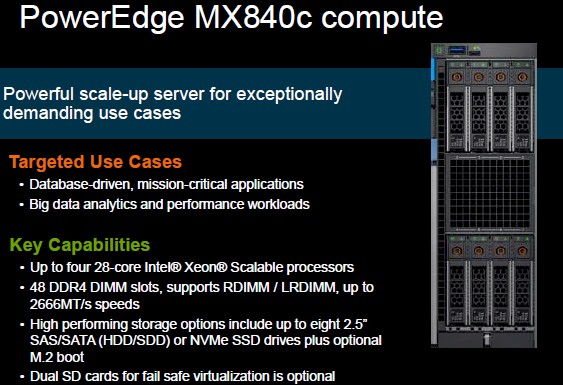

The PowerEdge MX840c server sled is basically a double-wide sled that gangs up twice as many processors and memory slots as the MX740c into a four-way NUMA node. Because the NUMA interconnects take up space, this sled only has room for eight drive bays instead of the twelve you might expect. This node also supports the full range of Xeon SP processors.

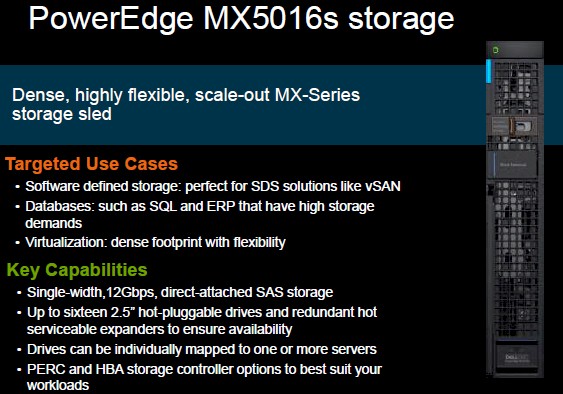

On the storage front, the PowerEdge MX5016s sled has room for sixteen 2.5-inch SAS drives, which link to the server nodes through 12 Gb/sec SAS switches that allow for individual drives to be mapped to particular servers within the chassis or across as many as ten PowerEdge MX enclosures – the maximum management domain at the moment of the OpenManage Enterprise Modular Edition software that Dell has created to control freak the whole shebang. In this sense, the storage in the PowerEdge MX line is actually composable today, but it will take an Ethernet fabric and a protocol like Gen-Z and maybe CCIX to extend that composability to GPUs, FPGAs, and other devices.

That scale may be a little on the light side, we think, since ten enclosures only deliver a maximum of 80 skinny servers or 40 fat ones. We also think that Dell needs to include server sleds based on AMD Epyc and Cavium ThunderX2 processors, which we think will happen over time. It would be nice to have some InfiniBand networking options for the HPC and AI crowds as well, which are in some ways converging because of the intense latency needs they have for their networks.

At this point, the PowerEdge MX supports a mix of Ethernet and Fibre Channel switching modules; the Etehrnet is used to link the server nodes to each other and to the outside world and the Fibre Channel is used to link to traditional SANs that, we think, will eventually be replaced by hyperconverged and disaggregated storage over the long haul. The MX5108n Ethernet switch has eight 25 Gb/sec ports that downlink to the servers in an enclosure and then has two 100 Gb/sec, one 40 Gb/sec, and four 10 Gb/sec ports that can link to other enclosures or out to the Ethernet backbone as needed. The MX7116n fabric expansion module has sixteen 25 Gb/sec downlinks to the servers plus two fabric expansion ports to link out to the MX fabric, while the MX9116n fabric switching engine has sixteen of the 25 Gb/sec server downlinks, two 100 Gb/sec ports, two unified Ethernet/Fibre Channel ports that deliver a pair of 100 Gb/sec Ethernet or eight 32 Gb/sec Fibre Channel ports, plus a dozen fabric extenders. Finally, there is the MXG610s Fibre Channel switch, which has sixteen 32 Gb/sec internal Fibre Channel ports, eight 32 Gb/sec unified Ethernet/Fibre Channel ports running through SFP+ ports, and two unified uplinks that provide a pair of QSFP and four 32 Gb/sec Fibre Channel ports. When all is said and done, you can link to the servers in a variety of topologies and rail configurations using 25 Gb/sec Ethernet and 32 Gb/sec Fibre Channel for SANs and have uplinks to the network or to storage running at 100 Gb/sec or 32 Gb/sec, respectively.

The PowerEdge MX gear outlined above will start shipping on September 12. Pricing has not yet been announced, but it cannot command much of a premium over generic rack servers or companies won’t invest in it.

Interesting article. However please tell me where is the composability? The fact that there is no midplane does not mean that this is composable? At the end I see a lot of quickspecs which is a typical evolution of a blade enclosure, and limitations that grow up to 10 enclosures but which is still a practical limitation of hardware… Look at what gartner says about composability, and I don’t see it in the Dell solution…