The best way to make a wave is to make a big splash, which is something that Andy Bechtolsheim, perhaps the most famous serial entrepreneur in IT infrastructure, is very good at doing. As one of the co-founders of Sun Microsystems and a slew of networking and system startups as well as the first investor in Google, he doesn’t just see waves, but generates them and then surfs on them, creating companies and markets as he goes along.

Bechtolsheim was a PhD student at Stanford University, working on a project that aimed to integrate networking interfaces with processors, when Scott McNealy and Vinod Khosla asked him to be Sun Microsystems’ first chief technology officer. Sun was wildly successful, moving from workstations into systems, and in 1995 Bechtolsheim left with plenty of dough in his back pocket to start a company called Granite Systems to make cheaper Gigabit Ethernet switches. After being in business for only a little more than a year, he sold it to Ethernet routing giant Cisco Systems, giving that company its foundation in Ethernet switching with its Catalyst 4000 line of iron.

Five years later, as the dot-com boom was going bust, Bechtolsheim came back to the network-system interface problem and founded a company called Kealia, which made monster InfiniBand modular switches and a line of Opteron-based servers that had the design philosophy of supercomputers but were aimed at enterprises. Sun Microsystems, which was not having an easy time in servers by 2004, bought Kealia and brought Bechtolsheim back into the fold, and the basic architecture of these systems lives on in the Sun product line today, particularly the Exadata database clusters.

Having solved this problem, Bechtolsheim moved on to the next order-of-magnitude wave in networking, 10 Gb/sec Ethernet, co-founding Arista Networks with David Cheriton, a Stanford University professor who led the Distributed Systems Group and who was a co-founder of Granite Systems with Bechtolsheim as well as an early investor in Google. (Sergey Brin and Larry Page were Cheriton’s students, and when Bechtolsheim whipped out a check for $100,000 on Cheriton’s front porch to give the two the money to start the search engine company, Cheriton followed it up with a $200,000 check of his own.) Another of Cheriton’s students, Ken Duda, is a co-founder of Arista Networks, which dropped out of stealth mode in 2008 when Bechtolsheim stopped working so much at Sun and conceded he was spending most of his time at a new company. A year and a half later, Arista Networks launched its first gear, modular switches sporting 384 10 Gb/sec ports and running its Extensible Operating System (EOS) variant of Linux, and it followed up in March 2011 with top-of-rack switches based on Broadcom’s Trident+ and Fulcrum Microsystems’ Bali switch ASICs.

Getting an edge in networking often means being contrarian, and the initial push by Arista Networks was to use merchant switch silicon, to focus on a more flexible network operating system that would appeal to hyperscalers and cloud builders, and to push the bandwidth waves as hard as possible, encouraging the merchant chip makers to innovate and iterate faster. 40 Gb/sec Ethernet was admittedly just a stepping stone to 100 Gb/sec, and it was necessary because it was easier to get the signaling and optics up to speed by ganging up four lanes of 10 Gb/sec than by ganging up ten. The heat and expense of ten lanes was awful, which is why the early 100 Gb/sec products were really only used for high-end routing and switching by carriers. The hyperscalers – notably Google and Microsoft, the latter of which has been Arista Networks’ largest customer since it started shipping those top-of-rack switches in 2011 – along with Arista Networks, partner Broadcom, and competitor Mellanox Technologies formed the 25G Ethernet Consortium back in 2014. They wanted the industry to move to 25 Gb/sec signaling on Ethernet lanes, which would make it possible to build 100 Gb/sec switch ports with only four lanes and server ports with 25 Gb/sec or 50 Gb/sec of capacity with only one or two lanes.

The 25G Ethernet Consortium won that battle, and the IEEE backed its standard, the first time that had ever happened.
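The lane arithmetic behind the 25G push is just multiplication: port speed equals lane count times per-lane rate. The sketch below uses only the nominal lane rates named above; it is an illustration, and it deliberately ignores the encoding and FEC overhead that makes real line rates slightly higher (10 Gb/sec Ethernet, for instance, signals at 10.3125 GBaud with 64b/66b encoding).

```python
# Nominal Ethernet port speeds as lanes x per-lane rate (Gb/sec).
# Encoding/FEC overhead is ignored; these are the headline rates
# used in the text, not exact line rates.

def port_speed(lanes: int, lane_gbps: int) -> int:
    """Aggregate bandwidth in Gb/sec for a port built from ganged lanes."""
    return lanes * lane_gbps

def lanes_needed(port_gbps: int, lane_gbps: int) -> int:
    """Smallest lane count that reaches the target port speed."""
    return -(-port_gbps // lane_gbps)  # ceiling division on integers

configs = {
    "40G (4 x 10G)": (4, 10),
    "early 100G (10 x 10G)": (10, 10),
    "25G-era 100G (4 x 25G)": (4, 25),
    "25G server port (1 x 25G)": (1, 25),
    "50G server port (2 x 25G)": (2, 25),
}

for name, (lanes, rate) in configs.items():
    print(f"{name}: {port_speed(lanes, rate)} Gb/sec")

# A 100 Gb/sec port needs ten lanes at 10G but only four at 25G,
# which is the heat and cost argument the consortium was making.
print(lanes_needed(100, 10), lanes_needed(100, 25))
```

Fewer lanes per port means fewer SERDES, fewer optical channels, and less power per port, which is the whole economic case in one line of arithmetic.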

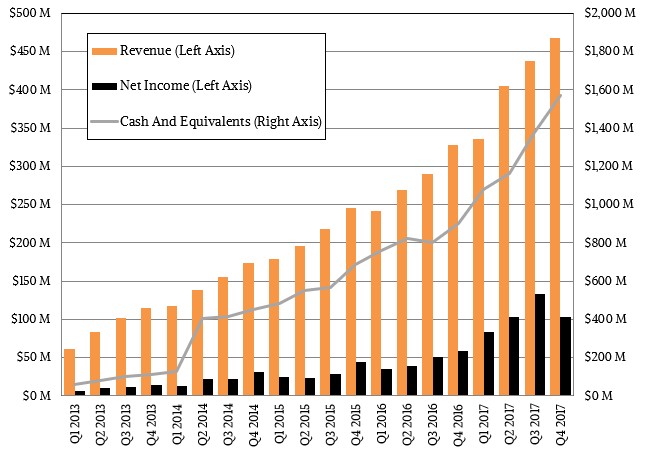

Arista Networks made a lot of money from the 10 Gb/sec ramps, and has done even better during the 40 Gb/sec and 100 Gb/sec waves. The company recently reported its financial results for the final quarter of 2017: in that period, Arista Networks brought in $407.2 million in product sales and another $60.7 million in services revenues, for a total of $467.9 million, and it brought $103.8 million to the bottom line. Arista Networks has found its profitable niche, holding the line between the whitebox switch vendors affiliated with ODMs and the bigger switching OEMs that tend to sell to regular enterprises, which do not usually have the scalability and software flexibility needs of the hyperscalers. That said, more and more enterprises want to behave like hyperscalers, and they are starting to buy infrastructure in a similar manner. This is one reason why Arista Networks was propelled to $1.65 billion in sales in 2017 and could convert a very respectable 25.7 percent of revenue to a pool of black ink. Jayshree Ullal, Arista Networks’ chief executive officer and a former Cisco executive whom Bechtolsheim and Cheriton brought in to run the company when it launched, conceded that Arista had an exceptionally good year last year, with sales up 45.8 percent overall, and that it will face tough compares in 2018, so the company is telling Wall Street to forecast something more like 20 percent to 30 percent growth. Machine learning workloads were the big additional revenue booster in 2017, and there is inherent volatility in selling to hyperscalers and cloud builders.

“If you asked Ita [Brennan, our CFO] and me whether we would have 50 percent growth increases in 2017, we certainly did not forecast it,” Ullal said. “It came upon us suddenly because the cloud titans suddenly had a use case and an application. So, when we look at the overall year and when we look at how to plan the year, just like we did in 2017, we think a growth rate in the 20 percent to 30 percent range with an average of 25 percent is a very, very good growth rate. It is not cautious. It is realistic. And if something really good happens beyond that, that’s great. Or if something really bad happens, we will let you know. Hope is not a strategy. This is our best effort at predicting what that growth would be.”

Anyone who has ever run a business knows this is the best that you can do. It is refreshing to see someone say it.
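The headline numbers above hang together, and a few lines of arithmetic make the relationships explicit. This is a minimal sketch using only figures quoted in this story; the "implied 2016 revenue" and the 2018 dollar range are our own back-of-the-envelope derivations from the stated growth rates, not figures Arista reported.

```python
# Recompute Arista Networks' headline figures from the numbers cited.
# Derived values (implied 2016 revenue, 2018 range) are illustrative
# arithmetic only, not company-reported results or guidance in dollars.

q4_product = 407.2    # $M, Q4 2017 product revenue
q4_services = 60.7    # $M, Q4 2017 services revenue
q4_net = 103.8        # $M, Q4 2017 net income

q4_total = round(q4_product + q4_services, 1)
q4_margin = q4_net / q4_total

fy2017_revenue = 1650.0   # $M, full-year 2017 sales
fy2017_margin = 0.257     # share of revenue converted to profit
fy2017_growth = 0.458     # year-over-year sales growth

fy2017_net = fy2017_revenue * fy2017_margin
implied_fy2016 = fy2017_revenue / (1 + fy2017_growth)

# Apply the 20 percent to 30 percent growth range from the earnings call.
low, high = (fy2017_revenue * (1 + g) for g in (0.20, 0.30))

print(f"Q4 total revenue: ${q4_total:.1f}M, net margin {q4_margin:.1%}")
print(f"FY2017 net income: ~${fy2017_net:.0f}M")
print(f"Implied FY2016 revenue: ~${implied_fy2016:.0f}M")
print(f"FY2018 at 20-30% growth: ${low:.0f}M to ${high:.0f}M")
```

The fourth-quarter margin works out a bit lower than the full-year 25.7 percent, which is consistent with the "exceptionally good year" framing rather than a steady quarterly run rate.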

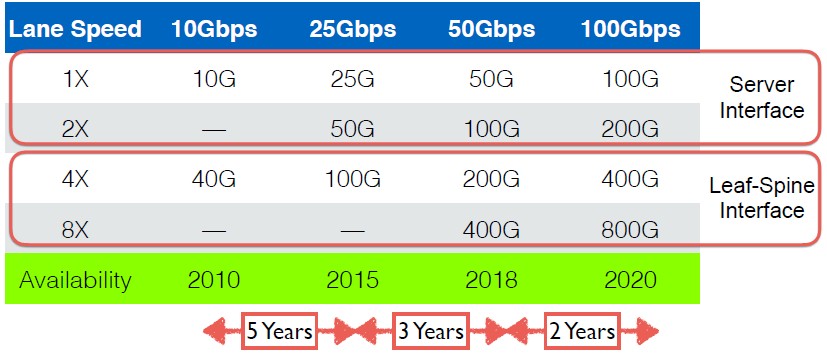

The interesting bit that Ullal talked about in the call was the transition to 400 Gb/sec Ethernet, which is underway in the development labs and which will be the next revolution in Ethernet speeds to hit the datacenter. Here is what it looks like from a high level that Wall Street can appreciate, according to Ullal:

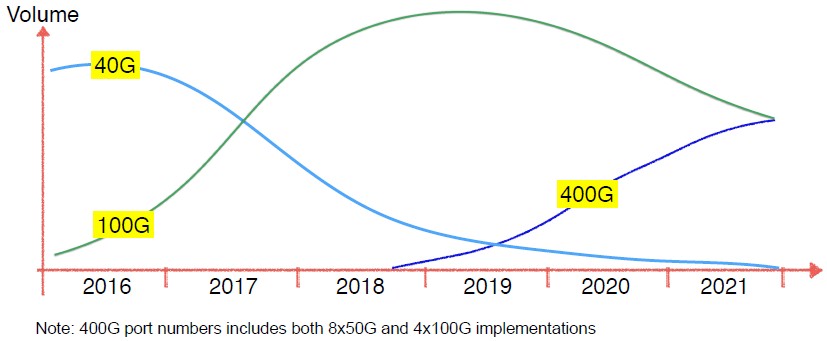

“We saw that 10 Gb/sec had a very long tail for almost ten years, and then many vendors jumped into 40 Gb/sec, as did Arista,” she explained. “But for 100 Gb/sec, we believe first of all that it has an extremely long tail, unlike 40 Gb/sec. So we think the relevant bandwidth point is really going to be 100 Gb/sec for a very long time, with the option to do 10 Gb/sec, 25 Gb/sec, or 50 Gb/sec on the server or storage I/O connections; 400 Gb/sec is going to be very important in certain use cases, and you can expect that Arista is working very hard on it. And we will be as always first or early to market. The mainstream 400 Gb/sec market is going to take multiple years. I believe initial trials will be in 2019, but the mainstream market will be even later. And just because 400 Gb/sec comes, by the way, does not mean 100 Gb/sec goes away. They are really going to be in tandem. The more we do 400 Gb/sec, the more we will also do 100 Gb/sec. So they are really together – it is not either or.”

Arista Networks launched its initial 100 Gb/sec switches, based on the 25G standard that it championed and using Broadcom “Tomahawk” ASICs, back in September 2015, and in December 2016 it augmented its line with a set of 100 Gb/sec switches based on ASICs from XPliant, then part of ARM server chip upstart Cavium, which is now being acquired by Marvell.

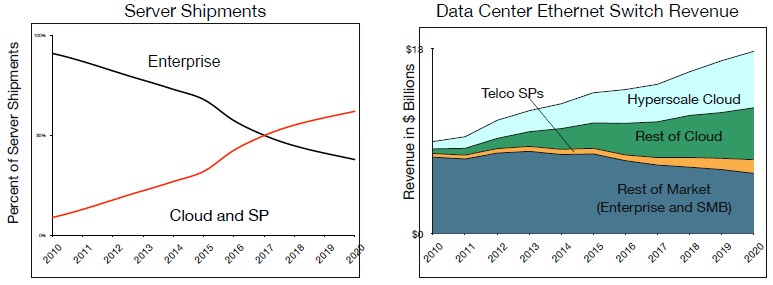

In a recent Tech Field Day session that he hosted, Bechtolsheim laid out a detailed roadmap that the industry might take – and probably will take if it knows what is good for it – toward 400 Gb/sec Ethernet switching in the datacenter. Before we get into the details, it is probably helpful to look at the changing server and switching landscape, which is encapsulated in these two charts from Dell’Oro Market Research, which made these forecasts in January 2017. They are still representative of the way the future will look:

As you can see, as companies move more and more applications to public clouds or use applications created by hyperscalers, the hyperscalers and cloud builders are beginning to dominate server and switch shipments. (This chart mixes shipments on one side and revenues on the other, but we think that because hyperscalers and cloud builders pay a lot less than enterprises for gear, they don’t yet dominate revenues – or profits – in these two pillars of IT. We suspect that they already dominate server processor and switch port shipments worldwide.) The point is, the cloud builders and hyperscalers are firmly in charge of the compute and networking agenda, and from here on out, with some exceptions for very high-end supercomputing, it is their technologies that enterprises will adopt: directly, by paying for cloud capacity or using hyperscale applications; by embracing similar technologies in their own datacenters; or indirectly, as OEMs assemble more normal enterprise gear from a lot of the same piece parts.

To date, Arista Networks has sold on the order of 15 million ports across its installed base of customers, and it takes less and less time to get to each next million ports. Part of the reason is that the 100 Gb/sec ramp is getting underway in earnest now, and we think that is because of the demands of the 25G Consortium members, who wanted to get 2.5X the bandwidth for somewhere around 1.3X to 1.5X the cost in something like 1.2X the power envelope. Bechtolsheim said in his presentation that 1 million 100 Gb/sec ports shipped across all vendors in 2016, that over 5 million shipped in 2017, and that somewhere between 10 million and 12 million ports are expected to ship in 2018.
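Those consortium targets translate directly into cost and power per bit, which is what actually drives adoption. The sketch below is a rough illustration built only on the approximate multipliers and shipment counts quoted above; it treats "over 5 million" as exactly 5 million, so the 2018 growth multiples are upper bounds.

```python
from fractions import Fraction

# Approximate 25G Consortium targets versus the 10 Gb/sec lane generation:
# 2.5X the bandwidth for ~1.3X-1.5X the cost and ~1.2X the power.
bandwidth_gain = Fraction(25, 10)                 # 10 -> 25 Gb/sec per lane
cost_range = (Fraction(13, 10), Fraction(15, 10))
power_gain = Fraction(12, 10)

# Relative cost and power per Gb/sec compared with the 10G generation.
cost_per_bit = [c / bandwidth_gain for c in cost_range]
power_per_bit = power_gain / bandwidth_gain

print("cost per bit:", [float(x) for x in cost_per_bit])  # roughly half
print("power per bit:", float(power_per_bit))             # roughly half

# Industry-wide 100 Gb/sec port shipments cited by Bechtolsheim (millions).
# "Over 5 million" in 2017 is treated as 5 here, a simplifying assumption.
shipments = {2016: 1, 2017: 5, 2018: (10, 12)}
growth_2017 = shipments[2017] / shipments[2016]
growth_2018 = tuple(n / shipments[2017] for n in shipments[2018])
print("2017 growth:", growth_2017, "x; 2018 growth:", growth_2018, "x")
```

Exact fractions keep the ratios free of floating-point noise; the point is simply that each bit got roughly half as expensive and half as power-hungry, which is why the ramp was so steep.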

In referring to 100 Gb/sec Ethernet and its follow on, Bechtolsheim had this to say: “This is certainly the fastest growing Ethernet standard in terms of revenue growth, ever. 400 Gb/sec Ethernet will kick in at the end of 2018 and ramp through 2019 and into 2020. It will not ramp faster than 100 Gb/sec Ethernet because it is more expensive. So 100 Gb/sec will continue to ship in high volume and perhaps it will cross over in 2021.”

The important thing for the hyperscalers and cloud builders is that 400 Gb/sec will drop right into the same spots in the datacenter where 100 Gb/sec is currently used, without having to change the copper cables and fiber lines that are currently used within the rack, across rows, spanning datacenters, and across regions and geographies. This is exactly what Microsoft researchers had predicted would be necessary when everyone started talking at the IEEE back in 2013 about the needs of the 400 Gb/sec Ethernet standard. At that time, the 25G Consortium was a year away from launching, so the 400 Gb/sec we will be getting is different in some respects from what we would have gotten without the hyperscale pressure.

Bechtolsheim does not have much to say about 200 Gb/sec networks, we noted, and is more focused on making the jump straight to 400 Gb/sec. It is no coincidence that this is precisely what Broadcom is doing with its “Tomahawk-3” chipsets, which we talked about a month ago. With the Tomahawk-3, Broadcom is moving from ports that gang up four 25 Gb/sec lanes using non-return-to-zero (NRZ) signaling to ports that gang up eight 50 Gb/sec lanes using four-level pulse amplitude modulation (PAM-4) signaling. This is all well and good, except for one thing. Bechtolsheim – and we presume at least some of the hyperscalers and the cloud builders – wants to move straight to 100 Gb/sec signaling and reduce the lane count, the heat dissipation, and the cost. (The IEEE specification for 400 Gb/sec was originally going to be 16 lanes running at 25 Gb/sec, if you can imagine that beast.)
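To see why the per-lane speed matters so much, it helps to count lanes for a 400 Gb/sec port at each of the options mentioned and to note the symbol rate each lane needs. NRZ carries one bit per symbol and PAM-4 carries two. This is a sketch with nominal numbers only; real lanes run a bit faster to cover encoding and FEC overhead (a 100 Gb/sec PAM-4 lane actually runs at about 53 GBaud).

```python
# Lanes per 400 Gb/sec port at each per-lane speed discussed above,
# plus the nominal symbol rate each lane needs. Nominal values only:
# encoding and FEC overhead push real line rates slightly higher.

BITS_PER_SYMBOL = {"NRZ": 1, "PAM-4": 2}  # NRZ: 2 levels, PAM-4: 4 levels

PORT_GBPS = 400

# (per-lane Gb/sec, modulation)
LANE_OPTIONS = [
    (25, "NRZ"),     # the original 16-lane 400G proposal
    (50, "PAM-4"),   # Tomahawk-3 style, eight 50G lanes
    (100, "PAM-4"),  # the 100 Gb/sec lanes Bechtolsheim favors
]

results = {}
for lane_gbps, scheme in LANE_OPTIONS:
    lanes = -(-PORT_GBPS // lane_gbps)            # ceiling division
    gbaud = lane_gbps / BITS_PER_SYMBOL[scheme]   # symbols per ns per lane
    results[(lane_gbps, scheme)] = (lanes, gbaud)
    print(f"{lane_gbps}G {scheme}: {lanes} lanes at ~{gbaud:.0f} GBaud each")
```

The 25G NRZ and 50G PAM-4 options run at the same symbol rate; PAM-4 doubles the bits per symbol. Getting from eight lanes to four at the same modulation means doubling the symbol rate, which is why 100 Gb/sec SERDES is the hard part.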

“The only thing that actually makes sense is to go straight to 100 Gb/sec per lane,” Bechtolsheim said in his presentation, noting that the active life for each SERDES speed within switch ASICs is getting shorter and shorter as the bandwidth jumps get bigger and come faster due to the demand by the hyperscalers and cloud builders.

Bechtolsheim predicts, in fact, that the majority of the ports in 400 Gb/sec devices will be based on 100 Gb/sec signaling, not the current 50 Gb/sec signaling, so we can pretty much guess what the “Tomahawk-4” chip from Broadcom might look like and roughly when it will arrive.

Some of these transitions have taken a long time, and the move from NRZ to PAM-4 signaling is one of them. “The issue is economics,” explained Bechtolsheim. “People quickly migrate to products that are cheaper and use less power. We have seen this on the transition from 10 Gb/sec to 25 Gb/sec and from 40 Gb/sec to 100 Gb/sec, that in less than a year – maybe between six months and nine months – the majority of our bookings switched over to the new generation, where the product is better in every metric.” In fact, he added, customers took the products as fast as Arista Networks could make them.

This is a very different dynamic than the slow-paced, predictable, and methodical way that the Ethernet switch market exhibited during the first couple of decades of its existence. The good news is, every IT organization will benefit from the pressure that the hyperscalers and cloud builders are applying – through luminaries like Bechtolsheim – to the switch chip makers and the downstream players who make optical interconnects and wires.

One last thing: Bechtolsheim says that making SERDES go faster than 100 Gb/sec is very difficult. This fast switching is its own kind of barrier. So what do we do to move faster? Crank up the pulse amplitude modulation. By moving to PAM-8 signaling, you can double up to 800 Gb/sec per port at 100 Gb/sec across four lanes, and with PAM-16 signaling, you can get up to 1.6 Tb/sec. If you had to do eight lanes at 100 Gb/sec – and no one is suggesting this is desirable, but it may be necessary – then that takes you up to 3.2 Tb/sec, by our reckoning. This may be the limit using these approaches, just like cramming something on the order of 48 cores to 64 cores on a 3 nanometer extreme ultraviolet (EUV) process on a 300 millimeter wafer might be the practical limit to Moore’s Law using near-current chip manufacturing approaches.

As usual, everything looks like it is going to run out of road about ten years out. That’s what makes the IT industry exciting.

Andreas also co-founded DSSD, which was bought by EMC for what was rumored to be $1 billion.

“By moving to PAM-8 signaling, you can double up to 800 Gb/sec per port at 100 Gb/sec across four lanes”

Actually, PAM-8 adds only one more bit per symbol (baud) than PAM-4, so if we are still signaling at 50 GBaud, we will have 150 Gb/sec per lane, or 600 Gb/sec for a four-lane port.

Moving to PAM-16, we will have 4 bits encoded per symbol, meaning that with four lanes it only goes to 800 Gb/sec per port.

Moving to eight lanes of PAM-16 will get you to 1.6 Tb/sec per port.

Please let me know if I’m wrong …
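The commenter's arithmetic can be checked with the standard relationship between modulation and bit rate: a PAM-N symbol carries log2(N) bits, so per-lane rate is symbol rate times log2(N). The sketch below assumes, as the comment does, a fixed symbol rate of about 50 GBaud. It ignores FEC and encoding overhead, and it ignores the signal-to-noise penalty of packing more levels into the same voltage swing, which in practice is the real obstacle to PAM-8 and PAM-16.

```python
import math

def lane_gbps(gbaud: float, levels: int) -> float:
    """Per-lane bit rate: symbol rate times log2(levels) bits per symbol."""
    return gbaud * math.log2(levels)

def port_gbps(lanes: int, gbaud: float, levels: int) -> float:
    """Aggregate port bit rate for a given lane count and modulation."""
    return lanes * lane_gbps(gbaud, levels)

GBAUD = 50  # assumed fixed symbol rate per lane, as in the comment

for levels in (4, 8, 16):
    four = port_gbps(4, GBAUD, levels)
    eight = port_gbps(8, GBAUD, levels)
    print(f"PAM-{levels}: {lane_gbps(GBAUD, levels):.0f}G per lane, "
          f"4 lanes = {four:.0f}G, 8 lanes = {eight:.0f}G")
```

Under that assumption, PAM-8 gives 150 Gb/sec per lane (600 Gb/sec over four lanes) and PAM-16 gives 200 Gb/sec per lane (800 Gb/sec over four lanes, 1.6 Tb/sec over eight), matching the figures in the comment.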