Medical imaging is one area where hospitals have invested significantly in on-premises infrastructure to support diagnostic analysis.

These investments have been stepped up in recent years with ever more complex frameworks for analyzing scans, but as cloud continues to mature, the build-versus-buy hardware question gets more complicated. This is especially true with the addition of deep learning for medical images in more hospital settings, something that adds more hardware and software heft to an already top-heavy stack.

Earlier this week, we talked about the medical imaging revolution that is being driven forward by GPU-accelerated deep learning, but as it turns out, there is more to the story than automated image analysis. Other areas where machine learning dominates, including speech recognition, are further changing the way radiologists operate, a change that natural language processing giant Nuance is behind.

The image processing side of the deep learning workflow for medical diagnostics is one part of the automation puzzle. On the post-image side is the opportunity for automated analysis and reporting based on a composite of those findings. It is here that Nuance is building a collaborative platform that takes processed medical images and generates final signed legal reports that drive patient care. That is a revolution indeed when one considers the humans in the loop who have historically been required at every step of this workflow. The radiologist still has the final say on the AI output, and if there are problems with the algorithmic approach, they can provide feedback to the developers to help them tweak their code for higher accuracy.

This is all interesting from an applications standpoint, but from our point of view, which is focused on infrastructure, what do changes like this in computationally intensive medical workflows mean for hardware investments at big hospitals? This is an important question because the computational requirements are more demanding than ever before and involve the use of GPU acceleration, something that comes with its own set of programming challenges for the uninitiated.

The answer is a continuing shift to public cloud resources that offer GPUs, which Raghu Vemula, VP of Engineering at Nuance, tells us is increasingly common, especially for radiology departments that are heavy on medical image data. He explains that, contrary to popular belief, large hospitals are increasingly shying away from managing their own on-site compute in favor of cloud. The size of cloud companies, their ability to partner, innovate, and upgrade, and their increased security have driven this shift, particularly in medical imaging and radiology. Further, the complexity of the AI stack and hardware acceleration makes hospitals less likely to want to stay in the hardware management business. That is great news for the big clouds but, according to Vemula, could represent an even bigger opportunity for a company like Nvidia if it could find a way to create and control its own cloud enterprise.

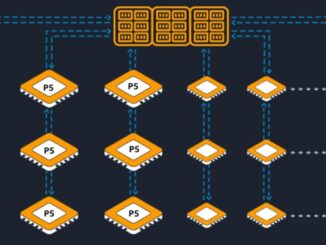

Currently, the platform Nuance has created to generate reports from many sources (diagnostic and otherwise) is built on Microsoft Azure, but Vemula says it could just as easily have been built on AWS. While these clouds are fine for now, since the GPU-intensive platform is in its early stages, when it comes to future scalability the cloud giants might not have what it takes to meet the data- and compute-intensive demands of medical imaging. These infrastructure giants are slower to get the latest GPUs, he says, and full-stack GPU support for developers is also lacking. What would be ideal, he explains, is a cloud created and managed by Nvidia, which could instantly get the latest GPUs and support the broader software stacks that rely on expert CUDA hooks for the highest level of acceleration.

He says that they have looked at on-prem GPU-accelerated server infrastructure, but it would be too difficult to manage its scalability over time, especially with shifts in demand. Although Nuance is in the early stages of weighing the CAPEX/OPEX tradeoffs of its GPU cloud strategy, even DGX-1 type appliances are not going to fit the bill for the company or its ultimate platform users.

“One of the clients told us recently that they have a huge volume of images but they do not want to send those to the cloud because of latency. They put some pressure on us to buy the actual hardware and put it all on-prem. Our architecture does allow for that, but we all had to keep in mind that Nvidia is iterating new processors so quickly and pretty soon, there is all that investment and what we bought is already obsolete.” He says that they have to decide how much latency they can live with to reduce the cost and balance that against having access to the latest hardware.

What Vemula is getting at with his assessment of the state of infrastructure for radiology is that on-premises gear is not going to offer the agility of public cloud in terms of scalability and upgrades. And public clouds are not quite specific or agile enough in their upgrade cycles and developer support for this level of complexity. Meanwhile, off on the horizon is an idea that keeps gaining traction in other market segments: the domain-specific cloud. In this case, as Vemula says, if a company with the size and support of Nvidia could step in and provide its own cloud with tooling specific to radiology, this could become a de facto standard. For now, however, Nuance is building its own platform for sharing and development on a public cloud that is not as robust as it needs.

Although Nvidia rolled out a cloud container service last week, this does not come close to the kind of infrastructure Nuance, and likely many others, require. In conversations over the years with Nvidia, however, we have never gotten the impression that the company wants to be in the cloud business and manage the wide-ranging stack of services required. For now, it can control which clouds get access to its newest processors and at what price, but as more hospitals rely on GPU-accelerated machine learning and deep learning, demands like Nuance’s might come to light more frequently.
