During a trip to Dell in Austin, Texas this week, little did The Next Platform know that the hardware giant and nearby Texas Advanced Computing Center (TACC) had major news to share on the supercomputing front.

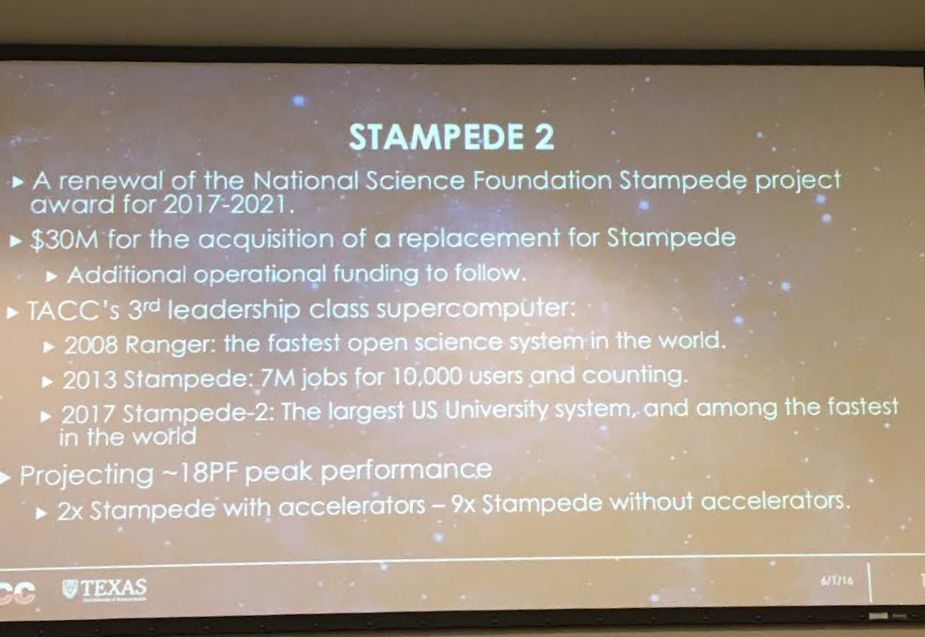

It appears the top-ten-ranked Stampede supercomputer is set for an extensive, rolling upgrade, one that will keep TACC’s spot in the top tier of supercomputing sites and will feature the latest Knights Landing processors and, over time, a homogeneous Omni-Path fabric. The net effect of the upgrade will be a whopping 18 petaflops of peak performance by 2018.

The system will begin its transition to “Stampede 2” in the second half of this year with the addition of its first 500 Knights Landing nodes. As TACC director Dan Stanzione explained to the group of analysts gathered at TACC yesterday, this will not be one of those massive single-system announcements, because the center has to keep production workloads queued and running on Stampede 1 during the course of the upgrade. The current Stampede machine serves many thousands of users in the open science community via its National Science Foundation (NSF) mandate and through proposals from the XSEDE network of projects vying for time on powerful systems, including both Stampede and the range of other supercomputers on site.

Although Stanzione cannot name the post-Broadwell Xeon variant, there is no doubt these will be Skylake-based (probably not unlike what is described here), which means that processor upgrade will happen in 2017. Also of interest is a smaller upgrade phase in 2018, in which some of the system will be equipped with the upcoming 3D XPoint memory. Omni-Path will form a single large fabric, and although TACC will be just at the edge of Omni-Path 2, Stanzione says that integration does not fit with their plans. Even though Knights Landing parts with the integrated interconnect will be arriving around then, TACC is sticking with cards for the first 500 nodes due to availability and will move to the integrated fabric later for the largest part of the expansion, likely in 2017.

The NSF funding for just the acquisition of the system is set at $30 million, which runs from 2017 to 2021. This is yet another in a list of leadership-class machines, Stanzione says, sharing a slide highlighting the historical system investments at the center. “Stampede was a 2 petaflop base system in the nodes with another 9 petaflops if you count all the PCIe cards [Xeon Phi] working as co-processors. This doesn’t have any co-processors, it’s all in a node, which makes it easier from a programming perspective, with twice the peak performance.”

The first Knights Landing upgrade that is just getting underway now is meant to help teams at TACC build experience with users. While they haven’t fixed the node counts in the other two phases, the process will start with those first few rows of replacements so the system can stay active for users.

We can expect to see news about a number of forthcoming Knights Landing-based supercomputers in a couple of weeks at the International Supercomputing Conference (ISC) in Germany (we will be on hand). And as it turns out, the HPC world is already a step ahead of these machines, with plans based on the follow-on architecture from Intel, Knights Hill, which will appear in Argonne National Laboratory’s “Aurora” supercomputer, a system for which Intel is the prime contractor (a rare thing in the last decade in HPC). Some well-detailed systems featuring Knights Landing are hitting the floor soon, including the lead-up to Aurora at Argonne, the 8-petaflop Theta machine. Another system we’ve written about extensively is the Cori machine at NERSC, which is currently in the process of its Knights Landing upgrade. It is hard to say if either of these supers will be outfitted with their new cards in time for the twice-yearly listing of the Top 500 most powerful supercomputers (which takes place at ISC soon), but at the very least, it’s good to see some fresh machines gearing up to refresh the list.

On that note, Stanzione mentioned that although TACC loves its big iron stories, the real direction at the center is moving a bit farther away from the monolithic HPC approach. The NSF and XSEDE projects are diverse, which means the center needs to keep investing in tooling and software and, increasingly, in interfaces and portals for scientific computing users. TACC is already home to massive visualization, OpenStack cloud, and other systems to support such needs, and that’s where a great deal of focus and investment will go in coming years. He says that TACC now has as many web developers creating these portals and tools as it does HPC administrators.

Across all of its systems, TACC employs 135 full-timers, roughly 70 of whom are PhD-level scientists and engineers. They support 12 megawatts of datacenter capacity across three datacenters and have their own chilling plant to support the computational facilities, which pumps around 180,000 gallons per hour to keep everything cool. Overall, Stanzione says they push about a billion compute hours per year across their many thousands of users, with 5 billion user files, 50-60 petabytes of data, and 15 supercomputers, storage systems, machine learning systems, cloud and visualization clusters.

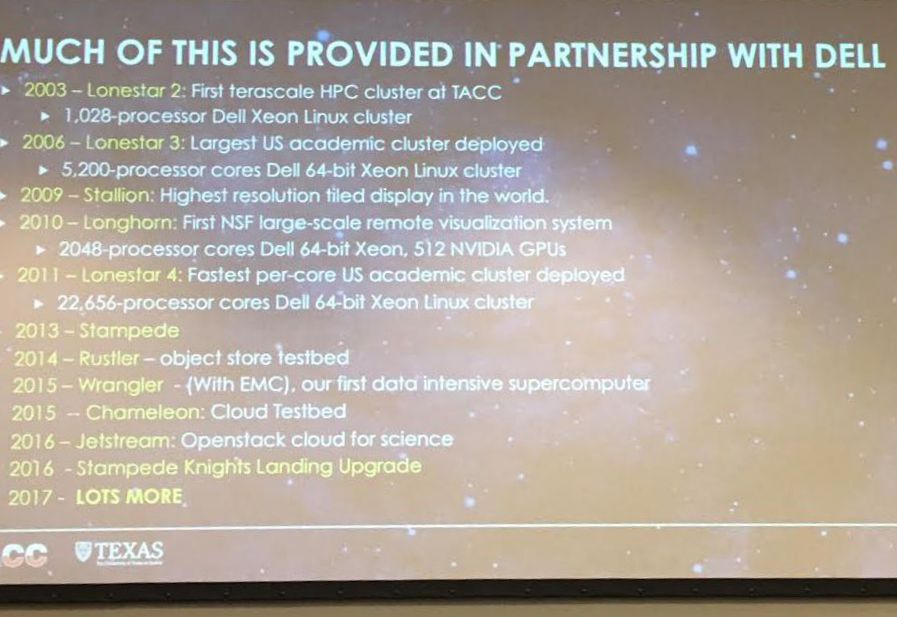

As one might imagine given the hometown advantage (both Dell and TACC are in Austin), the center tends to go with Dell machines, as seen in the slide above. Although the Stampede upgrade was put to competitive bid, TACC officials say Dell won on price and support.
