Much has been made of the ability of The Machine, the system with the novel silicon photonics interconnect and massively scalable shared memory pool being developed by Hewlett Packard Enterprise, to already address more main memory at once across many compute elements than many big iron NUMA servers. With the latest prototype, which was unveiled last month, the company was able to address a whopping 160 TB of DDR4 memory.

This is a considerable feat, but HPE has the ability to significantly expand the memory addressability of the platform, using both standard DRAM memory and as lower cost memories such as 3D XPoint from Intel and Micron Technology and memristors come to market from HPE itself. (Although memristors seem like the perpetual technology of the future at HPE, and they may always be if 3D XPoint actually comes out next year as plans in DIMM form factors from Intel. . . .) The fact that The Machine can expand up to exascale is one of the reasons why the Exascale Computing Project at the US Department of Energy has just awarded some research and development funds to HPE.

To get a sense of the possibilities, we had a chat with Kirk Bresniker, chief architect at Hewlett Packard Labs, the research and development arm of the systems maker, who walked us through how the memory space of The Machine could be extended by orders of magnitude and therefore be appropriate for many exascale jobs.

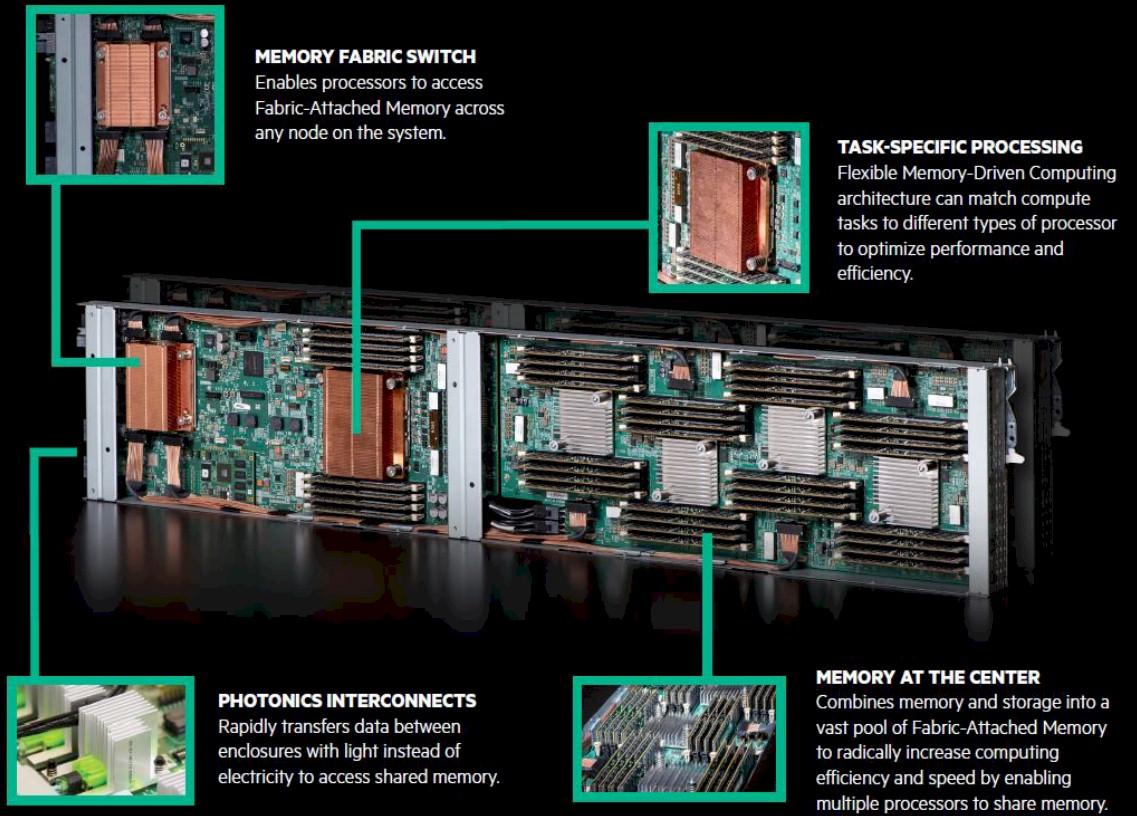

But before we get into that, let’s take a look at what HPE has done with the latest iteration of prototype for The Machine. The basic premise of The Machine is to make memory centric to the architecture, not compute, and to use very fast silicon photonics to lash together great gobs of persistent, memory-addressable storage into a single address space and then hang elements of compute, in the form of the system-on-chip packages that are common in some types of consumer devices and appliance systems today, off of that main memory. We drilled down into the architecture and how it might relate to traditional HPC simulation and modeling workloads two years ago, and we also took a deep dive into a modestly sized, DRAM-based prototype that HPE unveiled last fall. With the memristor that HPE is developing with Western Digital being delayed and Intel also delaying its shipping of the “Apache Pass” 3D XPoint DIMM sticks, which were supposed to be a key component of the “Purley” server platform that will support the impending “Skylake” Xeon processor from Intel, HPE has had no choice but to use DRAM to make prototypes of The Machine. So, when the power is off, the data is lost. But that will change as these persistent memories come to market, and we suspect that customers might want to pool a mixture of non-persistent DRAM and other persistent memories like 3D XPoint or memristor to have a memory pool with fast and slow areas.

For processing elements, the initial prototypes have been created using Cavium’s ThunderX ARM processors, but the whole point of The Machine is that any kind of compute can be plugging into the memory pool. The HPE and SGI teams know how to do X86 compute, so choosing ARM helped extend HPE’s knowledge as well as allowing for larger memory addressing than is possible with a Xeon or Opteron.

The latest prototype, which is significant because it now has rack scale, coupled together 160 TB of addressable memory and 1,280 compute cores into something that is big enough to actually test to see if it can do useful work, and with what efficiencies. These elements are linked through the X1 silicon photonics module, which is at the moment is implemented using 16 nanometer processes from Taiwan Semiconductor Manufacturing Corp, and the fabric bridge, which is implemented in FPGAs rather than being etched on custom ASICs. (Using FPGAs is a standard practice in the development of new compute and networking chips.) HPE only had a few nodes up and running with a modest amount of memory in the testbed it rolled out last November running a tweaked Linux operating system and a graph analytics inference application based on the GraphLab engine that was actually prototyped on its Superdome X NUMA big iron first. Now, HPE has more nodes, and more X1 modules and FPGAs to create the memory fabric and has expanded the size of The Machine cluster.

To be precise, the cluster has 160 of the fabric-attached memory controllers, each addressing a block of 1 TB of DDR4 memory. Each 32-core ThunderX SoC has four of these X1 silicon photonics memory controllers linked to it, and they work through the FPGA bridges that are plugged into the second socket of the two-socket ThunderX system board.

There are ten of these basic building blocks in an enclosure, and four of these enclosures in the system, for a total of 40 processors and 160 memory controllers to get that 1,280 processors and 160 TB of address space that they share.

To put this rack-scale variant of The Machine through the paces, Bresniker says that HPE has loaded up a security workload atop the GraphLab engine that looks for denial of service attacks against DNS servers. Specifically, the application looks for anomalies that identify servers hitting a web site that are coming from robots rather than from real people. Because it is almost impossible to construct a blacklist against such fleets of zombie machines – there are so many of them, and they change their behavior – graph analytics is used to detect minute telltale patterns of access that give the zombie machines away. (You basically take a very small blacklist and a very small whitelist of sites and based on the behaviors of both, you infer if a new site is either bad or good.)

Graph analytics works best in a single memory space (such as a NUMA machine) because on a more traditional cluster based on Ethernet or InfiniBand, every time you leave a node to access another’s main memory, the latencies are awful, even with RDMA, RoCE, or iWARP flavors of remote direct memory access to bypass the CPU software stack to share data directly between memories on servers linked to each other on the network. Ironically, GraphLab is designed to run across clusters, and HPE has had to tweak it so it can run on a single shared memory instance, and presumably it runs a hell of a lot faster on it, too.

Hyperscalers use clusters to do graph analytics anyway because their graphs are so large they have no choice. But they are no doubt cooking up systems very much like The Machine, we suspect, and probably using Intel’s silicon photonics, too. Maybe they are also testing out The Machine, and if not, then maybe they should be. Ditto for the traditional HPC community, which is used to using MPI for memory sharing but might see an acceleration of simulation and modeling workloads on a massive shared memory machine.

This has always been the dream, after all, and if federated clusters of RISC/Unix machines were affordable with their global addressing schemes, that would have been the future. We have clusters only because of the queuing theory limits and very high costs of scaling up SMP and NUMA architectures, and interestingly, architectures like The Machine are bringing us back to the past because the speed and bandwidth of silicon photonics can make any memory look local to any device, even on a petascale memory complex should anyone have the $35 million to buy the 64 GB memory sticks and probably that much again for the photonics to link it all. Think for a moment about what an exabyte of memory might cost today. . . . How does $35 billion grab you? And that is just for the memory. But man, you could store one heck of a graph in that space.

Enough of that. Back to reality. Or, a possible future.

By comparison, the Superdome X NUMA machine based on Xeon E7 processors (it has sixteen of them) tops out at 24 TB of memory and will soon double up to 48 TB. The UV 300 NUMA machines that were created by SGI and that are now part of the HPE portfolio currently top out at 64 TB, which is the maximum memory addressable by the Xeon architecture right now. (And the Skylake Xeons do not increase this, by the way.) So there is a reason why HPE is using an ARM architecture for the compute in The Machine. Cray, you will remember, still is the memory addressable champion with its “ThreadStorm” processors used in its Urika analytics appliance (formerly known as the XMT), which is based on the old “Gemini” XT interconnect but which scales to 8,192 processors with a total of 1.05 million threads and 512 TB of main memory.

The Machine can, in theory, do better than this.

Bresniker says that the current X1 modules and FPGA fabric bridge can expand to eight enclosures from the current four models without any tweaking, so that is 320 TB of memory right there. Bresniker says there is another factor of four in memory expandability that could be attained by adding another layer of the photonic interconnect on top of the ones linking the machines inside racks – much as datacenter networks have leaf/spine setups. That gets you to 1.25 PB of memory right there, and that would also yield 32 times the number of cores in the system, or 40,960 cores.

“That is about as far as I would want to take this current generation,” Bresniker tells The Next Platform. “If we want to move beyond that, we are aiming at an exascale system by the end of the decade. If I were to take the rest of this year, I bet I could prototype that is knocking on the door of a 500 TB system and find my way towards a 1 PB, but if you want to go up another three orders of magnitude, we really need to get the silicon implementation of these devices and we probably need to get greater distances in the network by moving from multi-mode to single-mode fiber optics.”

Once the Gen-Z consortium has its non-volatile memory address protocol finalized and accepted by the industry, The Machine will support that protocol over its silicon photonics and any memory that supports Gen-Z will snap into this system. Bresniker thinks, in fact, there will be a wide variety of compute and storage in The Machine.

“One of the interesting things about working on The Machine is that people still tend to think in binary terms,” says Bresniker. “They have compute and they have memory as the end points on the fabric. In reality, every piece of compute has at least some cache if not some amount of high bandwidth local memory, and every piece of memory is going to at least have cryptography for security and wear leveling and erasure coding for robustness. So there is no pure end point. There is compute-like and memory-like, but they are all an interesting mix. With an open interface like Gen-Z, we can make those mixed end points on the right process at the right price points, and make small-scale investments and they do not have to develop a full-blown SoC.”

Capacity is only one element of performance on things like graph analytics. So the hardware and software engineers at HP Labs are working together right now to tweak the algorithms that govern memory accesses on the box. Bresniker says that the software engineers will be able to improve performance by one or two orders of magnitude, and adds that the 160 TB edition of The Machine is more stable than expected and delivering about what HPE expected in terms of performance compared to the Superdome X testbed it has been using. The final test results are not done yet, so Bresniker can’t be precise.

I find it funny that Intel wont move past the 64TB limit so they had to use ARM instead.

Has it been said if any other exascale architectures will be shared memory like The Machine?