Getting the ratio of compute to storage right is not something that is easy within a single server design. Some workloads are wrestling with either more bits of data or heavier file types (like video), and the amount of capacity required per given unit of compute is much higher than can fit in a standard 2U machine with either a dozen large 3.5-inch drives or two dozen 2.5-inch drives.

To attack these use cases, Cisco Systems is tweaking a storage-dense machine it debuted two years ago, and equipping it with some of the System Link virtualization technologies that it created for its M Series modular systems aimed at hyperscalers. These M Series machines, which represent a move toward composable infrastructure like Hewlett Packard Enterprise’s Project Synergy effort, were part of its Unified Computing System line of Xeon machines. The M Series has been discontinued by Cisco, mainly because customers and their operating systems are not quite ready for composable systems and because customers wanted more than the single-socket compute node that Cisco was offering, but System Link is living on in the new S Series storage servers that Cisco will start selling next week.

The System Link ASIC, which Cisco created for the M Series hyperscale servers and which is now at the center of the S Series storage server, abstracts away the I/O, networking, and storage inside the chassis, so that each node in the system can be allocated a precise share of those resources. System Link predates Single Root I/O Virtualization (SR-IOV), and it carves up the physical PCI-Express bus in the same way Cisco does in the UCS machines for its virtual network interface cards, which also predate SR-IOV. System Link doesn’t just virtualize the network interfaces, but also creates virtual drives from RAID disk groups on the server. System Link is at the forefront of composable systems, but until we break the hard link between processors and main memory, true composable systems are not possible. That is when the real value of composable systems will arrive.

In the meantime, System Link allows Cisco to make a much more flexible storage server, and one that is a lot better for certain kinds of workloads than ganging up a slew of rack servers with lots of disk drives. In terms of the basic hardware, the current S Series machine is similar to the UCS C3160 and C3260 rack servers that Cisco has been shipping for a few years. The S Series enclosure comes in a 4U form factor and has room for 56 top-mounted 3.5-inch disk drives plus another four drives in slots across the back in each compute node. The S3260 has room for four 2.5-inch disk or flash drives in the back, plus two pairs of 40 Gb/sec virtualized fabric connectors, which Todd Brannon, director of product marketing for the Computing Systems Product Group, tells The Next Platform will shortly be upgraded to 100 Gb/sec links.

The S3260 storage server is currently using a pair of UCS M4 server nodes based on the “Broadwell” Xeon E5 v4 processors from Intel, but will be upgraded to a future generation of Intel processors around the middle of next year. These future processors are, of course, the “Skylake” Xeon E5 v5 chips from Intel, but no one can confirm that and Cisco certainly won’t. (So, in a way, the M Series server is not really gone. It is being repurposed as a storage compute node.) The current M4 nodes support up to 512 GB of memory each, and a range of processors with core counts ranging from 8 to 18.

With the S3260, Cisco allows for up to 256 virtual Ethernet adapters of tunable bandwidth to be set up across each node, which has a pair of 40 Gb/sec ports per node on the back end of the storage server. That is 160 Gb/sec of total bandwidth out to the servers linking into the storage.

The disks can be used as bare metal capacity or have RAID groups set up on a section of the drives, and System Link allows for chunks of that capacity to be allocated to one or the other compute nodes in the box. The S3260 uses 10 TB Helium disk drives from Hitachi at the moment, and for those who need less capacity, drives with 4 TB, 6 TB, and 8 TB capacities are available. Cisco is also offering flash SSDs for the top-loaded bays, but because of bandwidth limits, a maximum of 28 flash SSDs can be put into the box. Cisco is offering 400 GB, 800 GB, and 1.6 TB flash drives in the S3260. If you do the math, you can max the S3260 at 600 TB of disk or 45 TB of flash capacity.
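The math behind those two maximums can be sketched in a few lines of Python. The drive counts and sizes come from the figures above; the 60-drive total is the count used for the priced configuration in this article, and the constant and function names are just illustrative.

```python
# Drive counts and sizes as quoted in the article.
DISK_DRIVES = 60          # the fully loaded disk count used in the pricing example
LARGEST_DISK_TB = 10      # largest helium drive on offer
MAX_FLASH_DRIVES = 28     # capped by bandwidth limits, per Cisco
LARGEST_FLASH_TB = 1.6    # largest flash drive on offer


def max_capacity_tb(drive_count, drive_tb):
    """Raw capacity in TB for a chassis filled with identical drives."""
    return drive_count * drive_tb


disk_tb = max_capacity_tb(DISK_DRIVES, LARGEST_DISK_TB)
flash_tb = max_capacity_tb(MAX_FLASH_DRIVES, LARGEST_FLASH_TB)

print(f"disk:  {disk_tb} TB")
print(f"flash: {flash_tb:.1f} TB")
```

The flash figure comes out at 44.8 TB, which the article rounds to 45 TB.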

To get the same capacity using the UCS C240 rack servers from Cisco would take five units and require 10U of space in a rack instead of the 4U that is occupied by the S3260 storage server. That is considerably less space per unit of storage, and there are four Xeon processor sockets allocated across that 600 TB of capacity instead of ten. So, again, you have to be careful about the ratio of compute to storage and fit the workload to the device. But that S3260 will cost 34 percent less, have 80 percent lower management costs, have 70 percent less cabling, take up 60 percent less space, and use 59 percent less power to deliver 600 TB of capacity than those five UCS C240 rack servers loaded up with drives.
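To make the density and compute-ratio point concrete, here is a rough sketch that works only from the rack units and socket counts quoted above. The helper name and dictionary keys are made up for illustration, and the cost, cabling, and power percentages are Cisco's own figures that this sketch does not try to reproduce.

```python
CAPACITY_TB = 600  # raw capacity both configurations are sized to deliver


def density(rack_units, sockets, capacity_tb=CAPACITY_TB):
    """Return storage per rack unit and per Xeon socket for one configuration."""
    return {
        "tb_per_u": capacity_tb / rack_units,
        "tb_per_socket": capacity_tb / sockets,
    }


# One S3260: 4U, two nodes with two sockets each.
s3260 = density(rack_units=4, sockets=4)
# Five C240 servers: 2U and two sockets apiece.
c240 = density(rack_units=5 * 2, sockets=5 * 2)

space_saving = 1 - 4 / (5 * 2)

print(f"S3260: {s3260['tb_per_u']:.0f} TB per U, {s3260['tb_per_socket']:.0f} TB per socket")
print(f"C240:  {c240['tb_per_u']:.0f} TB per U, {c240['tb_per_socket']:.0f} TB per socket")
print(f"rack space saved: {space_saving:.0%}")
```

The S3260 carries 150 TB per rack unit and per socket against 60 TB for the C240 cluster, and the space saving matches the 60 percent Cisco cites. The same numbers show why the workload has to fit the box: each S3260 socket has two and a half times as much data behind it.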

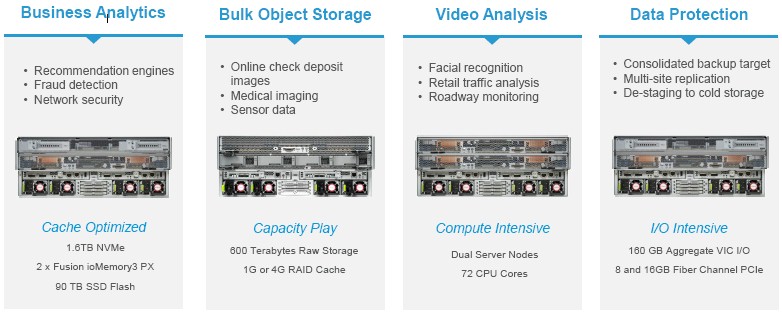

In general, Brannon says that there is a move to do more compute right where the storage is located, and that is true for even seemingly mundane workloads such as video surveillance. Instead of trying to move these large files around, the idea is to do the compute where the fat files are located.

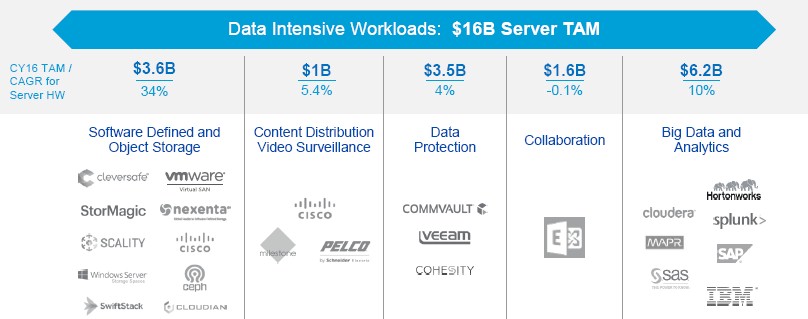

In some cases, this means running Hadoop on these nodes, and one customer using these systems is a large telco that is using Hadoop to do predictive analytics on the failure of devices on its network based on a huge amount of telemetry coming off those devices and its network. (A single UCS domain can have up to 86 PB attached to it.) The S3260 machines can be used as archive servers, too, and as fat nodes for object storage such as Red Hat Ceph, Scality RING, or IBM Cleversafe. Brannon says that these machines are also becoming popular for running Windows Storage Spaces, the Azure-style object storage that Microsoft sells for on-premises use and that is based on Windows Server 2016. Early customers are also using the S3260 as a kind of batch processing layer in a two-tiered system that mixes Hadoop and Cassandra on fat S3260 nodes and Spark on skinny C240 nodes.

An S3260 with 60 of the 10 TB Helium drives and a single compute node with a pair of eight-core E5-2620 processors and 128 GB of main memory costs $112,000, or about 18.6 cents per GB at list price.
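The per-gigabyte figure can be checked with a quick calculation. This sketch assumes decimal units (1 TB = 1,000 GB) and raw rather than usable capacity, neither of which the article states outright.

```python
LIST_PRICE_USD = 112_000
DRIVES = 60
DRIVE_TB = 10
GB_PER_TB = 1_000  # assumption: decimal units, as drive makers quote capacity

raw_gb = DRIVES * DRIVE_TB * GB_PER_TB
cents_per_gb = LIST_PRICE_USD / raw_gb * 100

print(f"{raw_gb:,} GB raw at ${LIST_PRICE_USD:,} list")
print(f"{cents_per_gb:.2f} cents per GB")
```

Under those assumptions the result is a little under 18.7 cents per GB, in line with the roughly 18.6 cents quoted.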

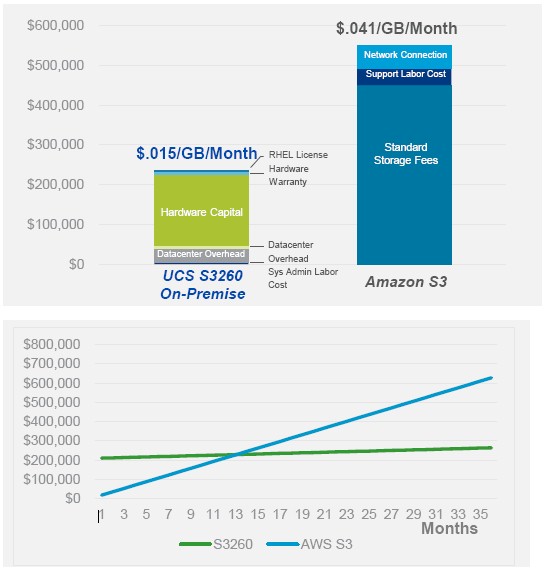

That may seem like a lot compared to the cost of cloud storage, which is perceived as being nearly free – until you actually start reading the data a lot or moving it around between datacenters and regions. Then, it is a whole different story. Cisco wants to make a case for on-premises uses for petabyte-scale data storage, of course, and its engineers ginned up this comparison with the S3 object storage service on Amazon Web Services:

In the above example, the Cisco S3260 has 600 TB of raw capacity, which can be protected running Ceph object storage to yield about the same amount of usable capacity as 420 TB of S3 storage. Over the course of three years, the Cisco on-premises S3260 storage costs around 1.5 cents per GB per month, while the AWS S3 costs somewhere around 4.1 cents per GB per month. The breakeven point, when S3 storage and the S3260 server cost the same, is about 13 months, by the way.
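The breakeven figure follows from the two per-GB rates if you make some simplifying assumptions, which are ours rather than Cisco's: the whole three-year on-premises cost is treated as being paid on day one, the S3 bill accrues at a flat monthly rate, and capacity is counted in decimal GB.

```python
USABLE_GB = 420 * 1_000        # 420 TB usable, decimal units assumed
TERM_MONTHS = 36               # three-year comparison window
ON_PREM_USD_PER_GB_MONTH = 0.015
S3_USD_PER_GB_MONTH = 0.041

# Assumption: the full three-year on-premises cost is spent up front.
on_prem_total = ON_PREM_USD_PER_GB_MONTH * USABLE_GB * TERM_MONTHS
# Assumption: S3 charges accrue evenly, month by month.
s3_monthly = S3_USD_PER_GB_MONTH * USABLE_GB
s3_total = s3_monthly * TERM_MONTHS

# Month at which cumulative S3 spending catches up with the on-premises outlay.
breakeven_months = on_prem_total / s3_monthly

print(f"on-premises, 3 years: ${on_prem_total:,.0f}")
print(f"S3, 3 years:          ${s3_total:,.0f}")
print(f"breakeven:            {breakeven_months:.1f} months")
```

With those inputs the breakeven lands at just over 13 months, consistent with the figure Cisco's engineers arrived at. A real comparison would also have to account for power, space, and staffing on one side and request and data transfer charges on the other, to whatever extent the quoted rates do not already include them.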
