There is an old adage that the best way to predict the future is to create it. While chip maker Intel has a lot of power in the information technology realm, and it arguably has one of the largest slices of profits in the IT sector and is responsible, either directly or indirectly, for a lot of the sales and marketing that goes on for datacenter products, it is still not all-powerful even if it is mighty.

And to get to the cloud infrastructure future that the company envisions, it is not only going to need to corral the sometimes warring factions in the lands of hardware and software, but it is also going to have to lay down some laws and get those factions to lay down their swords and use plows.

This, more than anything else, was the underlying theme of the Cloud Day that the top brass of Intel’s Data Center Group hosted in San Francisco today. Intel may have missed the transition from the PC to the smartphone and the tablet, but the systems side of its business has been keenly aware of the rise of hyperscalers and public clouds and Intel has profited handsomely from the amount of gear these companies are buying as they build out their infrastructure.

Intel has had to work hard to keep that business, because these are some of the toughest and smartest customers in the world and they can – and might – change their infrastructure on a whim because, for the hyperscalers at least, they control their own software stacks down to the Linux operating system they deploy. One need only look at the breadth and depth of the new “Broadwell” Xeon E5 v4 processor lineup, also launched today, to see how the hyperscalers and cloud builders have forced Intel to deliver products specifically for them while also making Xeons suitable for enterprise and HPC customers and myriad storage and networking devices, too.

“It is the on-demand, dynamic nature of cloud that is making it a big disruptor, and that has allowed hundreds of clouds that will deliver millions of services to billions of connected devices generating massive, massive amounts of data,” said Data Center Group general manager Diane Bryant in her opening keynote. “I think we will look back in time and say that the cloud had a bigger impact on technology than the PC did back in the 1990s, and we all think the PC was a pretty big deal, but the cloud is truly going to extend the reach of the digital world and make it massively accessible.”

Intel has been talking eagerly to the outside world about cloud for about the past five years, Bryant added, and the chip maker has some observations about how the industry can go from hundreds of public clouds and perhaps thousands of private ones to the next several tens of thousands of clouds. That means not only converting virtualized servers and hosting companies to true cloudy infrastructure (which means metered pricing, the ability to turn capacity on and off at a whim, and workload orchestration, to use a technical definition) but also adding whole new clouds with specialized services aimed at specific use cases.

In Bryant’s view, the world is diversifying as it is adding more and more clouds, not consolidating. (There used to be many tens of thousands of service providers in the early days of the commercial Internet two decades ago, we would point out, and we now have a much smaller number of much larger ISPs that are providing Internet connectivity for homes and businesses.) The other thing about clouds is that they are, for the most part, consumer facing, with about two-thirds of all cloud services aimed at people in their personal rather than professional environment. (We suspect that the revenue split might be a little different there, with Amazon Web Services driving $7.88 billion in revenue last year and other public clouds and SaaS services aimed at businesses collectively raking in their billions. That said, it is hard to draw such lines, particularly when so many consumer-facing services buy their infrastructure on AWS and other public clouds.)

Another driver pushing for more, rather than fewer, clouds is regulation concerning the storing and movement of data, said Bryant, which will mean companies will keep some compute and storage capacity internally or require that it not leave the specific countries where they do business for compliance reasons. You can’t just put all of the world’s data in the major cities on each continent.

The most interesting thing, perhaps, that Bryant said was that there is a point at which owning your own infrastructure and building your own private cloud makes more sense than moving applications to a public cloud.

“There is now far greater clarity in what would drive one to select a particular cloud, and we see examples of movement from on premise to public cloud and then movement from a public cloud back to an on premise private cloud,” Bryant explained. “This is a very agile, fluid environment with lots of reasons of why you would make a decision. One of them would be scale, and our math says that if you have between 1,200 and 1,500 servers under management, you have sufficient scale to deliver an efficient private cloud.”

After the keynote, we spoke to Jason Waxman, general manager of the Cloud Platforms Group within the Data Center Group at Intel, to have him elaborate a little bit on that assertion about scale.

“There is some tipping point when it comes to scale, and people argue about where that is at,” Waxman told The Next Platform. “There are some people who think you need a million servers, but we think it is on the order of thousands. We looked at this from strictly an economic perspective, and compared buying reserved instances and look at utilization in a cloud service provider versus what you could do if you had your own efficient stack and your own optimized hardware, what would it look like. There are a number of different scenarios that could play out, but what you see is that when you get to a few thousand servers, there is enough critical mass that companies can manage it like a cloud, and companies like Microsoft are making the same bet.”

This was a reference to the Azure Stack, a mini version of Microsoft’s Azure public cloud that will be available later this year and that will allow companies to replicate Azure inside of their own datacenters and, eventually, federate those private Azures that they run with the big public Azure cloud that Microsoft operates.

Only a few weeks ago at the Open Compute Summit in nearby San Jose, Waxman tossed out a statistic that we thought was pretty profound (but not shocking to us because we see which way the wind is blowing for public and private clouds) and that Intel was using as its polestar as it thinks about the products it needs to develop to serve the IT market. Waxman said that by 2025, Intel expects that 70 percent to 80 percent of all servers shipped will be deployed in large scale datacenters, and he quickly qualified to us that a large scale datacenter was one with thousands of systems and one that is engaged in infrastructure, platform, or software services, including the big cloud providers as well as smaller players and service providers and telcos that compete against them. Intel has said previously that its research suggests that about half of the enterprise applications running today are on either public or private cloud infrastructure (using the technical definition of cloud mentioned above) and that by 2020, it expects the percent of applications on clouds to be around 85 percent.

These kinds of statistics are why Intel started the Cloud For All technical and marketing effort last summer, to bring the next several tens of thousands of public and private clouds into existence and to fulfill its own prophecy while at the same time filling its coffers and those of its partners.

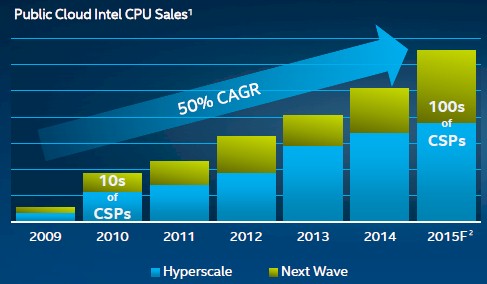

This seems to be playing out Intel’s way, with regional and national clouds popping up and the global, national, and regional telcos and cable providers also getting into the cloud racket. While we have focused on the “super seven” hyperscalers and cloud builders who define the top-end of the market – that is Google, Facebook, Amazon, and Microsoft in the United States and Alibaba, Baidu, and Tencent in China – the next fifty largest cloud builders and service providers that are doing cloud are growing at twice the rate of these behemoths, according to Bryant. This is similar to the data that Intel shared last year, which showed the growth rates for the hyperscalers compared to smaller cloud and communication service providers.

Bryant pulled out a few examples. Swisscom, which is a communications service provider based in Switzerland that has built out its own infrastructure and platform clouds and that will also operate cloudy infrastructure on premises for customers, is growing its datacenter capacity at 30 percent per month, and Flipkart, a big Indian retailer peddling electronics and the largest distributor of smartphones in that country, is doubling its datacenter capacity every year.

The point is that it will not be long before the next several hundred cloud service providers provide as much revenue as the hyperscalers at the top of the food chain. Like maybe in two years, perhaps three. And it will not be long until the next 10,000 drive even more sales, and so on until everyone who wants to build a cloud does and those that don’t run their applications on someone else’s cloud.

Tens of thousands of clouds with thousands of machines each is a lot of machines, by the way, and it is a much, much bigger target than the top seven hyperscalers plus the next fifty cloud builders and service providers down. We think there may be as many as 50,000 organizations worldwide (including the hyperscalers, HPC centers, cloud builders, and large enterprises) that operate at the kind of scale that The Next Platform is focused on. That number is admittedly an educated guess, and there is a very long tail of company size and datacenter capacity that goes along with that.

So if the top hyperscalers have 1 million machines each – a number we will use for the sake of argument – that is a total of 7 million machines. (These hyperscalers tend to buy top bin parts, which is why their contribution to Intel revenue is so high.) Their server footprint is increasing, of course, but they are also cramming much more compute into each generation. So maybe over the course of four years they may buy something like 10 million machines. The next 10,000 clouds times an average of 1,200 to 1,500 machines is somewhere between 12 million and 15 million machines. The next 10,000 down from that could be another 8 million to 10 million, and the next 10,000 organizations – if they stick with on-premises compute at all – could be maybe 5 million to 7 million, and so on. Those hyperscalers might spend something like $20 billion on those 10 million machines, but the next wave of 10,000 will pay slightly more per machine (because of their lower volumes) and it will be something on the order of $30 billion to $40 billion to buy that iron over the course of the next several years. Each wave of 10,000 more clouds represents less money, but it is still more than is spent by the hyperscalers.
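The arithmetic in that estimate is easy to lose track of, so here is a minimal Python sketch that reproduces it. It uses only the round numbers given above; the per-machine prices are derived from those numbers and are not reported figures. Pairing the low spending estimate with the low machine count (and high with high) is our assumption for illustration, and the variable and function names are hypothetical.

```python
# Back-of-envelope check of the server counts and spending estimated above.
# Inputs are the article's round numbers; per-machine prices are derived.

HYPERSCALERS = 7
MACHINES_EACH = 1_000_000  # used "for the sake of argument"
installed = HYPERSCALERS * MACHINES_EACH

# Hyperscaler purchases over roughly four years.
hyperscaler_buy = 10_000_000   # machines
hyperscaler_spend = 20e9       # dollars
hyperscaler_price = hyperscaler_spend / hyperscaler_buy


def wave(clouds, low_per_cloud, high_per_cloud):
    """Return the (low, high) machine count for a wave of clouds."""
    return clouds * low_per_cloud, clouds * high_per_cloud


# The next 10,000 clouds at 1,200 to 1,500 machines apiece.
next_low, next_high = wave(10_000, 1_200, 1_500)

# Implied per-machine price for that wave, assuming $30 billion buys the
# low machine count and $40 billion buys the high one (an assumption).
next_price_low = 30e9 / next_low
next_price_high = 40e9 / next_high

print(f"Hyperscaler installed base: {installed:,} machines")
print(f"Hyperscaler implied price:  ${hyperscaler_price:,.0f} per machine")
print(f"Next 10,000 clouds:         {next_low:,} to {next_high:,} machines")
print(f"Next wave implied price:    ${next_price_low:,.0f} to "
      f"${next_price_high:,.0f} per machine")
```

Under those assumptions the hyperscalers pay about $2,000 per machine and the next wave pays roughly $2,500 to $2,700, which is consistent with that wave paying somewhat more per machine because of its lower volumes.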

Google Infrastructure For Everyone Else

You begin to see why Intel wants to reduce the amount of friction in the hardware and software relating to cloudy infrastructure. This is one reason why Intel has brokered a deal between the communities behind the OpenStack cloud controller and the Kubernetes container management system to come up with a way to bring these two technologies together. (Something we have pondered time and again here at The Next Platform.)

To Intel’s way of thinking, the availability of open standards created a virtuous cycle of adoption for the PC industry and the Internet, and it wants to see the same thing happen with cloud computing.

“But there are people who may want to co-opt this,” Waxman explained as he launched an effort called the “universal resource scheduler,” which would seek to harmonize the OpenStack and Kubernetes parts of the modern platform rather than put them into contention, as they sometimes are. “There are challenges along the way, and to be candid there is a lot of fragmentation that risks having this come to fruition. And in fact, even though open source is one of the great enablers of those standards, and a great enabler of this virtuous cycle, we still see there are cases where people are holding back from open source, that they are not putting out the full features and capabilities that will enable the industry move forward. And at Intel, we are not going to sit by idly as this thing happens. We have to be involved.”

To that end, Alex Polvi, the co-founder of CoreOS, one of the commercializers of the Kubernetes container manager, and Alex Freedland, co-founder and CEO at commercial OpenStack distributor Mirantis, which has been funded partially by Intel, joined Waxman on the stage to announce their combined efforts to integrate these two related but separate controllers.

The Google way of doing this would be to lay down a container substrate and then add virtual machines when necessary, as it does with Google Compute Engine to provide enhanced security and isolation as well as to dice and slice a server up into virtual machine slices with a set amount of compute, memory, storage, and I/O capacity. This, Polvi told The Next Platform at the Intel event, is how it was going to be done with Kubernetes and OpenStack, and he provided this picture:

Whether or not the industry gets behind this idea of running OpenStack on top of Kubernetes remains to be seen. Mesosphere and VMware probably have their own ideas about this, as will Red Hat and others. We told you that this integration was coming last summer, so it is no surprise to us, and we will reiterate our position that the real fight is between Mesos – which can run Kubernetes on top of it – and Kubernetes running OpenStack.
