The next-generation, high-speed, low-latency fabric known as the Intel Omni-Path Architecture (OPA) made an informal debut in July at this year’s ISC conference in Frankfurt. Omni-Path helped power a demonstration of research breakthroughs achieved by the COSMOS Supercomputing Team at the University of Cambridge. Led by Stephen Hawking, the project is studying the cosmic microwave background to gain new insights into the origins and shape of our universe.

The Intel Omni-Path Fabric will be officially announced in the fourth quarter of 2015. It builds on Intel True-Scale Fabric InfiniBand and adds some important new features to the mix including intellectual property designed to enhance the fabric’s switching environment. The result is improved QoS, reliability, performance and scalability.

The fabric is designed to support the next generation of Intel processors: both the Intel Xeon® E5 family and many-core, highly parallel processors such as Knights Landing and Knights Hill, both members of the Xeon Phi family.

Part of our strategy is to leverage the OpenFabrics Alliance (OFA) and the OpenFabrics Enterprise Distribution (OFED). We have enhanced OFED with Omni-Path technology, including device drivers and key software for management and support. The result is compatibility and stability. Omni-Path builds on a mature software stack that is used by the various Linux distributions, and it provides users with complete compatibility between their current technology and the Omni-Path architecture.

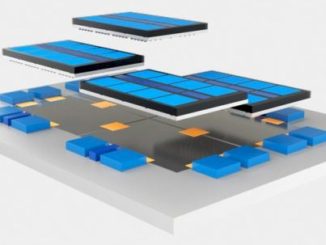

Another essential strategy is to drive the fabric closer and closer to the CPU until it becomes essentially an extension of the CPU itself. This integration will deepen over time. The initial Intel OPA release will use discrete adapters, but the fabric will soon be integrated into Intel Xeon Phi and then Xeon processors. This allows us to increase the bandwidth to each Intel Xeon Phi socket. Intel OPA delivers up to 50 GB/s of bi-directional bandwidth in order to feed those hungry processors and remove any performance bottlenecks.

In addition, we have improved the latency associated with each dual socket system. Because Omni-Path is embedded in each socket, there is no longer a need to hop across the internal bus to other sockets to access the network for internode communications. This can remove up to 0.2 microseconds of latency – a tiny amount that quickly adds up in large scale HPC systems.

Boosting MPI

And speaking of HPC, one of the features that Omni-Path has retained from Intel True Scale is an extremely high MPI message rate and low end-to-end latency at scale – and MPI is a staple of high performance computing. The fabric uses a connectionless design, which preserves low latency even at scale.

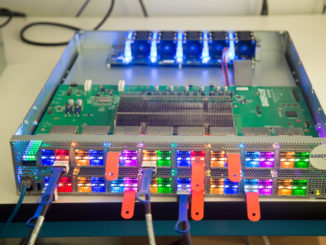

Each Omni-Path port supports up to 195 million messages/second. With the 48-port design of the Omni-Path switch infrastructure, over 9.6 billion messages/second are possible.

The port-to-port latency of 100 to 110 nanoseconds includes error detection and correction in the fabric and between the fabric and the hosts. At these speeds and scales, errors are inevitable, so correction in the fabric is critical. The trick is to keep those errors from reaching a receiving node, where they would prompt the system to launch an end-to-end retry and send low latency out the window.

One of the revolutionary features we have added to Omni-Path to control latency and support QoS is the ability to exert very fine control over the traffic flowing through the fabric, down to elements that are 65 bits in size. At this level you can make priority decisions, such as allowing small, high priority, latency sensitive MPI application traffic to take precedence over a large storage file that is already in the queue.

Building a Better Fabric

Our overall development approach for Intel OPA has been to take the best features of the True Scale InfiniBand-based fabric and combine them with new Intel intellectual property to optimize performance, scalability, reliability and QoS. Here’s a summary of some of those features:

Traffic flow optimization – For every 65 bits moving through the system, we have the opportunity to make a decision by asking, “Is this information the highest priority data that should be sent through the fabric at this time?” This is particularly critical for fabrics supporting a mix of data types – from latency sensitive MPI messages to large, less latency sensitive traffic like storage data.

With previous generations of InfiniBand, once a large storage MTU (maximum transmission unit) starts to transmit, it must complete the entire transfer before new traffic is allowed to move down the pipe. If a high priority MPI message shows up just a nanosecond after the storage data starts transmitting, it must wait for the entire MTU to finish, and latency can take a beating. Now with Omni-Path, we can preempt low priority MTUs and allow the MPI message to move through the fabric immediately. This can significantly improve application performance and shorten time to solution.
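The waiting time in the two cases can be sketched with simple arithmetic. The Python below is a rough illustration rather than Omni-Path's actual arbitration logic: the function names, the 8192-byte MTU and the 100 Gb/s link rate are assumptions chosen for the example, and the model ignores headers and encoding overhead. Only the 65-bit decision unit comes from the description above.

```python
FLIT_BITS = 65  # unit at which a priority decision can be made (from the article)


def wait_without_preemption(mtu_bytes: int, bits_already_sent: int, link_gbps: float) -> float:
    """Nanoseconds a newly arrived high priority message waits when the
    in-flight MTU has to finish transmitting first."""
    remaining_bits = mtu_bytes * 8 - bits_already_sent
    return remaining_bits / link_gbps  # bits / (Gbit/s) gives nanoseconds


def wait_with_preemption(bits_already_sent: int, link_gbps: float) -> float:
    """Nanoseconds the message waits when the sender may switch traffic
    at the next 65-bit boundary."""
    bits_into_unit = bits_already_sent % FLIT_BITS
    remaining_bits = (FLIT_BITS - bits_into_unit) % FLIT_BITS
    return remaining_bits / link_gbps


if __name__ == "__main__":
    # Hypothetical numbers: 8192-byte storage MTU, 100 Gb/s link,
    # MPI message arrives after 100 bits of the MTU have been sent.
    slow = wait_without_preemption(8192, 100, 100.0)
    fast = wait_with_preemption(100, 100.0)
    print(f"no preemption: {slow:.2f} ns, with preemption: {fast:.2f} ns")
```

Under these assumed numbers the wait drops from hundreds of nanoseconds to a fraction of one, which is the gap the preemption feature is meant to close.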

Packet integrity protection – Omni-Path uses a cyclic redundancy check (CRC) to catch single and multi-bit errors and correct them within the fabric itself. This almost guarantees that you will have no end-to-end retries, so overall latency stays low.
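To make the idea concrete, here is a minimal sketch of CRC checking on a single link hop. A CRC on its own only detects corruption; in this sketch the correction comes from resending on the same link, which is an assumption about how recovery inside the fabric avoids an end-to-end retry. The article does not describe Omni-Path's CRC polynomial or retry protocol, so `zlib.crc32` from the Python standard library stands in, and `frame`, `check` and `send_over_link` are hypothetical names.

```python
import zlib


def frame(payload: bytes) -> bytes:
    """Append a 4-byte CRC-32 to the payload (stand-in for the fabric's CRC)."""
    return payload + zlib.crc32(payload).to_bytes(4, "big")


def check(framed: bytes) -> bool:
    """Return True when the trailing CRC matches the payload."""
    payload, received = framed[:-4], int.from_bytes(framed[-4:], "big")
    return zlib.crc32(payload) == received


def send_over_link(payload: bytes, corrupt_first_n: int = 0, max_tries: int = 8):
    """Hypothetical single hop: the receiving end of the link rejects any
    frame whose CRC fails and the sender transmits it again, so a damaged
    packet never travels further. Returns (payload, attempts_used)."""
    for attempt in range(1, max_tries + 1):
        wire = bytearray(frame(payload))
        if attempt <= corrupt_first_n:
            wire[0] ^= 0x01  # simulate a single-bit error on the wire
        if check(bytes(wire)):
            return bytes(wire[:-4]), attempt
    raise RuntimeError("link failed after repeated CRC errors")


if __name__ == "__main__":
    data, attempts = send_over_link(b"mpi message", corrupt_first_n=2)
    print(data, attempts)
```

The point of the design is that the retry cost is paid on one hop only, rather than across the whole path between two nodes.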

Dynamic lane scaling – This feature, which provides a four lane implementation for each link, allows a copper or optical cable to fail gracefully. If a lane goes down, you lose some bandwidth but the link will stay up and the application will not be affected. This approach promotes reliability and application stability.
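The bandwidth cost of running on fewer lanes can be estimated with a one-line calculation. This sketch assumes traffic is spread evenly over the surviving lanes, which the article does not state. The function name and the 100 Gb/s figure in the usage lines are hypothetical.

```python
def degraded_bandwidth(full_link_gbps: float, failed_lanes: int, total_lanes: int = 4) -> float:
    """Bandwidth left on a link after some lanes fail, assuming an even
    split of traffic across lanes (an assumption of this sketch)."""
    if not 0 <= failed_lanes <= total_lanes:
        raise ValueError("failed_lanes must be between 0 and total_lanes")
    return full_link_gbps * (total_lanes - failed_lanes) / total_lanes


if __name__ == "__main__":
    # Hypothetical 100 Gb/s link that loses one of its four lanes.
    print(degraded_bandwidth(100.0, failed_lanes=1))
```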

48-port radix switch chip – Today’s InfiniBand fabrics are all designed around a 36-port chip. So, if you want to deploy, for example, a 768-node fabric, you wind up with a lot of switches – including edge switches and at least two director class switches. Intel OPA uses a 48-port radix switch chip, so the same fabric needs only one director class switch. This not only saves hops and the latency associated with those hops, but also means fewer switches, which brings lower costs, less rack space and potentially lower TCO (total cost of ownership). Instead of spending your budget on fabric, you can apply the savings to buying more compute power to run your applications faster.
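The 768-node example can be checked with a little arithmetic. The sketch below assumes a non-blocking two-tier fat tree, in which each edge chip uses half its ports for nodes and half for uplinks. It also assumes a director class switch is built internally as such a tree. Neither assumption is stated in the article, and the function names are hypothetical.

```python
def max_two_tier_endpoints(radix: int) -> int:
    """Largest non-blocking two-tier fat tree for a given chip radix:
    at most `radix` edge chips, each serving `radix // 2` nodes."""
    return radix * (radix // 2)


def fits_in_two_tiers(nodes: int, radix: int) -> bool:
    """True when `nodes` endpoints fit without adding a third switching tier."""
    return nodes <= max_two_tier_endpoints(radix)


if __name__ == "__main__":
    for radix in (36, 48):
        print(radix, max_two_tier_endpoints(radix), fits_in_two_tiers(768, radix))
```

Under these assumptions a 36-port chip tops out at 648 endpoints in two tiers, so 768 nodes need an extra layer of edge switches, while a 48-port chip reaches 1,152 endpoints and covers 768 nodes in a single two-tier structure.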

Taking the Long View

Intel is fortunate in having the resources to field not one but three development teams working concurrently and cooperatively on Omni-Path. Team 1 has already finished the development of the initial release scheduled for shipment at the end of 2015. A second team is working on the design of the next generation of the architecture. And finally, a third team composed of forward looking “pathfinders” is exploring the requirements for the third generation of Omni-Path and beyond. At some point, their findings will be turned over to the architects for the design of advanced fabrics for the exascale era.

At this point in time, the initial release of Omni-Path is on track as evidenced by the COSMOS demonstration in Frankfurt.

The demo ran on Intel’s Xeon Phi Knights Landing processors and the Intel Omni-Path Architecture. As COSMOS so amply demonstrates, Intel OPA has been designed for HPC with an emphasis on performance and scaling, backed up by high reliability and outstanding QoS. The fabric has been optimized at each layer. Users will be able to leverage existing InfiniBand stacks through our work with the OpenFabrics Alliance to provide a mature set of protocols and application compatibility.

As a result, Omni-Path will be the fabric of choice for years to come for systems ranging from an entry level of 16 nodes to extreme scale supercomputers and high performance clusters with multiples of tens of thousands of nodes.

And by the way, the simulations run on COSMOS seem to indicate the universe is flat.

Joe Yaworski is Intel Director of Fabric Marketing, HPC Group

I found this to be a very well written and interesting article. I do have a couple of questions relating to the section “48-port radix switch chip”. If you reduce the fabric components down to just one director class switch, do you not create a single point of failure? Is redundancy of switch connectivity not an important requirement for HPC environments?

Looking forward to learning something new! Thanks again for providing such a well constructed article.