When one thinks about the largest supercomputing sites on the planet and the approach to examining future technologies for next-generation systems, it might seem logical to guess they are at the bleeding edge of exploring entirely new, under-the-radar architectures and approaches that could spike the curve of Moore’s Law.

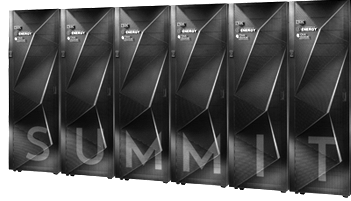

While there are plenty of bleeding edge trends on the watchlist, Jeff Vetter, who runs the Future Technologies division at Oak Ridge National Lab (the site of the top-tier Titan supercomputer and eventual home of the Summit system) says that what they are really paying attention to in his group when it comes to future systems are technologies that have been right in front of our noses all along—albeit in a more refined form.

“We have groups that are looking at quantum computing, but more from a physics angle,” Vetter tells The Next Platform. He also says that they keep an eye on trends around optical processors and new architectures. But as it stands, the trend toward increased heterogeneity in supercomputers is at the heart of their focus, along with some old memory technology that has found new life. As described with the launch of Summit, it is clear that pushing the GPU and CPU closer together, with a fast link between them, is the way of the future. But the FPGA, which has returned to mainstream attention in recent months following the Intel acquisition and large-scale use cases that highlight its potential in hyperscale, deep learning, and high performance computing, is a hot topic for exploration at Oak Ridge once again.

“Even though we’re watching a lot of what’s happening with FPGAs now, we were still doing more actual work with them ten years ago. But a lot of that stopped when GPUs came along, which took a lot of our focus.” Vetter’s group was among the first to target the applicability of GPUs for supercomputing and undertook some of the initial porting and code refinements to prove the concept before putting those lessons into practice on the Jaguar supercomputer (which later evolved into the Titan machine).

In the time since Oak Ridge’s focus shifted to GPUs, however, there have been some key developments toward making FPGAs more accessible for HPC users, particularly on the OpenCL front. “We’ve been tracking OpenCL since around 2010 and have a benchmark suite we created in it. We’re working with Altera now to start mapping some of our benchmark suite to FPGAs and that OpenCL suite will run on Titan, AMD, MIC, and now FPGAs eventually, so we will get a better sense of performance and productivity,” Vetter says. “There are still some issues in dealing with all the parameters but once we do start to find the sweet spot for mapping an OpenCL program into an FPGA it may work extremely well.”

This goes to the point Altera’s Mike Strickland made to The Next Platform in a conversation last week. Just as was the case with GPUs, it is possible to start layering higher-level frameworks on top of OpenCL to create a richer environment, especially for high performance computing. “The benefit is that you’re raising the level of abstraction with the addition of OpenCL from the compiler perspective. So for us, we have a research compiler in house that generates OpenCL, so one of the big things we’re set to do is see how we can take OpenMP 4.0 and OpenACC and start generating OpenCL to see what challenges are still there.”

All of this, coupled with the fact that Intel will be pushing FPGAs on the same die with CPUs, OpenPower’s emphasis on getting them linked into future systems, and a push for a richer development environment, means that we might start seeing far more FPGAs inside top-tier supercomputers within the next several years. But there is yet another key trend that is nothing new in computing circles, even if it is finding new life due to some unexpected changes.

Non-volatile memory has been around for years, but few expected the density and price to become so attractive, especially at supercomputing scale. Vetter points to how new uses for old technology like this, in the form of burst buffers and more unusual approaches to creating more efficient systems (as in the DAOS program that could eliminate file systems permanently), are pushing non-volatile memory to the forefront.

“About six years ago when the Department of Energy put together their report for next-generation systems, everyone’s complaint was around the amount of DRAM that was going to be in these machines. It’s turning out that DRAM and DDR are lasting way longer than people thought but at the time, no one thought that non-volatile memory would continue to improve cost and density-wise like it did. The cost is almost to the point of ridiculous—it’s nothing. But these are the reasons we’re seeing non-volatile memory on the node in so many of these big DoE procurements—and we are absolutely looking at phase change, Memristors, flash, and other things, the question now is more about what the benefit is and what the device will be. There are still a lot of research questions.”

In addition to old technologies that are getting a new lease on life, there is another element, this time more esoteric, that Vetter’s team encountered back in 2008 with their early GPU work. “Approximate computing is one of those things that has been around for a long time but is getting a second look,” Vetter explains. He says a good, basic example is using single instead of double precision for performance and efficiency gains, something that has re-emerged in the mobile space due to power constraints. Although supercomputing is often all about exact, reproducible science, there are some cases where approximation can be useful and add enormous efficiency to the system, a concept he and his team at Oak Ridge are continuing to explore.
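To make the single versus double precision trade-off concrete, here is a minimal sketch (not anything from Vetter’s group) that adds the same value many times in both precisions and compares the drift from the expected total. The helper names `to_single` and `accumulate`, the value 0.1, and the count of 100,000 are all assumptions chosen for illustration; single precision is emulated by rounding each intermediate result to 32 bits with Python’s standard `struct` module.

```python
import struct


def to_single(x: float) -> float:
    """Round a Python float (double precision) to the nearest single-precision value."""
    return struct.unpack("f", struct.pack("f", x))[0]


def accumulate(values, single: bool) -> float:
    """Sum values left to right, optionally rounding every step to single precision."""
    total = 0.0
    for v in values:
        if single:
            # Emulate a 32-bit add: round the operand, add, round the result.
            total = to_single(total + to_single(v))
        else:
            total += v
    return total


# Hypothetical workload: add 0.1 one hundred thousand times.
n = 100_000
values = [0.1] * n
expected = n / 10

double_sum = accumulate(values, single=False)
single_sum = accumulate(values, single=True)

double_error = abs(double_sum - expected)
single_error = abs(single_sum - expected)

print(f"double precision: {double_sum!r} (error {double_error:.3e})")
print(f"single precision: {single_sum!r} (error {single_error:.3e})")
```

The single-precision total drifts much further from the expected value than the double-precision one. Whether that drift is acceptable depends entirely on the application, which is the question approximate computing asks; on real hardware the payoff is that each value takes half the memory and bandwidth.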

Although many existing technologies are finding new ways into future systems, this is not to say there is nothing new or potentially useful on the horizon. It’s Vetter’s job to watch what’s happening, but at the moment, the exciting things that are happening to make memory more accessible for a growing array of processors are a big deal, one that shouldn’t be overlooked when we look ahead. It might make for a sexier story to hear that the next wave of large-scale systems will be quantum machines or powered by data stored in DNA, but the changes are incremental and powerful nonetheless. Not to mention practical.

Speaking of old things becoming new: NEC has quietly continued refining its vector supercomputers. A 1.3 PFLOPS machine just became the Earth Simulator 3 in JAMSTEC’s EMP- and lightning-proof steel supercomputing bubble.

I haven’t seen that reported in ANY western supercomputing media. I don’t think it gets much more old school than a real vector supercomputer.

They’re apparently working on their own next generation architecture named “Aurora” (weird coincidence with the Knights Hill Aurora, I guess).

Considering how efficient vector architectures can be, especially their particular architecture, which focuses on having few huge, powerful cores with lots of bandwidth, it will be interesting to see if more vector supercomputers start popping up in the landscape.

What’s also interesting is how “old fashioned” vector architectures haven’t been talked about much in recent years, but they perform so well in HPCG even if they seem weak in HPL.
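One way to see why a bandwidth-heavy design could look weak on HPL yet strong on HPCG is the roofline model, where attainable performance is the smaller of peak compute and memory bandwidth times arithmetic intensity (flops per byte moved). The sketch below is only that: every number in it is a made-up assumption, not a measurement of any NEC or commodity part, and the names `attainable_gflops`, `vector_like`, and `commodity_like` are hypothetical.

```python
def attainable_gflops(peak_gflops: float, bandwidth_gbs: float, flops_per_byte: float) -> float:
    """Roofline bound: performance is capped by compute or by memory traffic."""
    return min(peak_gflops, bandwidth_gbs * flops_per_byte)


# Hypothetical processors: (peak GFLOPS, memory bandwidth in GB/s).
machines = {
    "vector_like": (250.0, 250.0),     # fewer flops, lots of bandwidth
    "commodity_like": (500.0, 60.0),   # more flops, less bandwidth
}

# Assumed arithmetic intensities: dense linear algebra (HPL-like) reuses data
# heavily, while sparse solvers (HPCG-like) move far more bytes per flop.
workloads = {"hpl_like": 32.0, "hpcg_like": 0.25}

results = {}
for machine, (peak, bandwidth) in machines.items():
    for workload, intensity in workloads.items():
        gflops = attainable_gflops(peak, bandwidth, intensity)
        results[(machine, workload)] = gflops
        print(f"{machine:15s} {workload:10s} {gflops:7.1f} GFLOPS "
              f"({100 * gflops / peak:.0f}% of peak)")
```

Under these assumed numbers the commodity-style part wins on the compute-bound workload, while the vector-style part delivers several times more on the bandwidth-bound one and uses a far larger fraction of its peak.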

It’s also very nice to see that a few manufacturers are still making and refining chips for use in nothing but supercomputers.

That idea is much older than CPU/GPU hybrids, and probably the only way to get to USEFUL exascale computing. I believe it’s been pointed out in an article on The Next Platform that an EXAFLOP is only meaningful if the architecture can do useful work with that raw power.

Commodity processors make for pretty boring and computationally inefficient computers as well. It’s also a bit weird to hear talk of regressing to using single precision for performance gains.