Sponsored Feature A typical server in the datacenter runs thousands of software programs containing tens of millions of lines of code, all of which collectively present a huge attack surface for various kinds of malware.

And no matter how hard vendors and open-source project developers try to secure the code they produce, it’s still susceptible to vulnerabilities.

That puts the datacenter in a quandary, given that the value of modern applications derives from the fact that they can easily share data and the results of processing that data. Cyber security has been a concern since the first moment two computers were networked together. But it moved into the big league with the commercialization of the Internet and, shortly thereafter, the emergence of web applications.

It’s taken a long time to come up with computing platforms that deliver adequate security without leaving too much control in the hands of systems manufacturers. The Trusted Computing technology of the 2000s focused primarily on digital rights management (DRM). While it was too draconian for the enterprise datacenter, it was well suited to military and government institutions that need absolute control over data and applications residing on the machines attached to their networks.

TEE Adopts Different Approach

The on-prem and cloud infrastructure increasingly used by enterprises needs a different approach, which is where the Confidential Computing movement and its idea of a Trusted Execution Environment, or TEE, have stepped in.

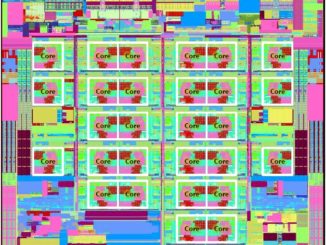

For datacenters, the foundation for Confidential Computing on Intel’s Xeon SP CPUs is its Software Guard Extensions, or SGX. The extension was initially added in the first generation “Skylake” Xeon SP processors and has gradually been added to more CPUs since. The protected memory area that SGX creates has also been increased over time, making it suitable not only for holding cryptographic keys, but also for housing entire datasets and the applications that use them.

The idea is to create enclaves – secure partitions within main system memory – where data and applications can reside and run in an encrypted state that makes them impenetrable to outsiders. Well, at least impenetrable enough to make it a real hassle to hack into the encrypted memory areas of the system, short of using cold DRAM extraction, bus and cache monitoring, or quantum cryptographic hacking techniques. In other words, it renders the prospect extremely unattractive to the perpetrator and so much less likely to occur.

The first principle of the early 21st century is that exponentially more data is being generated on a global basis. And that means more transactions with personal information are happening every day. Equally, the volume and sophistication of hacking, phishing, and ransomware are increasing in parallel. So Confidential Computing – implemented in different ways by hardware and software – needs to inhabit any device handling sensitive data.

“On Guard”

Data encryption has been around for a long time. It was first made available for data at rest on storage devices like disk and flash drives as well as data in transit as it passed through the NIC and out across the network. But data in use – literally data in the memory of a system within which it is being processed – has not, until fairly recently, been protected by encryption.

With the addition of memory encryption and enclaves, it is now possible to deliver a Confidential Computing platform with a TEE that provides both data confidentiality and data integrity. Not only does this stop unauthorized entities, whether people or applications, from viewing data while it is in use, in transit, or at rest; it also stops them from adding, removing, or altering data or code in any of those states.

It effectively allows enterprises in regulated industries (banking, insurance, finance, healthcare, and life sciences, for example) as well as government agencies (particularly defense and national security) and multi-tenant cloud service providers to better secure their environments. Importantly, Confidential Computing means that any organization running applications on the cloud can be sure that neither other users of the cloud capacity nor the cloud service providers themselves can access the data or applications residing within a memory enclave.

The Intel SGX features that deliver those guarantees are now pervasive across third generation Xeon processors and make use of the integrated cryptographic acceleration circuits on the CPUs. On earlier generations of Intel Xeon, the memory enclave had a maximum capacity of 256 MB, but with the release of the third generation of this technology, it has grown to as much as 1 TB, which can unlock data insights faster than ever.

The combination of encryption plus the memory enclave – which is isolated from other parts of the memory space where the operating system and other software reside – means that certain data and applications can be secured from disclosure or modification.

Confidential Computing Can Mean Sharing, Too

This allows for organizations that might not otherwise work together to share data and compute against it without actually having access to that data – a process called federated analytics and learning.

“Privacy preserving analytics have been revolutionary in a lot of industries,” explains Laura Martinez, director of datacenter security marketing at Intel. “Take insurance as one example. In the past, insurance companies did not have the ability to share data. That made it hard to detect double dipping, which is when bad actors create multiple claims for the same loss event at multiple insurers, which in turn makes it hard to know if you have more than one policy.”

“Until recently, there was no technology that supported this type of data exchange. With the recent advancements and adoption of enterprise blockchain and confidential computing, companies like IntellectEU have built solutions to securely and privately share and match data without compromising the customer data.”
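To make the double-dipping scenario concrete, here is a minimal Python sketch of how claims might be matched across insurers without exchanging raw customer records. It is an illustration only, not IntellectEU's solution or an SGX API: the `blind` and `find_double_dips` helpers, the shared key, and the claim fingerprint format are all hypothetical. The assumption is that each insurer submits only keyed hashes of its claims, and that the matching step would run inside an enclave where the key is provisioned.

```python
import hashlib
import hmac
from collections import defaultdict

# Hypothetical shared secret. In a confidential computing deployment it would
# be provisioned only inside the enclave, never to any single insurer.
SHARED_KEY = b"demo-key-not-for-production"


def blind(claim_fingerprint: str, key: bytes = SHARED_KEY) -> str:
    """Return a keyed hash so raw claim details never leave the insurer."""
    normalized = claim_fingerprint.strip().lower().encode("utf-8")
    return hmac.new(key, normalized, hashlib.sha256).hexdigest()


def find_double_dips(submissions):
    """Map each blinded token seen at more than one insurer to those insurers."""
    seen = defaultdict(set)
    for insurer, tokens in submissions.items():
        for token in tokens:
            seen[token].add(insurer)
    return {token: names for token, names in seen.items() if len(names) > 1}


# Each insurer blinds its own (made-up) loss-event fingerprints locally.
insurer_a = [
    blind("VIN123|2023-04-01|rear collision"),
    blind("VIN777|2023-05-09|theft"),
]
insurer_b = [
    blind("vin123|2023-04-01|rear collision "),
    blind("VIN555|2023-02-14|fire"),
]

matches = find_double_dips({"insurer_a": insurer_a, "insurer_b": insurer_b})
```

In this sketch only the loss event filed with both insurers shows up in `matches`, and neither insurer sees the other's unmatched claims. A real deployment would also need agreed rules for building the fingerprint, which is the hard part in practice.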

Fraud detection is a good example of how analytics and machine learning – from within shared secure enclaves – can deliver benefits that were not possible before Intel SGX. Healthcare is another. HIPAA and other regulations are strict in their controls of patient data, but if you want an AI algorithm to work properly, you need a tremendous amount of data. And, if you want to train an AI application to read brain scans, you have to figure out a way to share patient data without violating patient rights.

Enter the memory enclave and Intel SGX. The University of Pennsylvania, working with Intel and funded by the US National Institutes of Health, has been able to put together the brain scans of dozens of different healthcare institutions to run AI algorithms against a much larger dataset than any individual institution could run against alone.
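The general idea behind this kind of collaboration can be sketched with federated averaging, in which each institution trains on its own data and shares only model parameters. The Python below is a toy illustration under stated assumptions, not the University of Pennsylvania's actual pipeline: the one-parameter model, the `local_update` and `federated_average` helpers, and the datasets are all hypothetical, and in a confidential computing setup the aggregation step would be the part that runs inside an enclave.

```python
def local_update(weight, data, lr=0.1, epochs=20):
    """Run gradient descent on one site's private data for the model y = w * x."""
    for _ in range(epochs):
        # Gradient of the mean squared error with respect to w.
        grad = sum(2 * (weight * x - y) * x for x, y in data) / len(data)
        weight -= lr * grad
    return weight


def federated_average(global_weight, sites, rounds=10):
    """Average locally trained weights, weighted by each site's sample count."""
    total = sum(len(data) for data in sites)
    for _ in range(rounds):
        # Each site starts from the current global weight and trains locally.
        updates = [(local_update(global_weight, data), len(data)) for data in sites]
        # Only the trained weights are combined; raw records stay on site.
        global_weight = sum(w * n for w, n in updates) / total
    return global_weight


# Made-up private datasets that all follow y = 3x.
site_a = [(1.0, 3.0), (2.0, 6.0)]
site_b = [(0.5, 1.5), (1.5, 4.5), (2.5, 7.5)]

model = federated_average(0.0, [site_a, site_b])
```

Here `model` converges close to 3.0 even though neither site's records are ever pooled. Real medical imaging models have millions of parameters rather than one, but the division of labor is the same.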

What these use cases demonstrate is that often Confidential Computing is more about sharing data and applications than it is about restricting use of data and applications.

Sponsored by Intel.