Real growth in and recognition of the importance of high performance computing has been a long time coming. Perhaps since Seymour Cray launched the Cray-1 back in 1975. Perhaps even earlier than that. No matter where you draw the line, it has been difficult to watch companies struggle to bring advanced systems to market and not really make any money. Vendors usually did not have much net income left after they did the hard work of designing and building supercomputers.

That could be changing as the HPC market is expanding with new types of work, adding AI and data analytics to traditional simulation and modeling.

The profile of HPC simulation and modeling has no doubt been helped by the coronavirus pandemic, since supercomputers played a major role in the speedy creation of not one, but many vaccines and several treatments for the COVID-19 disease that is caused by the SARS-CoV-2 virus. And governments around the world shelled out extra money to upgrade systems or buy new machinery to do genomics and other research to fight the virus. Which was a plus. But the recession the pandemic caused was a definite minus, although the sale of a single pre-exascale system helped mask a tricky 2020 for HPC.

So say the box counters at Hyperion Research, who have been tracking the HPC space for more than 25 years and are expanding out into data analytics, quantum computing, and other aspects of high performance computing. For the purposes of this story, we are sticking to the traditional HPC simulation and modeling market, though.

“The fundamentals of the market were actually down 6 percent to 7 percent,” explained Earl Joseph, chief executive officer at Hyperion, at the recent HPC User Forum, which was hosted online instead of some place interesting as it usually is. “But the Fugaku system, which was accepted in December, was over $1 billion of the $13.7 billion in on-prem servers.” That’s a bit higher than the $910 million that was given as

Sales of supercomputers – which means systems that cost $500,000 or more in the categorization that Hyperion uses – were helped significantly by that acceptance of the Fugaku machine at the RIKEN lab in Japan. Hyperion reckons that supercomputer sales were up 13.7 percent to $5.94 billion, which helped to offset declines in sales of divisional and workgroup HPC systems.

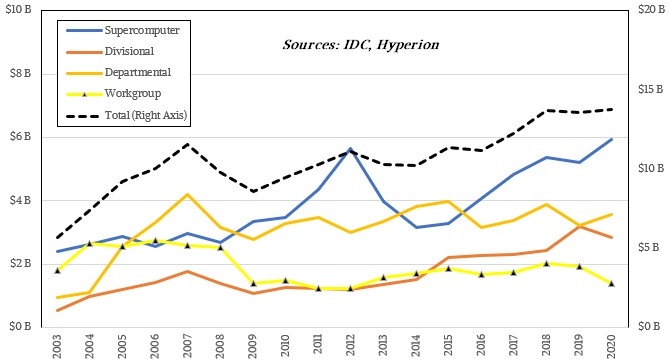

Here is how Hyperion (and IDC before Hyperion was spun out of that market researcher) breaks up the HPC server market, and the server tail definitely wags the HPC dog since it is the dominant part of spending. (HPC folks are focused very much on compute, and do the minimal amount they can get away with on networking, storage, software, and services.) Workgroup clusters cost under $100,000; departmental clusters cost between $100,000 and $250,000; divisional machines cost between $250,000 and $500,000; and supercomputers, as we said, cost more than $500,000. Sometimes a lot more. Like 100X to 200X more than that entry point for the supercomputer level here at the cusp between the pre-exascale and exascale eras.
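To make the price bands concrete, here is a minimal Python sketch of the categorization described above. The function name `hpc_segment` is ours, not Hyperion's, and the handling of prices that land exactly on a boundary is an assumption: the text only pins down that supercomputers start at $500,000.

```python
def hpc_segment(price_usd: float) -> str:
    """Map a system price in US dollars to the price band described in the text.

    Boundary handling is an assumption: a price that sits exactly on a
    threshold is placed in the higher band.
    """
    if price_usd < 0:
        raise ValueError("price must be non-negative")
    if price_usd < 100_000:
        return "workgroup"
    if price_usd < 250_000:
        return "departmental"
    if price_usd < 500_000:
        return "divisional"
    return "supercomputer"


# 100X the supercomputer entry point, the low end of the range cited above.
big_machine = 100 * 500_000
print(big_machine, hpc_segment(big_machine))
```

A machine at 100X to 200X the entry point works out to $50 million to $100 million, which is still just "supercomputer" in this scheme. The top band is open-ended.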

In 2020, sales of divisional machines were off 10.9 percent to $2.86 billion, according to Hyperion, and sales of departmental machines rose by 10.2 percent to $3.57 billion. Workgroup HPC systems, which used to counterbalance the high end of HPC quite nicely, continue to shrink, falling 28.3 percent in 2020, to $1.38 billion. The public clouds, which have respectable HPC configurations these days, are probably the biggest threat to the sale of these smaller HPC systems.
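As a rough sanity check on these figures, the sketch below backs out the implied 2019 revenue for each segment from the 2020 revenue and growth rate quoted above, then compares the totals with and without Fugaku. The inputs are the rounded numbers from the text, and the Fugaku value of exactly $1 billion is an assumption (Hyperion only said "over $1 billion"), so treat the results as approximate.

```python
# 2020 revenue (billions of dollars) and year-on-year change, as quoted in the text.
segments_2020 = {
    "supercomputer": (5.94, 0.137),
    "divisional": (2.86, -0.109),
    "departmental": (3.57, 0.102),
    "workgroup": (1.38, -0.283),
}

# Assumption: Fugaku counted for exactly $1 billion; the text says "over $1 billion".
FUGAKU_ASSUMED = 1.0


def implied_prior_year(revenue: float, growth: float) -> float:
    """Back out last year's revenue from this year's revenue and growth rate."""
    return revenue / (1.0 + growth)


total_2020 = sum(rev for rev, _ in segments_2020.values())
total_2019 = sum(implied_prior_year(rev, g) for rev, g in segments_2020.values())

overall_growth = total_2020 / total_2019 - 1.0
ex_fugaku_growth = (total_2020 - FUGAKU_ASSUMED) / total_2019 - 1.0

print(f"2020 total: ${total_2020:.2f}B, implied 2019 total: ${total_2019:.2f}B")
print(f"growth with Fugaku: {overall_growth:+.1%}, without: {ex_fugaku_growth:+.1%}")
```

On these rounded inputs the four segments sum to the roughly $13.7 billion Hyperion cites for on-prem servers. Taking the assumed $1 billion for Fugaku out turns a small gain into a decline of a little over 6 percent, which is in line with the 6 percent to 7 percent drop in the fundamentals that Joseph described.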

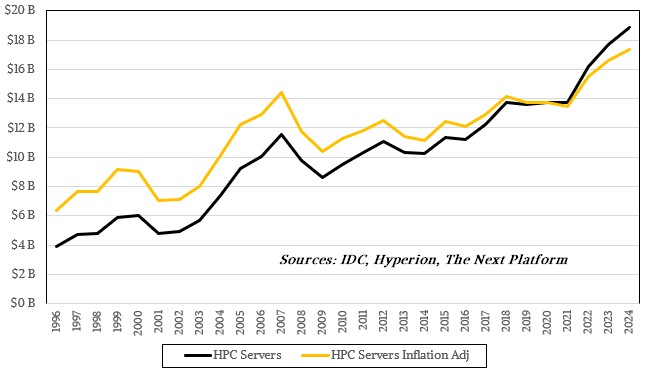

IDC and Hyperion after it have always been generous in actually giving out datasets and talking about the HPC market six or seven times a year at the HPC User Forum and at the annual ISC and SC supercomputing conferences. All of the data given out at the HPC User Forum is available online back to 2008, which includes historical data that often reaches back to 1996. We like to take a very long view on markets, as you all well know, so we sat down and strung all of this historical data and in some cases revenue forecasts together to make some pretty and illustrative charts.

First, let’s talk about sales by HPC server type and how that has changed over time. Take a look:

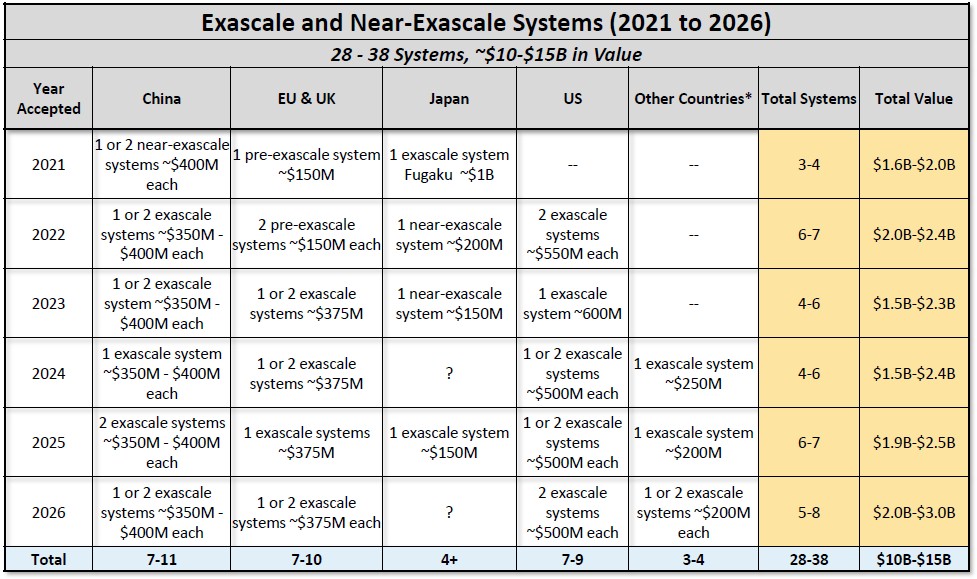

The decapetascale era set a pretty high bar for supercomputer sales, as you can see, and it is only now, as we are in the pre-exascale era and heading into the exascale era sometime this year, that the market looks set to clear it. China could launch a real exascale machine in June at ISC21, and the “Frontier” system at Oak Ridge National Laboratory is supposed to be ready by the Top 500 list in November in time for SC21 – based on custom CPUs and GPUs from AMD and the Slingshot interconnect from Hewlett Packard Enterprise. There is a lot of money on the table over the next couple of years for exascale machines and some that are going to kiss that peak 64-bit floating point performance level, and we should be seeing a boom like we have never seen before in HPC because of the high cost of these machines. Here is the lineup by country for these superdupercomputers:

There is a high-level hum of money coming in for machines that will be somewhere between $2 billion and $3 billion a year at the upper echelon of supercomputing. Which is great. But if you subtract that out of the market, the overall HPC market is expected to grow underneath it, including the rest of supercomputers (again, machines that cost more than $500,000) but probably not workgroup machines, which we think will be largely killed off by the cloud.

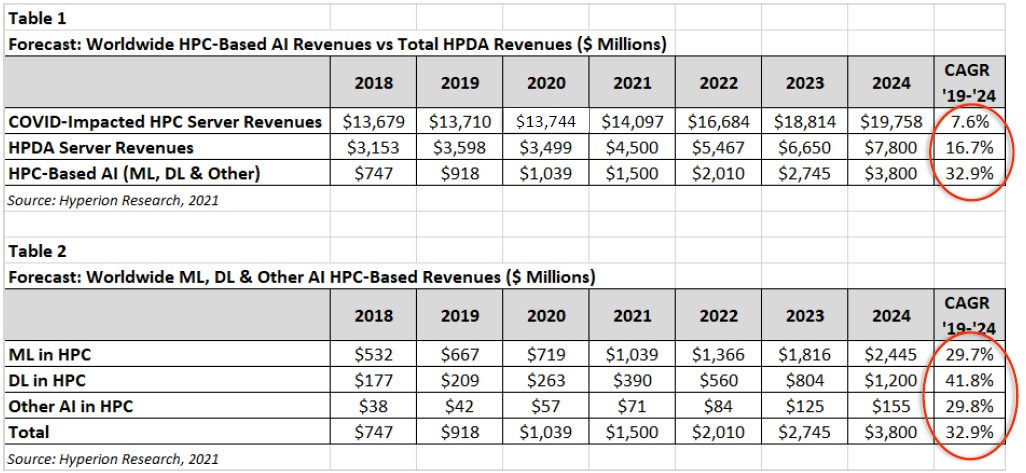

Here is the latest HPC server forecast from Hyperion, which takes into account the effects of the coronavirus pandemic, which is still causing a slowdown in HPC sales in 2021:

The forecast was not made public by the price and performance bands, which is why we didn’t add it to the first chart we made above from the Hyperion data.

Don’t think that all of that exascale action in the next five and a half years will necessarily mean that the HPC business will be profitable. History suggests that these big boxes break even at best for the vendors who are the prime contractors on them, and any profits and a lot more revenue have to be made on peddling smaller versions of the systems to the wider HPC community in government, academia, and other research institutions. It looks like the prognostications of the analysts at Hyperion are assuming this will be the case. Which is good news. But clearly sales of systems for data analytics (what Hyperion calls HPDA) and for AI (which Hyperion calls HPC-Based AI) are growing faster. To the way we think about this, the definition of HPC has been broadened by new applications, and clearly machines can be built that do all three workloads very nicely. And in fact, that will be a goal for many HPC centers. If you think of it that way, then the HPC server market will grow from $17.6 billion in 2018 to $31.4 billion by 2024. That is a near doubling of the market in seven years.

This is pretty impressive, on the face of it.
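For a sense of what that growth means per year, here is a small sketch that turns the two endpoints into a growth multiple and a compound annual growth rate. It uses the standard CAGR formula and assumes six annual growth steps between 2018 and 2024 (seven calendar years if you count both endpoints). The helper name `cagr` is ours.

```python
def cagr(start_value: float, end_value: float, periods: int) -> float:
    """Compound annual growth rate over the given number of yearly steps."""
    return (end_value / start_value) ** (1.0 / periods) - 1.0


start_2018 = 17.6  # billions of dollars, from the text
end_2024 = 31.4    # billions of dollars, from the text

multiple = end_2024 / start_2018
rate = cagr(start_2018, end_2024, 2024 - 2018)

print(f"growth multiple: {multiple:.2f}x")
print(f"compound annual growth rate: {rate:.1%}")
```

The multiple comes out at about 1.78X, hence "near doubling," and the implied compound rate is roughly 10 percent a year.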

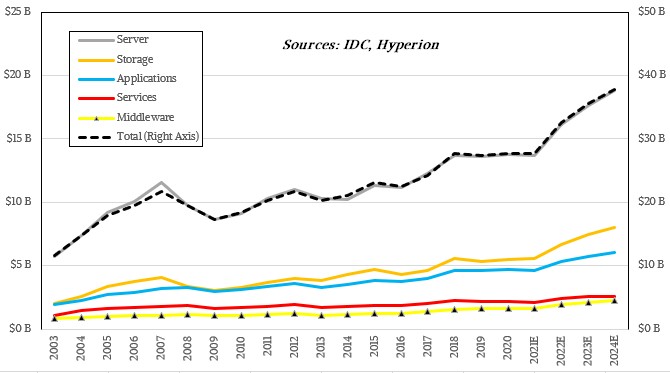

Hyperion doesn’t just track the HPC servers sold each year, but also figures out how much storage, applications, middleware, and services are sold into the HPC market and added to these machines. It does not have a way of reckoning the value of the homegrown applications and middleware in use on HPC systems, or open source software that is commonly used, but it would be interesting to come up with such values and add them into the mix. (The servers include the networking costs in the way that Hyperion counts the money.)

Here is a distribution of sales of all of the components in an HPC system from 2003 out through the forecast into 2024:

The correlation between HPC server sales and total HPC system sales is very, very tight. Like crazily so. Compute and networking represent around 50 percent of the system costs averaged across all HPC centers, apparently. It wiggles here and there, but not by much. The storage, application, middleware, and services lines all wiggle up and down, more or less in synch. Perhaps the HPC market is more predictable than other markets, and given the relative regularity of the timing and budgets for system upgrades in the 3,000 or so HPC centers of the world, this may make some sense. It’s just very odd to see such a strong correlation.

The following is our favorite chart derived from the Hyperion data. This one shows HPC server sales from 1996 through 2020 and then the forecast out to 2024:

The black line shows HPC server revenues as reported, and the orange line is the inflation adjustment we did to take into account the time value of money, which you really need to do over such long time horizons. A dollar in 1996 bought a hell of a lot more than it will in 2024. If you do the math in constant 2020 dollars, inflation adjusting raises the line the further you go back to the left of 2020 and pushes it down a little to the right of 2020. But even with the inflation adjustment, that is still a fairly healthy line. And it shows how good the beginning of the terascale era was in 2003 and into the pre-petascale era up to the beginning of the Great Recession. It was then that the US Defense Advanced Research Projects Agency started to pull back on massive HPC investments, setting the whole industry back at least three years on the march to exascale, and maybe as much as four or five years. We finally had HPC server sales reach levels seen in 2006 and 2007 as we entered the pre-exascale era in 2018, and it has been fairly flat since then. But as you can see, even with inflation adjustment, we are going to set new highs and see new heights in the next couple of years.
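The mechanics of a constant-dollar adjustment can be sketched in a few lines. This is not the calculation behind the chart: a real adjustment would use a published price index for each year, whereas the sketch assumes a flat, purely illustrative 2 percent annual inflation rate, and the function name `to_constant_dollars` is ours.

```python
def to_constant_dollars(
    nominal: float,
    year: int,
    base_year: int = 2020,
    annual_inflation: float = 0.02,  # assumption: flat illustrative rate, not real index data
) -> float:
    """Restate a nominal amount from `year` in `base_year` dollars."""
    return nominal * (1.0 + annual_inflation) ** (base_year - year)


for year in (1996, 2020, 2024):
    print(year, round(to_constant_dollars(1.0, year), 3))
```

With any positive inflation rate, a dollar from before 2020 is scaled up and a dollar from after 2020 is scaled down, which is why the adjusted line sits above the reported line to the left of 2020 and slightly below it to the right.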

Ah, but will there be any profits? Hewlett Packard Enterprise has been investing like it believes in that, and so have Nvidia, Fujitsu, Dell, and others. We are not sure what IBM’s plan is, to be quite honest, but Power10 will make a very interesting big memory HPC system if someone wants to do something very different.

We are excited at all of the prospects. This is going to be fun.
