It has been a long time – more than 15 years – since AMD was in a position to pressure larger rival Intel in supplying processors to server OEMs and ODMs for the datacenter. In 2003, Intel was trying to push enterprises away from the 32-bit X86 chip architecture and toward its 64-bit Itanium environment when AMD surprised it with the release of its first Opteron processors. Opteron offered an X86 architecture that supported both 32-bit and 64-bit computing with backward compatibility, and it arrived with the crucial backing of IBM.

However, subsequent generations of Opteron disappointed because of performance issues or product delays, and the momentum AMD had built with the initial release waned. That, combined with Intel’s well-funded innovation and manufacturing engine, made AMD almost an afterthought in the datacenter as Intel solidified its dominance, grabbing more than 90 percent of the global market.

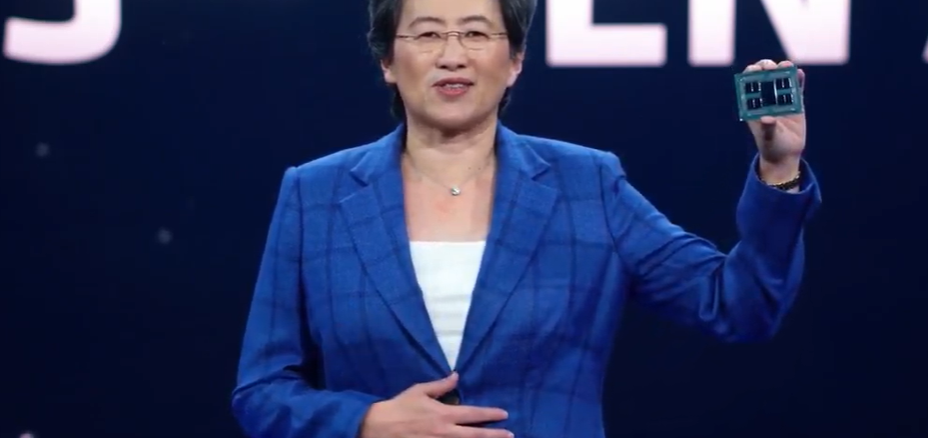

After years of stagnant sales and executive changes, Lisa Su took over as president and CEO in 2014, and AMD soon placed an expensive and risky bet on a new chip microarchitecture. It had to address expanding workloads not only in the datacenter but also in the cloud and, increasingly, at the edge, while also handling emerging workloads like artificial intelligence (AI) and advanced data analytics and market segments like high-performance computing (HPC).

The company also created a process that ensured manufacturing would stay on track, with one design team working on one generation while another team works on the following one. The Zen architecture, first rolled out in 2017, has become the foundation of AMD’s Ryzen client chips and Epyc server processors. The third generation of Zen has already found its way into the company’s latest Ryzen processors, and now Su and other AMD executives have launched the latest Epyc chips based on Zen 3 cores.

We at The Next Platform have taken a look at the feeds and speeds of the “Milan” Epyc processors and will be doing a deep dive into the Zen 3 and Epyc 7003 architecture as well as into the competitive dynamics in the server space. At the Milan virtual launch event, Su and others touted improvements in the Zen architecture, the latest security capabilities, benchmark numbers, vendor adoption and the roadmap to show that Epyc is a viable alternative to the Xeon server processors from Intel. Intel has stumbled in recent years in moving its manufacturing from 10 nanometers to 7 nanometers, opening up an opportunity for AMD to gain some ground.

“Our customers always want more,” Su said during the event. “They want more performance, greater scalability and new layers of security. We thought about all of this when creating our next generation server processors. It’s simply the best server processor available. We deliver more performance, best-in-class scalability and differentiated security. It actually increases our lead in overall performance-per-socket, enabling maximum compute density. It also now delivers per-core performance leadership.”

To be sure, AMD has a long way to go before it can seriously challenge Intel. According to Mercury Research, AMD held 7.1 percent of the worldwide server chip market in the fourth quarter of 2020, compared with Intel’s 92.9 percent. However, it is making its mark: in Q4 2019, Intel had 95.5 percent of the market to AMD’s 4.5 percent. Arm, which designs chips and then licenses the designs to manufacturing partners, has for years been agitating to become a player in the datacenter as well, with some success, and could be helped if Nvidia is allowed to buy it for $40 billion. The announcement of the Milan chips comes only weeks before Intel is expected to announce its “Ice Lake” Xeon SPs, the latest generation of its server processors.
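To put the Mercury Research figures in perspective, the short sketch below computes the year-over-year change in both percentage points and relative terms. It uses only the share numbers quoted above. The dictionary layout and the `share_change` helper are our own illustration, not anything Mercury publishes.

```python
# Server chip market share (percent) per Mercury Research, as quoted in the article.
share = {
    "Q4 2019": {"AMD": 4.5, "Intel": 95.5},
    "Q4 2020": {"AMD": 7.1, "Intel": 92.9},
}

def share_change(vendor: str, start: str, end: str) -> tuple[float, float]:
    """Return (change in percentage points, relative change in percent)."""
    before = share[start][vendor]
    after = share[end][vendor]
    points = after - before
    relative = points / before * 100
    return round(points, 1), round(relative, 1)

print(share_change("AMD", "Q4 2019", "Q4 2020"))    # (2.6, 57.8)
print(share_change("Intel", "Q4 2019", "Q4 2020"))  # (-2.6, -2.7)
```

The same 2.6-point swing is a gain of nearly 58 percent in AMD’s share but a loss of less than 3 percent in Intel’s, which is why both “a long way to go” and “making its mark” hold at once.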

In the fourth quarter, revenue in AMD’s Enterprise, Embedded and Semi-Custom business segment grew 176 percent year-over-year, to $1.28 billion, thanks to Epyc and semi-custom sales.
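A 176 percent increase means the current figure is 2.76 times the year-ago figure. That lets us back out the approximate year-ago revenue. The helper name is hypothetical, and because $1.28 billion is itself rounded, the result is only an estimate.

```python
def prior_value(current: float, growth_pct: float) -> float:
    """Back out the year-ago value from a current value and its YoY growth rate."""
    return current / (1 + growth_pct / 100)

q4_2020 = 1.28e9  # Enterprise, Embedded and Semi-Custom revenue, Q4 2020 (USD, rounded)
q4_2019 = prior_value(q4_2020, 176)
print(f"Implied Q4 2019 revenue: ${q4_2019 / 1e6:.0f} million")  # about $464 million
```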

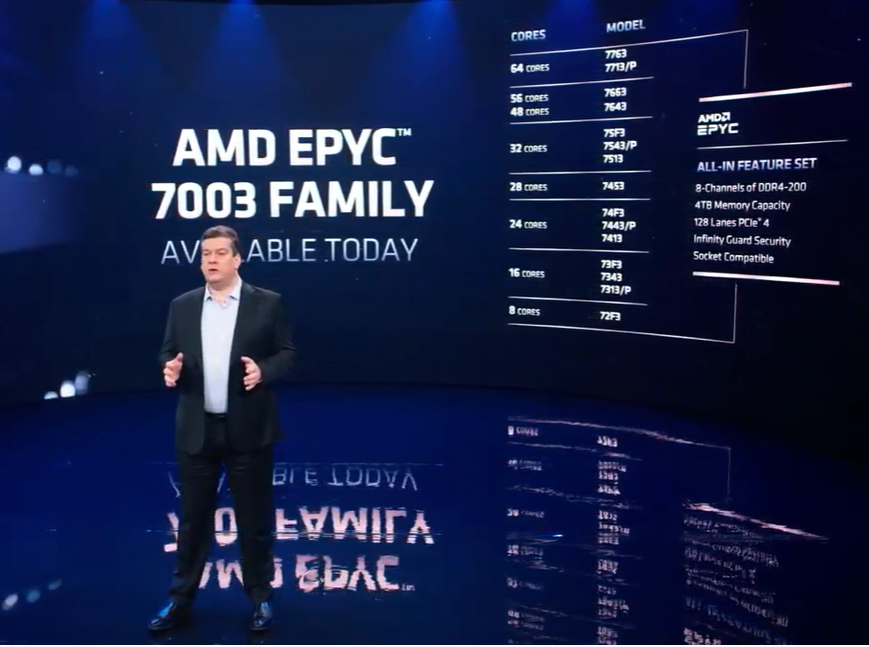

The Milan family follows the second-generation Epyc “Rome” processors released in 2019. The lineup of 7-nanometer processors includes 19 SKUs ranging from the eight-core 72F3 to the 64-core 7763, 7713, and 7713P, according to Forrest Norrod, senior vice president and general manager of AMD’s Datacenter and Embedded Solutions Group.

“All of these processors, regardless of their core count, include the complete set of Epyc features: eight channels of high-performance memory supporting up to 4 TB of DRAM per CPU, 128 or more lanes of PCI-Express 4.0 I/O to connect to networking, storage and accelerators without the cost or performance impacts of external relationships,” Norrod said.
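Norrod’s memory figures imply a per-channel capacity and a theoretical peak bandwidth per socket. The sketch below works through both. It assumes DDR4-3200 DIMMs and a 64-bit (8-byte) data path per channel, excluding ECC bits, and treats 1 TB as 1,024 GB. Sustained bandwidth in real workloads will be lower than this peak.

```python
CHANNELS = 8            # memory channels per socket (from the article)
MAX_DRAM_TB = 4         # maximum DRAM per CPU (from the article)
DATA_RATE_MT_S = 3200   # assumption: DDR4-3200, in megatransfers per second
BUS_WIDTH_BYTES = 8     # assumption: 64-bit data path per channel, excluding ECC

per_channel_gb = MAX_DRAM_TB * 1024 / CHANNELS
peak_bw_gb_s = CHANNELS * DATA_RATE_MT_S * BUS_WIDTH_BYTES / 1000

print(f"{per_channel_gb:.0f} GB per channel")       # 512 GB per channel
print(f"{peak_bw_gb_s:.1f} GB/s theoretical peak")  # 204.8 GB/s theoretical peak
```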

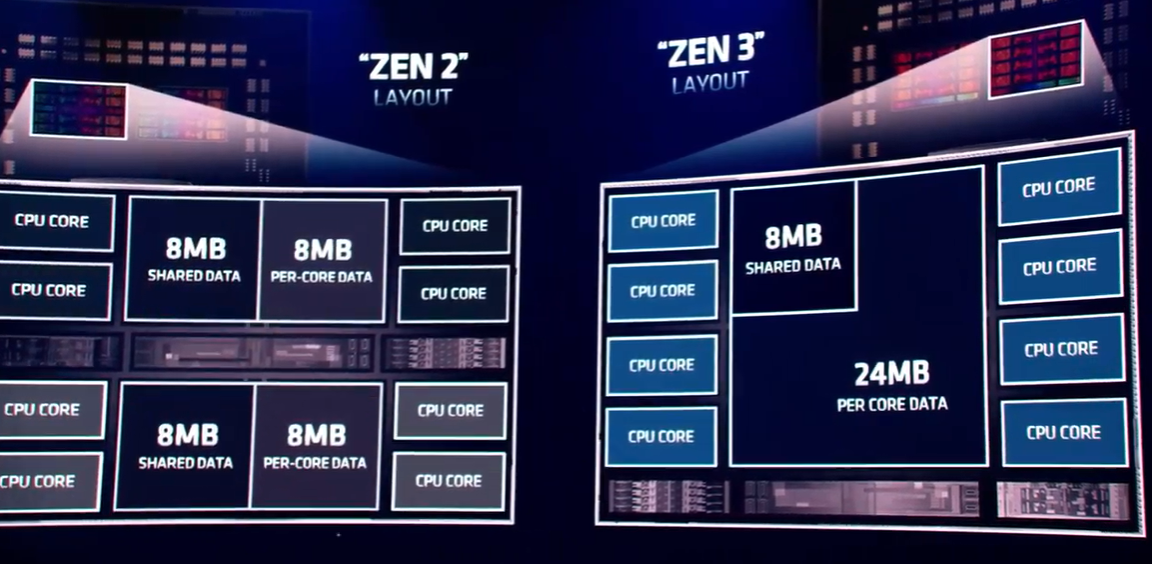

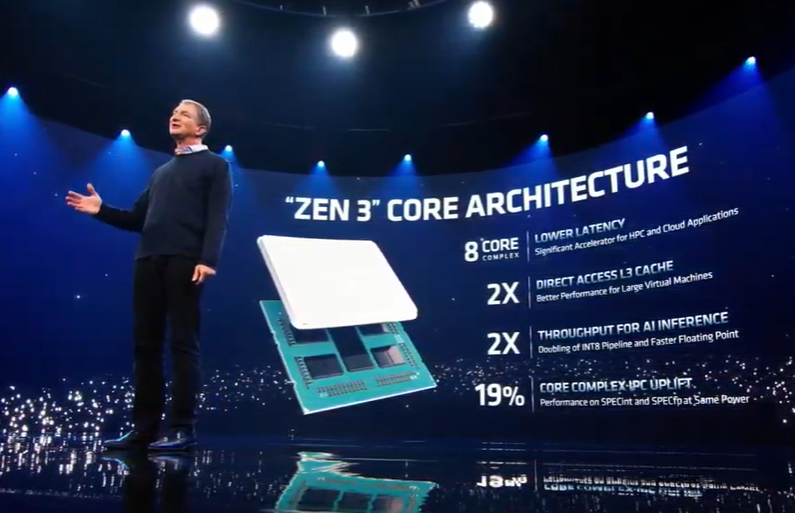

The chips lean heavily on the Zen architecture, which was reworked after the second-generation Rome Epyc processors. The third-generation Zen architecture delivers an average 19 percent increase in instructions per clock (IPC), due in part to a change in how cores are grouped into complexes between the generations. Zen 2 organized each core die into two tightly integrated four-core complexes, said Mark Papermaster, AMD’s executive vice president and CTO. Zen 3 offers a single unified complex of eight cores on the die, with each core having direct access to the full 32 MB of L3 cache.

“Every core can now communicate directly to the cache without traversing across the die, reducing latency,” Papermaster said during the presentation. “The direct access to a cache flow of this size is good for applications that make frequent use of the memory subsystem. Many server workloads take advantage of multiple cores [and] have multiple copies of a workload running on a core die. For instance, database workloads run in this mode with a fixed size shared data, with the double 32 MB shared L3 in Zen3. The effective cache capacity of the data-per-core increases significantly, directly improving cache hit rates and as a result, performance.”
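Papermaster’s point about shared data can be illustrated with a deliberately simplified model. In this model, each L3 domain must hold one full copy of a shared working set, and whatever is left is split evenly among that domain’s cores. The model, the helper name and the 8 MB shared working set are all assumptions for illustration. Only the 16 MB per four-core Zen 2 complex and 32 MB per eight-core Zen 3 complex reflect the actual designs.

```python
def private_cache_per_core_mb(l3_mb: float, cores: int, shared_mb: float) -> float:
    """L3 left per core for private data after one copy of shared data is cached.

    Simplified model (an assumption): every L3 domain holds one full copy of
    the shared working set, and the remainder is split evenly across its cores.
    """
    if shared_mb > l3_mb:
        raise ValueError("shared working set does not fit in this L3 domain")
    return (l3_mb - shared_mb) / cores

SHARED_MB = 8  # hypothetical shared working set, e.g. hot database pages

# Zen 2 core die: two 4-core complexes, each with its own 16 MB L3
zen2 = private_cache_per_core_mb(l3_mb=16, cores=4, shared_mb=SHARED_MB)
# Zen 3 core die: one 8-core complex sharing a unified 32 MB L3
zen3 = private_cache_per_core_mb(l3_mb=32, cores=8, shared_mb=SHARED_MB)

print(f"Zen 2: {zen2:.1f} MB/core, Zen 3: {zen3:.1f} MB/core")  # Zen 2: 2.0 MB/core, Zen 3: 3.0 MB/core
```

With no shared data, both layouts give each core the same 4 MB. Under this model, the gain comes entirely from storing the shared data once per die instead of once per complex, and from each core being able to reach 32 MB rather than 16 MB.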

Security was also a focus. The Milan processors offer Secure Encrypted Virtualization-Secure Nested Paging (SEV-SNP), which provides stronger memory protection against malicious hypervisor-based attacks. The feature creates an isolated execution environment for virtual machines.
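Administrators who want to see which of these features a Linux host reports can look at the CPU flags in `/proc/cpuinfo`. The sketch below assumes the flag names `sev`, `sev_es` and `sev_snp` used by recent Linux kernels. Older kernels may omit some of them even on capable hardware. A listed flag shows what the kernel detected, not that SEV-SNP guests are actually configured.

```python
# Assumed Linux /proc/cpuinfo flag names for AMD memory-encryption features
# (recent kernels; older kernels may not report sev_es or sev_snp).
SEV_FLAGS = {"sev": "SEV", "sev_es": "SEV-ES", "sev_snp": "SEV-SNP"}

def sev_features(cpuinfo_text: str) -> list[str]:
    """Return the SEV features listed on the first 'flags' line of cpuinfo text."""
    for line in cpuinfo_text.splitlines():
        if line.startswith("flags"):
            flags = set(line.split(":", 1)[1].split())
            return [name for flag, name in SEV_FLAGS.items() if flag in flags]
    return []

if __name__ == "__main__":
    try:
        with open("/proc/cpuinfo") as f:
            print(sev_features(f.read()))
    except FileNotFoundError:
        print("no /proc/cpuinfo found (not a Linux system?)")
```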

AMD is emphasizing three target areas: cloud, HPC and the enterprise. Su said there are currently more than 200 cloud instances based on first- and second-generation Epyc processors and that Milan will deliver twice the integer performance of comparable Intel processors, as well as tight security and high density. With announcements of new instances from the likes of Google Cloud, Microsoft Azure, and Tencent, and with other plans in development, the CEO said that number could double to more than 400 instances by the end of the year.

Hitting that milestone would be important to AMD given the sizeable influence hyperscalers and major cloud providers have in the datacenter hardware market. In the fourth quarter, enterprise spending on cloud infrastructure services grew 35 percent year-over-year, to more than $37 billion, according to Synergy Research Group.

In HPC, the Epyc 7003 chips deliver more I/O and memory throughput than previous generations and twice the workload performance of Intel’s processors, according to the company. That includes 70 percent higher floating-point performance from the 32-core 75F3, Norrod said. AMD chips are also being used in pre-exascale systems now under construction and will power “Frontier,” the supercomputer at Oak Ridge National Laboratory built from Cray systems from HPE. Frontier will be the first of the US exascale machines when it goes online this year with 1.5 exaflops of double-precision floating point processing power.

In the enterprise, organizations are “constantly under pressure to meet the ever increasing workload demands,” said Dan McNamara, senior vice president and general manager of AMD’s server business. “They’re being asked to not just keep the infrastructure running but to improve the efficiency of the operation. On top of all these challenges, there are ever increasing security threats that need to be defended against time. With this environment in mind, we developed third-gen Epyc to help enterprises overcome these challenges. Epyc translates to lower total cost of ownership, helping our customers do more with less.”

That includes partnering with vendors like HPE, Nutanix, Microsoft and VMware in areas ranging from hyperconverged infrastructure to hybrid cloud and multicloud environments. It also means focusing on databases and analytics, including as much as 60 percent higher throughput on Hadoop workloads than comparable Intel processors, McNamara said.

AMD lists 11 OEMs, software makers and cloud providers that say they will use the Epyc 7003 processors, and it says there will be 100 new server platforms leveraging Milan by the end of the year. During the virtual event, Microsoft Azure announced new virtual machine offerings, including HBv3 VMs for HPC, which are available now, and Confidential Computing VMs that leverage AMD’s security features, which are in private preview.

Google Cloud unveiled new compute-optimized C2D VMs and plans to expand its general-purpose N2D VMs later this year. The cloud provider’s Cloud Confidential Computing capabilities will be available on both. Tencent Cloud unveiled the new SA3 server instance.

HPE will double the number of its Epyc-powered servers, using the chips in ProLiant and Apollo systems and Cray EX supercomputers. Dell announced the new PowerEdge XE8545 and plans to expand its use of Milan chips across its server portfolio. Lenovo is putting third-generation Epyc chips in ten ThinkSystem servers and ThinkAgile HCI solutions. Cisco rolled out new UCS rack server models with the new chips aimed at hybrid cloud workloads.

VMware’s vSphere 7 cloud platform is optimized to leverage the new Epyc processors’ virtualization capabilities and supports the new security features, including SEV-ES for both VM-based and containerized applications. VMware last year unveiled vSphere integrated with its Tanzu container technology.

“The company also created a process that ensured manufacturing would stay on track, with one design team working on one generation while another team works on the following one. ”

Doesn’t Intel do the same? Their roadmaps indicate multiple generations of launches. They’ve also talked about parallel development of 10nm and 7nm processes, for example, and many years of parallel development on technologies like 3D fabrication and silicon photonics.

This swing does look like a near miss to me – significant increase in power and very little if any increase in price/performance when lifetime electricity cost is included (with a few exceptions – like 7443P, dual 7453 and dual 7763). Am I missing something?
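The commenter’s comparison folds lifetime electricity cost into price/performance. A minimal way to run that kind of calculation is sketched below. The `Cpu` type, the helper names and every number in the usage lines are hypothetical placeholders, not AMD prices, measured power or benchmark results. The model also ignores the rest of the server’s power draw, cooling beyond a simple PUE multiplier, and leap years.

```python
from dataclasses import dataclass

@dataclass
class Cpu:
    name: str
    price_usd: float  # purchase price
    power_w: float    # assumed average power draw in watts (e.g. TDP)
    perf: float       # relative throughput score from any consistent benchmark

def lifetime_cost(cpu: Cpu, years: float, usd_per_kwh: float, pue: float = 1.0) -> float:
    """Purchase price plus electricity over the service life (365-day years)."""
    hours = years * 365 * 24
    energy_kwh = cpu.power_w * hours / 1000 * pue  # PUE scales for facility overhead
    return cpu.price_usd + energy_kwh * usd_per_kwh

def perf_per_dollar(cpu: Cpu, years: float, usd_per_kwh: float, pue: float = 1.0) -> float:
    """Throughput per dollar of total lifetime cost."""
    return cpu.perf / lifetime_cost(cpu, years, usd_per_kwh, pue)

# Hypothetical inputs only: substitute real list prices, measured power and scores.
old = Cpu("older part", price_usd=4000, power_w=200, perf=100)
new = Cpu("newer part", price_usd=4000, power_w=280, perf=120)
for cpu in (old, new):
    print(cpu.name, round(perf_per_dollar(cpu, years=5, usd_per_kwh=0.10, pue=1.5), 5))
```

Whether a higher-power part comes out ahead depends heavily on the electricity price, service life and PUE assumed, which is why two readers can reach different conclusions from the same spec sheets.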

However, next gen (EPYC 7004) looks MUCH more promising.