The challenge of managing data is growing faster than the data itself is piling up. That is bad news for everyone except the companies that can create new tools to manage it, whether for internal use, as the hyperscalers do, or for sale to those who cannot fund such development and count on vendors to do it.

The data streaming into datacenters is increasingly unstructured, and it needs to be stored, moved, and analyzed in real time to get the most business value out of it. It is also coming in from all directions – the datacenter, multiple clouds, and the fast-growing edge environments that are tasked with catching the data generated by everything from tiny sensors to massive connected industrial systems that fall under the umbrella of the Internet of Things (IoT). The highly decentralized nature of the modern IT environment also rapidly expands the attack surface that bad actors can exploit to attack enterprises, putting a premium on securing the data both at rest and while on the move between systems in the datacenter, cloud, and edge.

These are the waters Qumulo has been swimming in since launching in 2012. It came out with a file system three years later, one that has been built up over the past seven-plus years and is aimed at enterprises that need scale-out, high-performance capabilities for their HPC-like workloads. As Molly Presley, manager of global product marketing for the Seattle-based company, tells The Next Platform, customers have for years relied on running Qumulo’s file system software on appliances from the likes of Hewlett Packard Enterprise.

“That’s kind of normal stuff,” Presley says as she talks about the latest version of the software platform, the v3 release launched this week. “But the big gap that we’re solving today with customers is essentially consolidating all their unstructured data into a single software solution. You have edge data coming in from cameras, from autonomous vehicles, from sensors, whatever it may be. You have datacenter data that’s coming in off of a compute cluster of some sort or users in the datacenter and you have data in the cloud. Being able to unify all that into a single software solution is something that users really haven’t been able to accomplish so far. It’s that whole unstructured data sprawl issue, so consolidating is nice. That simplifies the environment. But everyone wants to get value out of the data that they have. They want to make business decisions and accelerate research. Whatever it is that they’re trying to get data for it, if they can’t aggregate it into a single spot, they can’t get business results or information out of that data.”

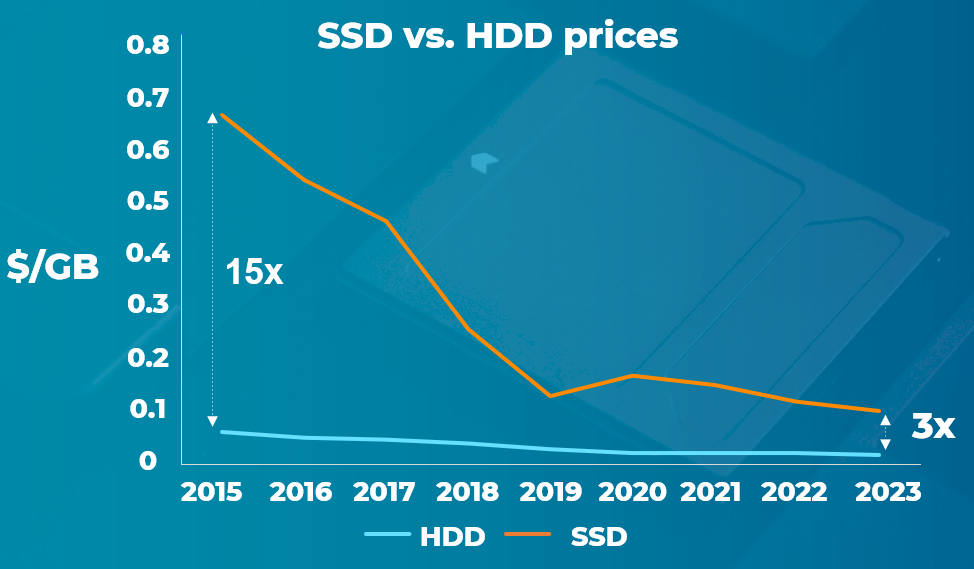

As we have talked about before at The Next Platform, Qumulo has developed a file system that’s about helping enterprises quickly adapt to the inevitable changes in the field, from the cloud and big data to the edge, IoT, software-as-a-service (SaaS) and hybrid clouds. The company embraced flash from the beginning, back when SSDs were 15 times more expensive than HDDs. Since 2015, however, that premium has shrunk to three times, and the rise of the NVM-Express protocol has improved the performance of flash, while its fabric extensions have made it more broadly useful, too.

Qumulo’s software runs on Amazon Web Services (AWS) and Google Cloud. It has been certified to run on HPE’s Apollo hardware and now will be available on HPE GreenLake as a service offering. The file system also is now certified to run on Fujitsu hardware, which will help Qumulo expand its geographic reach deeper into such regions as Asia-Pacific and the Middle East. In addition, the vendor has signed on two distributors – Arrow Electronics and Global Distribution – to further push the software into the market.

Dell EMC had been a technology partner, but that alliance ended, Presley says. The OEM wasn’t selling many PowerEdge systems with the Qumulo software because there was too much competition between Qumulo and Dell EMC’s Isilon storage line, so the partnership was discontinued, she says.

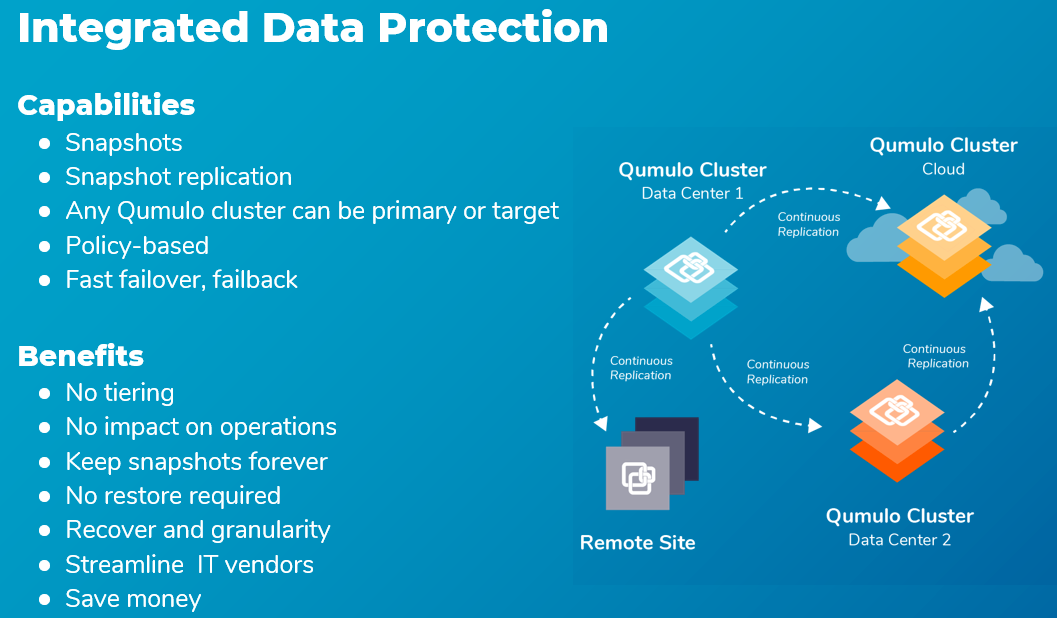

With the expanded market reach, Qumulo is rolling out the latest version of the file system, aimed at addressing the various needs of enterprise data: security and protection, improved all-NVM-Express application performance (along with a new all-NVM-Express platform), real-time analytics, and scalability. Qumulo now offers encryption for data in transit through the SMBv3 protocol and for data at rest on Apollo Gen 10 appliances from Hewlett Packard Enterprise, as well as audit capabilities to ensure security and compliance. Role-based access control policies make access easier to manage, and data can be backed up via snapshot replication to on-premises locations or to the cloud.

Qumulo introduced its first all-NVM-Express file storage platform in 2017, when SSDs were still about 10 times as expensive as spinning disks.

“Fast forward to where we are now, where it’s about a 3x price premium over spinning disk … and a lot of users at this point are looking and saying, ‘If I have a very cache-heavy workload, read-intensive workload, a workload that is doing things like AI and ML, anything like that, that is just very data-read intensive, they want to go ahead and make a move to NVM-Express now so that they don’t have any application performance or system performance limitations for those workloads,” Presley says. “What has happened is the price came in to a reasonable cost and AI and ML have become so common and just data analytics and different use cases have become so common that those workloads almost across the board have moved to NVM-Express and that has just been in the last two quarters.”

In the v3 release, the software has been further optimized to drive better performance for small file data sets, both on on-premises hardware and in the cloud. Qumulo also is rolling out a new addition to its P-Series line of all-NVM-Express appliances. The P-368T is a highly dense 2U platform that offers 368 terabytes of raw capacity per node and can scale to more than 36 petabytes of all-NVM-Express storage in a single cluster. It adds to the cost-benefit trend that is driving adoption of NVM-Express, Presley says.
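To put the P-368T figures in proportion, the per-node and per-cluster numbers can be related with some quick arithmetic. The sketch below is only a back-of-the-envelope illustration: it assumes decimal units (1 PB = 1,000 TB) and raw capacity with no protection overhead, and the function names are made up for this example. The node count it yields is an inference from the two quoted figures, not a limit stated by Qumulo.

```python
import math

# Figures quoted in the article for the P-368T.
RAW_TB_PER_NODE = 368    # terabytes of raw capacity per node
CLUSTER_TARGET_PB = 36   # "more than 36 petabytes" in a single cluster

# Assumption: decimal units (1 PB = 1,000 TB), not stated in the article.
TB_PER_PB = 1000


def nodes_needed(target_pb: float, tb_per_node: float = RAW_TB_PER_NODE) -> int:
    """Smallest whole number of nodes whose combined raw capacity reaches target_pb."""
    return math.ceil(target_pb * TB_PER_PB / tb_per_node)


def cluster_raw_pb(node_count: int, tb_per_node: float = RAW_TB_PER_NODE) -> float:
    """Raw capacity in petabytes for a cluster of node_count identical nodes."""
    return node_count * tb_per_node / TB_PER_PB


if __name__ == "__main__":
    n = nodes_needed(CLUSTER_TARGET_PB)
    print(f"{n} nodes give {cluster_raw_pb(n):.3f} PB raw")
    print(f"100 nodes give {cluster_raw_pb(100):.1f} PB raw")
```

Under these assumptions, a cluster in the neighborhood of 100 nodes lines up with the "more than 36 petabytes" figure. Usable capacity would be lower once data protection is accounted for, which the article does not quantify.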

“This drives down the cost of a node because we’re getting more capacity in a single node so that more expensive PCI infrastructure and the other controllers within that node you gain more capacity out of it,” she says. “The beauty of NVM-Express is because of how it runs on PCIe and because of how it is optimized, you don’t have any performance bottlenecks by building more capacity in a single node. The more you can get into a single node, you’re driving down costs without hurting performance. This more dense solution was requested by a really important Fortune 10 customer and we decided to go ahead and qualify it so we meet their datacenter density need.”

The accelerated adoption of NVM-Express has been a key business driver for Qumulo, Presley says. Most new customers are looking to run analytics on their data, changing not only the kinds of businesses embracing Qumulo technology but also the amount of data that is being analyzed.

“For the first couple of years of business, the majority of our customers were coming more from the rich media space, like media and entertainment customers,” she says. “The shift to analytical workloads is probably the biggest shift. Our average deal size as far as the amount of capacity has gone up. Our average customer size right now is around 800 TB or something like that. … Where it used to be that maybe once a year you would get a 10 PB customer, it’s now become very, very regular to see 10 PB+ customers.”

Along with NVM-Express, the enterprise drive to the cloud has helped Qumulo’s fortunes. More than 80 percent of its customers are buying the software because it can help them move to the cloud. Not long ago that number was between 25 percent and 30 percent, according to Presley, who adds that it’s “a key decision that they’re making on future technologies, whether they can help them get them to the cloud or not. If no, they won’t invest in technology.”
