With the ramping of volumes, the maturing of the manufacturing process, and the widening number of use cases in the field, there is always an opportunity for the lineup of every type and generation of compute engine to get some tweaks here and there. In recent years, such tweaks have been relatively rare, given that chip makers do a lot of legwork and forecasting to plot out every possible scenario matching yields by core, clock speed, cache, and thermals to potential customers and list prices. But they do happen.

So it is with the “Rome” Epyc 7002 processors from AMD, which we covered on launch day last August and followed with a deep architectural dive a few weeks later. Our initial coverage included a detailed price/performance analysis comparing the “Naples” Epyc 7001s to the Rome Epyc 7002s and to the “Shanghai” Opteron 2300s, which are a kind of touchstone from the time of the Great Recession, when Intel woke up and started making decent Xeon processors again with its “Nehalem” Xeon 5500s.

There are two new members of the Rome Epyc lineup, as the company explained in a blog post, and they are the result of more intensive bin sorting. The first new chip is the Epyc 7662, which has 64 cores and 256 MB of L3 cache across those cores; the chip has simultaneous multithreading activated, so there are 128 threads presented to the operating system for this socket unless users purposefully turn SMT off. The base frequency of the cores is set at 2 GHz flat and the maximum boost frequency – meaning the clock speed of a single core when the other 63 are turned off – scales up to 3.3 GHz. The boost frequency will diminish as more and more cores are activated, as is the case not only with all Epyc 7002 family chips, but also with all processors that allow for goosed clocks. Thermodynamics is simply a gating factor in chip operation.

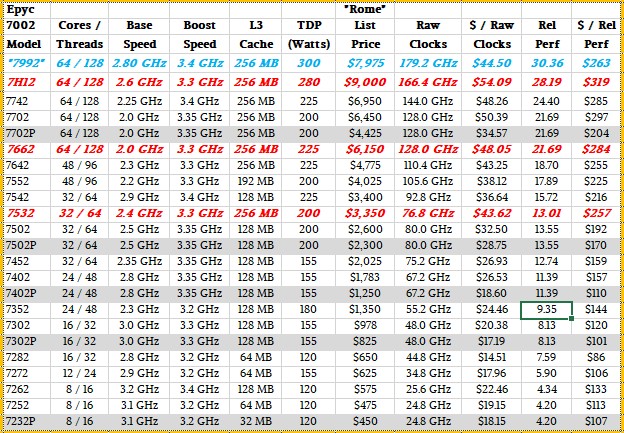

Here is how the new Epyc 7662 compares to its peers in the Epyc 7002 lineup:

As you can see, the Epyc 7662 very closely resembles the Epyc 7702 aimed at two-socket servers and the Epyc 7702P aimed at single-socket servers. The Epyc 7662 has the same clock speed and core count as these two chips, but runs a bit hotter (12.5 percent using the thermal design power ratings given by AMD), and so AMD is cutting the price compared to the Epyc 7702 (which, like the Epyc 7662, can be used in two-socket servers): from $6,450 down to $6,150, to be precise, a 4.7 percent reduction. The performance, as gauged by the aggregate raw clocks across the cores and using our relative performance metric compared to the Opteron 2387, which had four cores without SMT running at 2.8 GHz for $873, is the same on the Epyc 7702 and Epyc 7662. The bang for the buck will therefore be 4.7 percent better, but the performance per watt and the dollars per performance per watt will be a little bit worse. Some customers want a lower-cost 64-core Rome Epyc chip that still fits into the thermal envelope engineered for the top-bin 7742, which is 225 watts.
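To make the arithmetic behind that comparison concrete, here is a minimal sketch in Python that uses only the figures quoted above. The 200 watt rating for the Epyc 7702 is our inference from the 12.5 percent delta against the 225 watt envelope, and the variable names are purely illustrative. The sketch aggregates raw base clocks only; it does not attempt to reproduce our full relative performance metric.

```python
# Figures quoted in the article for the two 64-core parts.
PRICE_7702 = 6450          # list price, US dollars
PRICE_7662 = 6150          # list price, US dollars
TDP_7662_W = 225           # same envelope as the top-bin 7742
TDP_7702_W = 200           # assumption: inferred from 225 W / 1.125

CORES = 64
BASE_GHZ = 2.0

# Opteron 2387 baseline: four cores at 2.8 GHz, no SMT.
OPTERON_2387_AGG_GHZ = 4 * 2.8


def pct_change(old, new):
    """Percentage change going from old to new."""
    return (new - old) / old * 100.0


price_cut_pct = -pct_change(PRICE_7702, PRICE_7662)
tdp_increase_pct = pct_change(TDP_7702_W, TDP_7662_W)

# Aggregate raw clocks are identical for both chips (same cores, same base clock).
aggregate_ghz = CORES * BASE_GHZ
raw_clock_ratio = aggregate_ghz / OPTERON_2387_AGG_GHZ

# Value and efficiency, expressed per aggregate GHz rather than per unit
# of the article's relative performance metric.
dollars_per_ghz_7702 = PRICE_7702 / aggregate_ghz
dollars_per_ghz_7662 = PRICE_7662 / aggregate_ghz
ghz_per_watt_7702 = aggregate_ghz / TDP_7702_W
ghz_per_watt_7662 = aggregate_ghz / TDP_7662_W

print(f"Price cut:            {price_cut_pct:.2f} percent")
print(f"TDP increase:         {tdp_increase_pct:.1f} percent")
print(f"Aggregate clocks:     {aggregate_ghz:.0f} GHz "
      f"({raw_clock_ratio:.2f}x the Opteron 2387)")
print(f"Dollars per GHz:      {dollars_per_ghz_7702:.2f} (7702) vs "
      f"{dollars_per_ghz_7662:.2f} (7662)")
print(f"GHz per watt:         {ghz_per_watt_7702:.3f} (7702) vs "
      f"{ghz_per_watt_7662:.3f} (7662)")
```

Because the aggregate clocks are the same on both chips, the lower price improves the dollars per GHz figure for the Epyc 7662, while the higher wattage lowers its GHz per watt, which is the tradeoff described above.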

We wonder, of course, how far AMD might push this, particularly if it could work with the providers of water block cooling systems to make seriously overclocked 64-core and 32-core chips. To boost to a 2.9 GHz frequency – within 100 MHz of the clock speed of that Shanghai Opteron 2387 – AMD has to cut the core count in half to 32 cores to stay in that 225 watt thermal envelope. Imagine if the Rome Epycs could be running as hot as 300 watts, which is not crazy, and that datacenter energy use and heat dissipation were not an issue because absolute performance – serial and parallel – was. Such a hypothetical chip – we called it the Epyc 7992, shown in blue in the table above – might cost on the order of $7,975 by our estimates and have a relative performance of 30.36, about 24 percent higher than the current top-bin part, and cost $263 per unit of performance, or about 8 percent better bang for the buck than the Epyc 7742. That delta in value would help cover the cost of the water cooling, and would also allow AMD to make a screaming box – albeit one that was a bit toasty. But as we say, for some customers, that is not the issue. A faster answer is.
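The numbers for the hypothetical Epyc 7992 can be checked with a few lines of Python. This is a sketch that uses only the estimates quoted above; the implied relative performance of the top-bin part is backed out from the "about 24 percent" figure and is therefore approximate, and the variable names are our own.

```python
# Estimates quoted in the article for the hypothetical Epyc 7992.
PRICE_7992 = 7975            # estimated price, US dollars
REL_PERF_7992 = 30.36        # estimated relative performance
UPLIFT_OVER_TOP_BIN = 0.24   # "about 24 percent higher" than the top-bin part

# Dollars per unit of relative performance.
cost_per_perf_7992 = PRICE_7992 / REL_PERF_7992

# Relative performance of the current top-bin part implied by the uplift.
# Approximate, because the 24 percent figure is itself rounded.
implied_top_bin_perf = REL_PERF_7992 / (1 + UPLIFT_OVER_TOP_BIN)

print(f"Cost per unit of performance: ${cost_per_perf_7992:.2f}")
print(f"Implied top-bin relative performance: {implied_top_bin_perf:.2f}")
```

The first result rounds to the $263 per unit of performance cited above.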

This is sort of what AMD did last September with the launch of the Epyc 7H12 processor, which is shown in the table above and which we talked about in detail here. The big difference is that we don’t think AMD lowered the price on the chip, but rather tried to raise the price a lot for that extra performance. We think that is going a little too far, and that a slightly lower price is in order given the difficulties a hotter chip creates. The object here is to keep beating Intel in supercomputers and search engines.

The other new chip is the Epyc 7532, which has 32 cores with SMT running at 2.4 GHz and, significantly, the full 256 MB of L3 cache instead of half of it like the other 32-core variants of the Epyc 7002 series. This chip fits in the 200 watt thermal envelope and that extra cache boosts the price to $3,350, presumably because there are some workloads that need more cache than 128 MB.

Dell and Supermicro are the first two server OEMs that are selling the two new Rome Epyc SKUs. Dell is putting them in the PowerEdge R6515, R7515, R6525, R7525 and C6525 servers. Supermicro is putting both of the chips in the A+ line of machines, and the Big Twin line of hyperscale-style machines is supporting the Epyc 7532. Hewlett Packard Enterprise and Lenovo are working on adding the new chips to their respective server lines.

Your chart shows “7992” not “7662”.

It shows both.