You don’t have to be a chip designer to program an FPGA, just like you don’t have to be a C++ programmer to code in Java, but it probably helps in both cases if you want to do them well.

The trick to commercializing both technologies – Java and FPGAs – is to make that latter statement increasingly not true. The good news for FPGAs is that with the appropriate layers of abstraction and the right mix of tools, creating algorithms and dataflows that run on an FPGA, rather than on a CPU, a DSP, a GPU, or some other form of custom ASIC, has gotten progressively easier over the 35 years since this programmable logic device was first invented.

The wonderful serendipity is that just at the time that the CPU can no longer be the sole and primary unit of compute in the datacenter for many workloads – for a whole host of reasons – the FPGA has come into its own, offering performance, low latency, sophisticated networking and memory, and the heterogeneous compute capabilities of modern FPGA systems on chip, which are arguably compute complexes and nearly complete systems in their own right at the high end of the product lines from FPGA suppliers. But FPGAs can and do play well with other devices in hybrid systems, and we think they are just beginning to find their natural places in the hierarchy of compute.

This, among other factors, is why we are hosting The Next FPGA Platform event at the Glass House in San Jose on January 22. (You can register for the event at this link, and we hope to see you there.) Xilinx, of course, is one of the dominant suppliers of FPGAs in the world, and one of the pioneers in this field. Ivo Bolsens, senior vice president and chief technology officer at Xilinx, is giving one of the two keynotes at the event, and gave us a preview of the things that are on his mind these days as Xilinx helps create malleable computing for the datacenter.

It has taken some time for system architects and programmers to come around to the heterogeneous datacenter that will include all kinds of compute engines scattered across the stack to perform compute, storage, and networking tasks, and it is happening of necessity as Moore’s Law advances are harder to come by across all aspects of semiconductors on CMOS chips. Our language is still stuck in the CPU frame of reference, and we are still talking about “application acceleration,” meaning doing it better than we can do on the CPU all by itself. Years hence, datacenters will be a collection of compute engines, storage media, and interconnect protocols offering coherency or not, and we will get back to just talking about “compute” and “applications.” Hybrid compute will be normal, just like the metered compute, wrapped up in bare metal, virtual machine, or container chunks, that we call “cloud” today will just be “compute” at some point in the future of our IT lingo. At some point, and the FPGA will probably usher this era along, we will go back to calling it data processing.

Bringing FPGAs into the datacenter requires a shift in thinking. “If you look at applications today and think about how you accelerate them, you have to drill down to see how these applications are being executed and what resources they are using and where the time is going,” Bolsens explains. “You have to look at the total problem that you are trying to solve. Many applications running in a datacenter these days are scaling out, leveraging a large amount of resources. Take machine learning training, for example, which uses a tremendous amount of compute nodes. But when you talk about acceleration, you have to look at not just the acceleration of the compute, but also acceleration of the infrastructure.”

As an example, in the machine learning training applications that Bolsens has seen in the field, around 50 percent of the time is spent moving data across clustered compute, and 50 percent or less is spent actually doing the compute itself.
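To see why that split matters, here is a rough back-of-the-envelope sketch using Amdahl's law. The 50 percent figure comes from Bolsens; the speedup factors (10X on compute, 4X on data movement) and the function name are purely illustrative assumptions, not measurements from Xilinx or anyone else.

```python
def overall_speedup(data_movement_fraction, compute_speedup, movement_speedup=1.0):
    """Amdahl-style estimate of end-to-end speedup.

    data_movement_fraction: share of baseline runtime spent moving data (0..1)
    compute_speedup: factor by which the compute portion is accelerated
    movement_speedup: factor by which data movement is accelerated
    """
    if not 0.0 <= data_movement_fraction <= 1.0:
        raise ValueError("data_movement_fraction must be between 0 and 1")
    if compute_speedup <= 0 or movement_speedup <= 0:
        raise ValueError("speedup factors must be positive")

    compute_fraction = 1.0 - data_movement_fraction
    # New runtime, expressed as a fraction of the original runtime.
    new_time = (data_movement_fraction / movement_speedup
                + compute_fraction / compute_speedup)
    return 1.0 / new_time


# Hypothetical numbers: half the time is data movement (per the article),
# and the accelerator makes the compute 10X faster (an assumption).
compute_only = overall_speedup(0.5, compute_speedup=10.0)

# Same compute gain, plus a hypothetical 4X improvement in data movement.
both = overall_speedup(0.5, compute_speedup=10.0, movement_speedup=4.0)

print(f"compute only: {compute_only:.2f}X, compute + data movement: {both:.2f}X")
```

With half of the runtime tied up in data movement, no amount of compute acceleration alone can deliver more than a 2X gain end to end, which is the arithmetic behind the argument for accelerating the infrastructure as well.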

“This is where I think FPGA technology comes in, because we can provide capabilities to optimize both the compute and the data movement aspects of the application. And we can do that at the level of the overall infrastructure, and we can do that at the chip level. This is one of the big merits of the FPGA, which allows you to build application specific communication networks. With machine learning inference applications, which have a typical dataflow, when you look at how the data is moving through your compute problem, I think there is no reason why you need a complicated switch-based architecture. You could build a network that is more dataflow. The same holds for machine learning training, where you could build a mesh network and have packet sizes that are accommodating specifically to the problem at hand. With FPGAs, you precisely scale and tune your communication protocols and topologies for an application. And as we see with machine learning and inference, we see that we don’t really need double precision floating point and we can tune that, too.”
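The point about precision can be illustrated with a small sketch. This is not how Xilinx tools quantize models; it is a generic symmetric 8-bit integer scheme, chosen here as an assumption, and the weight values are made up. It simply shows how little is lost when a handful of floating point weights are squeezed into 8 bits.

```python
def quantize_int8(values):
    """Symmetric linear quantization of floats to signed 8-bit integers.

    Returns the integer codes and the scale needed to map them back.
    """
    max_abs = max(abs(v) for v in values)
    # Map the largest magnitude onto 127; fall back to 1.0 for all-zero input.
    scale = max_abs / 127.0 if max_abs > 0 else 1.0
    codes = [max(-127, min(127, round(v / scale))) for v in values]
    return codes, scale


def dequantize(codes, scale):
    """Map integer codes back to approximate floating point values."""
    return [c * scale for c in codes]


# Hypothetical weights, invented for illustration only.
weights = [0.823, -0.417, 0.052, -1.27, 0.634]

codes, scale = quantize_int8(weights)
restored = dequantize(codes, scale)
max_error = max(abs(w - r) for w, r in zip(weights, restored))

print("int8 codes:", codes)
print("largest round-trip error:", max_error)
```

The round-trip error is bounded by half of one quantization step, and each value takes one byte instead of the eight that double precision needs. On a device where the datapath width is configurable, narrower arithmetic like this translates into more operations per unit of logic and less data to move.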

The difference between an FPGA and a CPU or some other ASIC is that the latter are hard coded: once a chip is made, you can’t change your mind about the type of data that is computed and the compute elements that are matched to it in kind and number, or the nature of the dataflow through the device and to the outside world. FPGAs allow you to change your mind if conditions change – and they always do, so this is a real benefit.

It is one that has come at a high cost in the past with FPGAs, which have not been for the faint of heart. The trick here is to open up the compilers for FPGAs so they integrate better with the tools that programmers use to create parallel applications, written in C, C++, or Python, on CPUs and to offload work to libraries that accelerate routines on the FPGA. This, in a nutshell, is what the Vitis machine learning stack does. It sits underneath the Caffe and TensorFlow machine learning frameworks and has libraries for running straight AI models or for adding FPGA-boosted AI oomph to workloads such as video transcoding, image and video recognition, data analytics, and financial risk management, along with any number of third party libraries that are in the works.

This is no different in concept than what Nvidia did with the CUDA parallel computing and offload environment with GPU accelerators a decade ago, and what AMD is doing similarly with its ROCm tools, and moreover, what Intel is promising to deliver across CPUs, GPUs, and FPGAs with its OneAPI effort.

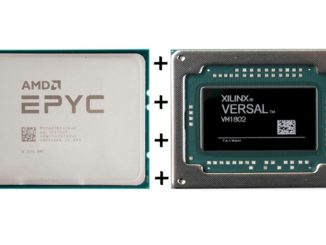

The question now is how these toolchains will all be woven together so anyone can program any collection of compute engines as they see fit. The reason this is important is simple: FPGAs have evolved, and are every bit as sophisticated as any CPU that was ever invented. They are on the most advanced processes and they are using the most advanced chip packaging techniques. And they are going to find their place because we can’t afford to waste time, money, energy, and intellect any more – these are all too dear.

“There is an intrinsic technical value proposition of an FPGA,” says Bolsens. “And it is about more than the usual one-liner about adaptability and reconfigurability. With all of the important applications – machine learning, graph analytics, high frequency trading, and so on – we have the capability of not just adapting your compute data path to the problem at hand, but also adapting your memory architecture – the flow of data in the chip – to the problem. And we also have much more memory integrated in the FPGA than any other device, too. Couple this to the fact that if your problem doesn’t fit inside of one FPGA then you can scale it across multiple FPGAs without paying the kinds of penalties you pay when you are scaling out over CPUs or GPUs.”

I don’t see a bright future for FPGAs in data center applications, just as we haven’t seen FPGA success in the data center in the last decade: they are too expensive, consume too much power, and are too complicated to program compared to GPUs and AI ASICs.

So, since it hasn’t happened yet, it won’t happen. Stick with your day job.

If only one of the dominant players would create a single API that would allow you to make use of CPUs, GPUs, FPGAs and maybe even AI accelerators – they could call it “Single API” or even better “One API”…

But, according to your logic, it won’t happen since it didn’t happen in the past.