Stanford University doesn’t own software defined networking. But it sure does feel that way some days. Stanford has been the wellspring of key projects that are framing the future of networking, such as programmability of devices and disaggregation in its many guises, from prying network operating systems free from their switches and routers to breaking the control planes and data planes free from the underlying silicon so they too can be programmable on a vast scale.

When you break it all down, the advances that are coming to networking are about making networks more malleable and less expensive, with more predictable latencies and a flatter and wider network topology. Programmability is a key aspect of all parts of the network stack, and it is safe to say that Nick McKeown, a professor of computer science and electrical engineering at Stanford as well as a serial entrepreneur, has been leading the charge to change datacenter networks.

McKeown’s first startup, Abrizio, was founded in 1997 to focus on energy-efficient, terabit-class switching and was sold to PMC Sierra for $400 million. We first got to know McKeown as one of the founders of software-defined networking pioneer Nicira, which VMware acquired in 2012 for $1.26 billion and which is the foundation of its NSX virtual switching business. More recently, McKeown was co-founder and chief scientist at programmable switch ASIC maker Barefoot Networks, which Intel just acquired to jumpstart its Ethernet switching business. McKeown joined us at the recent The Next I/O Platform event in San Jose for a chat.

One of the central themes we have been talking about at The Next Platform over the years is the push to make networking less proprietary and brittle and more open and malleable, first through virtualization at a very high level, breaking free the control planes and data planes that were formerly etched in ASICs, and more recently with programmability in the switch ASIC itself, defining its functions and personalities much as a software stack does on a CPU. The first question we asked McKeown is why the time is right for turning the switch or router into a programmable domain specific architecture such as those based on CPUs, GPUs, FPGAs, DSPs, or TPUs.

“Networking has changed a lot in the past ten or fifteen years,” explains McKeown. “If you think back to 2005, every hyperscaler was building its network out of proprietary networking equipment with proprietary software and fixed function silicon. And things have changed. Today, all ten of the top hyperscalers in the world write their own software to control their own network, and they needed to do this because essentially the model of the networking industry was broken, it was out of touch. And that’s because if you are operating a big network, then you must control the software that controls the behavior of your network. No one else knows how to do it. And as the networks get bigger and bigger, the chip suppliers are further removed from how those networks work. So it is vitally important – and we will just take it for granted – that those who operate big networks, whether they are big telcos or hyperscalers, will continue to write that software. And today we take it for granted that they can write it, they can commission someone else to write it, or they can download it from open source whether it is from Facebook with FBOSS or from Microsoft with SONiC. In the telco space it is happening as well: AT&T with ONAP and DANOS and now ONF’s Trellis and SEBA. There is an acceleration in the ability to control your network, and it is really about changing who is in charge. The keys have been handed from those who were building the equipment to those who have to operate the network. It was inevitable, it had to happen.”

We would say that it is more accurate to say that people like McKeown taught us how to hotwire the car, and then showed us not how to build a better car, but how to craft an entire transit system.

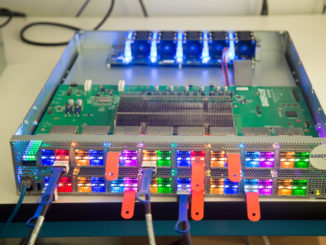

However, all of this work that has been done in the past decade and a half is just the first stage of software defined networking, says McKeown. The first stage made the control plane programmable, and now we need to do the same thing to the data plane, or forwarding plane, in the network devices. That, of course, is what Barefoot Networks has been building for the past six years with its “Tofino” family of programmable switch ASICs and the P4 programming language that is affiliated with it but that can be, and is, used on other kinds of network devices.

Up until this new wave of programmability, chip designers were determining, in their silicon, how large networks would operate, not the actual companies that were tasked with operating the networks and that really knew better how to take on this task. Hyperscalers didn’t want to make their own silicon, but they do want silicon that they can program like the CPUs at the heart of their servers and storage. And that is why we have seen switch makers not only open up their software development kits, but also make the actual silicon – the packet engines at the heart of their ASICs – more programmable.

We are at the front end of this second phase of the networking revolution, and if you want to hear more about McKeown’s thoughts on this, you are going to have to watch the interview above.
