According to the Centers for Disease Control and Prevention’s latest incidence data, cancer is the second leading cause of death in the United States, exceeded only by heart disease. The tragic statistics are difficult to internalize. However, there is reason for optimism. Novel approaches to cancer diagnosis and treatment, spearheaded by the dedicated team behind the CANcer Distributed Learning Environment (CANDLE), seek new and highly targeted ways to fight back against cancer’s devastating impacts. The CANDLE project is building a software environment that will bring the combined power of exascale computing and deep learning to bear on the challenges of cancer research, diagnosis, and treatment.

Bringing an ambitious project like this to life involves experts and some of the world’s most powerful supercomputers from leading research institutions across the United States. Participating organizations include the US Department of Energy’s Argonne, Oak Ridge, Lawrence Livermore, and Los Alamos National Labs, plus the National Cancer Institute and the Frederick National Laboratory for Cancer Research. “CANDLE is a large project spanning multiple labs, vendors, partners, and other contributors. We balance our efforts among developing the technology, integrating it with applications, and teaching people how to use it,” said Rick Stevens, principal investigator behind the CANDLE project.

The Challenge Ahead

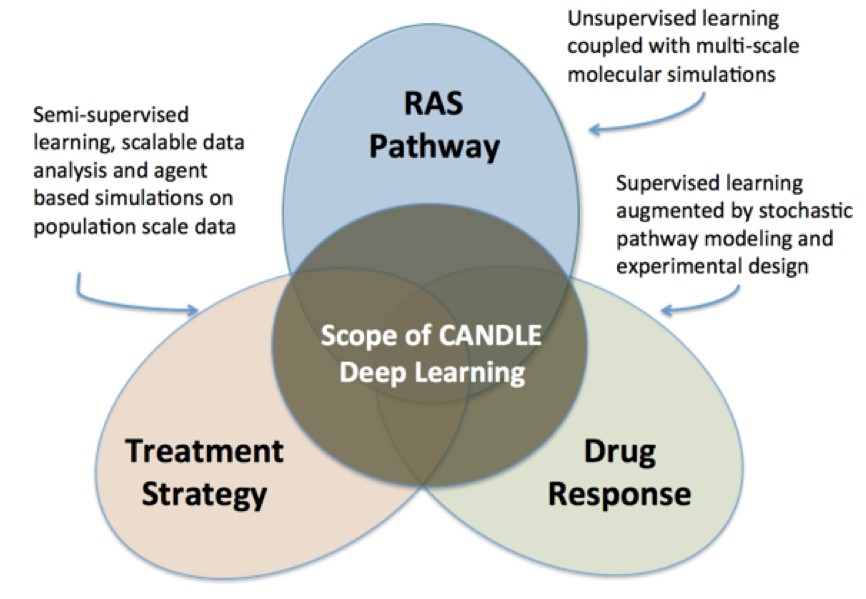

Stevens describes three distinct challenges the team behind CANDLE must overcome to understand the nature of cancer better and identify the best drugs to fight cancer’s many forms. First off, the research team needs to gain a detailed understanding of the biology – and molecular interactions – at the heart of various types of cancers. Second, the team must develop computing models which can predict cancer cells’ response to drugs. “We foresee a future of cancer treatment where a doctor can take a biopsy of a cancerous tumor and analyze it to pinpoint its molecular properties. From there, machine learning models can use that data to determine which drugs have the best chance of killing that unique tumor,” said Stevens.

The third aspect of the challenge facing Stevens and his team is gathering information from what he refers to as ‘patient trajectories.’ In short, that means the team must evaluate data from millions of cancer patients nationwide, hoping to discover patterns from which the CANDLE team can build large-scale computing models. While some of that patient data exists in structured databases, other data comes from more challenging sources like patient reports handwritten by oncologists. Despite that difficulty, gathering and mining all of this data has the potential to reveal commonalities and patterns that can be used to hone the models.

By tackling the triad of cancer research challenges simultaneously, the CANDLE project strives for the ability to identify – and even design – drugs and treatment strategies to fight various cancer types in patients.

Adding perspective on the three-point challenge inherent in fighting cancer, Stevens said, “CANDLE needs the capability to predict what a complex cancer cell is going to do when exposed to a drug. To do that, we must acquire more high-quality data to gain a greater understanding of the biology behind the process. Therefore, the machine learning methods must integrate many, many sources of data to overcome that hurdle. Of course, we also need more testing to hone our predictive models and move that capability from the laboratory environment to a place where it can be used to help patients. It’s a big challenge, but we are making good progress.”

Offering an analogy, Stevens describes the critical relationship of data integration and deep learning applications. “If we think about the system like a rocket ship, the learning model is akin to the rocket engine, while data represents the fuel. No matter how good the engine is, it needs excellent fuel for liftoff. For this reason, our persistent challenge is obtaining large quantities of high-quality data, cleaning it, integrating it, normalizing it, and devising new types of deep learning architectures that get the most out of it.”

As larger volumes of data and predictive models simulate the interaction between drugs and their molecular targets, the process has the potential to identify new drugs that are more effective than those currently available. Stevens reinforces the opportunity the process represents. “Chemotherapy has been available for about 75 years. However, we have never been able to predict effectively which patients will respond to it. Devising ways of incorporating molecular information and visual information to build more predictive models will help distinguish tumors that will respond to a given drug from those that won’t. With exascale computing, we have a chance to do that, and that will change the lives of millions of people.”

Aurora: Argonne’s Exascale System

In addition to his role in the CANDLE project, Stevens serves as associate laboratory director for the Computing, Environment and Life Sciences directorate at Argonne National Laboratory. As a central figure behind Argonne’s forthcoming “Aurora” exascale system, Stevens has a uniquely nuanced perspective on the enormous benefits exascale computing will offer many fields, including materials science, cosmology, neurology, climate research, and more. Aurora, built by Cray and featuring future-generation Intel Xeon SP CPUs, next-generation Optane DC persistent memory, and future Xe GPUs, is expected to be delivered in 2021.

“We chose the name Aurora because it encompasses our aspirational goal to create a system that in some sense can illuminate the world,” explains Stevens. “For the first time, we will have staggeringly powerful compute power that offers performance levels on the order of ten to the eighteenth power operations per second. The CANDLE team is excited to unleash Aurora’s full capability to help humanity in ways impossible before.”

Building exascale systems is a highly complex endeavor. Added Stevens, “We feel very fortunate to have Intel and Cray supporting the success of Aurora. Intel’s depth of experience with high-performance computing (HPC) and advanced hardware like Intel Xeon SP processors, along with a software overlay from Cray make our exascale system possible. Together, we are making a new era of exascale computing possible.”

Exascale promises the ability to create more sophisticated models and incorporate new ideas. Stevens elaborates, “For instance, we can involve uncertainty quantification where we can estimate how confident the model is in making a given prediction. With that confidence factor, we can teach the model to be more effective. If it is not very effective, however, we can also teach it to keep its mouth shut,” joked Stevens. “So exascale is critical in pushing the envelope with machine learning. We’re also understanding more and more how to maximize extreme-scale compute power for more effective deep learning models.”
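The idea Stevens describes, a model that reports its confidence and stays silent when that confidence is low, can be illustrated with a small sketch. This is not CANDLE's code or method: it assumes a hypothetical ensemble of models that each predict a drug-response score, uses the spread of those scores as a stand-in for uncertainty, and abstains when the spread exceeds an arbitrary threshold. The function name `predict_or_abstain` and the parameter `max_spread` are invented for illustration.

```python
from statistics import mean, pstdev
from typing import Optional, Sequence


def predict_or_abstain(
    ensemble_predictions: Sequence[float],
    max_spread: float = 0.1,  # assumed threshold, chosen only for illustration
) -> Optional[float]:
    """Return the ensemble mean, or None when the members disagree too much.

    The population standard deviation of the member predictions serves as a
    simple (assumed) proxy for how uncertain the ensemble is.
    """
    if not ensemble_predictions:
        raise ValueError("at least one prediction is required")
    spread = pstdev(ensemble_predictions)
    if spread > max_spread:
        return None  # low confidence: the model "keeps its mouth shut"
    return mean(ensemble_predictions)


# Hypothetical response scores from three ensemble members.
confident = predict_or_abstain([0.81, 0.79, 0.80])  # members agree closely
uncertain = predict_or_abstain([0.20, 0.90, 0.55])  # members disagree widely
```

In this sketch the first call returns a score because the three members nearly agree, while the second returns `None` because they do not. Real uncertainty quantification for deep learning models is considerably more involved; the point here is only the predict-or-abstain pattern.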

A Bright Future

Because CANDLE aims to tap deep learning capability on DOE supercomputers, the project’s environment has the potential for use in other application areas unrelated to cancer treatment. According to Stevens, CANDLE’s underlying capability and tools can apply deep learning to other fields of science like climate modeling, materials science, cosmology, and more. “Because the learning environment is designed to be general and versatile, it can offer multiple practical purposes,” noted Stevens.

While Stevens ultimately wants to see a diverse ecosystem of scientific use cases for CANDLE, his team remains focused on making progress in the battle against cancer. “Like other members of the CANDLE team, I get up early in the morning and stay up late at night thinking about new ways to attack cancer and do our best to uncover practical solutions which doctors can use to combat it. With exascale computers, we are unencumbered by legacy compute capability bottlenecks, so we have the freedom to experiment and try new approaches.”

Added Stevens: “We look forward to the day when our work may help millions of people worldwide. It’s a very exciting time.”

Rob Johnson spent much of his professional career consulting for a Fortune 25 technology company. Currently, Rob owns Fine Tuning, LLC, a strategic marketing and communications consulting company based in Portland, Oregon. As a technology, audio, and gadget enthusiast his entire life, Rob also writes for TONEAudio Magazine, reviewing high-end home audio equipment.
