The Open Compute Project (OCP) held its 9th annual US Summit recently, with 3,441 registered attendees this year. While that might seem small for a top-tier high tech event, there were also 80 exhibitors representing most of the cloud datacenter supply chain, plus a host of outstanding technical sessions. We are always on the hunt for new iron, and not surprisingly the most important gear we saw at OCP this year was related to compute acceleration.

Here is how that new iron we saw breaks down across the major trends in acceleration.

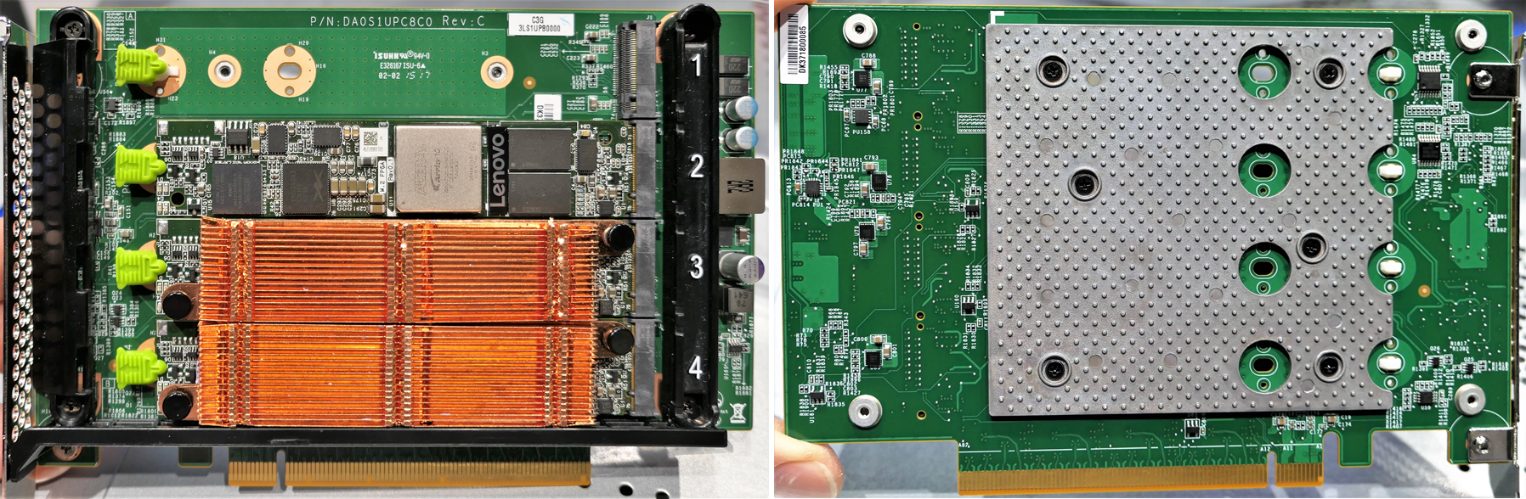

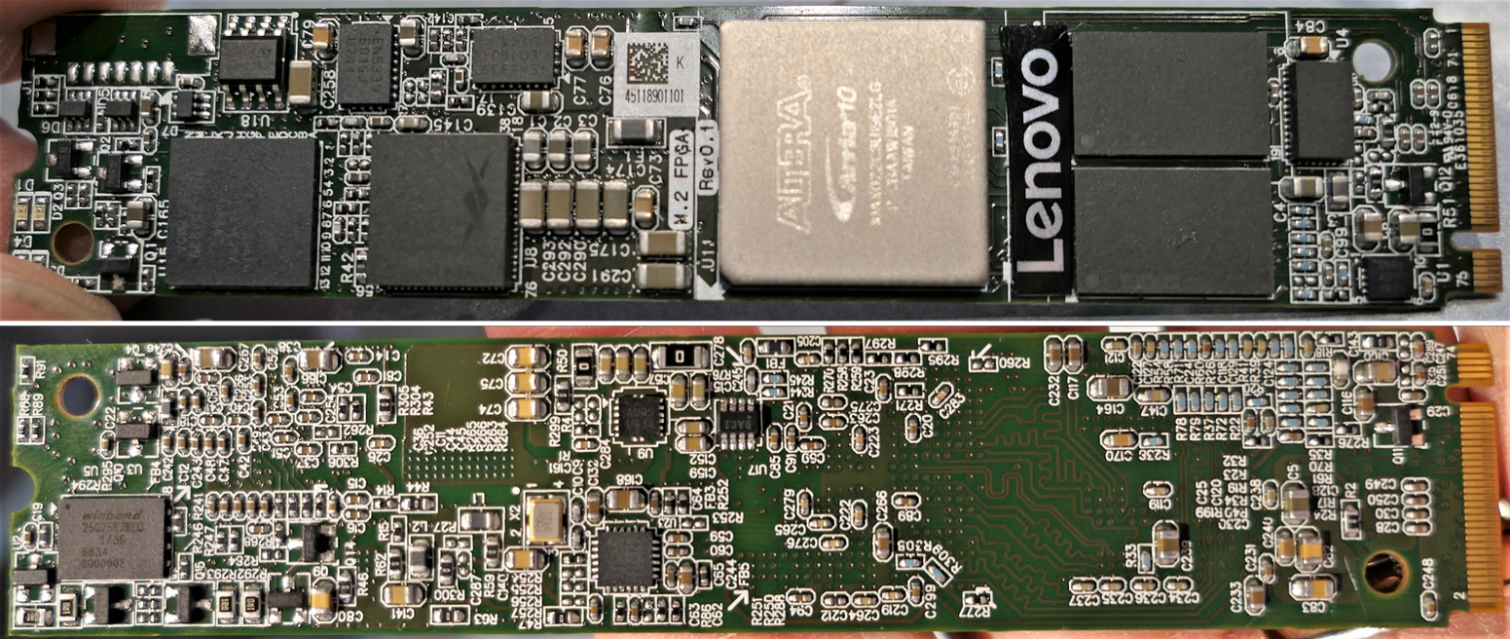

The first interesting thing we saw was a PCI-Express carrier used for M.2 form factor FPGA compute accelerators. This full-height, half-length PCI-Express 3.0 x16 carrier hosting four Lenovo M.2 form factor proof-of-concept (PoC) PCI-Express x4 Intel Arria 10 GX FPGA accelerator cards is seriously innovative. It is the opposite of a brawny core general purpose processor. The words “inference as a service” were used in describing what this might be good for, and we are hoping that at some point we can see performance numbers for a bunch of simultaneous machine learning (ML) model execution streams or threads. We think we are going to need new language to describe running ML real-time inferencing tasks.
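To put the carrier's lane budget in perspective, here is a back-of-the-envelope sketch. It assumes only the standard PCI-Express 3.0 signaling rate (8 GT/sec per lane with 128b/130b encoding) and ignores packet and protocol overhead, so the results are theoretical ceilings per direction, not measured numbers for the Lenovo PoC. The function and variable names are ours.

```python
# Theoretical PCI-Express 3.0 bandwidth for the x16 carrier and its x4 M.2 cards.
# Assumption: raw line rate and encoding only; real throughput will be lower.

PCIE3_GT_PER_SEC = 8.0           # PCIe 3.0 transfer rate per lane
ENCODING_EFFICIENCY = 128 / 130  # 128b/130b line encoding


def pcie3_bandwidth_gbytes(lanes: int) -> float:
    """Peak one-direction bandwidth in GB/sec for a PCIe 3.0 link of `lanes` lanes."""
    return lanes * PCIE3_GT_PER_SEC * ENCODING_EFFICIENCY / 8  # bits -> bytes


CARDS = 4            # M.2 FPGA cards on the carrier (from the article)
LANES_PER_CARD = 4   # each card is PCIe x4 (from the article)
CARRIER_LANES = 16   # carrier is PCIe 3.0 x16 (from the article)

lanes_needed = CARDS * LANES_PER_CARD
print(f"Lanes needed by cards: {lanes_needed} of {CARRIER_LANES}")
print(f"Per-card ceiling:  {pcie3_bandwidth_gbytes(LANES_PER_CARD):.2f} GB/sec")
print(f"Carrier ceiling:   {pcie3_bandwidth_gbytes(CARRIER_LANES):.2f} GB/sec")
```

The four x4 cards exactly consume the sixteen host lanes, so on paper each FPGA gets a full x4 link with no oversubscription at the slot.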

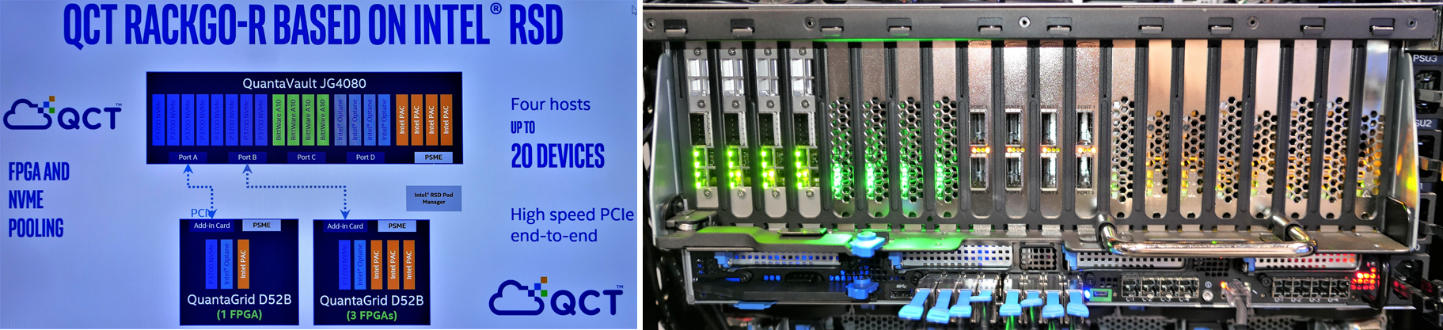

This fine-grain FPGA hardware is being enabled by Intel’s Rack Scale Design (RSD) initiative. Intel is also enhancing its RSD initiative to pool NVM-Express and FPGA resources at boot time to disaggregate FPGA compute resources like this Lenovo PoC. American Megatrends (AMI), the BIOS/UEFI folks, are handling the boot-time resource discovery, as well as run-time composition and management of FPGA resources.

Intel demoed a chassis containing a mix of BittWare A10 FPGA cards and Intel PAC FPGA cards to illustrate its progress.

We think that more M.2 form factor cards might host accelerators at some point. FPGAs and M.2 boards were very popular at OCP US Summit this year.

Microsoft and Wiwynn both showed a new dual M.2 “ruler” style carrier cage; 16 of these carriers can be housed in a 1U server. That is 32 M.2 cards total.

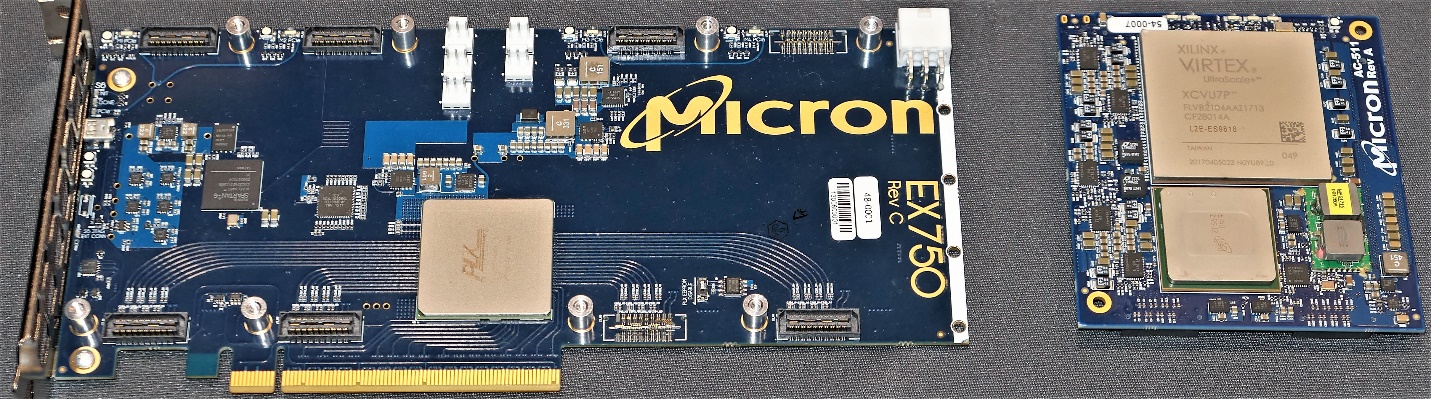

Micron Technology showed a PCI-Express 3.0 x16 FPGA carrier card for two bigger Xilinx Virtex FPGA daughter cards. Micron also conveniently designed a couple of its Hybrid Memory Cube (HMC) parts onto the daughter cards (one has a bright yellow sticker in the photo).

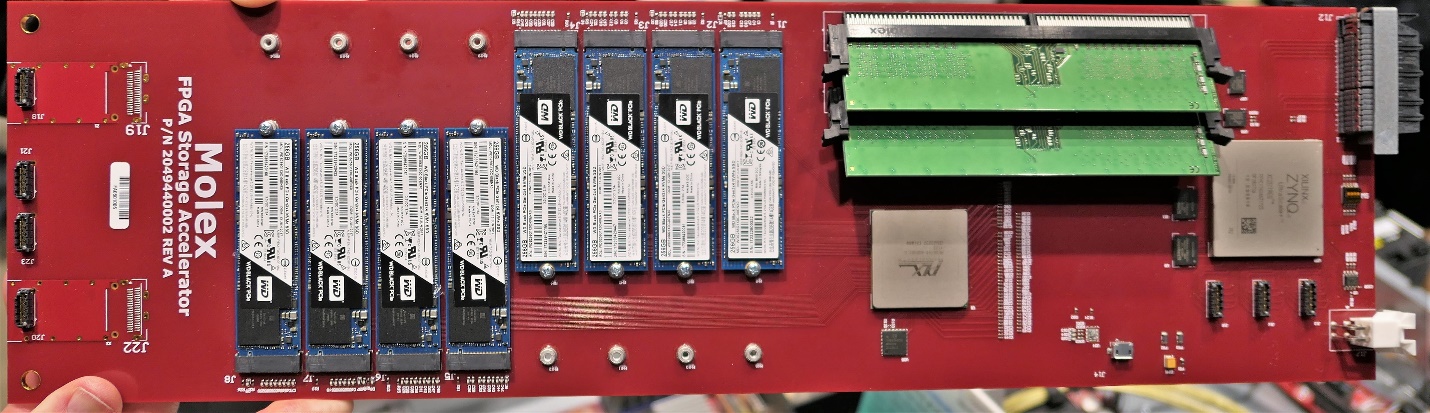

The OpenCAPI effort, founded by IBM under the auspices of the OpenPower Consortium, showed its OpenCAPI to M.2 NVM-Express flash storage accelerator (FSA) reference board for Google/Rackspace OpenPower machines, including the first generation “Zaius” and second generation “Barreleye” designs. The FSA includes a Xilinx Zynq UltraScale+ FPGA and memory subsystem. OpenCAPI can accelerate compute, storage, and/or networking.

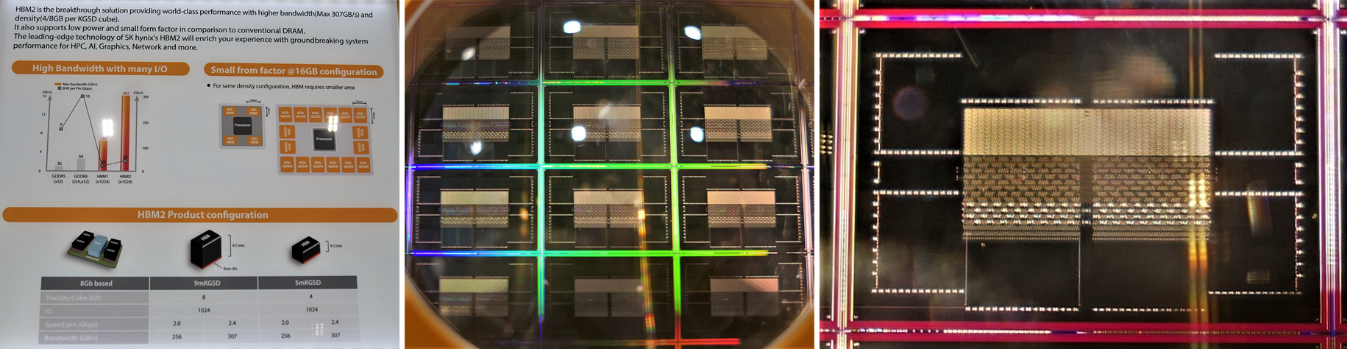

OCP has a very technical audience, so SK Hynix brought its high bandwidth memory (HBM). This is the type of memory that Nvidia adopted for its “Pascal” and “Volta” generations of Tesla GPU accelerators. Nvidia’s newest V100 SXM3 doubles the HBM2 capacity from its first generation V100 SXM2 to 32 GB of HBM2 in the processor package.

For Nvidia’s V100 design, HBM2 seems to be consuming about 3 watts per GB. Performance does have a price, but it’s not unreasonable.
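As a rough illustration of what that figure implies, the sketch below multiplies the approximate 3 watts per GB by the two V100 memory capacities (16 GB for the SXM2, doubling to 32 GB for the SXM3). It assumes power scales linearly with capacity, which is our simplification and not something Nvidia or SK Hynix has stated.

```python
# Rough HBM2 power estimate from the article's approximate 3 W/GB figure.
# Assumption: power scales linearly with capacity (a simplification).

WATTS_PER_GB = 3.0  # approximate figure quoted in the text


def hbm2_power_watts(capacity_gb: float, watts_per_gb: float = WATTS_PER_GB) -> float:
    """Estimated HBM2 power draw in watts for a given capacity in GB."""
    return capacity_gb * watts_per_gb


for name, capacity_gb in (("V100 SXM2", 16), ("V100 SXM3", 32)):
    print(f"{name}: {capacity_gb} GB HBM2 -> about {hbm2_power_watts(capacity_gb):.0f} W")
```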

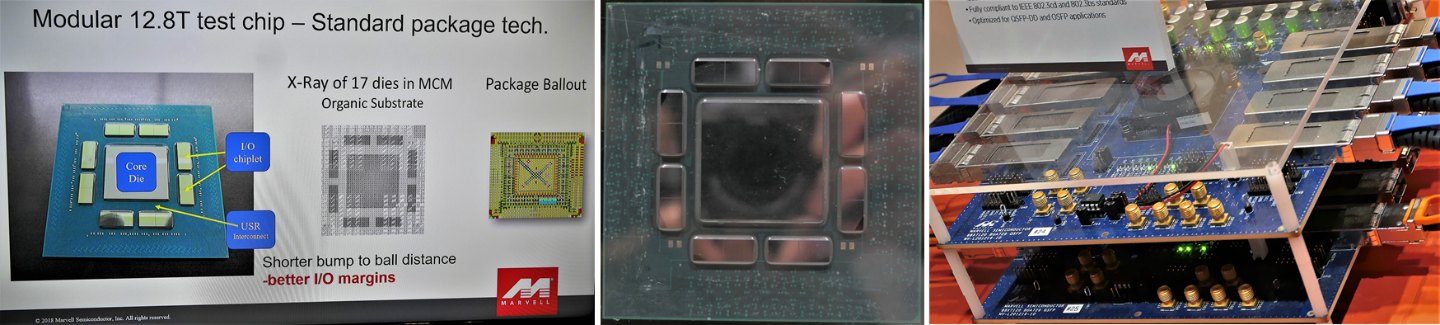

Marvell, which is in the process of buying ARM chip maker and Ethernet ASIC supplier Cavium, is a member of the Ultra Short Reach (USR) Alliance, and demonstrated its USR link for connecting multiple dies in a multi-chip module (MCM). USR has a 2.5 centimeter reach at low power (0.82 pJ/bit) and tolerates routing bends to reach far corners of an MCM. Marvell’s datacenter networking test chip has been tested at 12.8 Tb/sec of bandwidth.
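Energy per bit times bit rate gives link power, so the two numbers above can be combined into a quick estimate. The sketch below assumes, purely for illustration, that the full 12.8 Tb/sec crosses USR links at 0.82 pJ/bit; Marvell did not say that this is how the test chip is partitioned.

```python
# Link power = energy per bit x bits per second.
# Assumption (ours): all 12.8 Tb/sec traverses USR links at 0.82 pJ/bit.


def link_power_watts(energy_pj_per_bit: float, bandwidth_tbps: float) -> float:
    """Power in watts; pJ/bit (1e-12 J) times Tb/sec (1e12 bit/s) cancels to watts."""
    return energy_pj_per_bit * bandwidth_tbps


USR_ENERGY_PJ_PER_BIT = 0.82   # from the article
TEST_CHIP_TBPS = 12.8          # from the article

power = link_power_watts(USR_ENERGY_PJ_PER_BIT, TEST_CHIP_TBPS)
print(f"Estimated USR link power at {TEST_CHIP_TBPS} Tb/sec: {power:.1f} W")
```

Under that assumption, moving the full bandwidth between dies costs on the order of ten watts, which is why sub-picojoule links matter for MCM designs.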

Marvell’s datacenter networking test chip already integrates 56 Gb/sec PAM4 PHY and gearbox, 112 Gb/sec SERDES, and a ColorChip 200 Gb/sec QSFP56 FR4 silicon photonics transceiver. If Marvell’s acquisition of Cavium proceeds as planned, then Marvell will have access to Cavium’s first-rate ThunderX series of Arm-based server processors and its XPliant chips and QLogic adapters. This type of MCM might show up in a datacenter near you.

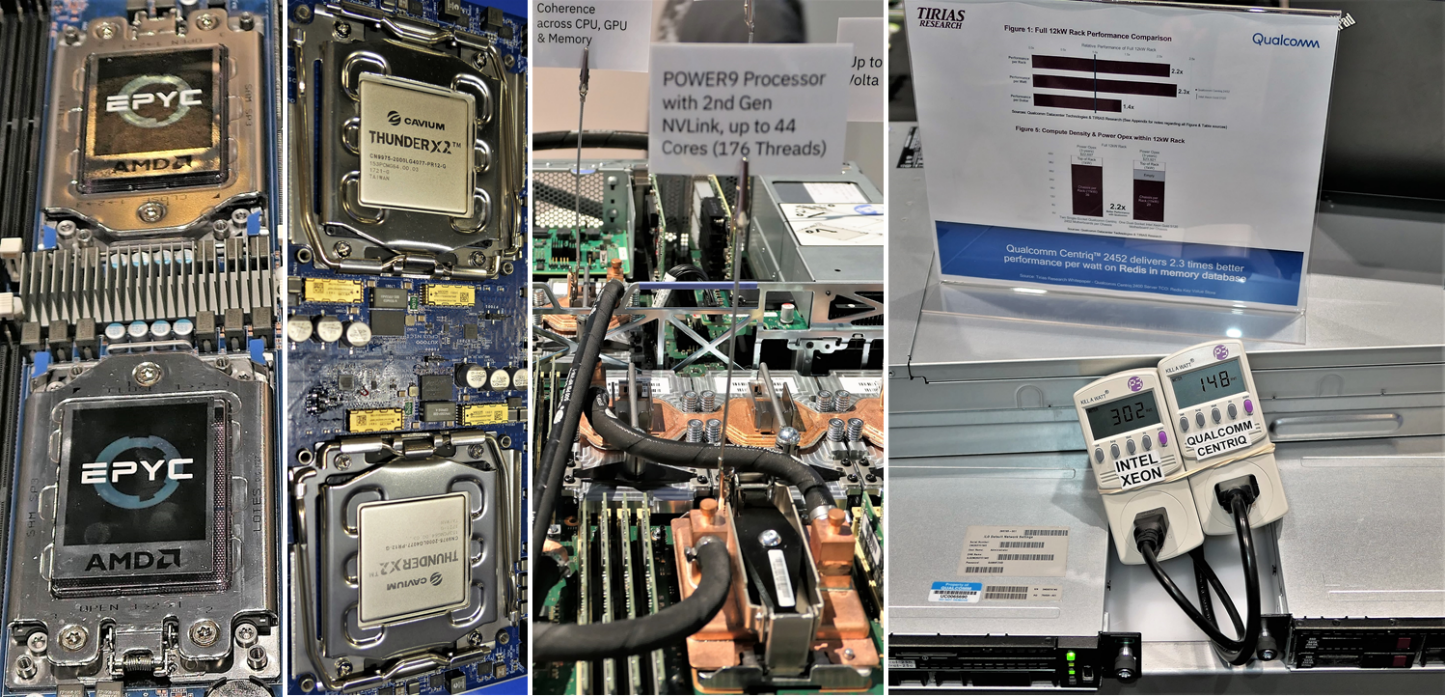

There were also processors galore, of course, at the OCP Summit because this is where worldwide cloud datacenter designers and operators go to shop, and this year all the server processor vendors were there:

- AMD Epyc

- Ampere eMAG

- Cavium ThunderX and ThunderX2

- IBM Power9

- Intel Xeon SP

- Qualcomm Centriq 2400

At last, there is a strong field of Xeon alternatives available in the market – not “in development” or “coming soon,” but finally “shipping now.” We can’t help but feel that the server market is at peak Intel, with the company’s market share close to 100 percent. If any of these alternatives gains traction, then Intel loses share, even if Intel’s server business continues to grow within an expanding server market.

Here is our overall impression from the ground at OCP Summit. There were few GPUs at the event this year. Microsoft brought its HGX-1 GPU chassis and Facebook updated its Big Basin GPU chassis design to v2 for Nvidia Tesla V100 support. It could also be that most of the vendors at OCP US Summit either showed GPU designs the week before the summit at OpenPower Summit and/or IBM Think 2018, or the week after the summit at Nvidia’s GPU Technology Conference. It has been a busy time in the past few weeks.

The overarching trend at OCP US Summit was that cloud datacenter buyers in 2018 have a broad choice of processors and compute accelerators to optimize the performance and costs for deploying workloads at massive scale. However, emerging workloads like ML are still not well characterized. It is still too early to call out winning architectures for specific workloads. A wider competitive field means that we may see greater divergence between hardware architectures for accelerating different software stacks.

Paul Teich is an incorrigible technologist and a principal analyst at TIRIAS Research, covering clouds, data analysis, the Internet of Things and at-scale user experience. He is also a contributor to Forbes/Tech. Teich was previously CTO and senior analyst for Moor Insights & Strategy. For three decades, Teich immersed himself in IT design, development and marketing, including two decades at AMD in product marketing and management roles, finishing as a Marketing Fellow. Paul holds 12 US patents and earned a BSCS from Texas A&M and an MS in Technology Commercialization from the University of Texas McCombs School.

Good one, Paul. There’s a lot more happening on the OpenCAPI front on the Barreleye Generation 2 platform, other than the Molex FSA. We are able to run real data-movement scenarios. For details, check my presentation from OpenCompute Summit:

https://drive.google.com/file/d/1AWurrwZ2FbqNaL2ZYIVS_s9ipzpcM04x/view?usp=sharing

Looks like AMD has more plans(1) for its HBM2 stacks than others, and that’s some nice space savings, with some FPGA compute included right on the HBM2 die stacks and sharing the same footprint. It’s also an example of FPGA compute right there in HBM2’s stacked memory, with all the intrinsic latency advantages of not having to go outside over any PCIe or other latency- and power-inefficient protocol steps. Let’s see how that will compare once the dust settles.

Also, what about any AMD Radeon Pro WX SSD or Radeon Instinct offerings at this event? AMD is listed as one of the founding members of that IBM/members OpenCAPI Consortium, while Nvidia is only an associate member. I hope that, given the Open Compute nature of this article, folks would see some questions asked of AMD about its OpenCAPI-based GPU accelerator intentions, as the entire market is much too focused on Nvidia’s GPUs over Nvidia’s proprietary NVLink goals.

Epyc has arrived and that’s somewhat more affordable, so what about RTG’s accelerator products that will most definitely be going along for the Epyc ride? I’m seeing that those Project 47 systems are now up for order. I’d like to see more focus on the competing products and how that relates to the overall picture going forward for those that are looking at price/performance and TCO sorts of metrics.

(1)

“AMD patent filing hints at FPGA plans in the pipeline

Plots response to Intel’s Altera acquisition”

https://www.theregister.co.uk/2015/08/11/amd_patent_filing_hints_at_fpga_plans_in_the_pipeline/