Six years ago, when Google decided to get involved with the OpenPower consortium being put together by IBM as its third attempt to bolster the use of Power processors in the datacenter, the online services giant had three applications that had over 1 billion users: Gmail, YouTube, and the eponymous search engine that has become the verb for search.

Now, after years of working with Rackspace Hosting on a Power9 server design, Google is putting systems based on IBM’s Power9 processor into production, and not just because it wants pricing leverage with Intel and other chip suppliers. Google now has seven applications that have more than 1 billion users – adding Android, Maps, Chrome, and Play to the mix – and as the company told us years ago, it is looking for any compute, storage, and networking edge that will allow it to beat Moore’s Law. Google has absolutely no choice but to look for every edge, even if it means adding Power servers – and other exotic forms of compute – to the fleet of millions of Xeon servers that make up its global infrastructure. The benefits of homogeneity, which have been paramount for the first decade of hyperscaling, no longer outweigh the need to have hardware that better supports the software that companies like Google use in production.

“With a technology trend slowdown and growing demand and changing demand, we have a pretty challenging situation, what we call a supply-demand gap, which means the supply on the technology side is not keeping up with this phenomenal demand growth,” explained Maire Mahony, systems hardware engineer at Google and its key representative at the OpenPower Foundation, which steers the Power ecosystem. “That makes it hard for us to balance that curve we call performance per TCO dollar. This problem is not unique to Google. This is an industry-wide problem.”

Mahony was speaking during a keynote session last week at the OpenPower Summit, where IBM also talked about the roadmap for Power processors and the memory and I/O connectivity differentiators that are key to current and future Power platforms.

Google has spent years creating a software development toolchain that can compile and deploy applications to X86, Power, and Arm servers, and importantly, it has worked with makers of Power and Arm processors and system boards to make sure that these alternatives have microcode and management controllers that look and smell like those available on X86 platforms. In fact, aside from the “Zaius” Power9 system that Google co-engineered with Rackspace Hosting, the most important contribution Google has made to the OpenPower effort has been working with IBM to create the OPAL firmware, the OpenKVM hypervisor, and the OpenBMC baseboard management controller, all of which are crafted to support little endian Linux, as is common on X86 chips, rather than the big endian Linux designed for IBM Power and z mainframe chips. (Endianness refers to the order in which the bytes of a multibyte value are stored, and IBM chips and a few others use the reverse of the order used by the X86 and Arm architectures. The Power8 chip and its Power9 follow-on support either mode.) By making all of these changes, IBM has made the Power platform more palatable to the hyperscalers, which is why Google, Tencent, Alibaba, Uber, and PayPal all talked about how they are making use of Power machinery, particularly to accelerate machine learning and generic back-end workloads.
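To make the byte-ordering point concrete, here is a minimal Python sketch using the standard `struct` module. It only illustrates what little endian and big endian mean for the same 32-bit value; it is not drawn from the OPAL firmware, OpenBMC, or Google's toolchain, and the value `0x0A0B0C0D` is an arbitrary example.

```python
import struct
import sys

# An arbitrary 32-bit value whose four bytes are easy to tell apart.
value = 0x0A0B0C0D

# "<I" packs an unsigned 32-bit int least significant byte first (little endian, the X86 convention).
little = struct.pack("<I", value)
# ">I" packs it most significant byte first (big endian, the traditional Power and z convention).
big = struct.pack(">I", value)

print("little endian:", little.hex(" "))  # 0d 0c 0b 0a
print("big endian:   ", big.hex(" "))     # 0a 0b 0c 0d

# Interpreting little endian bytes as big endian silently yields a different number,
# which is why software, data formats, and the OS must agree on one byte order.
misread = struct.unpack(">I", little)[0]
print(hex(misread))  # 0xd0c0b0a

# Reports the byte order of whatever machine runs this script.
print("this machine is", sys.byteorder, "endian")
```

Because a Power8 or Power9 chip can run in either mode, the same source code can target little endian Linux there, and data laid out this way on an X86 box does not need to be byte-swapped.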

Google, which took center stage in the OpenPower effort four years ago and which spoke in the most detail about its Power platform experiences, did not come right out and say how many machines it had deployed and precisely what workloads they are running. But Mahony did provide some pretty strong hints about where Power systems will probably end up initially in the global Googleplex of datacenters – and why.

The first workload where Google has put Power chips through their paces – in this case with some researchers from Stanford University and Seoul National University – is, of course, the bread-and-butter search workload that got Google going two decades ago. Earlier this year, Parthasarathy Ranganathan, who used to be chief technology officer at Hewlett Packard Enterprise and who is now a distinguished engineer at Google designing its next-generation systems, published a paper with the Stanford and Seoul researchers showing how search engine performance was affected by the architecture of the platforms.

Specifically, the tests that they ran put Google’s actual search engine code on two-socket servers, one based on the “Haswell” Xeon E5 v3 processor from Intel and the other based on the Power8 processor from IBM, which are roughly of the same vintage. The interesting bit here is that Google’s tests show that generic benchmarks such as the SPEC integer and floating point suites, and even open source search engines such as Solr or Elasticsearch, are not good proxies for predicting the performance of the Google search engine as it trawls through, indexes, and characterizes web pages. This is a very complex paper, but this chart, part of which Mahony showed, illustrates some of the concepts:

The tests showed how the queries-per-second performance of the search engine scaled with the number of cores in the system with simultaneous multithreading (SMT) turned off, then the effect of SMT on the search job (which, as you can see, was substantial), and then the effect of huge page support and hardware prefetchers on boosting (or, in the case of Power8 with hardware prefetching, curtailing) performance. Obviously, IBM is much closer to parity with Intel now that it has 24 cores on a Power9 die, and with up to four threads per core with SMT4 threading turned on, this is a good balance between cores and threads. We wish the paper had included tests of Power9 versus Xeon SP chips, and while such results were not published, you can bet that Google knows the search engine score there.
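For a sense of scale, the number of hardware threads a search job can spread across is simply sockets × cores per socket × threads per core. The sketch below works that out for a 24-core Power9 with SMT4. The `hardware_threads` helper is hypothetical, and the two-socket configuration is an assumption chosen to match the shape of the machines in the search tests, not a statement about what Google has deployed.

```python
def hardware_threads(sockets: int, cores_per_socket: int, threads_per_core: int) -> int:
    """Hypothetical helper: total hardware threads the operating system sees."""
    return sockets * cores_per_socket * threads_per_core


# One 24-core Power9 die with SMT4 turned on.
per_socket = hardware_threads(1, 24, 4)

# Assumed two-socket machine, the same form factor as the servers in the search tests.
two_socket = hardware_threads(2, 24, 4)

# The same two-socket machine with SMT turned off, for comparison.
two_socket_no_smt = hardware_threads(2, 24, 1)

print(per_socket)         # 96
print(two_socket)         # 192
print(two_socket_no_smt)  # 48
```

If web search throughput really does keep scaling with thread count once the L2 cache is right-sized, as Mahony says below, the jump from 48 to 192 threads is where SMT4 earns its keep.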

“We find that when you right size the architecture, and in particular when you right size the L2 cache, the performance of web search scales really nicely with thread count,” explained Mahony. “So for web search, which consumes a significant amount of compute resources at Google, more cores and more threads is a good thing.”

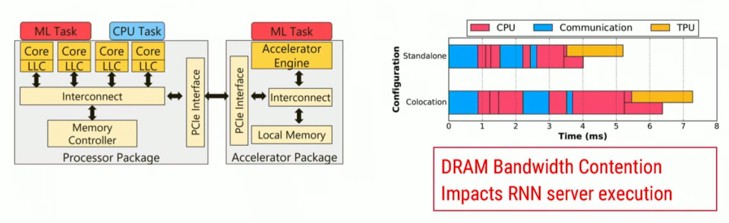

The second example Mahony talked about, where Google was testing Power9 against Xeon iron, was acting as the host processor for its Tensor Processing Unit, or TPU, machine learning accelerator. (We have previously done a deep dive into the latest TPU2 accelerator.) In this case, the host CPUs are used as parameter servers, managing the model parameters and the gradient updates produced during machine learning training. Mahony showed this chart:

The choice of host is important for a lot of reasons, but bandwidth is the big one, as Mahony explained: “If the host processor can’t keep up with the accelerator, did you really have an acceleration? Did you really accelerate the fleet? What we find is that the first limiting factor was DRAM bandwidth because there is contention on the DRAM interface. So in this case, more memory bandwidth is what we need in a platform.”

Google is also looking to add persistent, memory-class storage to its systems that can slide in between DRAM and flash, offering something closer to DRAM performance at a cost closer to that of flash, and the 25 Gb/sec “Bluelink” interconnects and the OpenCAPI protocol give Google the means to weave these in as they become available. (3D XPoint, ReRAM, PCM, and memristor memories are all options; we shall see which ones take off.)

The exact deployment details for the Zaius server at Google were not made public, as you might expect. But we think Google has probably been at the front of the Power9 line, just like the big HPC centers at Oak Ridge National Laboratory and Lawrence Livermore National Laboratory have been at the front of the line for the Power9 chips from IBM and the “Volta” GPU accelerators from Nvidia.

“We are pretty excited about the progress we have made with this project and have deployed Zaius in our datacenters,” Mahony said. “We are at the phase for this Zaius platform where we are ready to scale up the number of applications and we are ready to scale up the machine count. What that means is that we are declaring this platform to be Google strong.”

We probably won’t see Power9-based instances on the Google Cloud Platform public cloud, at least not for a while. But it sure seems like a case can be made to use Power9 iron to chew through search queries and to host TPU2 accelerators. In the long run, plenty of applications inside Google could end up running on Power9, wherever memory or I/O bandwidth is key. Just as Microsoft can use Arm-based servers, running either its own Windows Server or a variant of the open source Linux operating system, for internal-facing workloads and for external-facing services where customers are not deploying operating systems and all of the middleware and applications above that, Google can deploy Power9-based machines for internal workloads and external platform-level services where the plumbing doesn’t matter one whit to the customers.
