In the ideal hyperscaler and cloud world, there would be one processor type with one server configuration and it would run any workload that could be thrown at it. Earth is not an ideal world, though, and it takes different machines to run different kinds of workloads.

In fact, if Google is any measure – and we believe that it is – then the number of different types of compute that need to be deployed in the datacenter to run an increasingly diverse application stack is growing, not shrinking. It is the end of the General Purpose Era, which began in earnest during the Dot Com Boom and which started to fade a few years ago even as Intel locked up the datacenter with its Xeons, and the beginning of the Cambrian Compute Explosion era, which got rolling as Moore’s Law improvements in compute hit a wall. Something had to give, and it was the ease of use and volume economics that come from homogeneity enabled by using only a few SKUs of the X86 processor.

Bart Sano is the lead of the platforms team inside of Google, which has been around almost as long as Google itself, but Sano has only been at the company for ten years. Sano reports to Urs Hölzle, senior vice president of technical infrastructure at the search engine and cloud giant, and is responsible for the design of the warehouse-scale computers, including the datacenters themselves, everything inside of them including compute and storage, and the network hardware and homegrown software that interconnects them.

As 2016 wound down, Google made several hardware announcements: it is bringing GPUs from Nvidia and AMD to Cloud Platform, it will get “Skylake” Xeon processors onto Cloud Platform ahead of the official Intel launch later this year, and certain machine learning services on Cloud Platform are running on its custom Tensor Processing Unit (TPU) ASICs or GPUs. In the wake of these announcements, The Next Platform sat down with Sano to have a chat about Google’s hardware strategies, and more specifically about how the company leverages the technology it has created for search engines, ad serving, media serving, and other aspects of the Google business for the public cloud.

Timothy Prickett Morgan: How big of a deal are the Skylake Xeons? We think they might be the most important processor to come out of Intel since the “Nehalem” Xeon 5500s way back in 2009.

Bart Sano: We are really excited about deploying Skylake, because it is a material difference for our end customers, who are going to benefit a lot from the higher performance, the virtualization it provides in the cloud environment, and the computational enhancements that it has in the instruction set for SIMD processing for numerical computations. Skylake is an important improvement for the cloud. Again, I think the broader context here is that Google is really committed to working on and providing the best infrastructure not only for Google, but also to the benefit of our cloud. We are trying to ensure that cloud customers benefit from all of the efforts inside of Google, including machine learning running on GPUs and TPUs.

TPM: Google was a very enthusiastic user of AMD Opterons the first time around, and I have seen the motherboards because Urs showed them to me, but to my way of thinking about this, 2017 is one of the most interesting years for processing and coprocessing that we have seen in a long, long time. It is a Cambrian Explosion of sorts. So the options are there, and clearly Google has the ability to design and have others build systems and put your software on lots of different things. It is obvious that Skylake is the easiest thing for Google to endorse and move quickly to. But has Google made any commitment to any of these other architectures? We know about Power9 and the work Google is doing there, but has Google said it will add Zen Opterons into the mix, or is it just too early for that?

Bart Sano: I can say that we are committed to the choice of these different architectures, including X86 – and that includes AMD – as well as Power and ARM. The principle that we are investing in heavily is that competition breeds innovation, which directly benefits our end customers. And you are right, this year is going to be a very interesting year. There are a lot of technologies coming out, and there will be a lot of interesting competition.

TPM: It is easy for me to conceive of how some of these other technologies might be used by Google itself, but it is harder for me to see how you get cloud customers on board at an infrastructure level with some of these alternatives like Power and ARM because they have to get their binaries ported and tuned for them. What is the distinction between the timing for a technology that will be used by Google internally and one that will be used by Cloud Platform?

Bart Sano: Our end goal is that whatever technology that we are going to bring forward to the benefit of Google we will bring to bear on the cloud. We view cloud as just another product pillar of Google. So if something is available to ads or search or whatever, it will be available to cloud. Now, you are right, not all of the binaries will be highly optimized, but as it relates to Google’s binaries, our intention is to make all of these architectures equally competitive. You are right, there is obviously a lag in the porting efforts on this software. But our ultimate goal is to get all of them on equal footing.

TPM: We know that Intel has already had early release on the Skylake Xeons, and we assume that some HPC shops and other hyperscalers and cloud builders like Google have early access to these processors already. So my guess is that you have been playing in the labs with Skylakes since maybe September or October last year, tops. When do you deploy internally at Google with Skylake and when do you deploy to the cloud?

Bart Sano: I can’t speak to the specifics internally, but what I can say is that the cloud will have Skylake in early 2017. That is all that I can really say with precision. But you would assume that we have had these in the labs and we will do a lot of testing before we made an announcement.

TPM: My guess is that Intel will launch in June or July, and that you can’t have them much before March in production on GCP, and that January or February of this year is just not possible. . . .

Bart Sano: We could make some bets. [Laughter]

TPM: Are you doing special SKUs of Skylake Xeons, or do you use stock CPUs?

Bart Sano: I can’t talk about SKUs and such, but what I can say is that we have Skylake. [Laughter]

TPM: AMD is obviously pleased that Google has endorsed its GPUs as accelerators. What is the nature of that deal?

Bart Sano: It is about choice, and what architecture is best for what workloads. We think there are cases where AMD will provide a good choice for our end customers. It is always good to have those options, and not everything fits onto one architecture, whether it is Intel, AMD, Nvidia or even our own TPUs. You said it best in that this is an explosion of diversity. Our position is that we should have as many of these architectures as possible as options for our customers and let competition choose which one is right for different customers.

TPM: Has Google developed its own internal framework that spans all of these different compute elements, or do you have different frameworks for each kind of compute? There is CUDA for Nvidia GPUs, obviously, and you can use ROCm from AMD to do CL or move CUDA onto its Polaris GPUs. There is TensorFlow for deep learning, and other frameworks. My assumption is that Google is smart about this, so prove me right.

Bart Sano: It is a challenge. For certain workloads, we can leverage common pipes. But for the end customers, there is an issue in that there are different stacks for the different architectures, and it is a challenge. We do have our own internal ways to try to get commonality so we are able to run more efficiently; at least from a programmer perspective, it is all taken care of by the software. I think that what you are pointing out is that if cloud customers have binaries and they need to run them, we have to be able to support that. That diversity is not going to go away.

We are trying to get the marketplace to get more standardization in that area, and in certain domains we are trying to abstract it out so it is not as big of an issue – for instance, with TensorFlow for machine learning. If that is adopted and we have Intel support TensorFlow, then you don’t have that much of a problem. It just becomes a matter of compilation and it is much more common.

TPM: What is Google’s thinking about the Knights family of processors and coprocessors, especially the current “Knights Landing” and the future “Knights Crest” for machine learning and “Knights Hill” for broader-based HPC?

Bart Sano: Like I said, we have a basic tenet that we do not turn anything away and that we have to look at every technology. That is why choice is so important. We want to choose wisely, because whatever we put into the infrastructure is going to go to not only our own internal customers, but the end customers of our cloud products. We look at all of these technologies and assess them according to total cost of ownership. Whether it is for search, ads, geo, or whatever internally or for the cloud, we are constantly assessing all technologies.

TPM: How do you manage that? Urs told me that Google has three different server designs each year coming out of the labs into production, and servers stay in production for several years. It seems to me that if you start adding more different kinds of compute, it will be more complex and expensive to build and support all of this diversity of machinery. If you have an abstraction layer in the software and a build process that lets applications be deployed to any type of compute, that makes it easier. But you still have an increasing number of types and configurations of machines. Doesn’t this make your manufacturing and supply chain more complex, too?

Bart Sano: You are right, having all of these different SKUs makes it difficult to handle. In any infrastructure, you have a mix of legacy versus current versus new stuff, and the software has to abstract that. There are layers in the software stack, including Borg internally or Kubernetes on the cloud as well as others.
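
As an editorial aside, the kind of abstraction Sano describes can be sketched in a few lines. This is not Borg or Kubernetes code, and it is not Google's method. It is a minimal illustration, assuming a hypothetical fleet in which each machine advertises its architecture, accelerators, and free cores, and each job states only what it needs. The names `Machine`, `Job`, `place`, and the example fleet are all invented for this sketch.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Machine:
    """One machine in a mixed fleet (hypothetical fields, for illustration)."""
    name: str
    arch: str                               # e.g. "x86", "power", "arm"
    accelerators: frozenset = frozenset()   # e.g. {"gpu"} or {"tpu"}
    free_cores: int = 0


@dataclass(frozen=True)
class Job:
    """A workload described by its constraints, not by a machine type."""
    name: str
    cores: int
    archs: frozenset                        # architectures it has binaries for
    needs: frozenset = frozenset()          # accelerators it requires


def place(job, fleet):
    """Return the first machine that satisfies the job's constraints, or None."""
    for machine in fleet:
        if (machine.arch in job.archs
                and job.needs <= machine.accelerators
                and machine.free_cores >= job.cores):
            return machine
    return None


# A made-up fleet mixing architectures and accelerator types.
fleet = [
    Machine("rack1-a", "x86", frozenset(), 16),
    Machine("rack1-b", "power", frozenset(), 32),
    Machine("rack2-a", "x86", frozenset({"gpu"}), 8),
]

web = Job("web", 4, frozenset({"x86", "power"}))
train = Job("train", 8, frozenset({"x86"}), frozenset({"gpu"}))

print(place(web, fleet).name)
print(place(train, fleet).name)
```

The point of the design is that the job never names a server SKU. A job with binaries for more than one architecture can land on whichever machine has room, which is the property that lets legacy, current, and new hardware coexist behind one scheduler.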

TPM: You can do a lot in the hardware, too, right? An ARM server based on ThunderX from Cavium has a similar BIOS and baseboard management controller as a Xeon server, and ditto for a “Zaius” Power9 machine like the one that Google is creating in conjunction with Rackspace Hosting. You can get the form factors the same, and then you are differentiating in other aspects of the system such as memory bandwidth or capacity. But we have to assume that the number of servers that Google is supporting is still growing as more types of compute are added to the infrastructure.

Bart Sano: The diversity is growing, and when we helped found the OpenPower consortium, we knew what that meant. And the implications are a heterogeneous environment and much more operational complexity. But this is the reality of the world that we are entering. If we are to be the solution to more than just the products of Google, we have to support this diversity. We are going into it with our eyes wide open.

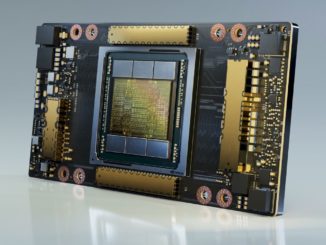

TPM: Everybody assumes that Google has lots of Nvidia GPUs for accelerating machine learning and other workloads, and now you have Radeon GPUs from AMD. Have you been doing this for a long time internally and now you are just exposing it on Cloud Platform?

Bart Sano: We have actually been doing the GPUs and the TPUs for a while, and we are now exposing them to the cloud. What became apparent is that the cloud customer wanted them. That was the question: would people want to come to the cloud for this sort of functionality?

The cloud infrastructure is not remarkably different from the internal Google infrastructure – and it should not be because we are trying to leverage the cost structures of both together and the lessons we learn from the Google businesses.

TPM: With the GPUs and TPUs, are you exposing them at an infrastructure level, where customers can address them directly like they would internally on their own iron, or are they exposed at a platform level, where customers buy a Google service that they just pour data into and run and they never get under the hood to see?

Bart Sano: The GPUs are exposed at more of an infrastructure level, where you have to see them to run binaries on them. It is not like a platform, and customers can pick whether they want Nvidia or AMD GPUs. They will be available attached to Compute Engine virtual machines, and for the Cloud Machine Learning services. For those who want to interact at that level, they can. We provide support for TPUs at a higher level, with our Vision API for image search service or Translation API for language translation, for example. They don’t really interact with TPUs, per se, but with the services that run on them.

TPM: What design does Google use to add GPUs to its servers? Does it look like an IBM Power Systems LC or Nvidia DGX-1 system? Do you have a different way of interconnecting and pooling GPUs than what people currently are doing? Have you adopted NVLink for lashing together GPUs?

Bart Sano: I would say that GPUs require more interconnection and cluster bandwidth. I can’t say that we are the same as the examples you are talking about, but what I can say is that we match the configuration so the GPUs are not starved for memory bandwidth and communication bandwidth. We have to architect these systems so they are not starved. As for NVLink, I can’t go into details like that.

TPM: I presume that there is a net gain with this increasing diversity. There is more complexity and lower volumes of any specific server type, but you can precisely tune workloads for specific hardware. We see it again and again that companies are tuning hardware to software and vice versa because general purpose, particularly at Google’s scale, is not working anymore. Unit costs rise, but you come out way ahead. Is that the way it looks to Google?

Bart Sano: That is the driver behind why we are providing these different kinds of computation. As for the size of the jump in price/performance, it is really different for different customers.
