When it comes to supercomputing, more is almost always better. More data and more compute – and more bandwidth to link the two – almost always result in a better set of models, whether they are descriptive or predictive. This has certainly been the case in weather forecasting, where the appetite for capacity to support more complex models of the atmosphere and the oceans and the integration of models running across different (and always increasing) resolutions never abates.

This is certainly the case with the National Oceanic and Atmospheric Administration, which does weather and climate forecasting on a regional, national, and global level for the United States and which feeds its forecasts to various organizations in the news, transportation, agricultural, and other interested industries that are affected by weather.

The United States arguably did not invest enough in weather forecasting for many years, but NOAA inked a ten-year, $502 million deal with IBM back in March 2011 to massively upgrade the supercomputers at the National Centers for Environmental Prediction (NCEP) facilities in Reston, Virginia and Orlando, Florida. In the wake of Hurricane Sandy in 2012, the investment increased, not just to build yet another larger pair of supercomputers, but to keep the pace of upgrades steady and predictable. The Weather and Climate Operational Supercomputing System, or WCOSS, was last upgraded two years ago with a pair of Cray XC40 machines, and just like clockwork, it is getting upgraded in early 2018 with a substantial performance boost that will improve the length and accuracy of forecasts here in the States.

IBM is still the system integrator for the WCOSS contract, but thanks to the sale of its System x business to Lenovo in 2014 and the fact that IBM moved NCEP off its Power architecture machines as part of the initial WCOSS contract, Big Blue did not have an X86 server business of its own and could not convince NCEP to move to Power-based machinery. When and if weather codes are accelerated by GPUs, it is possible that IBM may be able to pitch a hybrid Power CPU-Tesla GPU cluster to NCEP and win the deal. But this time around, in 2018, due to the nature of the NOAA simulations (which are not accelerated by GPUs in production) and the simplicity and economics of staying with X86 systems, IBM has tapped Dell to supply the servers for the pair of new WCOSS machines, dubbed “Mars” and “Venus,” and Mellanox Technologies to supply the InfiniBand network linking the nodes together.

With the addition of this pair of systems, the aggregate computing capacity of NOAA’s six production machines (three pairs) has now grown by roughly half, to 8.4 petaflops, and including research and development systems, the aggregate capacity of all the iron fired up in the NOAA datacenters (including some capacity it shares on the “Gaea” system at Oak Ridge National Laboratory) is now 16.5 petaflops.

The Path To Petaflops

Throughout the 1990s, NCEP facilities at NOAA were big on Cray Y-MP vector supercomputers for weather forecasting. But back in 2002, IBM won a deal to build NOAA’s supercomputers, and clusters using Power4 and Power5 processors were deployed in NOAA’s datacenters in Maryland and West Virginia. The two final Power-based clusters, called “Stratus” and “Cirrus,” went live in August 2009 and had 156 nodes of IBM’s Power6-based, water-cooled Power 575 machines linked by a 20 Gb/sec DDR InfiniBand network. These clusters had a total of 4,992 cores running at 4.7 GHz, but delivered a mere 73.1 teraflops.

With the initial WCOSS contract, a pair of machines was installed in NOAA datacenters in Reston, Virginia (the primary) and in Orlando, Florida (the backup). The primary datacenter runs the production workloads, which crank through the forecasting models and kick out the weather reports that organizations use to either tell us the forecast or make their own business plans and predictions.

The neat thing is that the backup systems don’t just sit there idle, waiting for a crash, David Michaud, director of the National Weather Service’s Office of Central Processing, tells The Next Platform. Rather, code that is making the transition from development to production is run side-by-side with the actual production code so it can be analyzed against that production code for its effectiveness in doing forecasts. If something bad happens and the primary site is taken offline, the research and production tasks are switched. Files are always replicated – using homegrown scripts, not a geographically dispersed extension of the General Parallel File System (GPFS) that NOAA uses for file storage on its clusters – between the datacenters, so this task switching is not a big deal.

The initial WCOSS cluster in the Virginia datacenter was called “Tide” and its mirror in the Orlando backup center was called “Gyre.” The machines processed 3.5 billion observations per day from various input devices – satellites, weather balloons, airplanes, buoys, and ground observing stations – used in weather forecasting and cranked out over 15 million simulations, models, reports, and other outputs per day. The WCOSS phase one machines, installed in July 2013, were rated at 167 teraflops peak at double precision floating point and were almost immediately upgraded with another 64 teraflops. In January 2015, IBM added a lot more iDataPlex nodes to Tide and Gyre, boosting the performance of each cluster by 599 teraflops, to a total of 830 teraflops of compute per cluster. The Tide and Gyre clusters run Red Hat Enterprise Linux and employ 56 Gb/sec FDR InfiniBand from Mellanox to lash the nodes together and IBM’s Platform Computing Load Sharing Facility (LSF) to schedule work on the two clusters.

Back in January 2016, as we reported in detail at the time, NOAA kicked off another upgrade cycle and spent $19.5 million of that WCOSS budget plus another $25 million it got in supplemental funding from the Disaster Relief Appropriations Act of 2013 that paid for the damages from Hurricane Sandy to add a pair of Cray XC40 systems to the Reston and Orlando datacenters, called “Luna” and “Surge,” respectively. These Cray machines employed the company’s “Aries” interconnect, not InfiniBand, and were built from 2,048 nodes apiece, with each node having a pair of either “Sandy Bridge” Xeon E5 or “Haswell” Xeon E5 v3 processors from Intel. The Luna and Surge clusters have 50,176 cores each and delivered 2.06 petaflops of peak performance. The machines were equipped with 2 GB per core of memory, which seems to be the way NOAA does it across many generations.

With the Mars and Venus upgrades based on Dell iron that IBM is integrating for NOAA, NCEP is getting a lot more compute in roughly 40 percent fewer nodes, and NCEP is switching away from Aries back to InfiniBand – a decision that was based mostly on the bang for the buck that the vendors submitting bids to IBM offered. (We don’t know who bid on the contract, but presumably Cray did to try to keep the account and probably Hewlett Packard Enterprise did, too, since NOAA has also used SGI iron in the past in its research and development systems.) The Dell clusters are based on enterprise-grade, rather than purpose-built, PowerEdge nodes. There are 1,212 nodes in each cluster, and each node has a pair of 14-core “Broadwell” Xeon E5 v4 processors from Intel, which run at 2.6 GHz. The nodes all have 2 GB per core of memory (which works out to only 56 GB in a machine with 28 cores), and are linked to each other using 100 Gb/sec EDR InfiniBand switches and server adapters from Mellanox. The Mars and Venus clusters are rated at 2.8 petaflops peak, with 33,936 cores each. That’s a lot fewer cores and considerably more oomph than in the prior Cray XC40 iron, thanks to Moore’s Law.
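The node, core, and memory figures above can be cross-checked with a little arithmetic. The sketch below uses only the numbers quoted in this article, plus one assumption of ours that NOAA and IBM did not state: that each Broadwell core retires 16 double precision floating point operations per clock (two 256-bit fused multiply-add units, four doubles each, two operations per fused multiply-add) at the nominal 2.6 GHz clock speed.

```python
# Figures quoted in the article for each Dell cluster (Mars or Venus).
NODES_PER_CLUSTER = 1_212
SOCKETS_PER_NODE = 2
CORES_PER_SOCKET = 14
CLOCK_GHZ = 2.6
MEMORY_GB_PER_CORE = 2

# ASSUMPTION (ours, not from NOAA or IBM): 16 double precision flops per
# core per clock, i.e. 2 FMA units x 4 doubles x 2 operations per FMA.
FLOPS_PER_CORE_PER_CLOCK = 16

cores_per_node = SOCKETS_PER_NODE * CORES_PER_SOCKET
cores_per_cluster = NODES_PER_CLUSTER * cores_per_node
memory_gb_per_node = cores_per_node * MEMORY_GB_PER_CORE

# GHz times flops per clock gives gigaflops per core; 1e6 gigaflops = 1 petaflops.
peak_pf_per_cluster = cores_per_cluster * CLOCK_GHZ * FLOPS_PER_CORE_PER_CLOCK / 1e6
peak_pf_pair = 2 * peak_pf_per_cluster

print(f"cores per cluster:   {cores_per_cluster:,}")
print(f"memory per node:     {memory_gb_per_node} GB")
print(f"peak per cluster:    {peak_pf_per_cluster:.2f} petaflops")
print(f"peak for both:       {peak_pf_pair:.2f} petaflops")
```

Under that assumption, each cluster works out to about 1.41 petaflops and the pair to about 2.82 petaflops, so the 2.8 petaflops rating appears to line up with Mars and Venus taken together.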

As for storage, NOAA is, as you might expect, sticking with GPFS and each machine is getting a dedicated 5.9 PB file system. All three pairs of GPFS storage clusters are interlinked with an additional InfiniBand network, which allows the machines in the Reston and Orlando datacenters to access any data stored adjacent to any of the three systems in each center. The total storage capacity of each center is now just under 14 PB.

That’s a roughly 50 percent increase in production compute against a 60 percent increase in production storage.

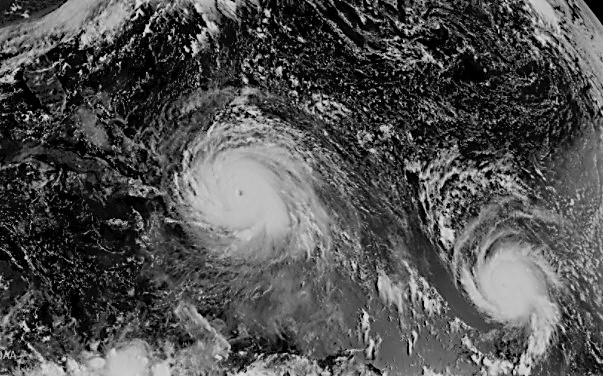

At the moment, the Global Forecast System created by NOAA and used by the National Weather Service to provide the basis for forecasting by other interested parties has a 384 hour (16-day) forecast that runs at 13 kilometer cell resolution with 64 levels; but to get the forecasts in a timely fashion, the forecast is only run at that resolution for the first ten days and then at a lower resolution for the following six. With the Mars and Venus upgrades, the 13 kilometer higher resolution will first be enabled to run for the full 16 days thanks to the increased floating point oomph, and then the next generation Global Forecast System, called the American Model in weather circles (and in contrast to the European Centre for Medium-Range Weather Forecasts, or European Model for short, that has been the best predictor of weather for a while) will be fired up by NOAA, delivering a resolution of 9 kilometer cells with 128 levels and a full 16-day forecast. That higher resolution American Model will be running on the backup NOAA systems in test mode throughout the hurricane season in 2018 – which starts on June 1 in the Atlantic Ocean and on May 15 in the eastern Pacific Ocean – and it is hoped that this higher resolution forecasting system will be tested and ready for production in 2019.
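To get a feel for why the finer grid needs so much more floating point oomph, here is a back-of-the-envelope sketch using the resolutions quoted above. It rests on simplifications of ours, not anything NOAA has said: that the number of horizontal cells scales with the inverse square of the cell size, that the work tracks the total number of grid points, and that any change in the model time step is ignored.

```python
# Figures quoted in the article.
CURRENT_CELL_KM, CURRENT_LEVELS = 13, 64
NEXT_CELL_KM, NEXT_LEVELS = 9, 128
FORECAST_HOURS = 384

forecast_days = FORECAST_HOURS / 24

# ASSUMPTION (ours): horizontal cell count scales with 1 / cell_size**2.
horizontal_factor = (CURRENT_CELL_KM / NEXT_CELL_KM) ** 2
vertical_factor = NEXT_LEVELS / CURRENT_LEVELS

# ASSUMPTION (ours): work tracks grid point count; time step changes ignored.
grid_point_factor = horizontal_factor * vertical_factor

print(f"forecast length:          {forecast_days:.0f} days")
print(f"horizontal cell increase: {horizontal_factor:.2f}x")
print(f"vertical level increase:  {vertical_factor:.0f}x")
print(f"total grid point increase: {grid_point_factor:.2f}x")
```

By this rough measure, the 9 kilometer, 128 level model has a little over four times as many grid points as the 13 kilometer, 64 level model, and a shorter time step at the finer resolution would push the compute requirement higher still.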

We were curious as to why NCEP would go with Broadwell processors instead of “Skylake” Xeon SP processors, especially considering the fact that the Skylakes came with AVX-512 vector math units, which are twice as wide and, therefore, in theory capable of processing twice as many math operations per clock as the AVX2 units in the Broadwell chips. Without getting into detail, Michaud simply said that when IBM shopped out the iron for the deal, the Broadwell chips provided the best bang for the buck and that is why they were chosen. We think that many other HPC shops are doing similar math, especially considering the hefty premium that Intel is charging for processing and memory capacity with the Skylake chips. The other factor, according to Michaud, was the availability of Skylake processors, and when the WCOSS phase three bidding was being done, the timing on the Skylake chips was apparently less certain.
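The "twice as wide" point comes straight from the register sizes, as this small sketch shows. The 256-bit and 512-bit vector widths are the architectural sizes for AVX2 and AVX-512; the comparison deliberately ignores clock speeds, the number of vector units per core, and memory bandwidth, all of which affect what an application actually sees.

```python
DOUBLE_BITS = 64    # size of one double precision value
AVX2_BITS = 256     # vector register width with AVX2 (Broadwell)
AVX512_BITS = 512   # vector register width with AVX-512 (Skylake)

# Number of double precision values processed per vector instruction.
avx2_lanes = AVX2_BITS // DOUBLE_BITS
avx512_lanes = AVX512_BITS // DOUBLE_BITS

# Theoretical per-instruction advantage only, not a measured speedup.
theoretical_ratio = avx512_lanes / avx2_lanes

print(f"AVX2 doubles per instruction:    {avx2_lanes}")
print(f"AVX-512 doubles per instruction: {avx512_lanes}")
print(f"theoretical ratio:               {theoretical_ratio:.0f}x")
```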

We were also curious about when – or if – NOAA might be moving its forecasting models to GPU accelerated nodes, given the hard work done on porting codes to GPUs in the past few years, particularly in Europe but also with IBM working with the National Center for Atmospheric Research to develop a new community model for weather, possibly accelerated by GPUs and tuned for Power processors as you might expect from Big Blue.

“Within NOAA, we certainly are looking at GPUs,” Michaud says, pointing out that NOAA has a research cluster called “Theia” that weighs in at 2 petaflops, a hybrid CPU-GPU machine based on Nvidia’s prior generation of “Pascal” GPU accelerators. “Our research labs have been doing some work on how to best use novel architectures. To date, we do not have a comprehensive suite configured in such a way to take advantage of GPUs in an operational environment, but we are keeping an eye towards that. We are not unique in this, and whether numerical weather prediction codes in general can benefit, it is a challenging problem to run on architectures like that. It is difficult to get the kind of performance improvements to make it a reasonable investment.”

That said, some things have to be tested at scale, and hence the 2 petaflops research machine in Fairmont, West Virginia, which would rank relatively high on the Top 500 list if the Linpack Fortran benchmark was ever run on it. Like, as high as the prior generation Luna and Surge systems, for instance, which ranked 80th and 81st on the November 2017 list.

Mars and Venus will be operational at the NCEP centers by the end of this month, and hopefully the weather forecasts will get that much better.
