Professor Satoshi Matsuoka of the Tokyo Institute of Technology (Tokyo Tech) researches and designs large-scale supercomputers and similar infrastructures. More recently, he has worked on the convergence of big data, machine/deep learning, and AI with traditional HPC, and has investigated post-Moore technologies looking toward 2025.

He has designed supercomputers for years and has collaborated on projects involving basic elements of current and, more importantly, future exascale systems. I talked with him recently about his work with the Tsubame supercomputers at Tokyo Tech. This is the first in a two-part article. For background on the Tsubame 3 system we have an in-depth article here from earlier this year.

TNP: Your new Tsubame 3 supercomputer is quite a heterogeneous architecture of technologies. Have you always built heterogeneous machines?

Matsuoka: I’ve been building clusters for about 20 years now—from research to production clusters—all in various generations, sizes, and forms. We built our first very large-scale production cluster for Tokyo Tech’s supercomputing center back in 2006. We called it Tsubame 1, and it beat the then fastest supercomputer in Japan, the Earth Simulator.

We built Tsubame 1 as a general-purpose cluster rather than a dedicated, specialized system like the Earth Simulator. But even as a cluster, it outperformed the Earth Simulator on various metrics, including the Top 500, for the first time in Japan. It instantly became the fastest supercomputer in the country, and held that position for the next two years.

I think we are the pioneer of heterogeneous computing. Tsubame 1 was a heterogeneous cluster, because it had some of the earliest incarnations of accelerators. Not GPUs, but a more dedicated accelerator called ClearSpeed. And, although they had a minor impact, they did help boost some application performance. From that experience, we realized that heterogeneous computing with acceleration was the way to go.

TNP: You seem to also be a pioneer in power efficiency with three wins on the Green 500 list. Congratulations. Can you elaborate a little about it?

Matsuoka: As we were designing Tsubame 1, it was very clear that, to hit the next target of performance for Tsubame 2, which we anticipated would come in 2010, we would also need to plan on reducing overall power. We’ve been doing a lot of research in power-efficient computing. At that time, we had tested various methodologies for saving power while also hitting our performance targets. By 2008, we had tried using small, low-power processors in lab experiments. But, it was very clear that those types of methodologies would not work. To build a high-performance supercomputer that was very green, we needed some sort of a large accelerator chip to accompany the main processor, which is x86.

We knew that the accelerator would have to be a many-core architecture chip, and GPUs were finally becoming usable as a programming device. So, in 2008, we worked with Nvidia to populate Tsubame 1 with 648 third-generation Tesla GPUs. And, we got very good results on many of our applications. So, in 2010, we built Tsubame 2 as a fully heterogeneous supercomputer. This was the first peta-scale system in Japan. It became #1 in Japan and #4 in the world, proving the success of a heterogeneous architecture. But, it was also one of the greenest machines at #3 on the Green 500, and the top production machine on the Green 500. The leading two in 2010 were prototype machines. We won the Gordon Bell prize in 2011 for the configuration, and we received many other awards and accolades.

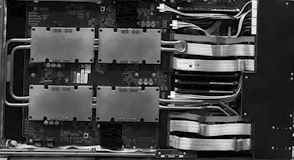

It was natural that when we were designing Tsubame 3, we would continue our heterogeneous computing and power efficiency efforts. So, Tsubame 3 is the second-generation, large-scale production heterogeneous machine at Tokyo Tech. It contains 540 nodes, each with four Nvidia Tesla P100 GPUs (2,160 total), two 14-core Intel Xeon Processor E5-2680 v4 (15,120 cores total), two dual-port Intel Omni-Path Architecture (Intel OPA) 100 Series host fabric adapters (2,160 ports total), and 2 TB of Intel SSD DC Product Family for NVMe storage devices, all in an HPE Apollo 8600 blade, which is smaller than a 1U server.
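The system-wide totals quoted above follow directly from the per-node configuration. As a minimal sketch (the constant and function names here are illustrative, not from any Tsubame tooling), the arithmetic can be checked like this:

```python
# Per-node configuration as described in the interview.
NODES = 540
GPUS_PER_NODE = 4        # Nvidia Tesla P100
CPUS_PER_NODE = 2        # Intel Xeon E5-2680 v4
CORES_PER_CPU = 14
HFAS_PER_NODE = 2        # dual-port Intel OPA host fabric adapters
PORTS_PER_HFA = 2


def system_totals(nodes: int = NODES) -> dict:
    """Return system-wide component counts for the given number of nodes."""
    return {
        "gpus": nodes * GPUS_PER_NODE,
        "cpu_cores": nodes * CPUS_PER_NODE * CORES_PER_CPU,
        "opa_ports": nodes * HFAS_PER_NODE * PORTS_PER_HFA,
    }


if __name__ == "__main__":
    for name, count in system_totals().items():
        print(f"{name}: {count:,}")
```

The GPU count and the Omni-Path port count come out equal, which works out to one 100-gigabit port per GPU.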

A lot of the enhancements that went into the machine are specifically to make it more efficient as well as high performing. The result is that Tsubame 3 (although at the time of measurement for the June 2017 lists we ran on only a small subset of the full configuration) is #61 on the Top 500 and #1 on the Green 500 with 14.11 gigaflops/watt, an Rmax of just under 2 petaflops, and a theoretical peak of over 3 petaflops. Tsubame 3 became operational on August 1 with its full 12.1-petaflops configuration, and we hope to have scores for the full configuration for the November benchmark lists, including the Top 500 and the Green 500.

TNP: Tsubame 3 is not only a heterogeneous machine, but built with a novel interconnect architecture. Why did you choose the architecture in Tsubame 3?

Matsuoka: That is one area where Tsubame 3 is different, because it touches on the design principles of the machine. With Tsubame 2, many applications experienced bottlenecks, because they couldn’t fully utilize all the interconnect capability in the node. As we were designing Tsubame 3, we took a different approach. Obviously, we were planning on a 100-gigabit inter-node interconnect, but we also needed to think beyond just speed considerations and beyond just the node-to-node interconnect. We needed massive interconnect capability, considering we had six very high-performance processors that supported a wide range of workloads, from traditional HPC simulation to big data analytics and artificial intelligence, all potentially running as co-located workloads.

For the network, we learned from the Earth Simulator back in 2002 that to maintain application efficiency, we needed to sustain a good ratio between memory bandwidth and injection bandwidth. For the Earth Simulator, that ratio was about 20:1. So, over the years, I’ve tried to maintain a similar ratio in the clusters we’ve built, or set 20:1 as a goal if it was not possible to reach it. Of course, we also needed to have high bisection bandwidth for many workloads.
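To make the 20:1 rule of thumb concrete, here is a small sketch of how such a ratio could be evaluated. The function names are hypothetical, and the 1,000 GB/s memory bandwidth in the example is a placeholder chosen for round numbers, not a measured Tsubame figure.

```python
def bandwidth_ratio(memory_gb_s: float, injection_gb_s: float) -> float:
    """Memory bandwidth divided by network injection bandwidth (both in GB/s)."""
    if injection_gb_s <= 0:
        raise ValueError("injection bandwidth must be positive")
    return memory_gb_s / injection_gb_s


def injection_needed(memory_gb_s: float, target_ratio: float = 20.0) -> float:
    """Injection bandwidth (GB/s) needed to hold memory:injection at target_ratio."""
    if target_ratio <= 0:
        raise ValueError("target ratio must be positive")
    return memory_gb_s / target_ratio


if __name__ == "__main__":
    memory = 1000.0  # hypothetical aggregate node memory bandwidth, GB/s
    needed = injection_needed(memory)
    print(f"injection needed for 20:1: {needed} GB/s")
    print(f"resulting ratio: {bandwidth_ratio(memory, needed)}:1")
```

Under that placeholder assumption, a node would need 50 GB/s of injection bandwidth, which is what four 100-gigabit ports provide in aggregate.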

Today’s processors, both the CPUs and GPUs, have significantly accelerated FLOPS and memory bandwidth. For Tsubame 3, we were anticipating certain metrics of memory bandwidth in our new GPU, plus, the four GPUs were connected in their own network. So, we required a network that would have a significant injection bandwidth. Our solution was to use multiple interconnect rails. We wanted at least one 100 gigabit injection port per GPU, if not more.

For high PCIe throughput, instead of running everything through the processors, we decided to go with a direct-attached architecture using PCIe switches between the GPUs, CPUs, and Intel OPA host adapters. So, we have full PCIe bandwidth between all devices in the node. Then, the GPUs have their own interconnect between themselves. That’s three different interconnects within a single node.

If you look at the bandwidth of these links, they’re not all that different. Intel OPA is 100 gigabits/s, or 12.5 GB/s. PCIe is 16 GB/s. NVLink is 20 GB/s. So, there’s less than a 2:1 difference between the bandwidth of these links. As much as possible we are fully switched within the node, so we have full bandwidth point to point across interconnected components. That means that under normal circumstances, any two components within the system, be it processor, GPU, or storage, are fully connected at a minimum of 12.5 GB/s. We believe that this will serve our Tsubame 2 workloads very well and support new, emerging applications in artificial intelligence and other big data analytics.
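The comparison of the three link types can be worked through in a few lines. This is only a sketch using the per-link figures quoted above; the dictionary and helper names are illustrative.

```python
def gbit_to_gbyte(gbit_per_s: float) -> float:
    """Convert gigabits per second to gigabytes per second (8 bits per byte)."""
    return gbit_per_s / 8


# Per-link bandwidths in GB/s, as quoted in the interview.
LINKS_GB_S = {
    "Intel OPA": gbit_to_gbyte(100),  # 100 gigabits/s
    "PCIe": 16.0,
    "NVLink": 20.0,
}


def spread(links: dict) -> float:
    """Ratio between the fastest and slowest link."""
    return max(links.values()) / min(links.values())


if __name__ == "__main__":
    for name, gb_s in LINKS_GB_S.items():
        print(f"{name}: {gb_s} GB/s")
    print(f"fastest:slowest = {spread(LINKS_GB_S):.2f}:1")
    print(f"minimum point-to-point = {min(LINKS_GB_S.values())} GB/s")
```

The fastest link (NVLink) is 1.6 times the slowest (Intel OPA), which is the "less than 2:1" spread, and the slowest link sets the 12.5 GB/s floor.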

TNP: Why did you go with the Intel Omni-Path fabric?

Matsuoka: As I mentioned, we always focus on power as well as performance. With a very extensive fabric and a high number of ports and optical cables, power was a key consideration. We worked with our vendor, HPE, to run many tests. The Intel OPA host fabric adapter proved to run at lower power compared to InfiniBand. But, as important, if not more important, was thermal stability. In Tsubame 2, we experienced some issues around interconnect instability over its long operational period. Tsubame 3 nodes are very dense with a lot of high-power devices, so we wanted to make sure we had a very stable system.

A third consideration was Intel OPA’s adaptive routing capability. We’ve run some of our own limited-scale tests. And, although we haven’t tested it extensively at scale, we saw results from the University of Tokyo’s very large Oakforest-PACS machine with Intel OPA. Those indicate that the adaptive routing of OPA works very, very well. And, this is critically important, because one of our biggest pain points of Tsubame 2 was the lack of proper adaptive routing, especially when dealing with degenerative effects of optical cable aging. Over time, active optical cables (AOCs) die, and there is some delay between detecting a bad cable and replacing it or deprecating it. We anticipated Intel OPA, with its end-to-end adaptive routing, would help us a lot. So, all of these effects combined gave the edge to Intel OPA. It wasn’t just the speed. There were many more salient points by which we chose the Intel fabric.

TNP: With this multi-interconnect architecture, will you have to do a lot of software optimization for the different interconnects?

Matsuoka: In an ideal world, we will have only one interconnect, everything will be switched, and all the protocols will be hidden underneath an existing software stack. But, this machine is very new, and the fact that we have three different interconnects reflects the reality within the system. Currently, except for very few cases, there is no comprehensive catchall software stack to allow all of these to be exploited at the same time. There are some limited cases where this is covered, but not for everything. So, we do need the software to exploit all the capability of the network, including turning on and configuring some appropriate DMA engines, or some pass through, because with Intel OPA you need some CPU involvement for portions of the processing.

So, getting everything to work in sync to allow for this all-to-all connectivity will require some work. That’s the nature of the research portion of our work on Tsubame 3. But, we are also collaborating with others, such as a team at The Ohio State University.

We have to work with some algorithms to deal with this connectivity, because it goes both horizontally and vertically. The algorithms have to adapt. We do have several ongoing efforts, but we need to generalize this to be able to exploit the characteristics of both horizontal and vertical communications between the nodes and the memory hierarchy. So far, it’s very promising. Even out of the box, we think the machine will work very well. But as we enhance the software portion of the capabilities, we believe the efficiency of the machine will become higher as we go along.

In the next article in this two-part series later this week, Professor Matsuoka talks about co-located workloads on Tsubame 3.

Ken Strandberg is a technical storyteller. He writes articles, white papers, seminars, web-based training, video and animation scripts, and technical marketing and interactive collateral for emerging technology companies, Fortune 100 enterprises, and multi-national corporations. Mr. Strandberg’s technology areas include Software, HPC, Industrial Technologies, Design Automation, Networking, Medical Technologies, Semiconductor, and Telecom. He can be reached at ken@catlowcommunications.com.
