Over the last couple of years, much work has gone into making FPGAs more usable and accessible. From building around OpenCL for a higher-level interface to having reconfigurable devices available on AWS, there is momentum, but FPGAs are still far from the grasp of everyday scientific and technical application developers.

In an effort to bridge the gap between FPGA acceleration and everyday domain scientists who are well-versed in the common scripting language, a team from the University of Southern California has created a new platform for Python-based development that abstracts away the complexity of low-level approaches (HDLs such as Verilog). “Rather than drive FPGA development from the hardware up, we consider the impact of leveraging Python to accelerate application development,” explains one of the project leads, USC’s Andrew Schmidt.

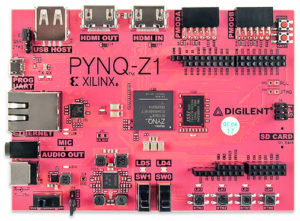

Schmidt’s team used PYNQ, a Python development environment for the Xilinx Zynq boards. “Python has a rich ecosystem of libraries and applications that the communities have developed. We can replace those lower level functions with FPGA implementations and tell the device that instead of calling, for example, function A, you’re calling function A-prime. We wanted to understand how painful it would be for developers, and of course, what the overhead would be of using Python to punch through to the hardware: how much performance degradation, latency, and memory problems it might cause,” Schmidt tells The Next Platform.
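The "function A" to "function A-prime" swap Schmidt describes can be pictured with a short sketch. This is not the team's code and it does not use the PYNQ API; the registry, the decorator names, and `vector_add` are all hypothetical, and the "hardware" version is a plain-Python stand-in for a call into FPGA logic.

```python
from functools import wraps
from typing import Callable, Dict, List

# Hypothetical registry: software function name -> accelerated replacement.
_ACCELERATED: Dict[str, Callable] = {}


def accelerated_by(name: str) -> Callable:
    """Register the decorated function as the replacement for `name`."""
    def register(func: Callable) -> Callable:
        _ACCELERATED[name] = func
        return func
    return register


def dispatch(func: Callable) -> Callable:
    """Call the registered replacement if one exists, else the original."""
    @wraps(func)
    def wrapper(*args, **kwargs):
        impl = _ACCELERATED.get(func.__name__, func)
        return impl(*args, **kwargs)
    return wrapper


calls: List[str] = []  # records which implementation ran (illustration only)


@dispatch
def vector_add(a: List[int], b: List[int]) -> List[int]:
    # "Function A": the ordinary software implementation.
    calls.append("software")
    return [x + y for x, y in zip(a, b)]


@accelerated_by("vector_add")
def vector_add_prime(a: List[int], b: List[int]) -> List[int]:
    # "Function A-prime": stand-in for handing the work to FPGA logic.
    calls.append("hardware")
    return [x + y for x, y in zip(a, b)]


result = vector_add([1, 2], [3, 4])  # caller still uses the original name
```

The caller keeps using the familiar function name; only the lookup table decides which implementation runs, so application code would not need to change when an accelerated version becomes available.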

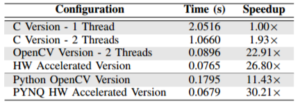

The bottlenecks and latencies were not as bad as one might expect. After all, there are already many who say even OpenCL introduces far too much overhead to be useful in a high performance environment. Using the OpenCV computer vision library, Schmidt and team worked on using Python on FPGAs for a NASA JPL edge detection project to help a rover avoid rocks and barriers. Schmidt says the results were very promising, with the ability to exceed the performance of C implementations by 30X. He adds that with so many efficient Python libraries beyond OpenCV, there are far more options for FPGA acceleration with a Python springboard that might provide similar boosts over C-only approaches.
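For readers unfamiliar with edge detection, here is a minimal pure-Python sketch of the kind of kernel involved. The article does not say which algorithm the team used; the Sobel operator, the `sobel_edges` name, and the threshold value are assumptions chosen for illustration, and the code uses neither OpenCV nor any FPGA.

```python
from typing import List

Image = List[List[int]]  # grayscale image as rows of pixel intensities

# Standard 3x3 Sobel kernels for horizontal and vertical gradients.
SOBEL_X = [[-1, 0, 1], [-2, 0, 2], [-1, 0, 1]]
SOBEL_Y = [[-1, -2, -1], [0, 0, 0], [1, 2, 1]]


def sobel_edges(img: Image, threshold: int) -> Image:
    """Return a binary edge map; border pixels are left at 0."""
    height, width = len(img), len(img[0])
    out = [[0] * width for _ in range(height)]
    for y in range(1, height - 1):
        for x in range(1, width - 1):
            gx = gy = 0
            for dy in range(3):
                for dx in range(3):
                    pixel = img[y + dy - 1][x + dx - 1]
                    gx += SOBEL_X[dy][dx] * pixel
                    gy += SOBEL_Y[dy][dx] * pixel
            # |gx| + |gy| is a cheap approximation of gradient magnitude.
            if abs(gx) + abs(gy) > threshold:
                out[y][x] = 1
    return out


# Tiny synthetic frame: dark on the left, bright on the right.
frame = [[0, 0, 255, 255, 255] for _ in range(5)]
edges = sobel_edges(frame, threshold=200)
```

Every output pixel depends only on a small fixed neighborhood, which is why this kind of loop nest maps well onto parallel hardware and runs slowly when written as interpreted Python loops.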

The NASA work using OpenCV took less than a day to get running with good results, but getting the baseline hardware libraries in place was not as speedy. That took the team six months. With that hardware core done, however, developers can drop it in and adapt the Python code, with all of its debuggers and profilers, and get results faster than with C on Linux-based systems. In short, they can change the hardware configuration and design without changing the C code. The goal is to make all of this portable to higher-end devices, since it is essentially a hardware API, something that could be very useful for Amazon and its F1 instances in the future.

Schmidt says the logical next step is to port the same concept to higher-performing FPGA devices for use in larger-scale server environments with multiple FPGAs and a Python base. He points to the new Xilinx Zynq UltraScale+ FPGA, which has four ARM A53 application processors and two ARM R5 real-time processors, as being particularly attractive for scientific and technical computing users who might want a Python interface for FPGA acceleration.

He notes that as software developers embrace Python for ease of programming, naïve ports or implementations can yield terrible performance. “A straight port of the C version of the edge detector was implemented in Python and resulted in running 334.8X slower than the C version. Even though the port took less than an hour, it was meant to highlight the importance of using Python’s extremely large communities of libraries, analysis tools, and debuggers.” He says it was simple to get the Python-based OpenCV up and running on the ARM A9 cores for an 11.43X speedup over the C version and an outstanding 3,826.9X speedup over the straight Python port.
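The figures in this paragraph are tied together by simple division, which a few lines of Python can check. This assumes all of them were measured on the same workload and platform, which the article implies but does not state; the variable names are ours.

```python
# Speedups of the Python-based OpenCV version, as quoted in the article.
opencv_vs_c = 11.43              # relative to the C version
opencv_vs_naive_port = 3826.9    # relative to the straight Python port

# If both ratios share the same OpenCV baseline, dividing them gives the
# factor by which the straight Python port trails the C version.
naive_port_slowdown_vs_c = opencv_vs_naive_port / opencv_vs_c

print(f"straight port vs. C: {naive_port_slowdown_vs_c:.1f}X slower")
```

The result is a slowdown factor in the hundreds, a multiple rather than a percentage, which puts the cost of a line-by-line port in perspective against the gain from calling an optimized library.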

While the work is promising and right in line with what Xilinx expected when it released the PYNQ boards to provide a wider potential base for FPGA use, there is still a long road ahead. There is still a very FPGA-driven development flow component to this work. Until there is a nice, rich set of libraries to download and add to the design, users are still going to be beholden to this flow of building bitstreams rather than taking a library and calling it, using functions, and so on. A bitstream is device and location specific, which introduces some constraints, but this is at least a start. With the PYNQ boards in the hands of more developers who can start building an ecosystem around them, similar to what happened with Raspberry Pi, the approach does offer the promise of wider FPGA adoption, even if it is still missing the usability matched with performance that Schmidt and team are continuing to strive toward.

Very interesting, I quite like Python as a language, especially for rapid prototyping. Considering that in situations of hardware construction, the speed isn’t really an issue, this is a perfect example of where it could be useful.

Perhaps someone could make something similar to Chisel (possibly even running on top of it) that uses Python as the prime language?

Very interesting approach and useful comparison.

We work on a similar project trying to accelerate Spark applications using Python and offloading the most computationally intensive functions to the programmable logic of PYNQ.

The goal is to utilize the accelerators seamlessly, without any change to the original Spark-pynq code, using a library of accelerators. https://github.com/vineyard2020/SPynq