Security is one of those necessary things that should not be an afterthought, but often is, and ideally it is so invisible that it doesn’t get in the way of applications and the infrastructure they run on. Speaking very generally, the cost of security on servers has been so high for so many years that even the hyperscalers and cloud builders could not deploy it throughout their infrastructure. And in many cases, one reason the IBM mainframe persists in the financial, insurance, and healthcare industries is that legendary security is built into the very platform. And they pay a hefty premium for that.

Over the two decades that it has been pursuing the datacenter budget with its Xeon processors and related server platforms, Intel has done a lot to bolster the security of the platform, but with the forthcoming “Ice Lake” Xeon SP processors, Intel is providing a step function improvement in security in the datacenter.

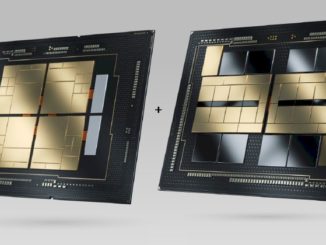

This is good news for the Ice Lake Xeon SP processor and its companion “Whitley” server platform, which are going to need every advantage they can get to compete against AMD’s “Rome” and “Milan” Epyc X86 server processors and the future Marvell ThunderX3 and Ampere Computing Altra Arm server processors in the datacenter. As it turns out, the boosted security in Ice Lake Xeon SPs might be the real reason that companies buy server CPUs from Intel now rather than wait for the future 10 nanometer “Sapphire Rapids” Xeon SPs due next year or the 7 nanometer “Granite Rapids” Xeon SPs coming the year after that.

In fact, the slippage of the 7 nanometer manufacturing process at Intel, which was announced in July, means that the impending Ice Lake and Sapphire Rapids Xeon SP processors are now going to have a longer lifespan. Among other things, this means server vendors have more time to sell servers based on Ice Lake chips, and therefore recoup their investments. So does Intel, for that matter. But these new security features coming with the Ice Lake Xeon SPs, which Intel quietly announced today and which we presume are being created largely at the behest of the hyperscalers and cloud builders, are going to give service provider and large enterprise customers more time to see how the boosted security features fit into their datacenters.

Ice Lake server chips have had an ever-shrinking window of opportunity as Intel’s 10 nanometer processes were constantly being shifted out. We discussed this in detail back in August when some of the details of the Ice Lake Xeon SP processors were unveiled at the Hot Chips 32 virtual conference. When the “Cooper Lake” Xeon SPs were launched for machines with four or eight sockets in June of this year, Intel showed a roadmap that had Ice Lake Xeon SPs coming this year on the “Whitley” platform, and we all presumed it was going to be in the fourth quarter, with Sapphire Rapids following relatively fast in 2021. Like this:

You always have to be careful with server CPU roadmaps because “ship for revenue” is not the same thing as “announcement date” or “generally available.” So before we get into the new security features coming with Ice Lake Xeon SPs, let’s clear this up. Intel has said – and this roadmap corroborates – that revenue shipments for the Ice Lake Xeon SPs will happen in the fourth quarter of 2020. But the actual announcement, and therefore the general availability of the Ice Lake Xeon SP, will not happen until early in 2021. So both OEMs and their enterprise customers have even more time to contemplate what Intel is doing.

The security enhancements were a kind of soft launch by Intel just to keep people thinking about Xeon SPs at a time when AMD is making a little hay with its “confidential computing” approach, being spearheaded with the help of Google Cloud. Intel is teaming up with Microsoft Azure in making its announcements, but don’t read too much into it. In the long run, the security features that AMD has already brought out and that Intel will be bringing out will eventually be table stakes for cloud computing.

We are working on a story about the confidential computing effort between AMD and Google as this news broke, but it bears repeating some of the interesting things that AMD has done with regard to security, starting with the first generation “Naples” Epyc 7001s. These chips had a 32-bit Arm Cortex-A5 processor on the Naples package, which generates secure cryptographic keys for locking down certain data within the system and manages those keys as they are distributed. As a baseline, this secure processor allows for hardware-validated boot, which means it can take control of the cores as they are loaded up with operating system kernels and make sure their data comes from a trusted place and has not been tampered with. The secure processor can interface with off-chip non-volatile storage, where firmware and other data is held, and it encrypts the boot loader and the UEFI interface between the processor and the firmware. The secure processor has its own isolated on-chip read-only memory (ROM) and static RAM (SRAM), and it also has a chunk of DRAM main memory allocated to it that is not accessible by the cores on the Epyc 7000 chip and is also encrypted. This security processor is used to provide Secure Memory Encryption (SME), which encrypts main memory, and Secure Encrypted Virtualization (SEV), which encrypts the memory of each virtual machine with its own key, better insulating both from attack.
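To make the idea of hardware-validated boot a little more concrete, here is a toy chain of trust in Python. It is our own simplification, not AMD’s firmware: real implementations verify cryptographic signatures, whereas this sketch uses bare SHA-256 digests, and the names `BootStage`, `measure`, `build_chain`, and `verified_boot` are hypothetical. The “anchor” digest stands in for what would live in the secure processor’s on-chip ROM, and each stage carries the digest it expects of the next one.

```python
import hashlib
from dataclasses import dataclass
from typing import List, Tuple


@dataclass
class BootStage:
    """One link in a hypothetical boot chain (boot loader, UEFI, OS kernel...)."""
    name: str
    code: bytes
    next_digest: str  # digest this stage expects of its successor ("" for the last)


def measure(stage: BootStage) -> str:
    # Hash the code *and* the embedded successor digest, so neither can be
    # swapped out without changing this stage's own measurement.
    return hashlib.sha256(stage.code + stage.next_digest.encode()).hexdigest()


def build_chain(images: List[Tuple[str, bytes]]) -> Tuple[str, List[BootStage]]:
    """Build stages back to front so each one embeds the digest of the next.

    Returns (anchor, stages); the anchor plays the role of the digest that
    would be burned into the secure processor's read-only memory.
    """
    stages: List[BootStage] = []
    next_digest = ""
    for name, code in reversed(images):
        stage = BootStage(name, code, next_digest)
        stages.insert(0, stage)
        next_digest = measure(stage)
    return next_digest, stages


def verified_boot(anchor: str, stages: List[BootStage]) -> List[str]:
    """Hand control to each stage only if it matches what its predecessor vouched for."""
    expected = anchor
    booted = []
    for stage in stages:
        if measure(stage) != expected:
            raise RuntimeError(f"{stage.name}: measurement mismatch, halting boot")
        booted.append(stage.name)
        expected = stage.next_digest  # this stage now vouches for the next one
    return booted


if __name__ == "__main__":
    anchor, chain = build_chain(
        [("boot loader", b"loader"), ("uefi", b"firmware"), ("kernel", b"kernel")]
    )
    print(verified_boot(anchor, chain))  # ['boot loader', 'uefi', 'kernel']
    chain[1].code = b"tampered firmware"
    try:
        verified_boot(anchor, chain)
    except RuntimeError as err:
        print(err)  # uefi: measurement mismatch, halting boot
```

The point of the design is that only the first digest has to be immutable: once it lives in ROM, every later stage is checked by something that was itself checked.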

These features were improved upon in the second generation “Rome” Epyc 7002 processors, and we expect that the impending “Milan” Epyc 7003s, due by the end of the year like the Ice Lake Xeon SPs and including the Zen 3 cores that were just unveiled in desktop and laptop chips this week, will have security improvements as well.

Starting with the “Sunny Cove” cores in the Ice Lake Xeon SPs, Intel will be pulling the Software Guard Extensions (SGX) from its client devices into the mainstream server processors for the first time. The entry Xeon E3 processors from 2015 through 2018, which are slightly modified Core desktop processors, and the more recent Xeon E series from 2019, also modified Core chips, supported SGX, which encrypts the memory allocated to an application’s code and data. But SGX is not transparent, and it requires applications to be tweaked and recompiled to use it, unlike the AMD SME and SEV features, which work behind the scenes on all data in memory and on the memory of each guest virtual machine. The SGX feature coming with Ice Lake Xeon SPs will support up to 1 TB of application code and data in its private memory regions, called enclaves.

Intel is also touting that the Ice Lake Xeon SPs will have full memory encryption, as the AMD Epycs already do and as IBM’s forthcoming Power10 processor, due in the fall of 2021, will as well. Intel is calling this Total Memory Encryption, or TME for short, and it uses the AES-XTS encryption algorithm to scramble the data in memory. The odds of someone freezing server DIMMs with liquid nitrogen, removing them, and then reading the data are pretty small, but why not encrypt everything all the time? (Why stop at DRAM? Why not at L3, L2, and L1 cache?) Intel is also talking up how it can double-pump encryption algorithms through its on-die encryption engines to accelerate the performance of these operations, much as it has long pumped up its vector floating point engines to do lots of 32-bit and 64-bit – and now 16-bit or 8-bit – math operations side by side.
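For the curious, here is a minimal sketch of how the XTS mode in AES-XTS works, in Python using only the standard library. Two loud caveats: the block cipher is a stand-in (a four-round Feistel network built on HMAC-SHA256), not AES, and we assume the tweak is derived from the memory address of each cache line, which is the usual way XTS gets applied to storage sectors and memory; Intel’s exact tweak construction is not described here. The XTS structure itself (a tweak encrypted under a second key, XORed before and after the block cipher, and multiplied by x in GF(2^128) for each successive 16-byte block) follows IEEE 1619. All function names are hypothetical.

```python
import hashlib
import hmac

BLOCK = 16  # XTS operates on 128-bit blocks
ROUNDS = 4


def _xor(a: bytes, b: bytes) -> bytes:
    return bytes(x ^ y for x, y in zip(a, b))


def _round(key: bytes, i: int, half: bytes) -> bytes:
    # Feistel round function: truncated HMAC-SHA256 of round number + half-block.
    return hmac.new(key, bytes([i]) + half, hashlib.sha256).digest()[:8]


def toy_encrypt_block(key: bytes, block: bytes) -> bytes:
    """Stand-in for AES: a keyed permutation on 16-byte blocks. NOT AES."""
    left, right = block[:8], block[8:]
    for i in range(ROUNDS):
        left, right = right, _xor(left, _round(key, i, right))
    return left + right


def toy_decrypt_block(key: bytes, block: bytes) -> bytes:
    """Inverse of toy_encrypt_block: undo the Feistel rounds in reverse order."""
    left, right = block[:8], block[8:]
    for i in reversed(range(ROUNDS)):
        left, right = _xor(right, _round(key, i, left)), left
    return left + right


def mul_alpha(tweak: bytes) -> bytes:
    """Multiply the tweak by x in GF(2^128), little-endian, as IEEE 1619 specifies."""
    t = int.from_bytes(tweak, "little") << 1
    if t >> 128:
        t = (t & ((1 << 128) - 1)) ^ 0x87
    return t.to_bytes(BLOCK, "little")


def _xts(k1: bytes, k2: bytes, address: int, data: bytes, block_fn) -> bytes:
    if len(data) % BLOCK:
        raise ValueError("sketch handles whole 16-byte blocks only (no ciphertext stealing)")
    # The tweak always comes from *encrypting* the address under the second key,
    # for both encryption and decryption.
    tweak = toy_encrypt_block(k2, address.to_bytes(BLOCK, "little"))
    out = bytearray()
    for j in range(0, len(data), BLOCK):
        out += _xor(block_fn(k1, _xor(data[j:j + BLOCK], tweak)), tweak)
        tweak = mul_alpha(tweak)  # each block in the line gets a different tweak
    return bytes(out)


def xts_encrypt(k1: bytes, k2: bytes, address: int, plaintext: bytes) -> bytes:
    return _xts(k1, k2, address, plaintext, toy_encrypt_block)


def xts_decrypt(k1: bytes, k2: bytes, address: int, ciphertext: bytes) -> bytes:
    return _xts(k1, k2, address, ciphertext, toy_decrypt_block)
```

Encrypting the same 64-byte line of zeros at two different addresses with this sketch yields two different ciphertexts, and even the four identical 16-byte blocks within one line come out different. That address dependence is what keeps someone reading pulled DIMMs from spotting repeated data, and it means ciphertext copied to another address no longer decrypts to the original plaintext.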

One other new innovation, which is going to lead us to where we are really going in this story in just a second, is called Platform Firmware Resilience, or PFR for short. With PFR, Intel is embedding an unspecified one of its FPGAs (courtesy of its Altera acquisition from five years ago) into the system to create a “platform root of trust” that is meant to validate the critical pieces of firmware in a server – think the BIOS flash, the baseboard management controller flash, the Serial Peripheral Interface (SPI) controller, the Intel Management Engine (which supports SGX), or the power supply firmware – before they are loaded up as the machine boots.
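The check-before-boot logic of a platform root of trust can be sketched in a few lines of Python. This is a hypothetical illustration, not Intel’s PFR firmware: we assume the root of trust holds an already-authenticated manifest of expected SHA-256 digests and a known-good recovery copy of each flash region (the “resilience” part of the name), whereas real implementations verify signed images. The region names and the function `pfr_check_and_recover` are our own.

```python
import hashlib
import hmac
from typing import Dict


def digest(image: bytes) -> bytes:
    return hashlib.sha256(image).digest()


def pfr_check_and_recover(
    active: Dict[str, bytes],
    recovery: Dict[str, bytes],
    manifest: Dict[str, bytes],
) -> Dict[str, str]:
    """Check each protected flash region before the host is released from reset.

    active:   current contents of each region (modified in place on recovery)
    recovery: known-good backup copy of each region
    manifest: expected SHA-256 digest per region (assumed already authenticated)
    Returns a per-region status of "ok" or "recovered".
    """
    status = {}
    for region, expected in manifest.items():
        if hmac.compare_digest(digest(active[region]), expected):
            status[region] = "ok"
        elif hmac.compare_digest(digest(recovery[region]), expected):
            active[region] = recovery[region]  # roll back to the golden image
            status[region] = "recovered"
        else:
            # Neither copy is trustworthy: refuse to let the host boot at all.
            raise RuntimeError(f"{region}: no valid image, holding host in reset")
    return status


if __name__ == "__main__":
    golden = {"bios": b"bios v1", "bmc": b"bmc v1"}  # hypothetical region names
    manifest = {name: digest(img) for name, img in golden.items()}
    active = dict(golden, bmc=b"implanted bmc")
    print(pfr_check_and_recover(active, golden, manifest))  # {'bios': 'ok', 'bmc': 'recovered'}
```

Running the checks on a separate device before the CPU ever executes an instruction is what makes this a root of trust: a compromised BIOS or BMC image never gets the chance to vouch for itself.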

All of this security embedded on or near the processor is great, but in the long run, after listening to all of the talk about DPUs, short for data processing units and what we used to think of as compute-glorified SmartNICs, we are under the distinct impression that much of this security should be offloaded from the CPUs to the DPUs. We realize that this is just shifting the security issue from one compute engine to another, but at least the security is being performed in such a way that it does not interfere with the compute work on the main CPUs in the system. (Memory encryption excepted – that has to be done inline with the memory controller to keep the performance hit absolutely minimal.) Intel might even require one of its FPGA-enhanced SmartNICs to do the PFR part, which would be a pretty big ask except among those hyperscalers and cloud builders who were going to put in SmartNICs (remember to call them DPUs) anyway.
