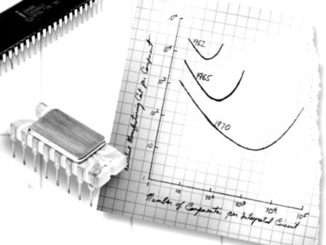

Several decades ago, Gordon Moore made it far simpler to create technology roadmaps along the lines of processor capabilities. Now that his namesake law is beginning to slow, the IEEE is stepping in to create a new, more diverse roadmap for future systems.

The organization has launched the International Roadmap for Devices and Systems (IRDS), an effort to identify and trace what follows Moore's Law. The IRDS will take a workload-focused view of this mixed landscape and of the systems it will require. In other words, instead of treating a single line of processor developments as the key to how future systems will evolve, committees will look at broader application areas and the processing technologies that serve them. This means taking a fresh look at a number of emerging technologies, including accelerators, neuromorphic devices, and quantum computers, as well as standard, better-known devices.

As one might imagine, this is a major classification and standardization challenge. Thomas Conte, a former president of the IEEE Computer Society and a professor at the Georgia Institute of Technology, tells The Next Platform that technology roadmaps have been a critical guidepost for the industry since the mid-1960s and still have great value, but a post-Moore's Law world will require a different kind of effort. Teams will meet this month to define the application areas and begin to carve out the structure for the new specifications and roadmap. "Bringing the IRDS under the IEEE umbrella will create a new Moore's Law of computer performance, and accelerate bringing to market new, novel computing technologies," Conte says.

“The process is being driven from the workload level. In other words, for each of the critical workloads we care about, what’s the right way to do computation and the most power efficient way of doing so. For example, can you do finite element simulation using a neuromorphic computer? Some people think you can, and can do it at a hundredth or thousandth of the power if that computer can be built out of analog devices instead of binary digital devices. That’s the kind of thing we’re looking at.”

In addition to putting this new roadmapping effort into historical perspective, Conte said that initiatives such as the broad, non-specific National Strategic Computing Initiative, launched by the Obama administration earlier this year, go a long way toward setting the stage for exascale. Beyond exascale, however, there is no clear direction forward. Although the roadmap is a heavy undertaking with many different workloads to consider, it should at least steer the industry toward specific technologies and help show where development dollars need to go.

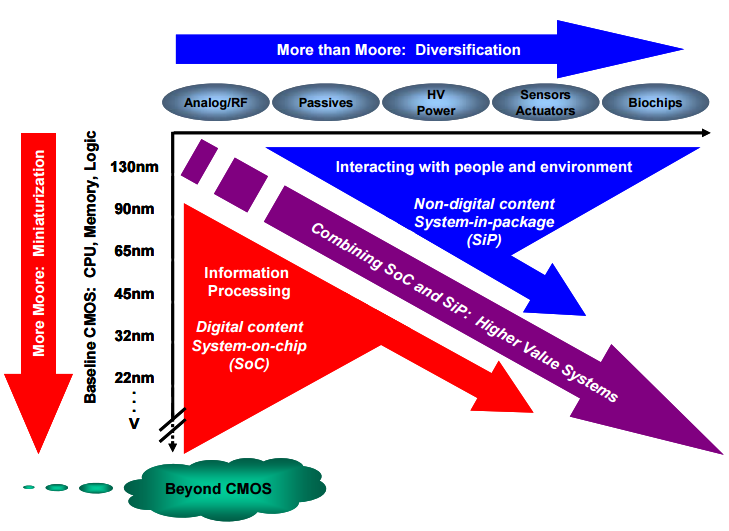

In short, the effort can be seen as tracking both "more Moore" (continued transistor scaling) and "more than Moore," in which novel devices step in as more power-efficient and potentially higher-performance options for critical workload areas.

“It’s not a roadmap in which every so many years we’re going to map to smaller transistors, and show how to make them smaller. That’s been great, but has not really helped computer performance. The last two generations of transistors we’ve been given aren’t much more power efficient or higher performance, we’re just getting more of them,” Conte argues.

“There’s a limited benefit to getting more. It would be great if we say, let’s stop the insanity of just focusing on one device, step back, and look at the different ways of computing to solve the problems we need to solve. See what those approaches need in terms of devices, and roadmap those devices.”


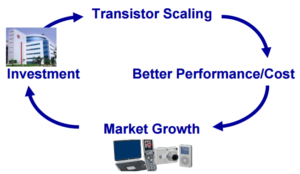

The dogged persistence of Moore's Law has fed a "virtuous cycle" for the industry. An IEEE paper on how the law has shaped the industry (and on the gap its slowdown will leave) explains that this curve in turn "allows further investments in new technologies which will fuel further scaling. Technical progress was of course a key ingredient of this industry ability, but it was not the only one: another key factor was the high degree of confidence, shared by industry players, that achieving Moore's law was possible—and would bring the expected benefits."

As the IEEE notes, "there is no 'natural' roadmap existing per se" for this effort. "Technology needs and company internal roadmaps are usually defined based on short term market requirements. In addition, there is a much closer link between process technologies on the one hand, and product implementations on the other hand."

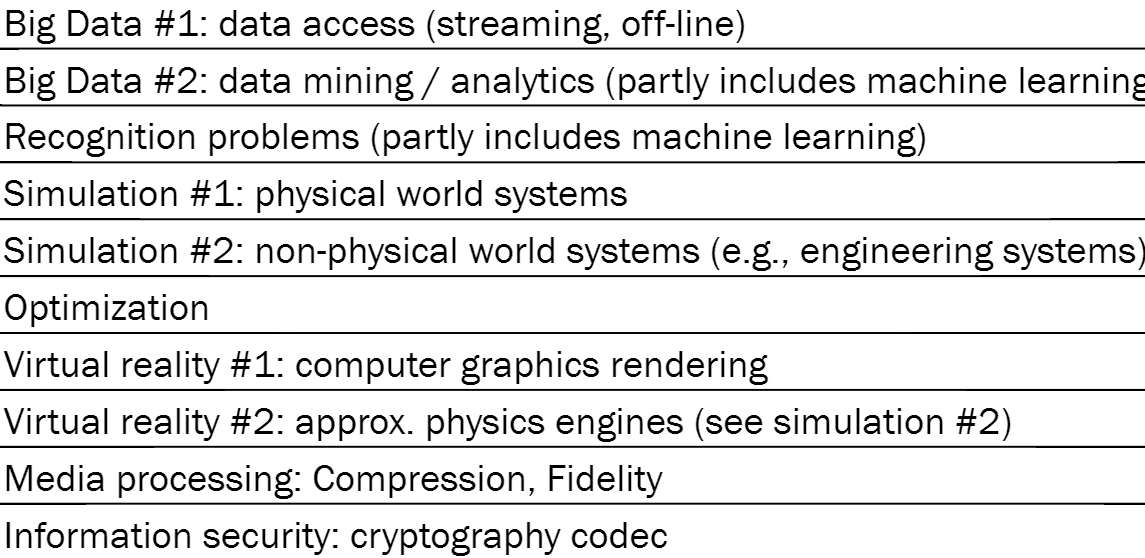

As Conte tells us, the category areas will be quite broad. The chart above, taken from one of his presentations to the committee, highlights a select set of potential workload areas to explore with the new roadmap process. The goal is to examine the work those communities have done beyond von Neumann architectures and to explore where vendors might invest in the future to support those workloads.

"We're taking the traditional roadmapping process, which we've become quite good at, and putting a few levels on top. The top level are the working groups that establish what the 'killer apps' and workloads we see as important and that need to continue to scale in the future. That might evolve over time, but that will be largely stationary once we define that." That process is starting this week in Belgium and will play out over the next year with successive technology briefs and input from the vendor community.
