For decades, HPC centers were on the bleeding edge of any technology, but enterprises comprised most of the sales volume and so there was a bifurcation of those technologies. HPC tech was advanced and expensive, while enterprise technology was less capable and capacious but also less expensive.

With the rollout of 25G Ethernet, we are witnessing a technology transition where hyperscalers and cloud builders are pushing the leading edge of a technology while at the same time getting in position to be the sales volume ahead of enterprises in other sectors of the economy.

This is a new and interesting phenomenon, and one that we think should accelerate adoption of 25G Ethernet. But hyperscalers and cloud builders like open technologies, and specifically, they want their switches to look more like X86 servers in terms of the breadth of software they support and their ubiquity.

Mellanox Technologies bought its way into the Ethernet switching business and bolstered its InfiniBand share when it bought partner Voltaire for $218 million back in November 2010. Mellanox launched its first-generation SwitchX converged Ethernet-InfiniBand ASIC the following April, but in recent years it has returned to having separate Switch-IB ASICs for 100 Gb/sec InfiniBand and Spectrum ASICs for 100 Gb/sec Ethernet so it can better address the needs of each customer set. In short, Ethernet overhead was hurting the performance of InfiniBand on the SwitchX chips, and moreover, to compete effectively in Ethernet, the company needed a chip specifically tuned for the 25G standard that Mellanox, along with Google, Microsoft, and Broadcom, helped create – more or less without initial support from the IEEE standards body that controls Ethernet.

Now that the Spectrum hardware is ramping, Mellanox is broadening its support for an open software stack atop its Ethernet ASIC and talking up the differences between its Spectrum chips and the “Tomahawk” ASICs from Broadcom, which also support the 25G protocols and offer the lower energy consumption and lower cost per bit that Google and Microsoft have been pushing for since the summer of 2014. The launch of support for Cumulus Linux as well as a slew of network adapters aimed at specific OCP system designs coincides with the Open Compute Summit being hosted this week in San Jose.

Mellanox has a very high share of the 40 Gb/sec server adapter market, and Kevin Deierling, vice president of marketing at Mellanox, tells The Next Platform that the company expects to maintain very high market share with 50 Gb/sec adapters looking ahead – although he concedes that it may be tough to match the 95 percent share that Mellanox has for 40 Gb/sec adapters because Intel, Broadcom, and others are putting adapters that sport 25 Gb/sec, 50 Gb/sec, and 100 Gb/sec ports that adhere to the 25G standard into the field.

Mellanox has such high share of 40 Gb/sec adapters because it is essentially the only big supplier, and all of the hyperscalers and cloud builders that have moved to 40 Gb/sec for the uplinks have bought its adapters or its chips to make their own. And as it turns out, they do the former, not the latter, for the most part.

“The vast majority to date have bought adapters from us, but moving forward, we may see companies make that transition – maybe the hyperscalers will do that,” says Deierling. “To date they have not done this because we are cost competitive with what they can build themselves. For the adapters and the cabling, it just makes a lot more sense for them to let us do it.”

Mellanox will be demonstrating ConnectX-4 Lx Ethernet adapters that support the 25G standard for three different OCP machines at the summit this week. The first is the “Yosemite” quad-node microserver that Facebook previewed at last year’s summit and that it has been installing in its datacenters using Intel’s Xeon D processors since that time. This Yosemite sled has a converged 100 Gb/sec adapter card that virtualizes four 25 Gb/sec uplinks from the servers, allowing for one card to replace what would have been four, thus saving money and space and heat. Mellanox also has 25 Gb/sec and 50 Gb/sec adapters that are being plugged into an OCP server design code-named “Leopard,” which is a two-socket sled based on Intel’s “Haswell” Xeon E5 v3 processor and that packs the nodes in two-wide in an Open Rack chassis. Finally, Mellanox has certified its adapters to work with the forthcoming “Barreleye” system being designed by Rackspace Hosting based on IBM’s Power8 processor. In this case, Rackspace is opting for a 50 Gb/sec link down to the servers from the switch. Deierling says that Mellanox is not only delivering single-port OCP adapters that run at 25 Gb/sec or 50 Gb/sec as well as the 100 Gb/sec card with multi-host support, but that it will also have a 50 Gb/sec card with multi-host capabilities. All of these adapters and the Spectrum switches use the same cabling as 10 Gb/sec downlinks and 40 Gb/sec uplinks, and with cabling being such a big cost and hassle, this makes upgrading the network considerably easier and less costly than it might otherwise be.

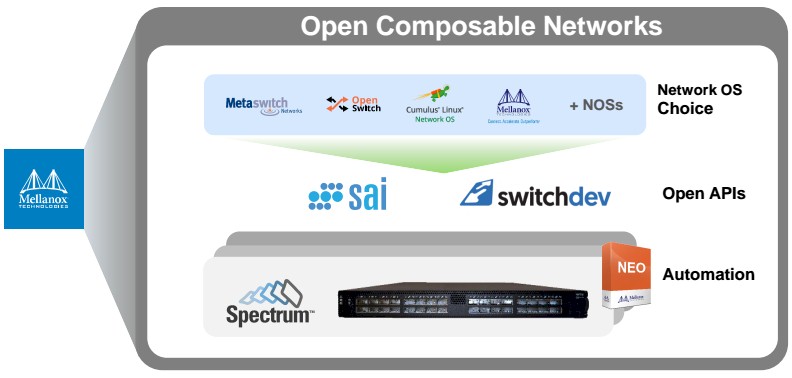

To further appeal to the hyperscalers and cloud builders and the enterprises that will want to emulate them, Mellanox is also rounding out the software stack for the Spectrum switches. Specifically, Mellanox is now supporting the Cumulus Linux network operating system from Cumulus Networks, which is one of several companies pushing an alternative to captive network operating systems sold by Cisco, Mellanox, and others on their switches. Mellanox is contributing its own SwitchDev tools, which link into network operating system interfaces, to the Linux community, and is adding its Neo network orchestration and management software to the stack alongside the Switch Abstraction Interface (SAI) software created by Microsoft and Dell to abstract the underlying hardware from the network operating system. (Microsoft is actually running Linux on the switches underpinning the Azure cloud, as we have previously explained, and this is enabled by SAI.) The open networking stack also includes the ONIE bootloader, which was developed by Cumulus and, like the SAI spec, was contributed to the OCP for dispersal to the open source community.

At the moment, the Spectrum switches support five operating systems: the in-house MLNX-OS created by Mellanox; Cumulus Linux (just announced this week and arguably the most popular of the open NOS stacks); OpenSwitch from Hewlett-Packard Enterprise (which debuted last fall); Microsoft’s own Azure networking stack (which is not a commercial product, at least not yet); and the Metaswitch Networks routing software. There are a number of other NOS platforms that can and probably will be added to this list, including software from Big Switch Networks and Pica8 and possibly Dell.

The Spectrum ramp is now starting in earnest and will no doubt be helped by a server refresh cycle coming from Intel with the “Broadwell” Xeon E5 v4 processors that are imminent, a wide line of server adapters, and the expanding software stack on the switches. “It is still early days for 100 Gb/sec,” says Deierling. “We have customers across the board that are testing it in their labs and we will see the ramp in the second half of the year. Before, there were some blocking functions because people were not willing to use our hardware and software, but we don’t see that any more with the addition of Cumulus Linux.”

Mellanox is shipping Spectrum switches that offer 32 ports running at 100 Gb/sec as well as one that has 48 downlink ports that run at 25 Gb/sec and eight uplink ports that run at 100 Gb/sec. In the second half of the year, it will roll out a half-width switch that has 16 ports running at 100 Gb/sec and is suitable for more modular system designs.
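To put those port counts in perspective, here is a rough back-of-the-envelope sketch in Python. It simply multiplies port counts by port speeds to get one-direction aggregate bandwidth, and divides downlink capacity by uplink capacity for the 48-plus-8 configuration. The `PortGroup` class and `oversubscription` helper are hypothetical names invented for this illustration, and the resulting ratio is our own arithmetic under the assumption that every port runs at line rate, not a figure supplied by Mellanox.

```python
from dataclasses import dataclass
from fractions import Fraction


@dataclass(frozen=True)
class PortGroup:
    """A set of identical switch ports (hypothetical helper for this sketch)."""
    count: int
    speed_gbps: int

    @property
    def capacity_gbps(self) -> int:
        # One-direction aggregate bandwidth, assuming every port runs at line rate.
        return self.count * self.speed_gbps


def oversubscription(downlinks: PortGroup, uplinks: PortGroup) -> Fraction:
    """Ratio of server-facing bandwidth to uplink bandwidth."""
    return Fraction(downlinks.capacity_gbps, uplinks.capacity_gbps)


# Port counts and speeds as described in the article.
full_width = PortGroup(count=32, speed_gbps=100)
tor_down = PortGroup(count=48, speed_gbps=25)
tor_up = PortGroup(count=8, speed_gbps=100)
half_width = PortGroup(count=16, speed_gbps=100)

print(full_width.capacity_gbps)                      # 3200
print(tor_down.capacity_gbps, tor_up.capacity_gbps)  # 1200 800
print(oversubscription(tor_down, tor_up))            # 3/2
print(half_width.capacity_gbps)                      # 1600
```

By this arithmetic, the 48-port configuration carries 1.2 Tb/sec toward the servers against 800 Gb/sec of uplink, a 1.5:1 ratio, while the half-width switch offers half the aggregate port bandwidth of the 32-port model.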

“On the adapter side, I think we will see more 25 Gb/sec and 50 Gb/sec than 100 Gb/sec, and we will start seeing that ramp next quarter. And you will see the switches ramping, too, and frankly, the switches have slowed us down. People locked onto our competitor, Broadcom, and they have had difficulty getting Tomahawk parts and now people are re-evaluating their decisions.”

Cisco is also gunning for the Tomahawks with its own Cloud Scale ASICs, but will sell switches based on Broadcom chips because this is what they have standardized on.
