Well before the Intel acquisition of Altera and the news about Microsoft’s use of the Catapult servers, which feature FPGAs to power their Bing search engine and other key applications, it has been clear that the FPGA future is just starting to unfold. The vendor ecosystem is now largely in place, but working out where FPGAs add value in real applications, beyond the niches where one would have expected to find them ten years ago, is still something of a challenge. That picture is getting clearer.

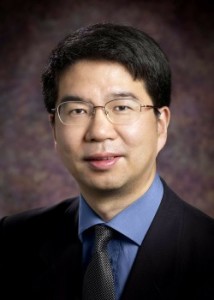

In his 25-year career working with FPGAs, UCLA’s Dr. Jason Cong has watched the devices move from purpose-driven implementations to prototyping devices and, in more recent years, to compute devices and accelerators. Cong has developed a number of key technologies to push FPGA functionality and programmability forward, something that FPGA maker Xilinx has historically been interested in. The company acquired one of Cong’s developments, the AutoESL tool (renamed Vivado HLS after the 2011 purchase), and also acquired a scalable FPGA physical design tool via Cong’s Neptune Design Automation startup in 2013. Other startups include Aplus Design Technologies, a UCLA spin-out company that developed the first commercially available FPGA architecture evaluation and physical synthesis tool, something that was licensed by most FPGA makers until it was acquired and eventually pulled into Synopsys in 2003.

Much of this development, from the 1990s until recently, has been aimed at the prototyping space, but Cong is seeing a new wave of options for FPGAs as compute engines and accelerators for a number of workloads, most prominently deep learning and machine learning. “The future will be accelerators with the CPUs there to interface with the software, handle scheduling, and coordination of tasks, but the real heavy lifting will be done by accelerators.” This includes GPUs, he says, but the limitations of the single instruction, multiple data (SIMD) model are clear. FPGAs, with their programmable fabric, customizable logic and interconnect, and low power, are an appealing option, but their programmability needs to be stepped up, and quite significantly.

“Von Neumann architectures were elegant and have served us well, but if you think about something like the human brain, there is no single pipeline to execute instruction after instruction; we have one part for language processing, another for motor control, and so on. These are highly specialized circuits. If you think about Von Neumann architectures as well, executing something simple such as add has many steps—retrieve, decode, rename, schedule, execute and write back—there are usually five to ten steps, depending on what you want to do and this pipeline is not efficient.”

Although Cong believes that GPUs will continue to dominate on the training side of deep learning, he sees great promise ahead for what FPGAs might provide on the inference front. For instance, his team put together a study based on inference for a convolutional neural network and showed the FPGA as capable of offering 350 gigaflops in under 25 watts, an attractive story from both a performance and efficiency angle.
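To put those two numbers together, the short sketch below works out the implied efficiency. It uses only the figures quoted above; the function name `gflops_per_watt` is a hypothetical helper introduced here for illustration, and because the study reports power as "under 25 watts," the result should be read as a lower bound rather than a measured value.

```python
def gflops_per_watt(gflops: float, watts: float) -> float:
    """Return throughput per unit power, in GFLOPS per watt."""
    if watts <= 0:
        raise ValueError("watts must be positive")
    return gflops / watts


# Figures quoted in the article: 350 GFLOPS in under 25 W.
# Using the 25 W ceiling gives a lower bound on efficiency.
fpga_efficiency = gflops_per_watt(350.0, 25.0)
print(f"FPGA lower bound: {fpga_efficiency:.1f} GFLOPS/W")
```

That works out to at least 14 gigaflops per watt for the inference workload described.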

Cong did not seem stunned at the $16 billion Intel acquisition of Altera last year, noting the opportunities for efficiency in the datacenter, both for the underlying networking and communications gear that uses FPGAs and for a new range of applications in machine learning, genomics, compression and decompression, cognitive computing, and other areas. “We welcomed this move as well because anything that brings the FPGA closer to the CPU means the latency is reduced. This is something we’re working on in a paper that will compare the Intel platform on QPI over existing PCIe. It will be very favorable for many new applications, and even more favorable when Intel puts the FPGA in the same package as they’re expected to do in the future.” Additionally, Falcon Computing, one of the startups Cong co-founded, is working with machine learning libraries for FPGAs and has been able to show that machine learning (inference) workloads can be reduced from four servers to one using such libraries and an FPGA card. Cong says the results will be published soon and will show a new range of potential machine learning and deep learning capabilities coming to FPGAs.

“FPGAs can be easily integrated into datacenters, adding 20 watts as compute engines in a PCIe slot like Microsoft is doing with Catapult across thousands of nodes. GPUs cannot do that; the CPU already has between 200-300 watts so those will not fit into the server profile. And while you can design an ASIC and it is more efficient than anything else, they are very rigid. This is a time of great, fast change so in the time it takes to fab an ASIC, the algorithms, especially in deep learning and machine learning, could have changed significantly by then.”

“There is full confidence in Intel’s manufacturing capabilities to bring this all to the same package, but the real risk is on the programming side,” Cong says. “Personally, I don’t think OpenCL is the answer as it is now. Look at all the datacenters where it’s not being used (big data processing uses Hadoop and Spark, HPC is OpenMP and MPI).” This is something Falcon Computing is focusing on—taking these languages and mapping them to OpenCL automatically without losing performance.

One can imagine how a company like Falcon Computing, which is one of only a few focused on pairing low-level FPGA capabilities with a higher-level interface, might be attractive to the handful of FPGA makers. We know that Cong’s previous startups have often found a home at Xilinx, but it is worth questioning what tooling Intel will need to buy into (if at all) to support its FPGA initiatives over the next few years. In other words, we would not be surprised to see Falcon get snapped up by Intel, or by Xilinx or others, at some point in the near future.

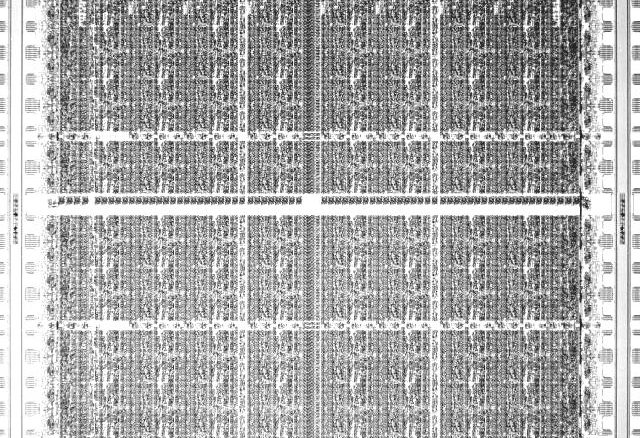

Cong’s work at UCLA’s VLSI Architecture, Synthesis and Technology (VAST) lab garnered him an IEEE Technical Achievement award this month for work on making FPGAs easier to program. In 2008, Cong and a group of researchers were awarded a five-year, $10 million NSF Expeditions in Computing grant, based on the recognition even then that the days of swift frequency scaling were coming to an end. He could not have guessed at the time, however, that the opportunity for FPGAs as accelerators would be such a large part of the story.
