At the last five annual Supercomputing Conferences, an underlying theme has been the potential of accelerators. This year at SC15, however, there is a growing sense that accelerated computing has become normal, and, even if it hasn’t reached the supercomputing sites yet, that classification now extends beyond GPUs and Xeon Phi coprocessors.

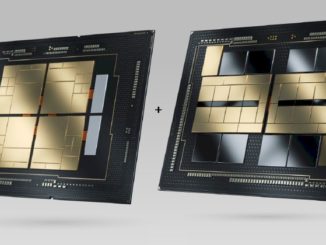

There is a new accelerator option on the block, and while it has not hit the supercomputing sites with any force yet, the trend line for large-scale datacenters on the hyperscale end, and for some specialized commercial and research HPC-centric workloads in genomics, seismic analysis, and finance, intersects with where FPGAs are heading. A lack of FPGA-accelerated systems on the Top 500 now is no indication of what the future holds. With Intel aiming to eventually integrate the Altera FPGA IP it acquired this year for over $16 billion, and the other major FPGA maker, Xilinx, beefing up its hardware and software approach to broader markets, we are poised for a shakeup in accelerated computing at extreme scale.

Intel is not the only company with a path to integrating FPGAs with CPUs and a companion software environment to wrap around them. Armed with a new Qualcomm partnership for low-power FPGA approaches to suit hyperscale datacenter needs, and a freshly announced partnership (finally formalized after several years of co-development) with IBM’s OpenPower Foundation, Xilinx sees a path to the large-scale datacenter. High performance computing applications are part of this roadmap, but so are workloads that other accelerator approaches are tackling, including Nvidia with its newly launched Tesla M40 and M4 processors aimed at hyperscale datacenters running machine learning training and inference workloads, among others in data analytics, security, and beyond.

There is reason to believe that within the next few years, at least a few entrants on the Top 500 list could be taking advantage of FPGA acceleration as well. Intel acquired FPGA maker Altera earlier this year and has projected integration with Xeon CPUs. The other major FPGA company, Xilinx, is striking up partnerships with Qualcomm on the low-power ARM processor side and with IBM’s OpenPower Foundation on the other. Together, these moves make the FPGA space varied and heated enough to spur unprecedented activity for FPGAs on the compute side.

“Heterogeneous computing is no longer a trend, it’s a reality,” Hamant Dhulla, VP of the datacenter division at Xilinx, tells The Next Platform.

This holds across machine learning, high performance computing, data analytics, and other emerging areas, since these are all highly parallel workloads that can be contained in a small power envelope and can take advantage of the growing amount of on-chip memory available on FPGAs. Dhulla says the market potential is large enough to upset the way Xilinx has looked at its own business. For several years, storage and networking dominated the FPGA user base, but within five years, Dhulla expects demand on the compute side to far outpace storage and networking, both of which are expected to continue along a steady growth line.

FPGAs are set to become a companion technology in some hyperscale datacenters, Dhulla says. “We are seeing that these datacenters are separated into ‘pods,’ or multiple racks of servers for specific workloads. For instance, some have pods set aside to do things like image resizing, as an example. Here they are finding a fit for FPGAs to accelerate this part of the workload.” In other hot areas for FPGAs, including machine learning, Dhulla says that they are operating as a “cooperating” accelerator alongside GPUs. “There is no doubt that for the training portion of many machine learning workloads GPUs are dominant. There is a lot of compute power needed here, just as with HPC, where the power envelope tradeoff is worth it for what is required.” But he says that these customers are buying tens or hundreds of GPUs for training rather than hundreds of thousands. The large accelerator counts go to the inference part of the machine learning pipeline, and that is where the volume is. As we noted already, Nvidia is countering with two separate GPUs (the M40 for training and the low-power M4, which plugs into pared-down hyperscale servers), but Dhulla believes FPGAs can still push power consumption lower and, by taking a PCIe approach, can snap into hyperscale datacenters as well.

Xilinx’s SDAccel programming environment, which we are set to explore in more depth after SC15 draws to a close, is making this more practical by offering a high-level interface for C, C++, and OpenCL, but the real path to pushing hyperscale and HPC adoption is through end user examples.

When it comes to these early users that will set the stage for the next generation of FPGA use, Dhulla points to companies like Edico Genome, which was described in detail here. Xilinx is currently working with production customers in other areas, including historical compute-side workloads in oil and gas and finance. But the first inkling of where their compute acceleration business is going can be seen with early customers using Xilinx FPGAs in machine learning, image recognition and analysis, and security. For instance, on the deep packet inspection front, the FPGA sits in front and all the traffic goes through it, meaning it’s possible to look at each individual packet—a capability that will have implications in software-defined networking as well.

The traditional business for FPGAs in storage and networking will continue to get a boost from new approaches, including NVMe drives over Ethernet fabric and innovations in the storage and memory hierarchy. Gone are the days when simple DDR-based DIMMs served as main memory paired with hard drives for storage. With SSDs and non-volatile memory coming closer to the compute side, there are more segments of the network, storage, and compute side that can work in harmony with FPGA acceleration.

In other words, it’s finally a good time to be in the FPGA business. Whereas before the technology was confined to lower-level roles, in part because of the programming complexity and limited use cases outside of storage and networking, new workload demands are driving new ideas for use. And while having Intel acquire a rival for an enormous sum and promise big things with that IP in the years to come is daunting, Dhulla says it has been remarkably good for Xilinx in that it brought a new wealth of attention to FPGAs and their future place in the datacenter.

It will be interesting to see what, if any, traction FPGAs get in the supercomputing world. Here at SC15 this year, there are some sessions we’ll be reporting from, and vendors like Ryft and others are showcasing FPGA-laden boards that target specific workloads. The other “time will tell” note here is how a limited set of vendors on the FPGA and GPU side will weave a story around their respective performance, use cases, and, most important, performance per watt for varied workloads. Over the next couple of years, especially once Intel clarifies its path through the integrated FPGA woods, this will heat up, again with few technology and vendor options for what looks to be a vibrant future set of markets.

On that note too, the Altera acquisition brings a whole new set of skills to bear for Intel’s software and silicon-driven teams. But on the Xilinx side, there are also some changes that reflect, rather artfully, how the company sees compute growing into the future dominant source of FPGA revenue. Dhulla himself spent 15 years in the datacenter group at Intel working on the cloud and low-processing fronts, and Xilinx’s public face on the technical marketing side is now Andrew Walsh, who spent a long career at GPU maker Nvidia. While one might not have expected GPU and FPGA makers to square off just a few years ago, the point is that, like Intel, Xilinx is beefing up its strategy for FPGA-accelerated compute by pulling from around the accelerated computing industry in all of its forms. Its partnership now with the OpenPower Foundation is a big step for both IBM and Xilinx in that it sets the stage for how that processor ecosystem for accelerated computing will shake out. Interesting times, indeed.

I wouldn’t fully discount DSPs yet either. They also have a longer tradition in supercomputers than FPGAs.

What about eASIC? Intel appears to be working with them on future Xeon SKUs. And just what is an eASIC?

“Is it an ASIC? Is it an FPGA? No, it’s eASIC!”

This article is an interesting read.

http://www.eetimes.com/document.asp?doc_id=1327688