The next wave of virtualization on servers is not going to look like the last one. That is the thinking of Mark Shuttleworth, founder of the Ubuntu Linux project more than a decade ago and head of strategy and user experience at Canonical, the company that provides support services for Ubuntu.

Under his stewardship, Canonical has, like other Linux players, brought successive waves of virtualization to its distro, starting with the Xen hypervisor, then adding KVM and moving on to Docker. The company was at the forefront when Eucalyptus was the cloud controller of choice, quickly shifted gears to OpenStack when the industry backed that open source alternative, and has become the Linux of choice both for OpenStack clouds and for deployment on various public clouds. Docker and its software container approach have all of the virtualization and cloud providers hyperventilating at the moment, but what has Shuttleworth excited is an alternate technology called Linux containers, or LXC for short, and a companion hypervisor for it that Canonical has created, called LXD. LXD is a daemon that wraps around these containers and functions more or less like a server virtualization hypervisor, akin to the open source Xen and KVM, Microsoft’s Hyper-V, or VMware’s ESXi.

“To give you a sense of how excited we are about that, we genuinely believe that is the biggest disruption in Linux virtualization in a decade,” Shuttleworth tells The Next Platform. “We see enormous enthusiasm from large enterprises and other technology companies.”

The inspiration, and some of the early work for LXC containers, came from Google, which has been running containerized applications on its infrastructure for at least a decade and which says it fires up over 2 billion containers a week. Canonical and about 80 other organizations have been working to commercialize LXC, which was not initially a very user-friendly technology, and to make it look and feel like familiar server virtualization even though it uses very different, and simpler, technology.

“Something that many people don’t understand about containers is that the systems by which we set up containers can be used to kind of virtualize or fake things in wildly different ways,” explains Shuttleworth. “Sometimes you want to fake things to look like Docker, sometimes you want to fake things to look like something else. In the case of LXC, the way we want to set up these containers is to create the illusion of machines, but complete machines that are running an entire operating system or all of the processes that make up an operating system. That’s quite different from what Docker does. Those are all using the same underlying primitives, but setting things up differently. The intent of LXC was always to create virtual machines without the hardware virtualization.”

With Docker, which used LXC in the early days but now has its own abstraction layer, a container is almost like a single process running on top of the file system. It is almost as if you booted the machine but, instead of running init and all of the processes that make up the operating system, you literally just run the database or whatever, and then you put multiple containers on a machine and pod them together. With LXC and its LXD daemon, Canonical wants to preserve the look and feel of a full Debian, CentOS, Ubuntu, or other Linux operating system.

“In LXC 1.0, it has been exposed as something where developers can say, ‘Make me this container.’ What we have done now is taken that code and embedded it into the LXD daemon, so rather than have each container set up by a developer, I can have a couple of hundred virtual machines, and can talk to the LXD daemon on all of them, and what I have is a virtual machine cluster just like you have with VMware’s ESXi hypervisor. The wrapping of LXC into a daemon turns it into a hypervisor. What is ESXi? It is essentially an operating system that you can talk to over the network and ask it to make you a VM. With LXD, you can talk to a machine running LXC and say make me a new container with CentOS running in it. This becomes a directable mechanism across a cluster.”
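To make the ESXi comparison concrete, here is a minimal Python sketch of what "asking the daemon for a container" can look like over the network. It assumes LXD's REST API, in which a container is created by POSTing a JSON description to `/1.0/containers` on the daemon's HTTPS endpoint (newer LXD releases also expose `/1.0/instances`). The host names and the `centos-7` image alias are hypothetical, and the sketch only builds the requests rather than sending them, since a real client must also present a TLS client certificate that the daemon trusts.

```python
from __future__ import annotations

import json
from dataclasses import dataclass


@dataclass
class LxdRequest:
    method: str
    url: str
    body: str  # JSON-encoded payload


def create_container_request(host: str, name: str, image_alias: str) -> LxdRequest:
    """Describe one 'make me a container' call to a single LXD daemon.

    Assumes an image with `image_alias` is already available to that daemon.
    """
    payload = {
        "name": name,
        "source": {"type": "image", "alias": image_alias},
    }
    # 8443 is LXD's default HTTPS port once the daemon is set to listen on the network.
    return LxdRequest("POST", f"https://{host}:8443/1.0/containers", json.dumps(payload))


def cluster_requests(hosts: list[str], prefix: str, image_alias: str) -> list[LxdRequest]:
    """Fan the same kind of request out across a fleet: one container per daemon."""
    return [
        create_container_request(host, f"{prefix}-{i}", image_alias)
        for i, host in enumerate(hosts)
    ]


if __name__ == "__main__":
    # Hypothetical hosts and image alias, for illustration only.
    for req in cluster_requests(["node1.example.com", "node2.example.com"], "web", "centos-7"):
        print(req.method, req.url, req.body)
```

The point of the fan-out helper is the one Shuttleworth makes: once every machine runs a network-addressable daemon, "make me a container" becomes a request you can direct at any node in the cluster.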

LXD also provides another important function: it is the piece of software on two distinct physical machines that allows for an LXC instance to be live migrated between them.

This week, Canonical is announcing the beta of LXC 2.0, which will include the LXD hypervisor for the first time. Both are available in the Ubuntu Server 15.10 update that came out this month, and Canonical has taken the unusual step of backporting LXC 2.0 to Ubuntu Server 14.04 LTS (short for Long Term Support). The LTS releases come out every two years and have a five-year support lifecycle, and about 70 percent of the customers running Ubuntu Server in production do so on an LTS release because of the stability it provides. The production-grade version of LXC 2.0 with the LXD hypervisor will debut next year, according to Shuttleworth, probably in the February or March timeframe in the run-up to the next business-class release, Ubuntu Server 16.04 LTS, due in April as the naming scheme suggests. Dustin Kirkland, who manages Ubuntu products and strategy, tells The Next Platform that the LXC and LXD combo will be installed by default on every Ubuntu Server starting with that LTS release, and that each of those machines will be able to run dozens to hundreds of containers (and on the largest IBM Power-based machines, even thousands).

The number of containers that can be packed on a machine and the speed with which they can be fired up are two big advantages that LXC containers have over regular virtual machines that are virtualizing hardware. Because LXC is just Linux running Linux, there is nothing to virtualize, even though there is some work to do to get the different Linuxes to run side-by-side in containers on the same iron.

“This is a step function change in performance, and it is an order of magnitude change in density,” brags Shuttleworth. “And it is a dramatic improvement for low-latency, real-time types of applications. These sorts of things become tractable on the cloud, where they really were not in the past. If your cloud is running LXC, suddenly high performance computing on the cloud makes sense, real-time trading on a cloud makes sense, and network function virtualization makes sense if you are a telco that needs low streaming latencies. All of these big money domains where people have really wanted to use clouds and virtualization, LXC makes that possible. It is phenomenal.”

LXC containers can deliver something on the order of 14X the density that a hypervisor like ESXi, Xen, or KVM can achieve with virtual machines, says Shuttleworth, and the LXC and LXD combo does not carry the 20 percent overhead that a hypervisor imposes on VMs that use hardware-based virtualization. That comparison is for idle workloads, where the VMs or LXC containers sit mostly waiting for work, as most VMs and physical machines do. For busy workloads, LXC containers offer dramatically better I/O throughput and lower latency. So for idle machines, the focus is on density, and for busy machines, it is on throughput and latency, where you can get about 20 percent higher performance because the capacity that was consumed by the hypervisor is freed to do real work.
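The two headline numbers can be turned into a quick back-of-the-envelope calculation. This Python sketch assumes, for illustration, that the 20 percent hypervisor overhead is a share of total host capacity and that the workload scales linearly with whatever capacity is handed back. Under that reading, reclaiming the overhead yields a 25 percent gain; if the overhead is instead counted relative to the workload itself, the gain is the 20 percent quoted. The 20-VMs-per-host baseline in the usage lines is hypothetical.

```python
def throughput_gain(overhead_share: float) -> float:
    """Relative throughput gain when a hypervisor's share of host capacity is reclaimed.

    Assumes `overhead_share` is a fraction of total capacity (0 <= x < 1) and that
    the workload scales linearly with the capacity it gets back.
    """
    if not 0.0 <= overhead_share < 1.0:
        raise ValueError("overhead_share must be in [0, 1)")
    usable_under_hypervisor = 1.0 - overhead_share
    return 1.0 / usable_under_hypervisor - 1.0


def containers_per_host(vms_per_host: int, density_ratio: float = 14.0) -> int:
    """Idle-workload density: how many containers fit where `vms_per_host` VMs did."""
    return round(vms_per_host * density_ratio)


if __name__ == "__main__":
    # Hypothetical baseline of 20 idle VMs per host.
    print(f"gain from reclaiming 20% overhead: {throughput_gain(0.20):.0%}")
    print(f"containers in place of 20 idle VMs: {containers_per_host(20)}")
```

Real gains depend on how I/O-bound the workload is, which is why the benchmarks below matter more than this arithmetic.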

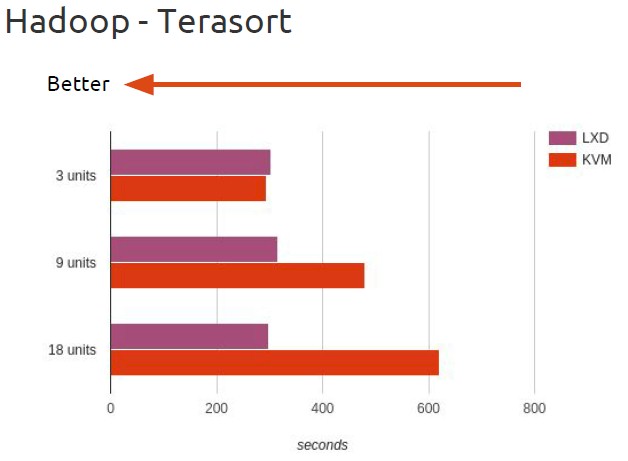

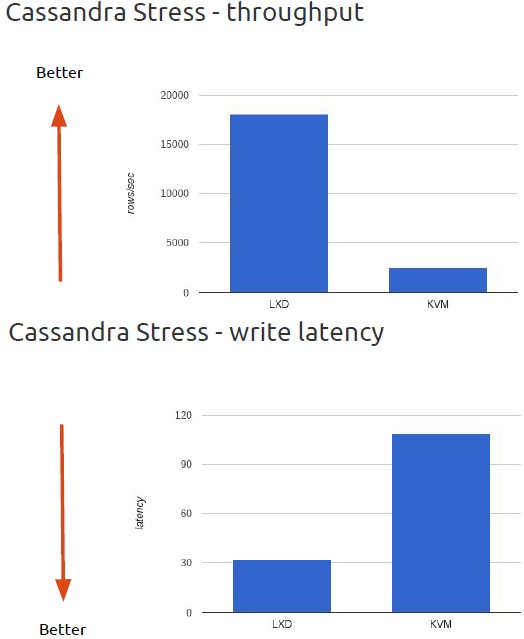

Benchmarking of the LXC and LXD combo is just getting under way. At the OpenStack Summit in Tokyo last week, Tycho Andersen of the LXD development team at Canonical was showing off benchmarks in virtualized environments, one using the Hadoop TeraSort test and the other stressing a Cassandra NoSQL data store. In both tests, the machines were running the latest OpenStack “Liberty” cloud controller, launched at the summit, and Ubuntu 15.10, which also just came out, with either the KVM or the LXD hypervisor and their respective VMs and containers. The server nodes had 24 cores and 48 GB of memory each, and one controller node managed the OpenStack setup, with three nodes used for compute in the base setup.

On the base three machines, LXC and KVM are pretty equal when chewing on the TeraSort test, but as the number of machines in the OpenStack/Hadoop cluster grows along with the dataset size, the difference in performance for the two different virtualization approaches becomes apparent.

The latency and throughput differences between LXC/LXD and KVM are dramatic, as you can see.

Another interesting research paper, out of Ericsson Research and Aalto University, shows significant power savings on machines running LXC and Docker compared to Xen and KVM. The upshot is that on CPU and memory benchmarks, the power draw on a server goes up as more VMs or containers are added, and all four technologies are within spitting distance of each other, but when processing network traffic, Docker and LXC burn about 10 percent less juice to do the same work.

Given the skinniness of containers, it is no surprise that LXC containers underpin a lot of platform clouds and will very likely be foundational for future infrastructure clouds, at least those that will only run Linux, since LXC cannot run Windows Server, the other important operating system in the datacenter. That said, most of the important new systems software (Hadoop, Spark, NoSQL databases, various file systems, and so on) is written to run atop Linux, so this should not be limiting for certain parts of the platforms that people are building.

Interestingly, Canonical is eating its own dog food, as the saying goes, and is now deploying its Ubuntu OpenStack in LXC containers. This enables administrators to upgrade OpenStack piecemeal or to move chunks of it off pieces of hardware to perform maintenance on the iron. In a typical small OpenStack cloud, says Shuttleworth, you end up with eight management nodes that run various pieces of the control plane, and you tend to run them in triplicate so that if you lose one, you still have high availability through the other two. That yields 24 different physical servers. By packaging these into LXC containers and managing them through the LXD hypervisor, several of these control plane bits can be packed into the same physical machines, and the overall physical server footprint for the OpenStack control plane can be shrunk without sacrificing performance or availability.
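The consolidation argument is essentially a placement problem: keep the three copies of each control-plane service on different physical hosts, so that losing one host still leaves two, but let unrelated services share a host as containers. Below is a minimal Python sketch of that anti-affinity placement. The service names are illustrative OpenStack components and the host names are hypothetical; this is not Canonical's actual deployment layout.

```python
from __future__ import annotations

from collections import defaultdict


def place_replicas(services: list[str], replicas: int, hosts: list[str]) -> dict[str, list[str]]:
    """Spread `replicas` copies of each service over distinct hosts.

    Anti-affinity: no host ever holds two copies of the same service, so a
    single host failure removes at most one replica of any service.
    """
    if replicas > len(hosts):
        raise ValueError("need at least as many hosts as replicas per service")
    placement: dict[str, list[str]] = defaultdict(list)
    for i, service in enumerate(services):
        for r in range(replicas):
            # Rotate the starting host per service so load evens out when
            # there are more hosts than replicas.
            host = hosts[(i + r) % len(hosts)]
            placement[host].append(f"{service}-{r}")
    return dict(placement)


if __name__ == "__main__":
    # Illustrative control-plane services, not an official OpenStack layout.
    control_plane = ["keystone", "glance", "nova-api", "neutron-server",
                     "cinder-api", "horizon", "rabbitmq", "mysql"]
    layout = place_replicas(control_plane, replicas=3, hosts=["infra1", "infra2", "infra3"])
    for host, containers in layout.items():
        print(host, len(containers), containers)
```

With three hosts, every host ends up running one container per service, instead of one physical server per replica.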

“Developers have this sense of hygiene, and they like to keep things orderly and neat,” explains Shuttleworth. “And to a certain extent, having to dump things onto many machines just because it is expensive to virtualize the hardware has been a sore point. Now, suddenly you can run multiple things on a machine without all of that overhead and still keep them pristine and isolated. It is interesting to see how behaviors change once LXD shows up. It is just like a VM experience but just lighter.”

“Red Hat tried very, very hard to ignore us and eventually they added it with RHEL 7,” says Shuttleworth, speaking of LXC. Kirkland adds that even though Canonical contributed to the work to make libvirt, the management tool for KVM, speak LXC, the result is a “bit of a hack,” and the libvirt maintainers are focused not on containers but on KVM virtual machines. “Red Hat has historically tried to poo-poo the idea of containers as lightweight VMs because of their investment in KVM, which skews their worldview,” says Shuttleworth.

The key thing that could drive adoption of LXC and LXD is the fact that so many workloads in the enterprise, in hyperscalers, and in HPC centers are already running on Linux, and organizations want to preserve their applications while also moving ahead with their infrastructure. This is precisely what made VMware a $6 billion giant in the enterprise datacenter.

As an example, Shuttleworth says that Canonical has been working with Intel and some unnamed HPC centers to test ancient simulation and modeling code atop LXC containers using the LXD hypervisor at bare metal performance. “Think about what that does. Now a supercomputer can have the latest operating systems that the administrators love, but the scientists can get bare metal performance and they get the old operating environment that they love. It is all of the benefits of virtualization, and both halves get what they want, without the friction associated with physical machine virtualization.”

Private Cloud First, Then Public

LXC and LXD do not require the use of the OpenStack cloud controller, but it seems likely that in the datacenter, the three will be matched up. Shuttleworth says that Ubuntu Server underpins more than half of the OpenStack clouds installed in the world, according to the latest statistics from the OpenStack community, and that among the largest OpenStack clusters, it has 65 percent share. Canonical’s Ubuntu OpenStack distribution has been adopted by Walmart, AT&T, Verizon, NTT, Bloomberg, and Deutsche Telekom – all very big wins. Moreover, at the OpenStack Summit in Tokyo last week, Shuttleworth adds that he “did not meet a single enterprise customer running OpenStack on Ubuntu” that was not interested in LXD, because the economics and density “were so compelling.”

Those are private cloud wins, for the most part. The long term goal, Shuttleworth says, is to have public cloud providers using LXC and LXD as the underpinnings of infrastructure and platform services, but to get there, most public cloud providers are probably going to wait until X86, Power, and ARM server chips have their virtualization-specific circuits tweaked so they can offer hardware-based security for LXC/LXD, as they currently do for KVM, Xen, or Hyper-V (depending on the platform). At the moment, the Linux community can provide security for LXC containers inside of the Linux kernel, but this is not going to be enough for cloud providers. Exactly when this support will be added to Xeon, Opteron, Power, and ARM chips is not known, and Shuttleworth cannot reveal the roadmaps of Canonical’s hardware partners.

Thanks for the detailed coverage of LXC/LXD. I was hoping for a comparison with the latest SPARC/Solaris containers and virtualization offerings, which would have been interesting. Or, better, a separate article on that topic with similar detail.

Anand… I’d be interested in reading about the SPARC/Solaris containers you mention… Do you have a link?

There are different technologies available in Solaris:

* Solaris containers (aka zones) are built into the OS and don’t require SPARC. They work on Solaris X86 and Solaris SPARC alike. I run 6 zones (virtual hosts) on a $200 HP MicroServer using Solaris. Works like a charm. I’m not sure I would have been able to do the same on Linux, as Linux containers seem to be still in their infancy compared to Solaris.

* LDOMs are a hardware-assisted virtualization technology that relies on features in the SPARC processor, so they do not work on X86.

Sites with large SPARC boxes often first partition their box into LDOMs and then partition each LDOM into zones. If however you run Solaris on X86 gear then you would only use zones.

Containers are so prevalent on Solaris that most Solaris admins would always create an inner container, even for a small X86 box running only a single application. It just gets much easier that way, and the application can then easily be moved to another host if the need arises (by migrating the container). Indeed, on Solaris it is considered bad practice to run ANY application in the outermost zone. I believe the same practice will eventually find its way to Linux once Linux matures in this field.

Are Intel’s Clear Containers good enough for public cloud security?
