Back in 2000, Ian Buck and a small computer graphics team at Stanford University were watching the steady evolution of computer graphics processors for gaming and thinking about how such devices could be extended to fit a wider class of applications.

At first, the connection was not clear, but as GPUs started to become programmable to enable more realistic game graphics, Buck and his team started tweaking the small devices, playing with the relatively small bit of programmability to test the limits of possible performance outside of game graphics.

“At the time, a lot of the GPU development was driven by the need for more realism, which meant programs were being written that could run at every pixel to improve the game,” Buck tells The Next Platform. “These programs were tiny then—four instructions, maybe eight—but they were running on every pixel on the screen; a million pixels, sixty times per second. This was essentially a massively parallel program to try to make beautiful games, but we started by seeing a fit for matrix multiplies and linear algebra within that paradigm.”

The problem, of course, was that this was all intensely difficult to program. For the next few years, Buck and his comrades at Stanford took what little they had to work with and wrote some of the first published research on GPU computing. What propelled them forward, despite the difficulty, was that even at the beginning, the performance results they were getting were outstanding. Further, this was just using the graphics APIs of the time, including DirectX and OpenGL, which meant effectively tricking the GPU into rendering a triangle in a way that performed a matrix multiply. Following that work, they broadened the list of core algorithms that could be accelerated and that were a fit for scientific computing, encountering similar programming challenges but learning some tricks of their own along the way.

Another issue was that their research group was small and was destined to stay that way, since finding researchers with equal experience in computer graphics and, say, molecular dynamics was no small feat. But on they pressed, developing Brook, the precursor to the now ubiquitous parallel programming model, CUDA, which has been developed and championed by NVIDIA. That is where Buck found himself after the company, eager to explore computational opportunities for GPUs, snatched him away from his Stanford research work.

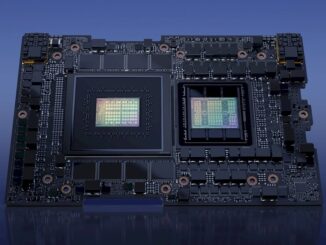

The idea behind Brook, and later CUDA, was to create a programming approach that would resonate with any C programmer but offer the higher-level parallel programming concepts that could be compiled to the GPU. Brook took off in a few scientific computing circles, where interest continued to build after 2004, when Buck took the work to NVIDIA. He recalls managing a small team there, with people on both the hardware and software side trying to create a more robust general computing solution from the still gaming-centric processors. Now, over a decade later, there are “too many to count” hard at work on everything from libraries and programming tweaks to the NVIDIA Tesla series GPU accelerators, the most recent of which is the K80, which has 4,992 CUDA cores across its two GPUs and close to two teraflops of peak double precision floating point performance at its base clock speed.

Buck, now vice president of accelerated computing at the GPU maker, remembers the time before NVIDIA rolled out the core-dense Tesla GPUs, when, all the way back in 2003, the performance tests they were running were getting eye-popping results, outperforming CPUs on a range of benchmarks. But without the ability to program them, without a platform, the work would have stopped dead with the base graphics APIs and research compilers.

“Over the years, both at Stanford then at NVIDIA, we talked to people in a lot of different industries and found that no one wanted to learn a new language. They wanted to stick with what they had, even if it meant leaving performance on the table, just to keep with the existing languages.”

To push things ahead, the work around CUDA centered on taking common languages, like C, C++, and Fortran, and extending them in the most minimal way possible so that code could be compiled for and run on the GPU. Ultimately, for the CUDA team, it meant adding one small language extension to C and Fortran that lets users declare functions to be compiled for and run on the GPU, plus a lightweight way to call those functions.
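As a rough illustration of how small that extension is in CUDA C/C++, the sketch below marks an ordinary function with `__global__` so that it is compiled for the GPU, then calls it with the `<<<blocks, threads>>>` launch syntax. The kernel name `scale`, the helper `scale_on_gpu`, and the block size of 256 are made up for this example, and error checking is omitted for brevity.

```cuda
#include <cuda_runtime.h>
#include <vector>

// __global__ is the language extension: it marks a function that is
// compiled for the GPU and can be launched from CPU code.
__global__ void scale(float *data, float factor, int n)
{
    // Each GPU thread computes its own index and handles one element.
    int i = blockIdx.x * blockDim.x + threadIdx.x;
    if (i < n) {
        data[i] *= factor;
    }
}

// Hypothetical host-side helper (not part of CUDA): copies the input to
// the GPU, launches the kernel, and copies the result back.
std::vector<float> scale_on_gpu(std::vector<float> values, float factor)
{
    int n = static_cast<int>(values.size());
    if (n == 0) {
        return values;
    }
    size_t bytes = n * sizeof(float);

    float *d_data = nullptr;
    cudaMalloc(&d_data, bytes);
    cudaMemcpy(d_data, values.data(), bytes, cudaMemcpyHostToDevice);

    // The "lightweight way to call" a GPU function: enough blocks of
    // 256 threads to cover all n elements.
    int threads = 256;
    int blocks = (n + threads - 1) / threads;
    scale<<<blocks, threads>>>(d_data, factor, n);

    // This copy waits for the kernel to finish before reading the result.
    cudaMemcpy(values.data(), d_data, bytes, cudaMemcpyDeviceToHost);
    cudaFree(d_data);
    return values;
}
```

Everything outside the `__global__` qualifier and the triple-angle-bracket launch is ordinary C++ plus runtime library calls, so calling `scale_on_gpu({1.0f, 2.0f, 3.0f}, 2.0f)` looks like any other function call to the rest of the program.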

At the core of this programmability is a concept familiar to programmers: threading. In theory, the only difference with programming a GPU is that instead of having four, eight, or sixteen threads, the programming model scales to tens of thousands of threads. That might sound like it adds enormous complexity, but as Buck explains, the real key is that the programming model targets the parallel sections of a code, the places where users are iterating over all the data, and lets them be called as one would in any other model while bringing all those threads to bear. At the beginning of CUDA development, Buck says, the goal was to make it so that if a programmer knew C, C++, or Fortran and understood threading, programming a GPU would not be a great challenge. Of course, it is not as simple as that, depending on a user’s code, but with ongoing investments from the kernel and other teams at NVIDIA, the CUDA libraries support an ever-widening array of codes.
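To make the threading idea concrete, here is a minimal sketch that puts a serial CPU loop next to a GPU kernel with the same loop body spread across 65,536 threads using a common pattern known as a grid-stride loop. The function names (`saxpy_cpu`, `saxpy_kernel`, `saxpy_gpu`) and the launch dimensions are assumptions chosen for illustration, the helper assumes `x` and `y` have the same length, and error checking is left out.

```cuda
#include <cuda_runtime.h>
#include <vector>

// Serial CPU version: one thread walks over all the data.
void saxpy_cpu(float a, const std::vector<float> &x, std::vector<float> &y)
{
    for (size_t i = 0; i < x.size(); ++i) {
        y[i] = a * x[i] + y[i];
    }
}

// GPU version: the loop body is unchanged, but the iterations are spread
// across every thread in the launch (a grid-stride loop).
__global__ void saxpy_kernel(float a, const float *x, float *y, int n)
{
    int stride = gridDim.x * blockDim.x;  // total number of threads launched
    for (int i = blockIdx.x * blockDim.x + threadIdx.x; i < n; i += stride) {
        y[i] = a * x[i] + y[i];
    }
}

// Hypothetical helper: runs the kernel with 256 blocks of 256 threads
// (65,536 threads in total), whatever the input size is.
std::vector<float> saxpy_gpu(float a, const std::vector<float> &x,
                             std::vector<float> y)
{
    int n = static_cast<int>(x.size());
    if (n == 0) {
        return y;
    }
    size_t bytes = n * sizeof(float);

    float *d_x = nullptr;
    float *d_y = nullptr;
    cudaMalloc(&d_x, bytes);
    cudaMalloc(&d_y, bytes);
    cudaMemcpy(d_x, x.data(), bytes, cudaMemcpyHostToDevice);
    cudaMemcpy(d_y, y.data(), bytes, cudaMemcpyHostToDevice);

    saxpy_kernel<<<256, 256>>>(a, d_x, d_y, n);

    // Copying back waits for the kernel to finish.
    cudaMemcpy(y.data(), d_y, bytes, cudaMemcpyDeviceToHost);
    cudaFree(d_x);
    cudaFree(d_y);
    return y;
}
```

The point of the comparison is that the parallel section is the loop over the data: the arithmetic inside it is the same in both versions, and only the way iterations are assigned to threads changes.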

“When we started CUDA, we started a library team at the same time, which developed in tandem with our CUDA work,” Buck says. “We’ve expanded that out and have over a dozen libraries we authored and hundreds out there from other places. When we look to new markets, we see if there are libraries and act accordingly, optimizing at the high level as we’re doing with things like the deep learning libraries we’ve been working with most recently.”

A lot of this early work on libraries caught hold in scientific computing. Users of high performance computing systems had some of the most demanding problems code-wise and required ultra-high performance from their calculations, which spurred the CUDA team’s work to get their applications up to GPU speed. Oil and gas, defense, and domain research were among the first users of GPU computing with CUDA, which created a solid feedback loop for NVIDIA teams to continue honing the kernel, libraries, and hardware. As many are aware, this eventually paid off in 2012 with the appearance of the Titan supercomputer at Oak Ridge National Laboratory, which debuted as the most powerful HPC system on the planet with 18,688 NVIDIA Tesla K20X GPU accelerators set against an equal number of AMD Opteron CPUs. The supercomputing set has been quite enamored with GPUs from 2011 until the present, with 52 of the Top 500 supercomputers (as of the June 2015 ranking) using GPUs. Many of those also appear on the companion benchmark, the Green 500, which measures energy-efficient supercomputer performance.

The HPC community, along with other GPU users in the enterprise (database acceleration, deep learning, and so forth), is feeding further development of CUDA. For instance, Buck’s teams at NVIDIA are now digging into the memory management complexities to take advantage of the fact that the new GPUs have their own memory (which, at over 1 TB/sec, has almost an order of magnitude more bandwidth than the memory of an X86 CPU). In the past, developers were forced to move the data manually from the CPU memory to the GPU memory, but CUDA 6 added a software memory management feature that allows data to be moved automatically. Furthermore, recent developments in CUDA allow for unified memory support in the hardware for dynamically moving that data around between processors, which means developers do not have to worry about proactively managing memory unless they want to get their hands dirty for optimization purposes. This is something that will be even more seamless when the future “Pascal” GPU is released, but according to Buck, it shows a real maturity for CUDA (as well as the GPUs themselves) in that performance and capability (not to mention new abstractions for programmers) are being tuned to such a degree.
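The difference between manual copies and automatic data movement can be sketched with `cudaMallocManaged`, the managed-memory allocation call introduced in CUDA 6. In the hedged example below, a single buffer is written by the CPU, updated by the GPU, and read back by the CPU with no explicit `cudaMemcpy`. The kernel `add_one` and the helper `sum_after_increment` are invented for illustration, and error checking is omitted.

```cuda
#include <cuda_runtime.h>

// Each GPU thread increments one element of the buffer.
__global__ void add_one(int *data, int n)
{
    int i = blockIdx.x * blockDim.x + threadIdx.x;
    if (i < n) {
        data[i] += 1;
    }
}

// Hypothetical helper: fills a managed buffer with 0..n-1 on the CPU,
// increments every element on the GPU, then sums the result on the CPU.
long long sum_after_increment(int n)
{
    if (n <= 0) {
        return 0;
    }

    int *data = nullptr;
    // One allocation that both the CPU and the GPU can address; the
    // runtime moves the data between them as needed.
    cudaMallocManaged(&data, n * sizeof(int));

    for (int i = 0; i < n; ++i) {
        data[i] = i;                        // written by the CPU
    }

    int threads = 256;
    int blocks = (n + threads - 1) / threads;
    add_one<<<blocks, threads>>>(data, n);  // updated by the GPU
    cudaDeviceSynchronize();                // wait before the CPU reads again

    long long sum = 0;
    for (int i = 0; i < n; ++i) {
        sum += data[i];                     // read by the CPU, no cudaMemcpy
    }

    cudaFree(data);
    return sum;
}
```

The explicit `cudaDeviceSynchronize()` is still needed so the CPU does not read the buffer while the kernel is running; what disappears is the bookkeeping of separate host and device pointers and the copies between them.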

“In HPC in particular, these are experts at taking complex partial differential equations and expressing them in Fortran, but less familiar with how to parallelize those algorithms.” Accordingly, Buck and his colleagues expanded the concept of directives. Directives are already popular with OpenMP, and a similar approach for GPUs, one that lets users get the performance of a GPU without explicitly parallelizing code as they would with CUDA (or in cases where code doesn’t map to a library), became a more recent priority in the evolution of GPU programming. The resulting work in OpenACC, which was done in part at NVIDIA, takes the directive-style approach, where users declare where their parallelism and performance opportunities lie and let the runtime system automatically grab that code and move it to the GPU.

“You always get the best results doing it with CUDA, but for the users who want to focus on the results and far less on the implementation, in other words, where productivity is the main goal, OpenACC can provide hints in the code and let the compiler shoulder the burden.” Just as Buck began his career by opening up the GPU to a wider world, the evolution of OpenACC now offers another way to exploit GPU performance. Buck says there are currently upwards of 8,000 domain scientists using OpenACC and that it has been a complementary effort.

As Buck notes, none of this would have been possible without a programming model that domain scientists across the GPU landscape could make use of—and find easy enough to deploy against complex scientific applications. “Scientists first, programmers a very distant second,” Buck emphasized several times. “That was the goal at the beginning and that has not changed.”

Will Pascal have more in-hardware asynchronous compute ability than AMD’s GCN “1.2” generation of GPU micro-architecture? I expect that the Khronos Group’s Vulkan will make for a better software model, one that can be developed by the entire market, relative to CUDA’s proprietary software ecosystem. The lack of full hardware-based thread context switching in Nvidia’s GPU hardware will result in less efficient usage, with vector and other units not being utilized as efficiently for lack of faster in-hardware dispatch/context mechanisms. The amount of academic representation at the HSA Foundation, as well as the corporate membership, points in the direction of a more open software ecosystem focused on sharing development resources, the Khronos Group included, for an open-standards software ecosystem and GPU compute. It’s interesting how similar the Khronos Group’s SPIR-V IL code is in reach to the HSA Foundation’s HSAIL. Will CUDA be able to remain relevant with the market beginning to move towards open-standards-based compute APIs for CPU/GPU number crunching?

One missing piece in your article on the evolution of GPGPU computing is the introduction of ATI’s Close to Metal API for GPGPU. It was introduced the year before CUDA.

https://en.wikipedia.org/wiki/Close_to_Metal

The comments about the competing AMD/ATI (or other) technology are interesting, thank you for them. But has anyone actually *implemented* any supercomputers of note with them yet? As this article states, nVidia (and before them Buck on his own at Stanford) has been putting a lot of resources into building libraries, etc., for a decade and a half, and there are specific examples of the solution being used today, most notably in the machines ranked #1 and #2 in the TOP500. I could not find any reference to an ATI solution being used in practice anywhere, although the link you give shows that they have been working on it for at least a decade and that it predates CUDA. Though I may have missed it? An alternate possibility would seem to be that AMD has invested in it less (currently nVidia’s overall R&D budget dwarfs AMD’s).

AMD GPUs do have a modest presence in large-scale HPC: see the green computing list

http://www.green500.org/lists/green201506, with number 4 powered by AMD GPUs, as well as two more systems further down the list.