Despite the woes heaped onto investors in the past couple of weeks, the future is still out there, waiting to be created. And that creation takes funding, and keen eyes or plain old luck – and maybe a little bit of both – to make the right bets on the technologies that will make it in the future and indeed comprise that future.

Quantum computing is, for many, a given for solving certain kinds of problems, and it is going to take a significant amount of funding to turn the ideas embodied in quantum computing into working machines. That was the consensus of the researchers who spoke recently about quantum computing at the ISC 2015 supercomputing conference in Germany, who had varying opinions about the right approach to building quantum computers and the time it would take to get a machine of sufficient size to solve real problems.

Google has acquired a quantum machine from upstart D-Wave and has been playing around with it to see what kinds of problems – particularly search indexing problems – it might be better at solving than conventional binary machines. D-Wave raised $23.1 million in January from unknown investors, and has received a total of $139 million in funding from a variety of investors, including investment bank Goldman Sachs, In-Q-Tel (the investment arm of the US Central Intelligence Agency), Bezos Expeditions (the investment arm of Amazon.com founder Jeff Bezos), BDC Capital, Harris & Harris Group, and DFJ. D-Wave has recently shipped a quantum machine that sports 1,000 quantum bits, or qubits, and is arguably on the bleeding edge of quantum computing. (There are those who say D-Wave machines are not true quantum computers, but we are setting aside that argument for the moment.)

Vadim Smelyanskiy, the Google scholar working on quantum computing for the search engine giant, presented a sobering view of the scale of the quantum computer that would be necessary to solve really tough problems, in this case implementing Grover’s algorithm, which would be useful in speeding up search indexing. Building a quantum machine with 5.9 billion qubits – yes, that is billion with a b – would provide a factor of 34 million speedup in running the algorithm compared to a conventional binary CPU architecture. You would also need a conventional supercomputer to feed this quantum machine data, and the whole shebang might cost on the order of $2 billion. This, as we pointed out, is considerably more than the $1 billion that IBM put together to fund its massively parallel BlueGene supercomputer effort starting in 1999, an effort that probably paid for itself over the long run.
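For readers who want a feel for where a Grover speedup comes from, here is a minimal Python sketch that compares query counts only. It assumes an idealized, error-free search for a single marked item among N candidates, and it does not attempt to reproduce the qubit count, speedup factor, or cost quoted above, which depend on hardware details not given here. The function names are ours, chosen for illustration.

```python
import math


def classical_expected_queries(n_items: int) -> float:
    """Expected lookups to find one marked item by checking items one by one."""
    return (n_items + 1) / 2


def grover_iterations(n_items: int) -> int:
    """Textbook iteration count for one marked item: floor(pi/4 * sqrt(N))."""
    return max(1, math.floor(math.pi / 4 * math.sqrt(n_items)))


def grover_success_probability(n_items: int, iterations: int) -> float:
    """Ideal success probability after the given number of Grover iterations."""
    theta = math.asin(1 / math.sqrt(n_items))
    return math.sin((2 * iterations + 1) * theta) ** 2


if __name__ == "__main__":
    for exponent in (6, 12, 18):
        n = 10 ** exponent
        k = grover_iterations(n)
        ratio = classical_expected_queries(n) / k
        print(f"N = 1e{exponent}: about {k} Grover iterations, "
              f"query-count ratio about {ratio:.3g}")
```

The ratio grows roughly with the square root of N, which is why the advantage only becomes dramatic for very large search spaces, and why the overheads of a real machine matter so much.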

Another hotbed of quantum computing is QuTech, which is located at Delft University of Technology in the Netherlands, where Lieven Vandersypen heads up research efforts. Vandersypen was blunt about the steep curve quantum computing has to climb to go from curiosity to useful tool.

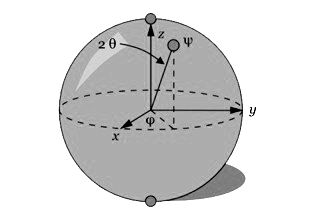

“What we are after, in the end, is a machine with many millions of qubits – say 100 million qubits – and where we are now with this circuit model, where we really need to control, very precisely and accurately, every qubit by itself with its mess of quantum entangled states, is at the level of 5 to 10 quantum bits,” Vandersypen explained. “So it is still very far away.”

But it just got a little bit closer, because binary chip juggernaut Intel has just ponied up $50 million to support research at Delft University of Technology over the next ten years.

This may seem like a strange thing for Intel to do, but as we pointed out back in July, a quantum computer will not stand in isolation, but will require a very large and very conventional parallel supercomputer to do error detection and correction on the qubits. And Intel, as a key player in computing, has to hedge its bets outside of traditional logic devices.

Under the collaboration agreement, Intel will put engineers to work on quantum computing at QuTech and at its own facilities to coordinate with Vandersypen and his team. Intel is specifically going to help with its manufacturing, electronics, and architectural expertise as QuTech tries to take the collection of electronics used to control and measure qubits – which includes waveform generators, cryo-amplifiers, FPGAs, and other gear – and shrink it. This will take semiconductor manufacturing and packaging expertise, which Intel can supply.

To highlight the investment, Intel CEO Brian Krzanich put out a statement outlining his views on quantum computing, pointing out that the future of computing is not easy to see, even if you have some good stars to steer by.

“Fifty years ago, when Gordon Moore first published his famous paper, postulating that the number of transistors on a chip would double every year (later amending to every two years), nobody thought we’d ever be putting more than 8 billion of them on a single piece of silicon,” Krzanich explained. “This would have been unimaginable in 1965, and yet, 50 years later, we at Intel do this every day.”

Krzanich pointed out that research into quantum computing has been going on for over 30 years and that Intel sees it as one of the more promising areas it has been exploring with “some of the smartest engineers in the world,” adding that Intel believes “it has the potential to augment the capabilities of tomorrow’s high performance computers.”

We agree with this assessment and think of quantum computers, when they become more economically viable, as a coprocessor of sorts for conventional systems. And in Intel’s case, probably something with a Xeon brand on it in some fashion. Xeon Q has a nice ring to it.

But we are a long way off from that day. Just as we think that investment in high performance computing has been insufficient to deliver exascale systems that won’t break the system and electricity budgets, we also do not think that the level of investment in quantum computing – tens of millions, splashed around here and there – is sufficient for the very big manufacturing challenges that Intel is helping QuTech take on. Quantum Wave Fund is also raising up to $100 million to invest in startups related to quantum computing, and this will help, too. The good news is that QuTech is getting assistance, and from a company that does have the skills to help move quantum computing along on the semiconductor front. Hopefully others will follow suit.

I think that money would be better spent investigating reversible computing architectures instead. Quantum computing is cool in theory but only applies to a limited subset of problems, while a reversible computing architecture would solve the energy and heat dissipation problems of traditional irreversible computing and would allow for an insane amount of compute power.

Well, the money would be better spent on investigating security in communications, and quantum will be part of the future. The train has already left the station.

But we will see some bad apples fall off along the way. Today there are a variety of quantum systems being developed with different architectures, and whether more investment goes into certain specific systems depends on who participates. Another aspect is the clean medium and whether it functions as quantum or not, without more, as in possessing one (quantum as “atomic bomb”). Quantum computing in theory has many branches, and is not just based on an expensive electron gun as a random generator that needs surrounding systems to find out if the answer is Yes, No, Maybe, or both (hmm), and beyond that requires a correction program to compensate for all the errors from interference. Quantum encryption has been around for many years in different parts of the world, with more or less successful attempts. But to stop the development because someone does not understand it is to go back and reinvent the wheel for the limited subset of problems: “Binary vs. Qubit,” 256 values to 65,536 values (D-Wave).

Looking to invest in quantum computing