Now that the on-again, off-again deal between Intel, the world’s largest maker of processors, and Altera, one of the dominant makers of field programmable gate arrays, is going to happen for the tidy sum of $16.7 billion in cash, Intel is poised to usher in a new era of computing while at the same time countering the many competitive threats it has in the datacenter.

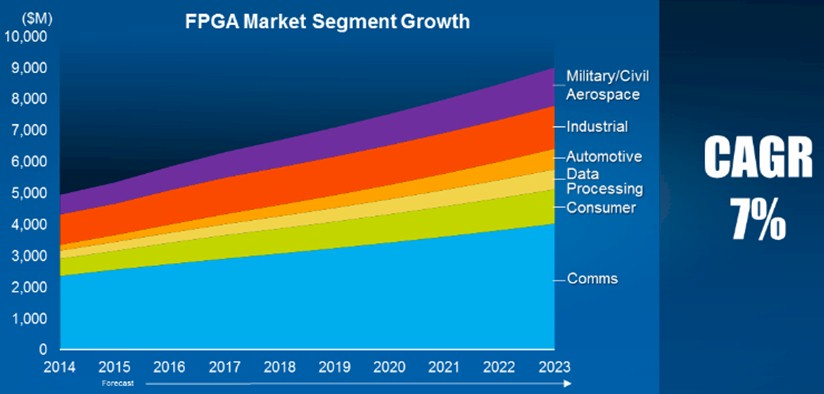

Programmable logic devices like FPGAs are not new in the datacenter, as we explained back in March in our detailed analysis when rumors of a possible acquisition of Altera by Intel first surfaced. FPGAs have been important elements of many networking and storage systems where the volumes are not high enough to justify the creation and etching of a full-blown ASIC, and there have been decades of experimentation and niche use of FPGAs as either compute engines or as coprocessors for general purpose CPUs, particularly in the financial services and oil and gas industries. The obvious question is why Intel, which has fought so hard to bring a certain level of homogeneity to the datacenter, is now willing to spend so much money to acquire a company that makes FPGAs as well as hybrid ARM-FPGA devices. What is it that Altera has that Intel thinks it needs, and needs so badly, to make the largest acquisition in its history?

The short answer is a lot of things, and with what Altera brings to the table Intel is hedging its bets on the future of computing in the datacenter – meaning literally serving, storage, and switching – and perhaps in all manner of client devices, too. The Next Platform doesn’t care much about what happens on the clients except that they are the things banging against the datacenter, driving up usage.

The first hedge that Intel is making with the Altera acquisition is that a certain portion of the compute environment that it more or less owns in the datacenter will shift from CPUs to FPGAs.

In the conference call announcing the deal for Altera, Intel CEO Brian Krzanich said that up to a third of cloud service providers – what we here at The Next Platform call hyperscalers – could be using hybrid CPU-FPGA server nodes for their workloads by 2020. (This is an Intel estimate.) Intel’s plan is to get a Xeon processor and an Altera FPGA on the same chip package by the end of 2016 – Data Center Group general manager Diane Bryant showed off a prototype of such a device in early 2014 – and ramp its production through 2017, with a follow-on product that actually puts the CPU and the FPGA circuits on the same die in monolithic fashion “shortly after that.”

Intel plans to create a hybrid Atom-FPGA product aimed at the so-called Internet of Things telemetry market, and this will be a monolithic design as well, according to Krzanich; the company is right now examining whether it needs an interim product in which the Atom and the FPGA share a single package but are not etched on a single die.

Neither Krzanich nor Intel CFO Stacy Smith talked about how much of the datacenter workload they expected to see shift from CPUs to FPGAs over the coming years, but clearly Intel believes the software stack for programming FPGAs is now mature enough that such a shift will inevitably happen. Rather than watch those compute cycles go to some other company, Intel is positioning itself to capture that revenue. Presumably the revenue opportunity is much larger than the current revenue stream from Altera, which had $1.9 billion in sales last year. (Intel's own Data Center Group had $14.4 billion in sales, by comparison.)

As we have said in numerous articles in the past several months, adoption of FPGAs to accelerate workloads in the enterprise and to goose the performance of HPC simulations seems to be on the rise, and we think that is happening for a number of reasons. First, the software stacks for programming FPGAs have evolved, and Altera in particular has been instrumental in grafting support for FPGAs onto the OpenCL development environment that was originally created to program GPUs and then was tweaked to allow it to turn GPUs into offload engines for CPUs. (Not everyone is a big fan of OpenCL. Nvidia has created its own CUDA parallel programming environment for its Tesla GPU accelerators, and just last week SRC Computers, a company that has been delivering hybrid CPU-FPGA systems in the defense and intelligence industries since 2002, launched into the commercial market with its own Carte programming environment, which turns C and Fortran programs into the FPGA's hardware description language (HDL) automatically.)

The other factor driving FPGA adoption is that getting more performance out of a multicore CPU is getting harder and harder as the gains from each chip manufacturing process shrink get smaller. Performance jumps are being made, but mostly in the aggregate throughput of CPUs, not in the performance of a single CPU core. (There are some hard-fought architectural enhancements, we know.) But both FPGA and GPU accelerators offer a more compelling improvement in performance per watt. Hybrid CPU-FPGA and CPU-GPU systems can offer similar performance and performance per watt on deep learning algorithms, at least according to tests that Microsoft has run. The GPUs run hotter, but they do roughly proportionally more work and, at the system level, offer similar performance per watt.

That increase in performance per watt is why the world’s most powerful supercomputers moved to parallel clusters in the late 1990s and why they tend to be hybrid machines right now, although Intel’s massively parallel “Knights Landing” Xeon Phi processors are going to muscle in next to CPU-GPU hybrids. With Altera FPGA coprocessors and Knights Landing Xeon Phi processors, Intel can hold its own against the competition at the high end, which is shaping up to be the OpenPower collective that brings together IBM Power processors, Nvidia Tesla coprocessors, and Mellanox Technologies InfiniBand networking.

The chart we did not see as part of the announcement of the Altera deal is what Intel's datacenter business looks like if it does not buy Altera. Intel, as you might expect, wants to focus on how Altera expands its addressable market, which it no doubt does. But there seems little doubt that Intel expected FPGAs to take a big bite out of its Xeon processor line, too, even if the company doesn't want to talk about that explicitly. (It is perhaps significant that in the image Intel is using for the Altera acquisition, which is the featured image above, the company is showing generic racks where you can't really tell what is inside.)

Krzanich said that the future monolithic Xeon-Stratix part would offer more than 2X the performance of the on-package hybrid CPU-FPGA parts it would start shipping in 2016, but what he did not say is that customers using FPGAs today can see 10X to 100X speedups on their workloads compared to running them on CPUs. Every one of those FPGAs represents a lot of Xeons that will not get sold, just as is the case in hybrid CPU-GPU machines. (In the largest parallel hybrid supercomputers, there is generally one CPU per GPU, so you might think you only lose half the CPUs, but the GPUs account for 90 percent of the raw compute capacity, so a CPU-only machine of equivalent performance would really need 10X as many CPUs.) And exacerbating this compression of compute capacity is the fact that Intel will tie the FPGA to the Xeon and make the combination even faster and more efficient in performance per watt.
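The parenthetical arithmetic about CPU-GPU hybrids can be checked in a few lines of Python. This is a minimal sketch that assumes, as the text does, that every CPU contributes the same raw compute and that the accelerators supply 90 percent of the hybrid's total; the function name `cpu_only_equivalent` and the 1,000-CPU machine are hypothetical, chosen only for illustration.

```python
def cpu_only_equivalent(hybrid_cpus: int, accel_percent: int) -> float:
    """CPUs a CPU-only machine needs to match a hybrid's raw compute.

    Hypothetical helper. Assumes every CPU contributes equal raw compute
    and that accelerators supply accel_percent of the hybrid's total.
    """
    cpu_percent = 100 - accel_percent
    if cpu_percent <= 0:
        raise ValueError("CPUs must supply some share of the compute")
    # hybrid_cpus supply only cpu_percent of the total, so matching the
    # full total takes hybrid_cpus * 100 / cpu_percent CPUs.
    return hybrid_cpus * 100 / cpu_percent


if __name__ == "__main__":
    # Hypothetical machine: 1,000 CPUs paired one-to-one with 1,000 GPUs,
    # with the GPUs providing 90 percent of raw compute.
    cpus = 1_000
    needed = cpu_only_equivalent(cpus, 90)
    print(f"CPU-only equivalent: {needed:,.0f} CPUs ({needed / cpus:.0f}X)")
```

With the CPUs supplying only 10 percent of the compute, the result is 10X the hybrid's CPU count rather than the 2X a naive one-CPU-per-GPU count would suggest.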

Intel has to believe that the workloads in the hyperscale, cloud, enterprise, and HPC markets will grow fast enough to let it still ramp its compute business, or that it has no choice but to be the seller of FPGAs or else someone else will be, or perhaps a little of both. But Intel is not talking about it this way.

“We do not consider this a defensive play or more,” Krzanich said on the call. “We look at this, in terms of both IoT and the datacenter, as expansive. These are products that our customers want built. We have said that 30 percent of the cloud workloads will be on these products as we exit this decade, and that is an estimate on our part on how we see trends moving and where we see the market going. This is about providing the capability to move those workloads down into the silicon, which is going to happen one way or another. We believe that it is best done with the Xeon processor-FPGA combination, which will clearly have the best performance and cost for the industry. In IoT, it is about expanding into new available markets against ASICs and ASSPs, and with datacenter moving those workloads down into silicon and enabling the growth of the cloud overall.”

The other hedge is that Intel will be able to bring a certain level of customization to its Xeon and Atom product lines without having to provide customizations in the Xeon and Atom chips as it has been doing for the past several years to keep cloud builders, hyperscalers, and integrated system manufacturers happy. Rather than doing custom variants of the chips – in some cases, Intel has as many custom SKUs of a Xeon chip as it has in the standard product line – for each customer, Intel will no doubt suggest that custom instructions and the code that uses them can run in the FPGA half of a Xeon-Stratix hybrid. In some cases, those getting custom parts from Intel are designing chunks of the chip themselves, or they have third parties doing it, according to Krzanich.

"This does give them a playground," Krzanich explained. "You can think of an FPGA as a large sea of gates that they can program now. And so if they think that their algorithm will change over time as they learn and get smarter, or if they want to get to be more efficient and they don't have enough volume to have a single workload on a single piece of silicon then they can use an FPGA as accelerators of multiple segments – doing facial search at the same time as doing encryption – and we can basically on-the-fly reprogram this FPGA, literally within microseconds. That gives them a much lower cost and a much greater level of flexibility than a single customized part that you would need to have quite a bit of scale to need."

The final hedge that Intel is making is that it can learn a bit about making system-on-chip designs and can, if necessary, quickly ramp up an ARM processor business if and when 64-bit ARM chips from Broadcom (soon to be part of Avago Technologies), Cavium Networks, Qualcomm, AMD, and Applied Micro take off in the datacenter. In its press release announcing the Altera deal, Intel said that it would operate Altera as a separate business unit and that it would continue to support and develop Altera's ARM-based system-on-chip products; on the call, Krzanich said that Intel was not interested in moving Altera's existing Stratix FPGAs and Arria ARM-FPGA hybrids to its fabs and was happy to leave them at Taiwan Semiconductor Manufacturing Corp.

We will be doing some more analysis of the implications of this Altera deal for future systems and their applications. Stay tuned. And don’t be surprised if Avago, which is in the process of acquiring Broadcom for a stunning $37 billion, starts looking real hard at FPGA maker Xilinx.
