The accelerator story for top supercomputers is a strong one, starting with GPUs, which were snapped in as coprocessors on some of the world’s largest systems over the last five years. Since then, other accelerator options, including the Xeon Phi (and next generation Knights Landing coming soon) have emerged, which aim to provide a more programmable interface to accelerate HPC applications.

There are certainly other accelerators on the market, often targeted at specific use cases, but one such engine that has not found its high performance computing footing is the FPGA. There are reasons for this, which we will get to in a moment, but on the flip side, some experimental work in traditional HPC applications using programmable gates, coupled with on-chip and programmatic enhancements, could make FPGAs more attractive for HPC. As we noted yesterday in an analysis of the FPGA growth path, one of the major barriers for supercomputing or general enterprise datacenters is simply that these devices are difficult to program. We described how that is changing with some recent momentum around moving FPGAs closer to procedural languages and OpenCL, but it is still a long road.

The good news for HPC, especially in research and academic environments, is that there are plenty of graduate students who can spend the time needed to specialize in FPGA development and programming. Even so, that is not enough to make these devices ready for the next generation of supercomputers, even with momentum from vendors like IBM, which through its CAPI interface and shared memory is bringing FPGAs and other devices closer to the compute and, eventually, making them more integrated programmatically.

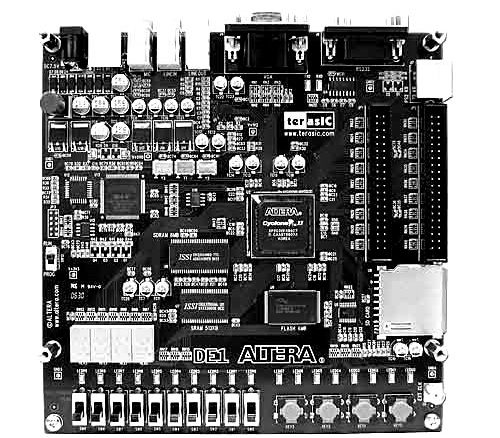

Unlike some enterprise workloads, HPC is historically floating point intensive, which means that these applications are not a good fit for the FPGA, at least until vendors can snap in dedicated floating point units, something that Altera has publicly said it is working on for future generations (and Xilinx will likely follow suit). It is not impossible to get reasonable floating point performance off FPGAs now, but it takes a lot of gates and is woefully inefficient. GPUs, the dominant accelerator in HPC, are by contrast stuffed with floating point units and, coupled with their rich CUDA (and OpenCL) ecosystems, are still the simpler choice for acceleration.

Even so, FPGAs do offer the fine grained parallelism and low power consumption of other accelerators, with extreme configurability added in. But beyond the floating point limitations to date and the difficult programming environment, there is another big limitation, albeit one that will be overcome soon enough: for these applications, FPGAs are limited by the internal memory on the chip. The bandwidth might be great, but without enough memory capacity, that bandwidth is of limited use.

Wim Vanderbauwhede at the University of Glasgow’s School of Computing Science has been working with FPGAs for well over a decade and has turned his research to key HPC applications and how well they map to FPGAs. In a chat with The Next Platform, he said that for things like search and work on large graphs, the FPGA is well suited (although in the latter case, memory limitations are still an issue).

There is already a range of high performance computing applications that can be run on the FPGA for a sizable boost, presuming the code legwork can be done. According to Vanderbauwhede (who, incidentally, wrote the book on high performance computing on FPGAs), if code has already been optimized for other accelerators, including GPUs, much of the heavy lifting has already been done. In his team’s work on FPGAs for a select set of HPC applications, it took about one month for a full time person to prepare code to run at high performance and efficiency on FPGAs. That effort is worth the boost in areas like financial services, where stock option pricing and Black-Scholes models really let the FPGA shine. In this example, along with others that are multi-kernel and deal with relatively small datasets (including molecular dynamics and key biomedical applications), FPGAs can perform well. There are also other areas that offer future opportunity and are worth picking through now, including weather modeling, an interesting target since it is straight number crunching and memory hungry.
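To give a sense of why option pricing is the kind of kernel that suits an accelerator, here is a minimal sketch of the textbook Black-Scholes price for a European call, written in plain Python. It is not code from Vanderbauwhede's team, and the function and parameter names (`black_scholes_call`, `price_batch`, `spot`, `strike` and so on) are chosen here for illustration only. Each option is priced independently from a handful of inputs, so a batch is a small, embarrassingly parallel dataset.

```python
import math


def norm_cdf(x):
    """Standard normal cumulative distribution, via the error function."""
    return 0.5 * (1.0 + math.erf(x / math.sqrt(2.0)))


def black_scholes_call(spot, strike, rate, volatility, years):
    """Textbook Black-Scholes price of a European call option.

    spot and strike are prices, rate is the continuously compounded
    risk-free rate, volatility is annualized, years is time to expiry.
    """
    if min(spot, strike, volatility, years) <= 0:
        raise ValueError("spot, strike, volatility and years must be positive")
    sqrt_t = math.sqrt(years)
    d1 = (math.log(spot / strike)
          + (rate + 0.5 * volatility ** 2) * years) / (volatility * sqrt_t)
    d2 = d1 - volatility * sqrt_t
    return spot * norm_cdf(d1) - strike * math.exp(-rate * years) * norm_cdf(d2)


def price_batch(options):
    """Price a list of (spot, strike, rate, volatility, years) tuples.

    No option depends on any other, which is the property that lets an
    accelerator process many of them in parallel.
    """
    return [black_scholes_call(*opt) for opt in options]
```

The loop in `price_batch` has no dependencies between iterations, which is the structure a pipelined or parallel implementation would exploit; how such a pipeline is actually laid out on an FPGA is outside what this sketch shows.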

“I’ve been watching the evolution of FPGAs since 2000, but it’s really just in the last five years that they’ve become useful for HPC. Five years ago, GPUs were in experimental clusters and testbeds and now you’ll see them dominating in the Green 500 and in other big supercomputers. It is not impossible that we will see the same thing with FPGAs at some point as well.”

More recently, Vanderbauwhede and his team have taken a traditional HPC application in weather modeling to look for speedups with FPGA acceleration. While they have had success performance-wise, he says that for these centers and other large supercomputing sites, it’s far more a matter of performance per watt. This is where FPGAs will find a fit in HPC while the memory and programming environments catch up.

“There is use for some HPC applications like weather modeling as long as you are able to use the full parallelism of the device. What we have done shows it is just as power efficient as a multicore system and far more efficient than any original single-core code they used to run. But of course, memory is still an issue.”

Vanderbauwhede says that the problem with FPGAs for weather simulations and a select set of other HPC applications is that there is always a limitation in the number of gates one has to work with. That means that if you can split the program up and reconfigure the FPGA to do different parts of it at different times, and the reconfiguration is fast enough, the cost of swapping the configuration is offset, assuming, of course, there is enough data to work with. “So in the weather example, the time spent computing a volume of atmosphere will be larger than the time it takes to reconfigure the device. That’s exciting because until recently, even though they’re called reconfigurable devices, a lot of that didn’t happen on the fly.”
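The trade-off described above can be put as simple arithmetic. The sketch below assumes a deliberately simplified model that is not from Vanderbauwhede's work: each compute phase is followed by exactly one reconfiguration, and the two do not overlap. The function names and the example timings are hypothetical.

```python
def reconfig_overhead_fraction(compute_s, reconfig_s):
    """Fraction of wall time spent reconfiguring.

    Assumes one reconfiguration per compute phase with no overlap
    between the two (a simplification made for this sketch).
    """
    if compute_s < 0 or reconfig_s < 0:
        raise ValueError("times must be non-negative")
    total = compute_s + reconfig_s
    return reconfig_s / total if total > 0 else 0.0


def min_compute_for_overhead(reconfig_s, max_overhead):
    """Shortest compute phase that keeps overhead at or below max_overhead.

    Derived from reconfig / (compute + reconfig) <= max_overhead.
    """
    if not 0 < max_overhead < 1:
        raise ValueError("max_overhead must be between 0 and 1")
    return reconfig_s * (1.0 - max_overhead) / max_overhead


# Hypothetical numbers: a 0.1 s reconfiguration and a 5% overhead budget
# imply each compute phase must run for at least 1.9 s.
needed = min_compute_for_overhead(0.1, 0.05)
```

Under this model, the more data each phase has to chew through, the longer the compute phase and the smaller the share of time lost to swapping configurations, which is the point made in the quote about a volume of atmosphere.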

In terms of future directions for FPGAs in HPC, Vanderbauwhede agrees that once they are more tightly integrated and can share memory it would be a step change. “If you have an FPGA in the socket where normally a processor would sit so that it has access to the front side bus, that is a game changer. At the moment, the big problem is that it’s a PCIe offload model. There’s so much data that needs shifted back and forth, so getting around that will likely open FPGAs for more users.”
