Around this time last year, EMC announced the acquisition of DSSD, a storage acceleration startup founded by a slew of ex-Sun leads, including Andy Bechtolsheim (one of the original Sun founders) and Jeff Bonwick (co-creator of Sun’s ZFS file system), and led by CEO Bill Moore (former head of storage at Sun).

EMC was an early investor in DSSD’s approach to rack-scale flash technology. While it has made public that it expects to roll out key products around in-memory databases, HPC, and of course, streaming analytics throughout the year, little is available about how the shared storage concept, based on NVMe, might look. With the announcement of a new supercomputing site set to run data-intensive workloads that sit just outside the traditional high performance computing sphere, The Next Platform was able to get a firsthand sense of how the DSSD flash might boost I/O for a range of EMC’s targeted areas, including HPC.

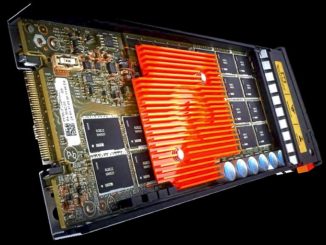

EMC and DSSD have not shared much about how the offering works, aside from a general description of how it can provide the benefits of direct-attached flash (high performance, low deterministic latency) via a host connection through PCIe 3.0. In essence, the chassis is disaggregated from the compute nodes, which in theory provides all the benefits of shared storage (no more stranded storage, no need to shuffle data around) as well as a much bigger pool of flash. As Matt McDonough, product manager for DSSD, told The Next Platform, that leads to higher performance and better reliability and scalability, since the flash sits in a chassis and is not limited to what an individual PCIe flash card might be able to provide. This also means management and serviceability are joined under a single banner, which he said targets operational efficiency, another side of the large-scale storage puzzle that is critical for larger sites doing data-intensive work, including the Texas Advanced Computing Center (TACC).

TACC, along with system partners at Indiana University and the University of Chicago, is getting closer to putting the long-awaited Wrangler supercomputer into production. Wrangler is one of the first systems to publicly use the DSSD technology (McDonough says there are a handful of other early users in a cross-section of industries). The system, which is focused on non-traditional, data-intensive workloads (versus the MPI-driven, standard high performance computing jobs that TACC’s other, larger systems have historically run), has already had some success proving out the DSSD concept across a number of user applications and research areas, including some notable speedups on MapReduce/Hadoop and Postgres workloads.

As McDonough told us, “This early work with TACC is helping us drive a lot of these new complex applications to work in parallel on a single working dataset instead of consuming significant compute and network resources to share and copy data into smaller working sets.”

Dan Stanzione, TACC director, echoed this, noting that his team could have just as easily bought conventional PCI- or SAS-attached SSDs, stuck those in a server and built a file server that way, but such an approach comes with two big bottlenecks that are removed when taking the DSSD route. First, there’s the actual disk interface to consider, which treats flash like disk, meaning users have to take the protocol overhead just as if a spinning disk drive were being accessed. With the DSSD array, the flash is available as an object store, which means there’s no need to go through the normal disk protocol to get to it.

The second barrier with that traditional approach, Stanzione says, is that no matter how many SSDs you put in a server, anything else that talks to them still has to come through the network. Whether Ethernet or InfiniBand, you are still left with a lot of storage servers and many network attachments, which is far less efficient and lower performing than having what amounts to a big box of flash with a bunch of PCIe connections, where one can connect up to 96 servers to a single shared flash space.

“The point is, we are never going through the network protocol and copying data across the network to access disk. We have everything locally attached and shared via PCI, so we don’t have to slow down the flash by treating it like a disk drive.”

With 96 connections, one might imagine that some overhead could come with additional scale. We asked Stanzione about their experiences scaling to multiple boxes of DSSD’s flash, which he said moved in time with their requirements. Wrangler has a total of 112 nodes at TACC and 24 nodes at Indiana University, and although DSSD and TACC would not disclose the exact configuration of the DSSD piece of their infrastructure, Stanzione agreed that while there are limits to how many servers can physically connect to a D5, it’s possible to replicate blocks of those (sets of D5s hooked to multiple sets of servers). “Our D5s are not the largest that are available, but you can just add flash and scale that way.” With the introduction of the DSSD boxes, he says he can see how TACC could build out enough to keep a petabyte of data on a D5 and coherent across nodes without any scaling overhead, although beyond that he would think about building multiple sets of them.

DSSD declined to share any early performance testing results or details, but Stanzione was able to talk about how they are getting some rather impressive results out of a few key workloads that are similar to the types users in scientific realms brought to traditional supercomputers, only to find that their problems didn’t mesh well with the compute-driven architectures of those machines, which do well on simulations but don’t have what is required for data-intensive applications.

For instance, one biology application presented a problem in a Postgres database, with almost all of the computation in the SQL queries. “For workflows like this, the things we were running on our traditional database machines were taking four to five days to run and some of the bigger datasets just caused the application to die and never finish. On Wrangler, most of the workflows for this biology group were finishing in four to six hours.” Stanzione says they have step-by-step micro-benchmarking for certain parts of that workflow, and on these the team was seeing performance 40-50x faster than with traditional disk-based database systems.
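As a rough check on those figures, the end-to-end speedup implied by the quoted run times can be worked out directly. The sketch below assumes that “four to five days” and “four to six hours” are wall-clock ranges for comparable runs, which the article does not confirm; the function name is purely illustrative.

```python
HOURS_PER_DAY = 24

def speedup_range(old_hours, new_hours):
    """Return (lowest, highest) speedup given (min, max) run times in hours."""
    old_lo, old_hi = old_hours
    new_lo, new_hi = new_hours
    # Lowest speedup: shortest old run compared with the longest new run.
    # Highest speedup: longest old run compared with the shortest new run.
    return old_lo / new_hi, old_hi / new_lo

# Quoted figures: four to five days before, four to six hours on Wrangler.
old = (4 * HOURS_PER_DAY, 5 * HOURS_PER_DAY)
new = (4, 6)

low, high = speedup_range(old, new)
print(f"End-to-end speedup: {low:.0f}x to {high:.0f}x")  # 16x to 30x
```

Under that assumption the whole workflow runs roughly 16x to 30x faster, which is lower than the 40-50x micro-benchmark figure. That would be consistent with the micro-benchmarks covering only certain parts of the workflow, though the article does not break the numbers down further.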

TACC has also been experimenting with DSSD and Hadoop-based workloads like the Crossbow workflow, comparing the results against its traditional Hadoop systems, which have several disks on each compute node and a high-speed interconnect between the nodes. “Out of the box, using a pre-production release of the software that operates the D5s, we were seeing a 7-8x performance improvement. That is more of a mix of some I/O and computation; the I/O sped up a lot, the computation not so much, but we are seeing a huge performance difference,” according to Stanzione.
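Stanzione’s remark that the I/O sped up a lot while the computation did not is the pattern Amdahl’s law describes: the overall gain is capped by the part of the job that is not accelerated. The sketch below is only an illustration. The I/O fractions and the 20x I/O speedup are hypothetical values chosen for the example, not figures from TACC or DSSD.

```python
def overall_speedup(io_fraction, io_speedup):
    """Amdahl's law: overall speedup when only the I/O share of run time gets faster."""
    if not 0.0 <= io_fraction <= 1.0:
        raise ValueError("io_fraction must be between 0 and 1")
    if io_speedup <= 0:
        raise ValueError("io_speedup must be positive")
    compute_fraction = 1.0 - io_fraction  # this share runs at the old speed
    return 1.0 / (compute_fraction + io_fraction / io_speedup)

# Hypothetical values for illustration only.
HYPOTHETICAL_IO_SPEEDUP = 20.0
for io_fraction in (0.5, 0.8, 0.9, 0.95):
    gain = overall_speedup(io_fraction, HYPOTHETICAL_IO_SPEEDUP)
    print(f"I/O share {io_fraction:.0%}: overall speedup {gain:.1f}x")
```

The takeaway is qualitative: the larger the share of run time a workload spends on I/O, the more of the flash acceleration shows up in the end-to-end number.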

One might expect this could add some complexity, at least at the beginning, but he told The Next Platform that there were very few tweaks that had to be made to their code, which was one of the defining factors for the Wrangler team. “We take the D5 and make it available to users in several different ways. In both cases we talked about, natively the D5 looks like an object store and there’s a native API we could let users loose on, which involves coding. But we also make it available as a traditional file system (in our case GPFS) so one can do regular POSIX-style I/O and while there is some overhead there, it’s still faster than anything we have.”

“Right now, it’s just open to early users, and we’re running completely unmodified code and taking advantage of the acceleration you get from the hardware. But there is an opportunity to code from the native interface and get even more performance. We haven’t had any end users start to do that yet, but we are getting big performance boosts from just unmodified code.”
