The University of Stuttgart’s High Performance Computing Center (HLRS) in Germany has tapped Hewlett Packard Enterprise to build a pair of its next-generation supercomputers. The €115 million project will see the construction of “Hunter,” a small-scale system powered by AMD’s MI300A APUs, and “Herder,” a larger exascale machine designed to expand Germany’s high performance computing capacity.

Managed under the Gauss Center for Supercomputing (GCS), the project’s funding comes courtesy of the German Federal Ministry of Education and Research and the state of Baden-Württemberg’s Ministry of Science, Research, and Arts.

Hunter, presumably named to reflect the relatively small scale of the system, is slated to come online in 2025. It will serve as a transitional system on which code developed purely for CPU-based big iron can be refactored to take advantage of GPU acceleration at scale.

Up to this point, HLRS has largely focused on CPU-based systems. The current supercomputer at HLRS, nicknamed “Hawk,” debuted in 2020 with 26 petaflops of FP64 double precision floating point performance. The machine is predominantly CPU-based, with 11,264 64-core “Rome” Epyc processors spread across 44 cabinets making up the bulk of the system. Hawk does have a small GPU partition comprising 24 nodes and a total of 192 Nvidia A100 GPUs, good for 1.8 petaflops of FP64 vector performance.

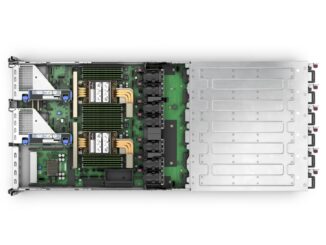

By comparison, Hunter will require a fraction of the power and space that Hawk does while delivering 33.3 petaflops of peak double precision performance. According to HPE, the Cray EX4000-based system will feature 136 compute nodes, each with four Slingshot interconnects.

If the system uses the two-nodes-per-blade arrangement typical of Cray systems, we’re looking at 68 blades, just over the 64 supported by a single EX4000 chassis. HPE tells us the system will occupy two chassis, so this thing is going to be tiny compared to Hawk no matter how you look at it.
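The blade arithmetic above can be checked with a quick back-of-the-envelope sketch. The two-nodes-per-blade figure is the assumption stated in the text, not a confirmed configuration, and the function name `chassis_needed` is purely illustrative.

```python
import math

NODES = 136               # compute nodes, per HPE
NODES_PER_BLADE = 2       # assumption from the text: typical Cray arrangement
BLADES_PER_CHASSIS = 64   # single EX4000 capacity cited in the text


def chassis_needed(nodes, nodes_per_blade, blades_per_chassis):
    """Return (blades, chassis) needed to house the given node count."""
    blades = math.ceil(nodes / nodes_per_blade)
    chassis = math.ceil(blades / blades_per_chassis)
    return blades, chassis


blades, chassis = chassis_needed(NODES, NODES_PER_BLADE, BLADES_PER_CHASSIS)
print(f"{blades} blades across {chassis} chassis")
```

Under those assumptions, the blade count lands just past one chassis, which is consistent with HPE's statement that the system spans two.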

Alongside the compute blades, the Hunter system will be supported by HPE’s next-generation Cray ClusterStor storage system as well as the Cray Programming Environment.

Powering the system is AMD’s newly introduced MI300A accelerated processing unit (APU). The accelerator combines six CDNA 3 GPU dies totaling 228 compute units with a trio of eight-core compute complex dies (CCDs). The APU also features 128 GB of HBM3 spread across eight stacks, which together deliver 5.3 TB/sec of memory bandwidth.

AMD claims the part’s unified memory architecture, which allows the CPU and GPU to operate on the same memory domain, unlocks a substantial performance advantage in HPC workloads like OpenFOAM. The chip is also at the heart of Lawrence Livermore National Laboratory’s 2+ exaflops “El Capitan” system, which is being built by HPE and AMD right now.

HPE tells us that the Hunter machine will feature 544 accelerators and each node will have four Slingshot NICs. This tells us that there will be four MI300As per node, the maximum supported by the platform, for a combined 69.6 terabytes of HBM3 stacked memory. At 61.3 teraflops of FP64 vector and 122.6 teraflops of matrix performance each, that works out to 33.35 or 66.7 petaflops, respectively.
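For readers who want to check the totals, here is a minimal sketch of the arithmetic using only the per-node and per-APU figures quoted in this article. It assumes decimal units (1 TB = 1,000 GB, 1 petaflops = 1,000 teraflops), and the function name `hunter_totals` is just an illustrative label.

```python
NODES = 136                  # compute nodes, per HPE
APUS_PER_NODE = 4            # inferred in the text from four NICs per node
HBM_GB_PER_APU = 128         # HBM3 capacity per MI300A
FP64_VECTOR_TFLOPS = 61.3    # per MI300A, as quoted in the article
FP64_MATRIX_TFLOPS = 122.6   # per MI300A, as quoted in the article


def hunter_totals(nodes, apus_per_node, hbm_gb, vector_tf, matrix_tf):
    """Aggregate per-APU figures into system-wide totals (decimal units)."""
    apus = nodes * apus_per_node
    return {
        "apus": apus,
        "hbm_tb": apus * hbm_gb / 1000,          # GB -> TB
        "vector_pflops": apus * vector_tf / 1000,  # TF -> PF
        "matrix_pflops": apus * matrix_tf / 1000,
    }


totals = hunter_totals(NODES, APUS_PER_NODE, HBM_GB_PER_APU,
                       FP64_VECTOR_TFLOPS, FP64_MATRIX_TFLOPS)
for name, value in totals.items():
    print(f"{name}: {value:,.2f}")
```

These are theoretical peak numbers derived by simple multiplication; sustained performance on real workloads will be lower.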

Details are much thinner when it comes to the University of Stuttgart’s new exascale-class system. Dubbed Herder, presumably on account of the system’s scale, the machine is slated to come online in 2027.

Beyond the fact that HPE will build the system, we only know that it’ll be accelerator based. The actual chips powering the system won’t be determined until the end of 2025. We’ll note that’s roughly aligned with AMD’s two-year release cadence for Instinct cards, so possibly an MI400A? It’s hard to say.

Herder isn’t the Gauss Center for Supercomputing’s only exascale system under development. The Jülich Supercomputing Center has been selected as the site for Europe’s first exascale supercomputer called Jupiter.

Built by Eviden and ParTec, the machine is slated to begin installation in 2024 and will feature both CPU and GPU partitions. The CPU partition will be powered by SiPearl’s Arm Neoverse V1-based Rhea1 CPUs, while the GPU segment will feature 24,000 of Nvidia’s Grace-Hopper GH200 superchips.

Together, the two partitions are expected to deliver in excess of 1 exaflops on the High Performance LINPACK benchmark that is used to rank the performance of supercomputers.

Good for Stuttgart and HPE! Stuttgart University’s CPU-only Hawk (HPE, EPYC) is currently the 3rd fastest HPC machine in Germany, after the JUWELS Booster (Eviden, EPYC+A100), and the SuperMUC-NG (Lenovo, Xeon). It sits at #42 on HPL, #39 on HPCG, and #107 on Green500. With its MI300As, Hunter will be a nice update, with great opportunities to research mixed-precision code and related speedups. The space savings might even be used for a small experimental kitchen specializing in delicious Maultaschen (for the students!)! (eh-eh-eh!).

I do wonder though, if €115 million will get them all the way to ExaFlopping. If so, it seems that it would be quite a bargain compared to today’s machines, except Aurora (maybe the new MI400As will help there)!

The exascale in the title is for dramatic effect and refers to the generous low precision metrics, not FP64, of course.

Very nice article as usual.

Just noticed what I think to be a unit error shouldn’t it be terabytes instead of petabytes in “69.6 petabytes of HBM3 stacked memory”?

Spelling Barden-W -> Baden-W