Not all cloud providers are the same, and even among the three cloud giants – Amazon Web Services, Microsoft Azure, and Google Cloud – there is some measure of differentiation. For instance, many enterprises prefer Azure for compatibility with their Windows-based infrastructure.

But some believe that many customers are not well served by the cloud giants with their complexity and byzantine pricing models, and aim to deliver simple infrastructure services that are affordable for smaller organizations and individuals.

One such company is cloud infrastructure provider Vultr, which has just launched a new service that pairs a virtual machine with a fraction of an Nvidia A100 GPU. The aim is to make GPU-accelerated computing available at a lower cost and thus open up machine learning and data analysis to a broader audience.

The Vultr Talon service is based on Nvidia’s AI Enterprise suite and also uses its Multi-Instance GPU (MIG) technology, which partitions the GPU and its resources into separate instances, effectively creating virtual GPUs from one Nvidia A100, in the same way that a hypervisor carves up a bare metal server into a number of virtual machine instances based on CPU cores and threads.

Other cloud providers have had GPUs in their portfolio for some time, of course, but these have centered on offering an entire GPU paired with either a virtual machine instance or an entire bare metal server, and use of the GPU alone can cost thousands of dollars per month, Vultr claims.

“The big clouds are primarily focused on enterprises that have massive scale and budgets, and they tend to build products to attract the large spend of massive enterprises, first and foremost,” Vultr chief executive officer J.J. Kardwell tells The Next Platform.

“In the case of GPUs, we wanted to deliver the industry-leading GPU for AI/ML, which is, of course, the Nvidia A100. But, we didn’t want to charge thousands of dollars per month as other clouds do. So we naturally investigated how we could virtualize the A100, splitting it into pieces, much as we’ve done with our cloud compute line-up of VMs.”

Vultr also says that the Talon service was developed with Nvidia’s participation.

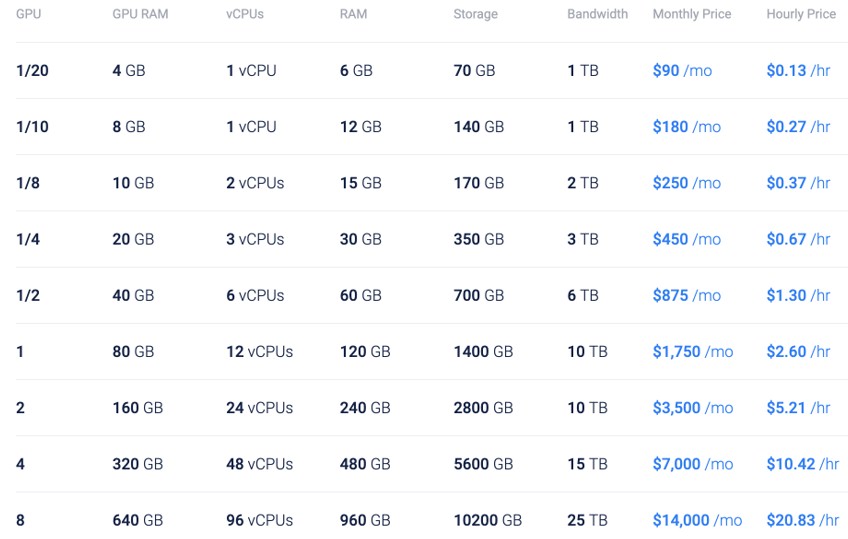

The result is that Vultr is able to offer Talon at a starting price of $90 per month for a virtual machine with one vCPU and 6 GB of memory, plus a virtual GPU that is equivalent to 1/20th of the compute power of a full Nvidia A100, combined with 4 GB of GPU memory.

This appears to be achieved using Nvidia’s vGPU software, part of the AI Enterprise suite, but for Talon instances with 10 GB of GPU memory or more, the Nvidia MIG technology is used to provide guaranteed quality of service (QoS) and a fully isolated GPU memory cache.

According to Nvidia’s documentation, the vGPU software uses time slicing to share the GPU compute resources between virtual machines, but with each virtual machine having its own dedicated GPU memory area. This contrasts with the MIG technology, which statically divides up the GPU cores, so that a virtual machine can only use the cores assigned to it and only access the memory assigned to it.
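The practical difference between the two modes is easiest to see with a toy model. The sketch below is our own illustration, not Nvidia's scheduler: the `Tenant` class, both function names, and the equal-slice policy are assumptions made purely for the example. It models time slicing as busy tenants splitting GPU time equally, and static partitioning as each tenant being capped at a fixed fraction of the compute.

```python
from dataclasses import dataclass


@dataclass
class Tenant:
    name: str
    demand: float  # fraction of a full GPU's compute wanted right now (0.0 to 1.0)


def time_sliced_share(tenants):
    """Toy time slicing (assumed policy): busy tenants split GPU time equally.

    Idle tenants give up their slice, so what a busy tenant receives
    depends on how many of its neighbours are also busy.
    """
    active = [t for t in tenants if t.demand > 0]
    if not active:
        return {t.name: 0.0 for t in tenants}
    slice_each = 1.0 / len(active)
    return {
        t.name: min(t.demand, slice_each) if t.demand > 0 else 0.0
        for t in tenants
    }


def static_partition_share(tenants, partition):
    """Toy static partitioning: each tenant is capped at its fixed fraction.

    `partition` maps tenant name to its fraction of the GPU. A tenant never
    borrows idle capacity, but never loses capacity to a neighbour either.
    """
    return {t.name: min(t.demand, partition[t.name]) for t in tenants}


if __name__ == "__main__":
    halves = {"a": 0.5, "b": 0.5}
    neighbour_idle = [Tenant("a", 1.0), Tenant("b", 0.0)]
    neighbour_busy = [Tenant("a", 1.0), Tenant("b", 1.0)]
    for label, tenants in (("idle", neighbour_idle), ("busy", neighbour_busy)):
        print(label, time_sliced_share(tenants), static_partition_share(tenants, halves))
```

In this model, tenant `a` gets the whole GPU under time slicing while its neighbour is idle and drops to half once the neighbour becomes busy. Under the static split it gets half in both cases, which is the kind of predictability that a quality of service guarantee relies on.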

To our mind, this makes the software vGPU mode analogous to the way multi-tasking works in a typical operating system, whereas MIG mode seems more like the partitioning seen in some high-end systems from IBM, Hewlett Packard Enterprise, and NEC among many others in the history books. The difference is akin to a hardware partition (the MIG is analogous to a motherboard in a multi-node system) versus a virtual machine (which can be defined by a socket, a core, a thread, or a portion of a thread, depending on the hypervisor and CPU).

Vultr informed us that virtual GPUs can only be provisioned as part of a Cloud GPU virtual machine, and these virtual machines have to be deployed onto server nodes within the Vultr cloud that have Nvidia A100 GPUs physically installed within them. This is what you would expect, of course, but we were unsure whether the company might be using some trickery to allow virtual machine instances to link up with a GPU installed elsewhere in the infrastructure over the network. (There are others that are doing this GPU-at-a-distance thing for PCs, and the one we know about is called Juice Labs.)

However, Vultr also told us that customers who wish to upgrade from a virtual machine without a vGPU to a Cloud GPU instance should be able to do so by taking a snapshot of their instance and provisioning a new Cloud GPU instance from that snapshot.

Vultr is also offering virtual machines with full GPUs, giving users who want GPU acceleration a choice that runs from fractions of a GPU all the way up to a monster of a virtual machine with eight full GPUs and 640 GB of GPU memory, combined with 96 vCPUs and 960 GB of RAM. Bare metal servers with four Nvidia A100 GPUs and dual 24-core Intel Xeon SP processors are also available.
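The numbers quoted for the smallest and largest plans hang together, as a quick back-of-the-envelope check shows. The sketch below uses only figures from this article, and it assumes (Vultr did not state this) that the fractional plans are carved from the same cards used in the eight-GPU virtual machine. The variable names are ours.

```python
from fractions import Fraction

# Figures quoted for the largest plan
total_gpu_memory_gb = 640
full_gpus = 8

# Memory per physical GPU implied by the largest plan
per_gpu_memory_gb = Fraction(total_gpu_memory_gb, full_gpus)

# Figure quoted for the entry-level plan
entry_gpu_memory_gb = 4

# Share of one card's memory that the entry plan receives,
# ASSUMING it is carved from the same kind of card
memory_fraction = Fraction(entry_gpu_memory_gb) / per_gpu_memory_gb

print(f"Memory per GPU: {per_gpu_memory_gb} GB")
print(f"Entry plan share of one GPU's memory: {memory_fraction}")
```

Under that assumption, each card carries 80 GB, and the 4 GB entry plan works out to 1/20th of a card's memory, which lines up with the 1/20th share of compute quoted for that plan.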

We asked Vultr if it is possible for a customer running a Cloud GPU virtual machine instance with a vGPU to increase their compute power by adding a second vGPU rather than having to swap to one with a more powerful vGPU. This is apparently technically possible, but the company currently does not support this option via its customer control panel.

As to what use a vGPU might be put to, Vultr said that Cloud GPUs with the virtualized Nvidia A100 are well suited for a variety of workloads that don’t require full GPUs. One such example is running the inferencing for a machine learning model, which typically requires much less processing power than the training. Natural language processing, computer vision, and voice recognition are some specific applications that should work well on virtual GPUs, the company told us.

The new Talon Cloud GPU service builds on the company’s model of trying to offer a simpler and more cost-effective service than the larger cloud providers, Vultr claims.

“The majority of spend in cloud computing goes to Amazon, Google, and Microsoft Azure, but there’s an enormous portion of the world that is not well served by their pricing models and their complexity,” Kardwell says.

The bulk of Vultr’s customers are smaller organizations and developers, he added, but it also has plenty of large enterprise customers thanks to its wide geographic footprint, possibly on a par with Google Cloud as far as locations go.

“We serve large enterprises who care about controlling the infrastructure deep down into the stack, and often are buying bare metal, and then those users who are not well served by the hyperscalers because of price, ease of use, and location. And really, that’s how we differentiate – ease of use and simple transparent pricing,” Kardwell claims. He says that many cloud users are frustrated by the constant price shocks and by being stung for extras such as egress charges by the big clouds.

In contrast, Vultr has predictable pricing by including storage and an allocation of network bandwidth in the monthly charge for many of its virtual machine instances, rather than these being metered separately.

“We’re not selling a low end product, we sell a high end product, built to enterprise-grade standards, but engineered in a way where we can deliver it more efficiently,” Kardwell adds.

The Talon Cloud GPU service is initially available in beta, but this is simply because it is largely the first offering of its kind, Kardwell tells us. For this reason, the Cloud GPU plans will only be available from Vultr’s New Jersey location while the company evaluates how well it is operating.

“We will be adding global inventory for Nvidia A100, A40, and A16 GPUs in the weeks ahead, to better support additional regions and a wider variety of use cases. We are continuing to work closely with Nvidia as we progress towards a general availability release, which we anticipate in the next few months,” says Kardwell.
