SPONSORED If buoyant market figures are anything to go by, most enterprises already have a good grasp of the benefits of adopting hyperconverged infrastructure (HCI). By tightly integrating compute, storage and networking in an HCI platform, organizations gain a cookie-cutter level of simplicity, ease of scalability and management – whether they are building out hyperscale datacenters or enterprise-scale banking or ecommerce systems. Also, the OPEX subscription-style pricing that HCI vendors are adopting wholesale may win the approval of the finance department.

On the downside, individual components may be under-utilized for certain workloads in a standard HCI setup. But if some applications are more compute-intensive, you can always add some specially tuned racks just for them. For more data-intensive applications, you can juice some racks with additional SSDs. Perhaps they can sit next to the GPU-enriched SKUs you’ve ordered for your AI workloads.

Then, before you know it, bubbling under the surface of that placid pool of similar-looking boxes is what Scott Hamilton, Western Digital’s senior director for product management and marketing, describes as a “sku-nami”.

“The more you have, the harder it is to manage and predict,” he argues. Conversely, with fewer options and less granularity, you may end up with a bunch of stranded resources.

The direction of travel of modern workloads makes this issue even more challenging. The shift to the cloud, and cloud-native applications, brings with it a greater focus on flexibility and scalability. Simultaneously, the new breed of AI and machine learning applications relies on vast amounts of data (though this will vary according to whether the focus at any given time is on training or inference).

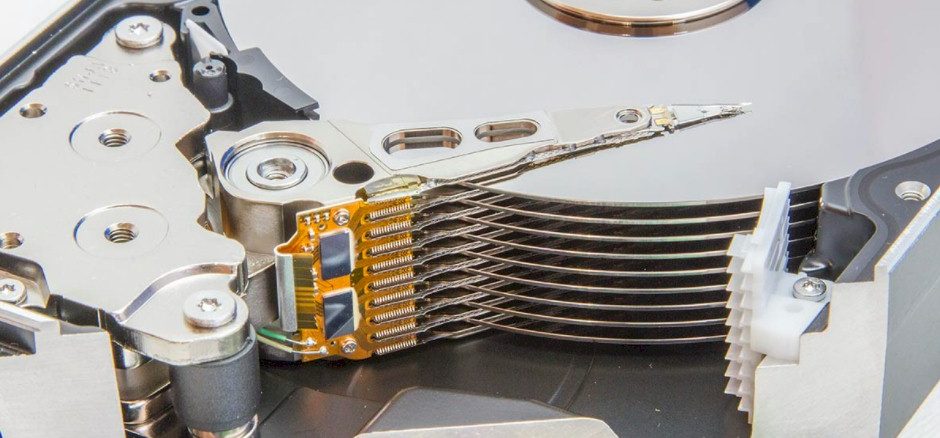

These are the macro trends. At a more micro-level, NVMe SSDs have increased the volume of data that can be served up to the processing cores in a system, resulting in better CPU utilization. But why confine that within individual servers? “NVMe has led the way. Now the standard allows for NVMe over Fabrics, which basically says now I’m disaggregating my NVMe storage, that was connected directly to the CPU over PCI, and extending it over a fabric so it can be shared,” explains Hamilton.

NVMe Sets The Pace

Once you have taken the leap of sharing a pool of NVMe resources, the next obvious step is to ask: “Wouldn’t it be great if I could disaggregate all of my resources, whether it be compute, whether it be GPU, whether it be storage, fast or high capacity, and make that all shareable?”

These disaggregated components can then be composed into new logical entities precisely geared towards particular workloads and projects. “If it’s dynamic, you can put those resources back in the pool when it’s no longer needed, whether it’s over time, over days, or projects.”
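The compose-and-release cycle described above can be sketched in a few lines of Python. This is an illustrative model only, not Western Digital's API: the `ResourcePool` class, its `compose` and `release` methods, and all resource names and quantities are hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class ResourcePool:
    """Hypothetical pool of fabric-attached resources, counted in units per kind."""
    free: dict[str, int]
    composed: dict[str, dict[str, int]] = field(default_factory=dict)

    def compose(self, name: str, **wanted: int) -> dict[str, int]:
        """Reserve the requested units for a named logical system."""
        if name in self.composed:
            raise ValueError(f"{name} is already composed")
        # Check every request before reserving anything, so a failure leaves the pool unchanged.
        short = {k: n for k, n in wanted.items() if self.free.get(k, 0) < n}
        if short:
            raise ValueError(f"not enough free resources: {short}")
        for kind, n in wanted.items():
            self.free[kind] -= n
        self.composed[name] = dict(wanted)
        return self.composed[name]

    def release(self, name: str) -> None:
        """Return a logical system's resources to the shared pool."""
        for kind, n in self.composed.pop(name).items():
            self.free[kind] += n


# Made-up capacities: cores, GPUs, and terabytes of flash and disk.
pool = ResourcePool(free={"cpu": 64, "gpu": 8, "flash_tb": 600, "disk_tb": 2000})
pool.compose("ai-training", cpu=16, gpu=8, flash_tb=200)
pool.compose("analytics", cpu=32, flash_tb=100, disk_tb=500)
pool.release("ai-training")  # project finished: its CPUs, GPUs and flash are free again
```

In this toy model every resource kind is a peer in the pool, and a logical system is just a named reservation against it, which is the property that lets resources go "back in the pool" once a project ends.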

That’s what Western Digital aimed to kickstart in 2018 when the storage giant announced its proposal for an open composable disaggregated infrastructure (CDI), with an API. Hamilton describes the initiative as a framework that allows communication amongst all of the resources, “which are peers in a CDI model, as opposed to in an HCI model, where the CPU is the kingpin and everything else is underneath.”

The 2018 CDI announcement was accompanied by the OpenFlex 3000 Fabric Enclosure, a 1U device that hosts up to 10 OpenFlex F3200 flash-based devices, each of which offers up to 61.4TB of capacity, with write IOPS up to 2.1M, and write bandwidth of 11.5GB/s.

“We also demonstrated disk attached to the fabric,” adds Hamilton. “It was managed in terms of NVMe namespaces, which is like volumes in the disk world. Everything can be managed the same way – at least the storage, whether it’s flash or disk. It just has different characteristics and price points.”

So, in Western Digital’s vision, composability and disaggregation aren’t just applicable to production workloads, but also to colder storage and ultimately an entire infrastructure where everything is disaggregated, fabric attached, and composable.

Being open makes things a “bit more complicated”, Hamilton acknowledges, but working with a broad ecosystem will accelerate the development of this infrastructure. “You’ve got to work with the NIC partners, you’ve got to work with the switch partners… we’re partnering with a lot of folks in that ecosystem, whether it be Broadcom, whether it be Mellanox, which is now Nvidia, which then gets into the GPU arena.”

The results of CDI pilots and proofs of concept are now showing exactly what the approach can deliver.

Composability? What’s The Score?

Breaking out the storage components for general enterprise workloads – such as Oracle or SQL Server applications – should allow organizations to flush out data bottlenecks in HCI systems, and thereby improve response times and speed up queries. Also, whenever another HCI system is added just to fix a storage problem, organizations may be dinged with additional licensing costs. By disaggregating the infrastructure, they can avoid this expense by increasing the amount of (cheaper) storage in relation to (more expensive) compute, thus ensuring better CPU utilization.
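To make the arithmetic behind that argument concrete, here is a rough back-of-the-envelope sketch in Python. Every figure in it (cores and terabytes per node, the size of the workload) is invented for illustration, and the function names are hypothetical; the model also assumes that licensing scales with the number of cores deployed, which will not hold for every product.

```python
import math


def hci_nodes_needed(cores: int, tb: int, cores_per_node: int, tb_per_node: int) -> int:
    """HCI scales compute and storage together, so the scarcer resource sets the node count."""
    return max(math.ceil(cores / cores_per_node), math.ceil(tb / tb_per_node))


def disaggregated_units(cores: int, tb: int, cores_per_node: int, tb_per_shelf: int) -> tuple[int, int]:
    """With disaggregation, compute nodes and storage shelves are sized independently."""
    return math.ceil(cores / cores_per_node), math.ceil(tb / tb_per_shelf)


# Hypothetical storage-heavy workload: 128 cores and 2,000TB.
CORES, TB = 128, 2000
CORES_PER_NODE, TB_PER_UNIT = 32, 100

nodes = hci_nodes_needed(CORES, TB, CORES_PER_NODE, TB_PER_UNIT)
hci_cores = nodes * CORES_PER_NODE
stranded_cores = hci_cores - CORES

compute_nodes, storage_shelves = disaggregated_units(CORES, TB, CORES_PER_NODE, TB_PER_UNIT)
cdi_cores = compute_nodes * CORES_PER_NODE

print(f"HCI: {nodes} nodes, {hci_cores} cores deployed, {stranded_cores} stranded")
print(f"Disaggregated: {compute_nodes} compute nodes, {storage_shelves} storage shelves, {cdi_cores} cores deployed")
```

With these made-up inputs the HCI build needs 20 nodes to reach the storage target and so deploys 640 cores for a 128-core workload, while the disaggregated build deploys four compute nodes alongside 20 storage shelves. Real sizing depends on the actual hardware and licence terms.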

Similarly, Western Digital is working with “a major software company” on a customer use case for improving the service experience for service ticket management. The company is evaluating a software-defined storage (SDS) approach, using OpenFlex devices with SSDs, to handle huge volumes of customer and service data. As Hamilton explains, “what they’re trying to do is reduce the latency, which gives them a differentiation and to make their overall system faster and more cost effective.”

On an even bigger scale, Western Digital is working on a customer use case with a telecoms provider that has adopted HCI for its content delivery network. “It was just getting pretty expensive,” says Hamilton, as the company added customers to individual points of presence. The HCI model it deployed meant it was not making adequate use of the existing storage in each of the servers.

“We are offering them disaggregated flash storage,” says Hamilton. “So, very fast, low latency, but it’s shared over the fabric, and then they can have their compute nodes. We can provide them much greater efficiency.” Some predictions are that the CDI approach could deliver as much as a 10x improvement in dollars per gigabit per second.

Not all CDI applications have to be focused on composability and on-the-fly orchestration. According to Hamilton, the approach also lends itself to developing more static, but highly efficient and cost-effective infrastructure. “You can think of disaggregation as a great building block to then do something very purpose built.”

For example, France’s Brain and Spine Institute (ICM) has put the OpenFlex platform to work in its research infrastructure. The system handles vast amounts of data, captured from a range of medical imaging tools including MRI systems, and digital light sheet microscopes that generate up to 21TB of data per hour. Moving the data around to researchers imposes such a burden that the researchers often have to use lower-resolution images. Even with this fix, copying data to storage could take four hours. The alternative of adding local storage to workstations was a no-go because the institute lacked the physical space in its Paris headquarters.

ICM opted for a centralized OpenFlex-based system, initially serving 10 workstations, with plans to eventually scale up to more than 50. The system serves up the full high-res images with latencies of 34 microseconds or less, which enables scientists to analyse more images at four times the resolution the previous system allowed. Other benefits include the removal of the need to support multiple storage servers around the campus, while additional microscopes can be added without having to deploy further local storage.

To conclude, HCI has opened people’s eyes to the possibilities of software-defined storage. However, tight integration of components reduces flexibility and can impact performance and budgets. In response, Western Digital has architected the disaggregated CDI platform to deliver this greater flexibility. The company’s approach feeds into whatever SDS or orchestration layer customers may already have in place. “You can see that software-defined storage and its adoption over time is increasing rapidly… and CDI is riding off of that,” says Hamilton.

And although not everyone needs to orchestrate their infrastructure on-the-fly today, they do need the flexibility to match their infrastructure more precisely to their current and future workloads. Ultimately, says Hamilton, “If I don’t disaggregate, then I don’t really compose.”

This article is sponsored by Western Digital.