It is no accident that quantum computing is being undertaken by some of the biggest IT companies in the world. Google, IBM, and Intel in particular have the capacity to devote a lot of resources to their respective efforts, and all of them have demonstrated impressive progress over the past few years. But even though we’ve devoted much of our quantum computing coverage to these three key players, it’s still far too early to tell which companies will come to dominate this nascent market.

That’s mainly because there is, as yet, no real consensus on which technological approach will make universal quantum computing commercially viable. That said, IBM, Google, Intel, and Rigetti are all developing various kinds of solid-state quantum processors. While this approach builds on decades of experience with silicon-based semiconductors and large-scale integration, it requires cooling the chips to near absolute zero and applying aggressive error correction to keep the qubits behaving properly.

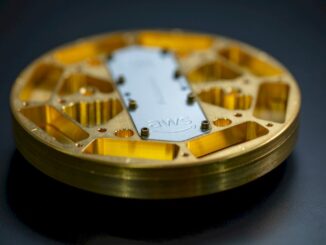

A notable exception to this solid-state approach is being pursued by a startup named IonQ, which uses trapped ions as its qubits: ytterbium atoms suspended in a vacuum. Here, processing is accomplished with laser beams, which are applied to the atomic qubits to store and retrieve information, perform logical operations, and link the qubits together into specific circuits. In a recent conversation, IonQ CEO and cofounder Chris Monroe told us why he believes this approach has the best chance of making the leap into commercial quantum computing.

Since the laser beams are under programmatic control (via a conventional computer), the quantum circuit can be reconfigured on the fly, with its layout optimized for each application. Quantum computers are expected to tackle a wide array of problems in areas such as quantum chemistry, logistics, machine learning, and cryptography, so being able to codesign the hardware with the software, FPGA-style, is yet another way IonQ differentiates itself from its hard-wired competition.

If Monroe and company manage to outrun their larger, better-funded competition, it won’t be because the engineers at IonQ are better quantum physicists. In fact, Monroe says that scaling their processor into something that can run real production applications does not require higher-quality qubits (the atoms behave perfectly in that regard), but rather more refined optical control. That mostly comes down to reducing laser noise and movement, which requires better engineering, not new materials or breakthrough physics.

IonQ currently operates three devices. At this point, their best system can operate on 10 or 20 qubits at a time and run several hundred operations. On benchmarks, they’ve demonstrated average single-qubit gate fidelities of 99.5 percent and two-qubit-gate fidelities of 97.5 percent, with state preparation and measurement errors of just 0.7 percent. That’s as good as or better than what any solid-state quantum device has been able to achieve to date. Monroe says they are confident they can scale to a few hundred qubits and tens of thousands of operations without any error correction just by improving the optical control system.
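To see why refining optical control matters so much, a back-of-the-envelope estimate helps. The sketch below is a crude model of our own, not IonQ’s method: it assumes every gate and readout error is independent and spoils the run, so a circuit’s success probability is just the product of the individual fidelities. The fidelity constants are the figures reported above; the gate counts in the example circuit are hypothetical.

```python
# Average fidelities reported for IonQ's best system (fractions, not percent).
SINGLE_QUBIT_FIDELITY = 0.995
TWO_QUBIT_FIDELITY = 0.975
SPAM_ERROR = 0.007  # state preparation and measurement error


def circuit_success_estimate(n_single: int, n_two: int, n_measured: int) -> float:
    """Crude estimate assuming independent errors, any one of which ruins the run:
    the circuit succeeds only if every gate and every readout succeeds."""
    return (
        SINGLE_QUBIT_FIDELITY ** n_single
        * TWO_QUBIT_FIDELITY ** n_two
        * (1.0 - SPAM_ERROR) ** n_measured
    )


def required_fidelity(n_ops: int, target_success: float) -> float:
    """Per-operation fidelity F such that F ** n_ops equals target_success."""
    return target_success ** (1.0 / n_ops)


if __name__ == "__main__":
    # Hypothetical circuit: 200 single-qubit gates, 50 two-qubit gates, 10 qubits read out.
    print(f"{circuit_success_estimate(200, 50, 10):.3f}")
    # Per-operation fidelity needed for a 10,000-operation circuit to succeed half the time.
    print(f"{required_fidelity(10_000, 0.5):.6f}")
```

Under these simplifying assumptions, the hypothetical circuit succeeds just under 10 percent of the time, and a 10,000-operation circuit that should succeed half the time needs a per-operation fidelity of roughly 99.993 percent. Real error behavior is more complicated, but the exercise illustrates why Monroe’s roadmap to tens of thousands of operations hinges on pushing fidelities up rather than on adding qubits.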

“That’s probably not good enough in the long run,” he admits, “but we’ll be able to do some interesting problems with that.” Their long-term goal is to build systems capable of performing millions or even billions of operations, and to get there, Monroe says, they will indeed have to employ error correction. But according to him, those systems will need perhaps just 10 or 20 physical qubits per usable (logical) qubit, which is orders of magnitude fewer than error correction will require on solid-state quantum devices.
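The overhead figures reduce to simple arithmetic. This sketch assumes a fixed number of physical qubits per logical qubit. The 10-to-20 range is Monroe’s; the 2,000-to-1 figure used for comparison is a hypothetical placeholder, chosen only because “orders of magnitude” implies at least a hundredfold gap over 20, and is not a measured value for any solid-state device.

```python
# Physical-to-logical overhead range quoted by Monroe for trapped ions.
ION_OVERHEAD_LOW, ION_OVERHEAD_HIGH = 10, 20

# Hypothetical comparison point, NOT a measured figure: 100x the upper ion estimate,
# the smallest gap that "orders of magnitude" would imply.
HYPOTHETICAL_SOLID_STATE_OVERHEAD = 100 * ION_OVERHEAD_HIGH


def logical_qubits(n_physical: int, overhead: int) -> int:
    """Whole logical qubits available when each one consumes `overhead` physical qubits."""
    if overhead <= 0:
        raise ValueError("overhead must be a positive integer")
    return n_physical // overhead


def physical_qubits(n_logical: int, overhead: int) -> int:
    """Physical qubits needed to host `n_logical` logical qubits."""
    if overhead <= 0:
        raise ValueError("overhead must be a positive integer")
    return n_logical * overhead


if __name__ == "__main__":
    # Compare a 300-physical-qubit machine and a 100-logical-qubit target at both overheads.
    for overhead in (ION_OVERHEAD_HIGH, HYPOTHETICAL_SOLID_STATE_OVERHEAD):
        print(overhead, logical_qubits(300, overhead), physical_qubits(100, overhead))
```

At 20 physical qubits per logical qubit, a machine with a few hundred qubits (300, say) would yield 15 logical qubits; at the hypothetical 2,000-to-1 overhead, the same machine yields none, and 100 logical qubits would take 200,000 physical ones.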

In fact, Monroe thinks solid-state quantum processors are a dead end. If they can’t improve on the two percent error rate these systems currently exhibit, he believes error correction will consume so many physical qubits that it will be impractical to obtain even a small number of logical qubits. The confounding problem with this approach is that error rates tend to rise as more qubits are added, due to qubit variance and increased cross-talk, which in turn demands ever greater numbers of error-correcting qubits. “That’s a really hard scaling problem,” he says.

Where IonQ needs to catch up with the competition is in third-party availability. IBM and Google, and even startup Rigetti, have made their quantum machinery available to researchers and even some potential early customers. IBM is furthest along in this regard: its commercial Q Network for paying clients has attracted a handful of big-name companies, including JPMorgan, Samsung, and ExxonMobil.

Since its founding in 2016, IonQ and its 35-person workforce have focused internally on building initial systems for testing and further refinement. Thus far, the strategy has been to devote all of the company’s resources to evolving the platform as quickly as possible so as not to impede technical progress. Monroe says they’ve burned through between two-thirds and three-fourths of their $20 million in venture capital funding to move the technology along during this time.

IonQ does have a few partnerships with academics who have ad hoc access to its systems, but according to Monroe, the company now recognizes the need to give its platform wider exposure to researchers and commercial organizations so they can start throwing actual use cases at the technology. Although nothing is official yet, the CEO says plans are in the works to do just that, and he hinted that IonQ will be announcing key engagements with some well-heeled partners in the coming months.

“People are knocking down the door looking to get into these systems,” says Monroe.

Ahahaha, Monroe’s gonna do billions of operations? On logical qubits each composed of 20 physical trapped-ion qubits, which translates into hundreds of billions of operations? Yeah, that’ll be really fast… only engineering problems there. And he’s going on record saying he thinks solid state is a dead end? Let me know when he’s running real problems in the age of the universe… what a blowhard.

I’ve heard similar proposals in the past, but have never heard much detail. I always get curious when lasers and atoms are mentioned in the same sentence. Photons are more than a thousand times larger than atoms; perhaps a reader more knowledgeable than I will explain how the two will interact with the required precision.

Photons are larger than atoms physically if you think about electron orbitals and such, but the cross section for atom-photon interaction is about the size of a photon. (Atoms and photons are waves, so size is a little tricky…)