Even if Nvidia had not pursued a GPU compute strategy in the datacenter a decade and a half ago, the company would have turned in one of the best periods in its history as the first quarter of fiscal 2019 came to a close on April 29.

As it turns out, though, the company has a fast-growing HPC, AI, and cryptocurrency compute business that runs alongside its core gaming GPU, visualization, and professional graphics businesses, and Nvidia is booming. That is a six cylinder engine of commerce, unless you break AI into training and inference (which is sensible), and then add database acceleration to make a balanced eight cylinder engine.

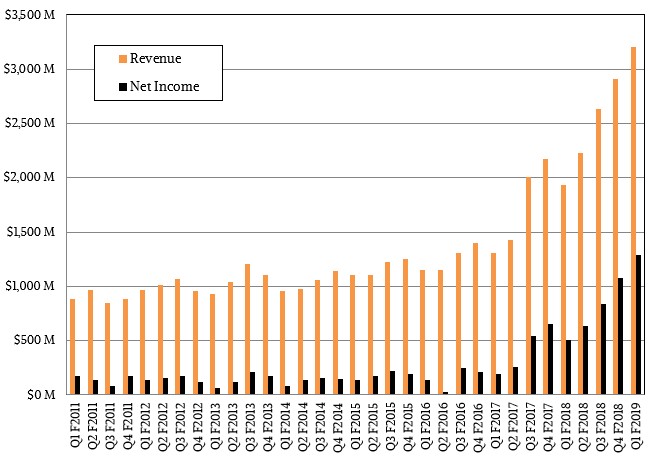

In the fiscal first quarter, Nvidia’s revenues rocketed up 65.6 percent to $3.21 billion, and thanks to the tightness of supply on gaming GPUs due to very big demand for GPUs by cryptocurrency miners, average selling prices are up and therefore Nvidia is raking in the profits as too much demand chases too little supply. Net income rose an incredible 153.5 percent, to $1.29 billion, and represented 40 percent of revenues.
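The margin arithmetic is easy to check, and the growth rates also imply the year-ago figures. Here is a minimal sketch in Python that uses only the numbers quoted above. The helper names are illustrative, and the year-ago values are back-calculated from the rounded growth rates rather than taken from Nvidia's filings, so treat them as approximations:

```python
def net_margin(net_income, revenue):
    """Net income as a fraction of revenue."""
    return net_income / revenue


def prior_period(current, growth_pct):
    """Back out the year-ago value implied by a year-on-year growth rate."""
    return current / (1 + growth_pct / 100)


revenue = 3.21      # $ billions, fiscal Q1 2019 (from the text)
net_income = 1.29   # $ billions, fiscal Q1 2019 (from the text)

margin = net_margin(net_income, revenue)
prior_revenue = prior_period(revenue, 65.6)       # implied, not reported
prior_income = prior_period(net_income, 153.5)    # implied, not reported
prior_margin = net_margin(prior_income, prior_revenue)

print(f"net margin this quarter:     {margin:.1%}")
print(f"implied year-ago revenue:    ${prior_revenue:.2f} billion")
print(f"implied year-ago net income: ${prior_income:.2f} billion")
print(f"implied year-ago net margin: {prior_margin:.1%}")
```

On these inputs the net margin comes out at roughly 40 percent, against an implied margin of around 26 percent a year earlier, which is the real story behind the profit growth outrunning the revenue growth.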

Let’s stop right there.

First, who says you can’t make money in hardware? That level of net margin is almost the same as Intel’s gross margin in its datacenter business. Nvidia’s gross margins for Tesla have to be well north of 75 percent at this point we estimate, and given the pricing environment (with prices up around 70 percent since last June on existing GPU accelerators from the “Kepler,” “Maxwell,” and “Pascal” generations even), this has turned Nvidia into a very profitable company indeed. The same phenomenon is happening with gaming GPUs.

And second, Wall Street always wants more, and it was crabbing about the numbers in the wake of their announcement by Nvidia. The fact is, Nvidia’s strategy is paying off handsomely, and we can let the traders and quants argue about current and future share prices as company co-founder and chief executive officer Jensen Huang pitches in to help chief financial officer Colette Kress count the big bags of money piling up in the corporate headquarters.

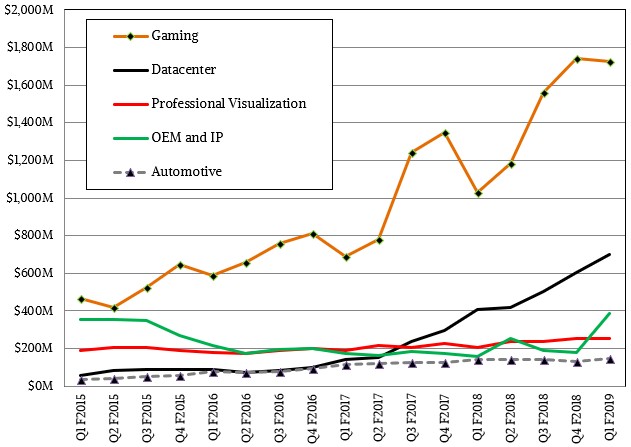

It is hard for Nvidia to reckon precisely how much money it made off of miners in the quarter, but Kress took a stab at it, saying that $289 million of the $387 million in total OEM revenue came from those mining Ethereum and other cryptocurrencies that are amenable to processing on GPUs. As Huang explained on the call with Wall Street analysts, the company creates special variants of its GPU cards, called CMP, which it peddles out of its OEM division (which also sells custom Tegra hybrid CPU-GPU cards), but even this division could not meet the cryptocurrency demand and Huang conceded that many miners bought GeForce cards, driving up prices. In any event, maybe there was as much as $350 million that came from cryptocurrency miners. Looking ahead to the second fiscal quarter, Kress forecast that cryptocurrency sales of CMP and GeForce products would be about a third of this level. This market has its ups and downs, just like the HPC and hyperscale businesses do. You can see the spike in the OEM line below to show just what a big jump it was in Q1:
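To put those mining figures in proportion, here is a small Python sketch. It takes the $3.21 billion in total quarterly revenue quoted earlier in the article, and it assumes that "a third of this level" could refer to either the $289 million OEM figure or the $350 million upper guess, so it computes both:

```python
oem_total = 387.0        # $ millions, total OEM revenue (from the text)
oem_crypto = 289.0       # $ millions, OEM revenue attributed to miners (from the text)
crypto_high = 350.0      # $ millions, upper guess including GeForce cards (from the text)
total_revenue = 3210.0   # $ millions, total quarterly revenue (from the text)

# How much of the OEM line was mining, and how much of the whole company.
crypto_share_of_oem = oem_crypto / oem_total
crypto_share_of_total = crypto_high / total_revenue

# Assumption: the forecast of "about a third" applies to one of these two levels.
next_quarter_low = oem_crypto / 3
next_quarter_high = crypto_high / 3

print(f"mining share of OEM revenue:   {crypto_share_of_oem:.1%}")
print(f"mining share of total revenue: {crypto_share_of_total:.1%}")
print(f"implied Q2 mining revenue:     ${next_quarter_low:.0f}M to ${next_quarter_high:.0f}M")
```

Even at the upper guess, mining works out to only about a tenth of total revenue, so a drop to a third of that level would be noticeable but not crippling.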

In that core datacenter business, sales have slowed in recent quarters and are no longer growing at triple digits, but the thing to remember is that this market is very spiky but definitely on an upward trend. Moreover, no vendor and no market can sustain triple digit growth forever. We don’t know when the GPU compute business will hit its equilibrium, growing at the rate of gross domestic product at worst and at the rate of overall compute more likely, but we think that day is many years into the future.

Nvidia’s datacenter business grew 71.4 percent year on year and was up 16 percent sequentially from the fourth quarter of fiscal 2018, which is pretty good considering that Nvidia has shipped many thousands of “Volta” Tesla V100 accelerators to IBM for use in the “Summit” and “Sierra” supercomputers being built for the US Department of Energy. Kress said that the HPC pipeline was building for Volta accelerators, particularly in the manufacturing and oil and gas sectors, and of course, GPUs are the engines of compute for machine learning training and are seeing uptake, particularly with the Pascals and Voltas, for machine learning inference.

Kress said that shipments of GPUs for inferencing to hyperscalers and cloud builders more than doubled compared to the prior fourth quarter, and with the combination of the GPUs plus the TensorRT inferencing stack that Nvidia has cooked up, the pipeline will keep building here. While Nvidia has inferencing engines in various devices, such as drones and cars, Huang said that the biggest opportunity for Nvidia in inferencing is in the glass house, and this is due to the flexibility and open programming model of the GPU.

“The largest inference opportunity for us is actually in the cloud and the datacenter,” explained Huang. “That is the first great opportunity. And the reason for that is there is just an explosion in the number of different types of neural networks that are available. There is image recognition, there is video sequencing, there is video recognition, there are recommender systems, there is speech recognition and speech synthesis and natural language processing. There are just so many different types of neural networks that are being created. And creating one ASIC that can be adapted to all of these different types of networks is just a real challenge.”

Public clouds are starting to ramp up Tesla V100s in their infrastructure, and we suspect that some are also buying Pascal Tesla P100s, too, to meet demand. This is also driving up sales.

The DGX line of hybrid CPU-GPU appliances is also adding to the datacenter revenues, and Huang said that it was now “a few hundred million dollar business.” We take this to mean that the annualized run rate for DGX system sales (including to Nvidia itself, so far its biggest customer) is running at a few hundred million dollars. The datacenter business as a whole has an annualized run rate of $2.8 billion based on the first quarter and has trailing twelve month sales of $2.22 billion, so DGX might represent 15 percent of datacenter revenues at this point, which is one reason why the DGX line exists and why Nvidia is not afraid to compete against its OEM, ODM, and cloud partners in servers.
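The 15 percent estimate depends on what “a few hundred million” means and which base you divide by. A rough Python sketch, assuming (hypothetically) that DGX sales run somewhere between $300 million and $400 million a year; those two bounds are illustrative guesses, not figures from Nvidia:

```python
def share(part, whole):
    """Return part as a fraction of whole."""
    return part / whole


run_rate = 2.80    # $ billions, annualized datacenter run rate (from the text)
ttm_sales = 2.22   # $ billions, trailing twelve month datacenter sales (from the text)

# An annualized run rate is one quarter multiplied by four.
quarter_behind_run_rate = run_rate / 4

# Hypothetical bounds for "a few hundred million dollars" of DGX sales.
dgx_guesses = (0.30, 0.40)

print(f"quarter implied by run rate: ${quarter_behind_run_rate:.2f} billion")
for dgx in dgx_guesses:
    print(
        f"DGX at ${dgx:.2f}B: "
        f"{share(dgx, ttm_sales):.1%} of trailing sales, "
        f"{share(dgx, run_rate):.1%} of run rate"
    )
```

Under those assumed bounds the DGX share lands somewhere between roughly 11 percent and 18 percent, which brackets the 15 percent estimate.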

The thing everyone wants to know is how this datacenter business will grow. In the first and second quarters of fiscal 2018, this business was nearly tripling in size, and in the third and fourth quarters it was just a tad more than doubling. It is hard to say for sure if the growth rate will stay around 70 percent or spike again as Volta ramps and becomes more widely available on clouds, driving more consumption for HPC, AI, and database workloads and in turn generating more sales for Nvidia. This stuff is very hard to predict. Even with an AI model running on GPUs.

Q: “First, who says you can’t make money in hardware?”

A: People who don’t understand and don’t love technology.

You can make money, but not as much as if you also have some form of services. Nvidia requires registration for its GFE, and there is some metrics gathering. Nvidia also has some services income. But that coin mining/blockchain tide has lifted both Nvidia’s and AMD’s boats, and now that consumer GPU MSRPs are beginning to return to normal, those consumer/gaming revenues boosted by coin mining will return to mostly consumer/gaming revenues, where the markups are not very large compared to the professional GPU markups.

AMD really will see more revenue gains from its Epyc CPU sales from now on than from its consumer/gaming GPU revenues, which will soon be of lesser importance. There will come a time when AMD’s share price volatility will be well buffered and reduced by much larger Epyc server CPU revenues quarter to quarter. AMD will also get more graphics market share via its Raven Ridge APUs with integrated Vega graphics, in addition to that semi-custom discrete “Vega” GPU die that sits atop that Intel EMIB/MCM module, with more consumer graphics share piggybacked along on Intel’s Kaby Lake G series SKUs.

Intel currently, as a percentage of the total integrated and discrete graphics markets, has the largest market share on integrated graphics alone, with Nvidia having a commanding lead in the discrete mobile gaming market. The coin miners have always wanted AMD’s consumer gaming GPUs more, for those gaming GPUs’ excess compute resources when compared to Nvidia. So if there is stock of AMD’s Polaris and Vega GPUs available, then the miners will go with AMD’s SKUs first, and Nvidia’s GPUs will once again be available for gamers more than miners. Nvidia has strategically chosen to reduce the compute on its consumer gaming only SKUs in order to sell the power savings, as Nvidia reserves its excess compute for only its professional Tesla and Quadro branded lines of costly pro SKUs.

With Vega, and to a lesser degree Polaris, AMD will still be the go-to choice for miners; one need only look at Vega’s shader core counts compared to Nvidia’s consumer GPU shader core counts, and at the lesser FP Tflops on Nvidia’s consumer SKUs. AMD really does not have to worry about any reduction in mining demand, because Nvidia’s mining demand will dry up before AMD’s mining demand does. AMD is really focused on only the mainstream gaming market, with Nvidia getting the flagship GPU crown, but that flagship GPU market revenue stream pales in comparison to the mainstream gaming GPU market, where Nvidia and AMD currently compete with each other on a relatively level playing field. Vega 64 competes nicely against Nvidia’s GTX 1080, and Vega 56 competes nicely against the GTX 1070 SKU. AMD is still going to be selling more of its Vega graphics capable Raven Ridge APUs in the laptop and desktop markets, in addition to that Intel/“Vega” semi-custom discrete mobile die on the EMIB/MCM Kaby Lake G line of Intel SKUs.

AMD’s first GPU at 7nm is a Vega 20 professional market variant to replace the Vega 10 base die based Radeon Instinct MI25 compute/AI SKU. So AMD knows that it can earn more money from its GPUs in the professional markets, where that larger markup can and will be paid. Vega 20 is getting some additional AI oriented instructions and, at 7nm, maybe a higher compute shader core count, or AMD may just choose to do another variant with dual GPU dies on a single PCIe card and get double the complement of shaders that the current Vega 10 die provides. AMD has made dual GPU die SKUs in the past, most notably the Radeon Pro Duo a few years back.

Of more importance with Vega, and doubly so for Vega 20, is the fact that Vega supports the Infinity Fabric, so that is a more coherent way of interfacing any new dual GPU dies on a single PCIe card than the old way, via a PCIe interface across that single card, as was done on the old Radeon Pro Duo. So Vega with the Infinity Fabric sort of presages what AMD’s Navi will do with smaller modular/scalable GPU dies when that arrives. The Infinity Fabric is already in place for Vega, so AMD could very well create a larger logical GPU out of two Vega 20 dies on a 7nm process node and really have plenty more DP/SP FP flops compared to even Nvidia’s Volta. And Vega (current and Vega 20) supports Rapid Packed 16 bit math, whereby two FP16 calculations are loaded into one 32 bit Vega FP unit, so that is a very large amount of FP16 Tflops right there with Vega 10, and even more for any dual die on a single PCIe card Vega 20 variant if AMD so chooses.

Currently it takes 80 Vega 10 base die based Radeon Instinct MI25s and 20 Epyc CPUs/MBs, at 32 cores each, to provide a complete Project 47 petaflop (32 bit) compute/inference cabinet. Also, Vega 20 is going to have a 1/2 DP FP to SP FP ratio compared to Vega 10’s 1/32 DP FP to SP FP ratio. So Vega 20 will be the go-to for any future Epyc/Radeon supercomputer potential customers that may need all the DP FP that can be squeezed into a cabinet.
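The ratios above can be turned into rough per-cabinet numbers. A minimal Python sketch, assuming the cabinet delivers exactly one petaflops of 32 bit throughput across its 80 cards, and assuming (hypothetically) that a Vega 20 card would match Vega 10's single precision rate. Neither assumption is an AMD specification, and the 1/32 and 1/2 ratios are taken as stated above:

```python
CARDS_PER_CABINET = 80
CABINET_SP_TFLOPS = 1000.0   # one petaflops at 32 bit, as stated above

# Implied single precision throughput per card.
sp_per_card = CABINET_SP_TFLOPS / CARDS_PER_CABINET

# Packed math: two FP16 operations share one 32 bit FP unit.
fp16_per_card = sp_per_card * 2

# Double precision at the two DP-to-SP ratios quoted above.
dp_per_card_vega10 = sp_per_card / 32
dp_per_card_vega20 = sp_per_card / 2   # hypothetical: same SP rate as Vega 10

dp_cabinet_vega10 = dp_per_card_vega10 * CARDS_PER_CABINET
dp_cabinet_vega20 = dp_per_card_vega20 * CARDS_PER_CABINET

print(f"SP per card:        {sp_per_card:.2f} Tflops")
print(f"FP16 per card:      {fp16_per_card:.2f} Tflops")
print(f"DP cabinet, 1/32:   {dp_cabinet_vega10:.2f} Tflops")
print(f"DP cabinet, 1/2:    {dp_cabinet_vega20:.2f} Tflops")
```

On those assumptions, moving from a 1/32 ratio to a 1/2 ratio would lift double precision throughput in the same 80 card cabinet by a factor of 16.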

Right, Epyc doing production workloads requires placing GPU and all other acceleration and co-processing control on the system to subsystem bus and not intentionally on the CPU private / back side bus.

mb

Making money in hardware . . .

I will again champion Intel opening up all back in time processor system bus (pre-1151, 2011x) to every independent core logic/hub design producer enabling all back in time processor salvage that is not technically obsolete control/data processing plane renewed CPU subsystem features and utilities.

Accelerated patterning so rapidly through process node regimes, benefiting processor alone, and Intel alone, not missed but neglected all the associated co-processing, acceleration, sub system build opportunities . . . for generations of previous central processing units extending to some system on chip.

There is nothing obsolete about Sandy, Ivy and Haswell generation quad mobile / desktop in particular, running consumer and embedded systems, Xeon renewed for applications that do not require state of the art commercial utilities.

This is called social welfare value.

Mike Bruzzone, Camp Marketing