In a very real sense, the world’s three biggest public cloud operators are also among the largest server and storage manufacturers. Sure, they subcontract the actual manufacturing work to OEMs or ODMs, just like most name brand server and storage array makers do these days, but in the end, they control what goes into the gear and how it is made and they are its only customer.

No one knows if all workloads will eventually migrate to public clouds or not. That is the honest truth. The volume economics would argue that workloads and data will eventually migrate to the largest and cheapest pools possible, though this is mitigated somewhat by security, provenance, and sovereignty concerns surrounding data and by the sheer difficulty of moving terabytes or petabytes of data over the Internet. What we all know is that most enterprises want both infrastructure and platform cloud services and are not ready to just go all the way to software as a service, and that most enterprises envision themselves operating in a hybrid cloud mode, with capacity in their own datacenter and capacity in one or more public clouds.

Amazon Web Services, as the pioneer in infrastructure cloud services and an innovator in platform cloud, has always said that cloud, by definition, means public cloud – and only public cloud. And because AWS is the volume leader, cloud therefore means Amazon’s cloud, and the book seller turned IT supplier has not been shy about saying that, “in the fullness of time,” as senior vice president in charge of AWS Andy Jassy always puts it, AWS will be larger than the Amazon retail business. That will mean growing AWS from its annual run rate of $7.3 billion last fall by more than an order of magnitude. But with something on the order of $1.4 trillion in datacenter systems, enterprise software, and services consumed last year by the world’s IT organizations, an AWS that is on the order of $90 billion – about the size of IBM these days – is not impossible or even unreasonable. (Such growth will take time, of course.)
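To make that arithmetic concrete, here is a minimal sketch that works out the growth multiple and the implied share of spending. It uses only the figures quoted above and assumes the total market is on the order of $1.4 trillion; the function and variable names are ours, chosen for illustration.

```python
# Figures quoted in the article, expressed in billions of US dollars.
AWS_RUN_RATE = 7.3   # annual run rate "last fall"
AWS_TARGET = 90.0    # a future AWS "about the size of IBM"
# Assumption: total systems, software, and services spending of roughly
# $1.4 trillion, written here as 1,400 billion.
IT_MARKET = 1400.0


def growth_multiple(current: float, target: float) -> float:
    """Return how many times larger the target is than the current value."""
    return target / current


def market_share(revenue: float, market: float) -> float:
    """Return revenue as a fraction of the total market."""
    return revenue / market


multiple = growth_multiple(AWS_RUN_RATE, AWS_TARGET)
share = market_share(AWS_TARGET, IT_MARKET)

print(f"growth needed: {multiple:.1f}x")    # growth needed: 12.3x
print(f"share of spending: {share:.1%}")    # share of spending: 6.4%
```

A roughly twelvefold increase is indeed more than an order of magnitude, yet under these assumptions it would still amount to well under a tenth of overall spending.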

Both Microsoft and Google wanted to skip the infrastructure cloud and expose their infrastructure to the outside world as platform services, and they were rebuffed by most enterprise customers. The leap from what enterprises had in their datacenters – virtualized servers running a hodge-podge of hundreds to thousands of applications – was too great. That is why AWS EC2 compute and S3 and EBS storage took off. While the gap between the corporate datacenter and these basic AWS services is still large, the spark can jump it. This is why Google and Microsoft ate some humble pie a few years ago and rolled out their own respective Compute Engine and Azure Virtual Machines services. But Google and AWS still believe, fundamentally and at their core, that cloud means public cloud – unless you are a US government agency with hundreds of millions of dollars to spend on a truly private public cloud – and this is leaving an opening for Microsoft Azure to exploit.

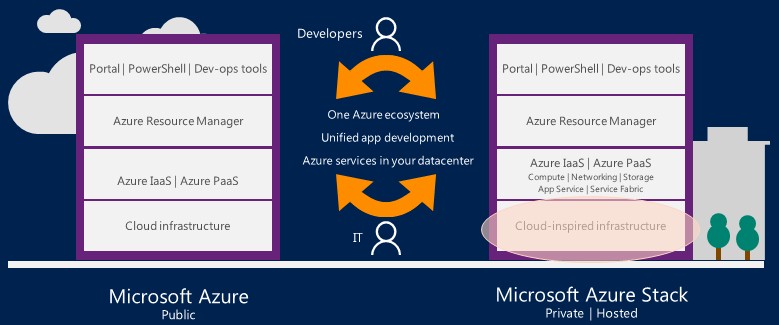

Exploiting that opening is precisely what Microsoft intends to do with the launch today of a preview of Azure Stack, a set of software that will allow enterprises and service providers to create a private cloud that looks and smells and feels and operates like a baby version of the actual Azure cloud that Microsoft operates at hyperscale. The company is also counting on its vast installed base of tens of millions of Windows Server instances running applications worldwide in other people’s datacenters.

“A lot of people think that cloud is a place, and we reject that thinking,” says Jeffrey Snover, Technical Fellow and chief architect of enterprise cloud at Microsoft. “We think of the cloud as a model that can exist in multiple places.”

To put it bluntly, this is just like the way a cloud exists in multiple places – datacenters and regions, they are called – in a public cloud like those from AWS, Google, Microsoft, and others. Arguing about how that cloudy infrastructure is broken up is not the point. Showing how modern cloud software is better than traditional and more rigid infrastructure is where the focus has to be, according to the top brass running Azure and their peers at Microsoft who are trying to bring it into the datacenters of the world. (Whether it stays there forever or not, no one knows.)

Snover, speaking at a prebriefing for analysts in Microsoft’s Bellevue offices ahead of the Azure Stack launch, knows a thing or two about the fragility and annoyance of infrastructure in the corporate datacenter. Way back in the day, during the dot-com boom, he worked as an architect in the office of the chief technology officer at Tivoli Software, the systems management tool maker that was acquired by IBM in 1996. Snover moved to Microsoft in 1999, and among many things he is the inventor of the PowerShell scripting environment and automation engine for Windows Server. Organizations are railing against shadow IT, but the hoops that developers and system administrators have to jump through to get basic server, storage, and networking infrastructure for developing, testing, and deploying applications are too unwieldy and time consuming. In fact, public cloud providers have been counting on that. The answer, as far as Microsoft is concerned, is to make private infrastructure look like the public clouds – in the case of Azure Stack, quite literally.

“We did the traditional model for all sorts of great reasons, and it has served us very well,” Snover explains. “But in reality, what we would end up with is a bunch of dedicated infrastructure for each application, and often we would have custom hardware especially for an application and people and processes all tuned to an application. When you step back a bit, you see this is a pretty brittle, non-agile environment. You end up with each company – and each group within each company – ends up building their own little worlds. It is perfectly natural, because as an industry, we gave people a bunch of components and a rabbit’s foot and wished them the best of luck to figure out how to put these components together to solve their problem. And they did, with each organization coming up with their own solution. But you end up with enterprise-specific languages and environments, and when you want to hire someone, there is a very high training cost to get them up to speed. Every company has had the experience of hiring someone who is a superstar in one organization and they fail when they come to your organization, and the reason is that the success is a function of the environment, and when the environment is so different, it is hard for people to be effective.”

As it turns out, scaling down infrastructure like that peddled by AWS, Microsoft Azure, and Google Compute Engine is harder than scaling it up, which all three have perfected out of necessity. And the reason why you don’t see scaled-down versions of public cloud infrastructure, says Snover, is that it is really hard to do and do right. Arguably, Microsoft’s own first pass, the Azure Pack extensions to the Windows Server platform, which are orchestration and control elements culled from the Azure public cloud, was not as faithful to Azure as it needed to be. Ditto for the hosted and managed OpenStack cloud from Rackspace Hosting, which is not a precise carbon copy of the cloud running inside of Rackspace itself. VMware has the combination of its vCloud Air public cloud and its vRealize cloud automation tools, but its public cloud is small and, thus far, very few of its ESXi and vSphere customers use the vRealize (formerly vCloud) wares to convert their virtualized servers into private clouds.

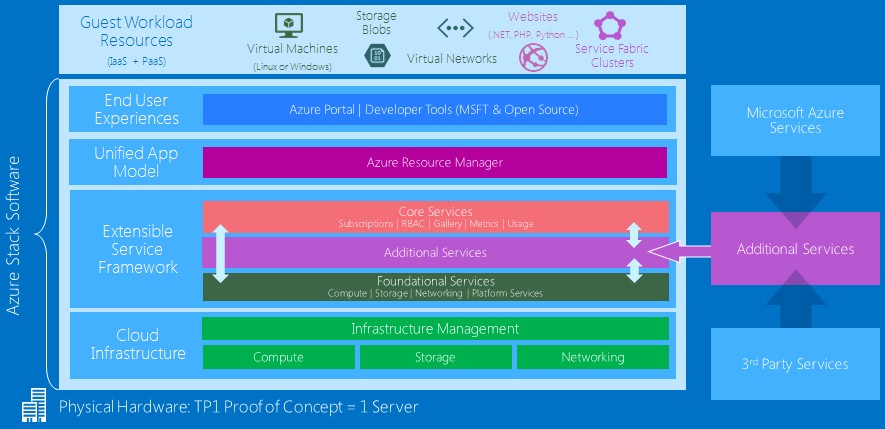

With Azure Stack, the goal is to give system administrators and developers the same experience for virtualized and orchestrated infrastructure as they get on the Azure public cloud. With the first technical preview of Azure Stack, which is being announced today and will be available for download on Friday (January 29), the software will create a baby virtualized Azure cloud on a single server with fairly beefy requirements: a machine with 128 GB of memory, at least two eight-core processors, and five disk drives. That will give testers enough capacity to load up the ten virtual machines that run the Azure control plane and still have enough capacity to learn how to run an Azure private cloud and play with some virtual machines.
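As a rough illustration of those minimums, the sketch below checks a candidate test machine against the figures quoted for the preview. The `HostSpec` type and `shortfalls` function are hypothetical names of our own and are not part of any Microsoft tooling. We also treat the memory and disk figures as minimums, which is our reading of the requirements.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class HostSpec:
    """Hypothetical description of a single test server."""
    memory_gb: int
    sockets: int
    cores_per_socket: int
    disks: int


# Minimums as quoted for the first technical preview.
PREVIEW_MINIMUM = HostSpec(memory_gb=128, sockets=2, cores_per_socket=8, disks=5)


def shortfalls(host: HostSpec, minimum: HostSpec = PREVIEW_MINIMUM) -> list[str]:
    """List every way the host falls below the quoted minimums."""
    problems = []
    if host.memory_gb < minimum.memory_gb:
        problems.append(f"memory: {host.memory_gb} GB < {minimum.memory_gb} GB")
    if host.sockets < minimum.sockets:
        problems.append(f"processors: {host.sockets} < {minimum.sockets}")
    if host.cores_per_socket < minimum.cores_per_socket:
        problems.append(
            f"cores per processor: {host.cores_per_socket} < {minimum.cores_per_socket}"
        )
    if host.disks < minimum.disks:
        problems.append(f"disks: {host.disks} < {minimum.disks}")
    return problems


# Example: a lab machine that is short on memory and disks.
lab_box = HostSpec(memory_gb=96, sockets=2, cores_per_socket=8, disks=4)
for problem in shortfalls(lab_box):
    print(problem)
```

An empty list means the machine meets every quoted minimum; anything else names the component to upgrade before attempting an install.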

Future technology previews will allow this private Azure to span multiple server nodes, and by the time that Azure Stack is ready for primetime in the fourth quarter of this year – after Windows Server 2016 is out and ramping – it will stretch far enough for enterprises that want to support Windows and Linux workloads on the Hyper-V hypervisor on a very large scale. Our guess is Azure Stack will span many, many tens of thousands of virtual machines, which is about as large as any cloud in the enterprise gets these days, but Microsoft is not providing specifics about scale at this point because it is early in the customer testing cycle for Azure Stack.

At this point, Microsoft is also not saying what specific servers and storage servers will be certified to run Azure Stack when it becomes generally available at the end of the year, but the idea is clearly to bring some of the practices of hyperscalers down to enterprises even if they might not literally go out and buy Microsoft-designed Open Cloud Servers from Hewlett Packard Enterprise, Dell, or Quanta, which manufacture machines that are clones of those that Microsoft itself uses in the Azure public cloud. (We will be drilling down into the feeds and speeds of the Azure Stack, in terms of the hardware requirements and the Azure services that users can expect to be able to run locally in a separate article.)

No More Gold Plated Servers

Microsoft is not saying how many developers and companies it has hosted on the Azure cloud today, but Mark Russinovich, chief technology officer at Azure, whom we spoke to back in October about the future of cloud building, tells The Next Platform that Microsoft is adding more than 90,000 new customers each month to the Azure cloud and that 80 percent of the Fortune 500 are using Azure cloud services in one form or another. Azure has 22 regions around the world, and is adding two regions each in the United Kingdom, Germany, and Canada to get local processing in those countries. Azure Compute revenues and capacity have both doubled in the past twelve months, Russinovich adds, and over 40 percent of the revenue coming in for the Azure public cloud is coming from startups and established software developers who want to run their apps in the cloud.

Enterprise customers do not just want to run in hybrid mode across private and public clouds, though. They also want to get some agility when it comes to software development, and having flexible infrastructure that hooks into Visual Studio or Eclipse development tools just like Azure does and allows them to do continuous development in a modern, microservices manner is part of the bigger picture.

“If you take a look at applications and the demand to create agile applications, the old monolithic, three-tier, SOA-style applications of the IT world do not cut it anymore,” says Russinovich. “Teams have trouble getting feature updates into software, having it tested, and they can’t scale out efficiently to make the most use of the underlying hardware that the company is paying for. So we are seeing this trend towards microservices architectures and loosely coupled systems with small teams that develop independent components that can be tested, rolled out, and scaled efficiently. If you take a look at the infrastructure underneath, the only way that hyperscale public clouds get to the scale that they do is using industry standard hardware, basically minimizing the variations in the hardware. This gives you the agility to not have to worry about individual failures across a whole number of machines and throwing people at the problem. You have to take people out of the equation as much as possible,” he says, adding that “the traditional IT world is full of drama.”

These are arguments for cloud in general, of course, not just for Azure. But the point for Azure Stack is to have the private cloud that enterprises install look and feel as much as is possible like the real Azure cloud. Here is what the stack looks like conceptually:

The idea is that anything that works with Azure will hook into Azure Stack and basically see it as just another region, and having played around with the Azure Stack proof of concept a bit ourselves, it certainly is faithful to this idea as far as we can see. Visual Studio and Eclipse tools that deploy applications to Azure infrastructure will be able to deploy to Azure Stack private clouds in the same manner, and Chef and Puppet automation tools as well as PowerShell scripts will all work across the two Azures in the same fashion. The Azure Resource Manager control plane, which was spearheaded by Russinovich, is the same, too. The core services that provide role-based access control, subscriptions to virtual machines and resources are the same, too, and customers can even set up their VM instances to have the same feeds and speeds as Microsoft’s own Azure VMs. It will look and feel like the Azure cloud to developers, but system administrators will have to learn to run the infrastructure in slightly different ways.
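The "just another region" idea can be sketched as follows: the same deployment description is sent unchanged to two targets that differ only in their endpoint. Everything here is hypothetical and purely illustrative, including the `CloudTarget` type, the `.example` hostnames, the URL path, and the template fields. None of it reflects the actual Azure Resource Manager API.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class CloudTarget:
    """Hypothetical deployment target: a public region or a private cloud."""
    name: str
    endpoint: str  # placeholder base URL, not a real service address


PUBLIC = CloudTarget("public-region", "https://public-cloud.example")
PRIVATE = CloudTarget("private-stack", "https://private-cloud.example")

# One illustrative template, written once and reused for both targets.
TEMPLATE = {
    "resources": [
        {"type": "virtualMachine", "name": "web-01", "size": "small"},
    ]
}


def build_request(target: CloudTarget, resource_group: str, template: dict) -> dict:
    """Build a deployment request; only the endpoint depends on the target."""
    return {
        "url": f"{target.endpoint}/resource-groups/{resource_group}/deployments",
        "body": template,
    }


public_request = build_request(PUBLIC, "demo", TEMPLATE)
private_request = build_request(PRIVATE, "demo", TEMPLATE)

# The payload is identical; only the address it is sent to changes.
print(public_request["body"] == private_request["body"])
print(public_request["url"])
print(private_request["url"])
```

The design point is that tooling built against one target needs only a different endpoint to address the other, which is what lets scripts and deployment tools carry over unchanged.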

Any company deploying applications to a public cloud has to learn something new because each cloud has its own language for talking about the infrastructure and the services that connect it or run on top of it. The key thing about Azure Stack – and this is something that is only true for Microsoft right now across all public and private cloud providers – is that the new metaphors for describing compute, storage, networking, and services that customers learn for one side of the firewall will apply to the other side. Windows Server customers in particular, who are naturally predisposed to use Microsoft’s Azure public cloud, need only learn one new thing and they have covered both their private and public cloud bases.

That vast installed base of Windows Server coupled to what is for all intents and purposes infinite compute and storage on the Azure public cloud is going to be a very compelling combination for enterprises. Particularly as they dabble and also try to rein in shadow IT. We are dying to find out how much more expensive an Azure Stack private cloud will be to set up and run compared to the exact same infrastructure on the Azure public cloud, and can’t wait to do that math. How that math works out, and how sensitive and heavy the data is, will determine how much compute and storage stays in the enterprise datacenter and how much shifts to the public cloud.

Azure Stack cloud software could also be useful for countries with small or medium-sized populations (in Central America or Eastern Europe, for example), helping ministries and government agencies build services for citizens.

This can also be Azure’s downfall. The problem with Azure Stack is the client-side upgrade. Since it will be on the client’s premises, it will be subject to the client’s change control and enforced upgrade policies. Firms are inherently unwilling to take risks, which will create version drift and leave Microsoft personnel scrambling to catch up with hotfixes and minor versions. As a result, Microsoft will be forced to make concessions and create “exceptions.” This will eventually create an unmanageable monstrosity that is many versions and patches behind. The right way to go is the way Google and AWS went: public cloud, which creates a versatile and agile platform.