While many consumers in the U.S. might not have heard much about Baidu, among engineers and computer scientists the Chinese company is on par with Google, Facebook, and their ilk when it comes to massively scaled distributed computing. It uses that iron to deliver software capabilities that require such scale.

Baidu has garnered significant recognition for pioneering efforts in machine learning and deep neural networks, which the company uses to deliver services including near real-time speech recognition across vastly different languages, as well as English-to-Mandarin translation (and vice versa). As at other hyperscale companies, the tough work on the software side is matched by massive hardware optimization efforts which, when combined, make Baidu a major force in shaping the next generation of hyperscale services powering complex real-time applications, the ones most of us take for granted because they give the illusion of fitting into our smartphones.

Baidu made a splash this week with an announcement about the complex neural network activity required to spin English and Mandarin in real time around the world. But of greater interest to us here at The Next Platform are the key questions around how large-scale infrastructure can be tuned and optimized to meet the competing concerns of scalability, accuracy of results on real-time requests, computational and datacenter efficiency (in terms of resource utilization and power consumption), and of course, cost.

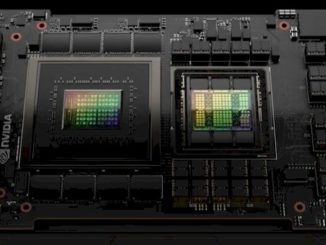

To deliver against all of these competing parameters, and to feed deep neural network training, Baidu has looked to GPU acceleration, particularly for training models based on many millions of data points, then rolling those models out to production systems. Interestingly, Baidu is taking a page from the books of supercomputing in its approach to solving problems at scale, both in terms of optimization and, more theoretically, in how it thinks about massively parallel problem solving.

Baidu’s Silicon Valley AI Lab is at the heart of many of these efforts and according to one of the leads there, Bryan Catanzaro, the evolution of both these problems, and their potential solutions, at scale tends to be swifter than even his team of hardware, AI, and other experts expects.

Catanzaro was the first employee at the AI lab, right behind chief scientist Andrew Ng and research lead Adam Coates. Before moving to Baidu, Catanzaro worked at Nvidia, where he collaborated with Coates on large-scale deep learning using commercial off-the-shelf HPC technologies. He continued this collaboration with Coates at Baidu, where they decided to apply HPC for deep learning to speech recognition. The team went from no infrastructure to their first functional speech recognition engine in just a few months and moved quickly to scale from four to eight GPUs for model training, a notable feat given some of the communication and other hurdles of multi-GPU systems, particularly for data-rich training workloads.

Earlier this week, we looked at how Facebook approaches similar issues with its GPU-laden neural network training systems, but for Baidu, the first hurdles came with optimizing such a system for efficiency. Catanzaro says that at first there was doubt about whether moving from four to eight GPUs per box would be the right approach, due to concerns about having enough CPU bandwidth to keep the thousands of cores on the GPUs busy, but the team took the plunge with their hardware provider, Colfax International.

The other evolution for Baidu’s neural network training infrastructure was a shift away from Kepler-generation GPUs to Maxwell-based cards. As we detailed last month, Nvidia now offers Tesla M40 (for training) and companion M4 (for inference) accelerator cards aimed at deep learning and hyperscale workloads. While Facebook is one key early user of the M40, Baidu’s research team opted for the slightly lower-performing but presumably far cheaper Titan X GPUs, which pack a whopping 6-plus teraflops peak into a $1,000 GPU package.

This brought a big jump in efficiency since the team was able to get a far higher percentage of peak throughput on the Maxwells than on the Keplers. With the Kepler-generation GPUs, even after optimizing for their single-precision matrix multiply-based workloads, they weren’t getting above 75 percent of peak, but with the Maxwell generation, Baidu is seeing closer to 95 percent of peak. Given previous pricing for other Tesla cards, our own estimate is that the M40 GPUs will be in the $3,500-$4,500 range.

“We went to a denser box, a newer generation GPU, and spent a lot of time on software optimization for other systems guided by an HPC philosophy wherein we think about systems in terms of finding the theoretical limits of systems and looking at how close we are to saturating those. This informs where we need to invest time to make the next improvements. It’s this and a lot of little optimizations that ultimately make a huge difference in scalability.”

Of course, peak teraflops and real-world performance are different things entirely. Catanzaro says they are getting 3 teraflops out of their Titan X GPUs on their neural network code. What is most interesting here is that they have made yet another GPU computing leap, scaling first from four to eight GPUs in a single box, then going on to use sixteen GPUs per training run, an uncommon feat that Catanzaro says will gain traction elsewhere in time, especially once optimization tricks of the trade are passed down the line at other companies. A lot of those “tricks” to getting closer to peak throughput are driven by an “HPC philosophy,” Catanzaro says, and those efficiency numbers are, indeed, quite a bit higher than what we’ve seen from the national labs running a large number of GPUs against parallel scientific applications.

The Colfax systems on which Baidu runs its speech recognition neural network training algorithms feature two “Haswell” Xeon E5-2600 v3 series processors from Intel in a 4U rack-mounted configuration. These systems are a bit less heavily engineered than the custom machines Dell created five years ago to cram 16 GPUs into a single system. (The Colfax system can use hotter GPUs than the Dell machine of years gone by.) Given the 30 kW per rack power constraint Baidu is tied to, having a denser configuration would not work anyway. The graphics cards each consume around 250 watts, the CPUs gobble around 700 watts with memory and other considerations factored in, and overall, Baidu’s training cluster zaps about 2.7 kW per node, so just under 27 kW for the entire ten-node rack.
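The power figures above can be checked with a few lines of arithmetic. This is only a sketch of the budget as quoted: the eight-GPUs-per-node count is taken from the "four to eight GPUs per box" description and is assumed to hold for every node, and the function names are illustrative, not anything Baidu or Colfax uses.

```python
# Figures quoted in the article. GPUS_PER_NODE = 8 is an assumption drawn from
# the "four to eight GPUs per box" description.
GPU_WATTS = 250           # per graphics card
GPUS_PER_NODE = 8
CPU_AND_REST_WATTS = 700  # CPUs plus memory and other components
NODES_PER_RACK = 10
RACK_LIMIT_WATTS = 30_000


def node_power_watts(gpus: int = GPUS_PER_NODE) -> int:
    """Estimated draw of one node with the given number of GPUs."""
    return gpus * GPU_WATTS + CPU_AND_REST_WATTS


def rack_power_watts(nodes: int = NODES_PER_RACK, gpus: int = GPUS_PER_NODE) -> int:
    """Estimated draw of a rack filled with identical nodes."""
    return nodes * node_power_watts(gpus)


def max_nodes_per_rack(limit_watts: int = RACK_LIMIT_WATTS,
                       gpus: int = GPUS_PER_NODE) -> int:
    """How many identical nodes fit under the rack power limit."""
    return limit_watts // node_power_watts(gpus)


if __name__ == "__main__":
    print(node_power_watts() / 1000, "kW per node")
    print(rack_power_watts() / 1000, "kW per ten-node rack")
    print(max_nodes_per_rack(), "nodes fit under the rack limit")
```

Eight cards at 250 watts plus 700 watts for the rest of the node gives the 2.7 kW quoted, and ten such nodes stay under the 30 kW rack limit.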

The Baidu neural network training cluster has over 100 nodes…and growing.

Users want accurate results, and they want them immediately. Therein lies another computational challenge, one for which the Baidu AI team has found a clever workaround that incorporates the HPC philosophy Catanzaro speaks of frequently. Latency is all about optimization, and certain techniques they’ve developed go a long way toward balancing latency, accuracy, and efficiency. One of these is “batch dispatch,” which bundles user requests on the inference/production side so they are executed together, avoiding data movement overhead (even if it increases the math demands on any node). In such a view, if cost can be measured loosely as how many user requests can be served on a single piece of hardware, reducing it becomes an optimization problem that, solved once, provides big efficiency leaps.
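To make the idea of bundling requests concrete, here is a small simulation of one plausible batching policy. It is a sketch under stated assumptions, not Baidu's implementation: whenever the hardware becomes free, every request that has already arrived (up to a cap) is run as one batch, and each batch is assumed to take the same fixed time regardless of size. The function name `simulate_batch_dispatch` and its parameters are hypothetical.

```python
from typing import List, Sequence, Tuple


def simulate_batch_dispatch(
    arrivals: Sequence[float],
    service_time: float,
    max_batch: int,
) -> List[Tuple[float, List[int]]]:
    """Group requests into batches as the (single) worker becomes free.

    arrivals     -- request arrival times, sorted ascending
    service_time -- assumed fixed time to run one batch of any size
    max_batch    -- cap on requests per batch
    Returns a list of (batch start time, indices of requests in the batch).
    """
    if max_batch < 1:
        raise ValueError("max_batch must be at least 1")

    batches: List[Tuple[float, List[int]]] = []
    free_at = 0.0   # time at which the worker finishes its current batch
    i = 0
    n = len(arrivals)
    while i < n:
        # Start as soon as the worker is free and a request is waiting.
        start = max(free_at, arrivals[i])
        j = i
        # Take everything that has arrived by the start time, up to the cap.
        while j < n and arrivals[j] <= start and j - i < max_batch:
            j += 1
        batches.append((start, list(range(i, j))))
        free_at = start + service_time
        i = j
    return batches


if __name__ == "__main__":
    # Five requests are served with three model invocations instead of five.
    for start, members in simulate_batch_dispatch([0.0, 0.1, 0.2, 0.3, 1.5], 1.0, 8):
        print(f"t={start:.1f}: run requests {members}")
```

Under light load each request runs almost alone, so latency stays low; under heavy load batches grow, and more requests are served per invocation on the same hardware.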

Training neural networks is a high-throughput bit of business, with millions or perhaps billions of training samples being pumped through. The end goal is to deliver fast, accurate results. “We don’t want to compromise on accuracy. There is always a little though when you take a research system and translate it to a production environment because of the real time constraints.” For Catanzaro’s team, accuracy took a 5 percent hit moving from research to production, but that still falls within an acceptable range, and as the team builds out the existing 100-node GPU-powered training cluster, they expect that to improve.

The team is working past a number of other issues for multi-GPU systems running real-time applications at scale. For instance, to get around some challenges inherent in existing MPI libraries, Baidu lab researchers wrote their own way to better exchange messages between GPUs using an operation called All Reduce.
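For readers unfamiliar with the operation: an all-reduce leaves every participant holding the element-wise sum of all participants' buffers, which is how gradients from several GPUs are typically combined during training. The sketch below simulates one common way to do it, a ring all-reduce, in plain Python. The article does not say which algorithm Baidu's version uses, so the ring scheme and the `ring_allreduce` name are assumptions for illustration only.

```python
from typing import List, Tuple


def chunk_bounds(length: int, parts: int) -> List[Tuple[int, int]]:
    """Split range(length) into `parts` contiguous (start, end) slices."""
    base, extra = divmod(length, parts)
    bounds, start = [], 0
    for p in range(parts):
        end = start + base + (1 if p < extra else 0)
        bounds.append((start, end))
        start = end
    return bounds


def ring_allreduce(buffers: List[List[float]]) -> List[List[float]]:
    """Simulate a ring all-reduce (sum) over equally sized per-worker buffers."""
    n = len(buffers)
    data = [list(b) for b in buffers]  # work on copies
    if n <= 1:
        return data
    if any(len(b) != len(data[0]) for b in data):
        raise ValueError("all buffers must have the same length")
    bounds = chunk_bounds(len(data[0]), n)

    # Phase 1, reduce-scatter: after n-1 steps worker r holds the full sum
    # of chunk (r + 1) % n.
    for step in range(n - 1):
        sends = []
        for r in range(n):  # snapshot first: all sends happen "simultaneously"
            c = (r - step) % n
            lo, hi = bounds[c]
            sends.append((c, data[r][lo:hi]))
        for r, (c, payload) in enumerate(sends):
            dst = (r + 1) % n
            lo, _ = bounds[c]
            for k, value in enumerate(payload):
                data[dst][lo + k] += value

    # Phase 2, all-gather: pass the finished chunks around the ring.
    for step in range(n - 1):
        sends = []
        for r in range(n):
            c = (r + 1 - step) % n
            lo, hi = bounds[c]
            sends.append((c, data[r][lo:hi]))
        for r, (c, payload) in enumerate(sends):
            dst = (r + 1) % n
            lo, hi = bounds[c]
            data[dst][lo:hi] = payload
    return data


if __name__ == "__main__":
    grads = [[1, 2, 3, 4], [10, 20, 30, 40], [100, 200, 300, 400]]
    print(ring_allreduce(grads))
```

In a ring, each worker only ever talks to its neighbor and sends roughly twice its buffer size in total, regardless of how many workers take part, which is one reason the scheme is popular for multi-GPU training.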

Other components, including the deep learning-focused CTC (connectionist temporal classification), have been optimized to run entirely on the GPU, so as to preserve interconnect bandwidth between GPUs for scalability. Their CTC implementation builds on work to parallelize other dynamic programming algorithms for GPUs, such as those for DNA sequence alignment. Further, the team is looking at how new possibilities, including the addition of NVLink and, further down the road, FPGAs, might strengthen both the training and inference systems.
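The dynamic programming at the heart of CTC can be sketched in a few lines. What follows is the standard textbook forward recursion, written sequentially in plain Python for clarity; it is not Baidu's GPU implementation, and the function name `ctc_probability` and its argument layout are assumptions made for this example.

```python
from typing import List, Sequence


def ctc_probability(probs: Sequence[Sequence[float]],
                    label: Sequence[int],
                    blank: int = 0) -> float:
    """Probability that the per-frame outputs collapse to `label` under CTC.

    probs[t][k] -- probability of symbol k at time step t
    label       -- target sequence of non-blank symbol ids
    """
    # Interleave blanks: [a, b] -> [blank, a, blank, b, blank]
    ext: List[int] = [blank]
    for sym in label:
        ext += [sym, blank]
    S, T = len(ext), len(probs)
    if T == 0:
        return 1.0 if not label else 0.0

    # alpha[s] = total probability of all partial alignments ending at ext[s]
    alpha = [0.0] * S
    alpha[0] = probs[0][blank]
    if S > 1:
        alpha[1] = probs[0][ext[1]]

    for t in range(1, T):
        new = [0.0] * S
        # Each new[s] depends only on the previous time step, so this inner
        # loop is the part a parallel implementation can spread across threads.
        for s in range(S):
            total = alpha[s]
            if s >= 1:
                total += alpha[s - 1]
            # Skipping the blank is allowed only between different symbols.
            if s >= 2 and ext[s] != blank and ext[s] != ext[s - 2]:
                total += alpha[s - 2]
            new[s] = total * probs[t][ext[s]]
        alpha = new

    # Valid alignments end on the last symbol or the trailing blank.
    return alpha[S - 1] + (alpha[S - 2] if S > 1 else 0.0)


if __name__ == "__main__":
    frames = [[0.4, 0.6], [0.3, 0.7]]    # two time steps, symbols {blank, 1}
    print(ctc_probability(frames, [1]))  # sums paths "11", "1_", "_1"
```

The table has the same shape as the ones used in sequence alignment: a grid indexed by time and position in the target, filled one column at a time from a handful of neighboring cells.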

Deep Learning as Extreme Supercomputing

When we look at traditional high performance computing, we think of the future exascale systems, of the Top 500 list of supercomputers, measured as they are by peak floating point potential. But as we’ve covered quite extensively here in our first year as The Next Platform, that definition of what is valuable for real supercomputing applications is changing, and so the definition of what is (and is not) HPC is also evolving to include the convergence of HPC and machine learning.

Catanzaro says that deep learning on the Baidu training and inference clusters, despite its lack of double-precision math and partial differential equations, is a sort of extreme example of HPC. “If you think of supercomputing, not as a particular application domain set, but rather a mindset—how you approach problems—this is extreme HPC.” The concept of how systems reach their peak performance, and how one extracts and optimizes to get top performance and utilization, that is the real value proposition of HPC.

“The fact that we are able to sustain 45% to 50% of peak running on eight to sixteen GPUs on one problem is extreme HPC. But in that area, most full applications, not just the kernel, don’t get that kind of performance. We have gotten there, and HPC is working on those challenges.”

Catanzaro has been giving talks at key HPC events over the last year, making the point that deep learning and HPC are closely connected and have lessons to share, not to mention people who are skilled in these tough optimization challenges at scale. “A rising tide lifts all boats, and HPC people have been receptive because deep learning makes HPC more relevant.” What is happening at the national labs and their supercomputing sites is filtering into deep learning, and lessons from hyperscalers are likewise moving into HPC. This creates a richer pool of talent across labs, academia, and hyperscale web companies (and a more competitive one for young talent deciding between a lab and Google, a tough problem for labs). Ultimately, closer ties between the two will continue to be beneficial, especially on common problems like scaling multi-GPU systems, delivering fast results with high utilization and efficiency, and finding a host of code tricks that, in total, reduce power consumption and make systems more scalable.

It’s difficult to talk through all the optimizations and scalability concerns in one article. For those interested, Baidu has an in-depth paper exploring architectural and other considerations.

I think that for this type of load a different approach could yield better results. For example, using a huge NUMA cache-coherent cluster filled with hundreds of NVIDIA video cards. In this case one gets a huge “workstation” running one copy of Linux and tens of thousands of GPU cores in a single-image shared-memory OS. The guys at Numascale are perfectly able to build such machines.

We think the same thing and have said the same exact thing to both Cray and SGI.

BPLs will eventually spell the end of GPU dump NN as they will not require millions of datasets to learn