If not for the fact that there are several installed, functional quantum computers on the planet chewing away on tough problems—including the burdensome issue of their own operation—one might suspect that these systems were still more science fiction than reality.

Still, with each passing year, more quantum devices are installed, most recently at Los Alamos National Laboratory, in the hope of deploying them efficiently and finding solutions that far outpace what conventional approaches to computing can deliver. The work to create stable platforms for quantum computers, or more specifically, the requisite tooling to support actual applications, is often locked behind thesis research or kept a mostly guarded secret by the few users that are working with D-Wave machines. What this means, according to Dave Wecker, who heads the Quantum Architectures and Computation (QUARC) group at Microsoft Research, is that much of the work on stabilizing such platforms is tossed or stashed and thus doesn’t contribute to the future of quantum systems.

The real problem, Wecker says, is that while many organizations that understand the specific types of problems quantum systems solve know how they might use one in practice, they have no idea how to get to that point. In other words, knowing how a quantum computer works and how it might be made to work are two very different things.

Wecker knows about this problem firsthand, although not in the quantum computing context. He has led a number of big software and architecture projects at Microsoft and, perhaps of greater interest, some unique software and hardware projects in his early days on the research and engineering team at Digital Equipment (DEC). Wecker says that ultimately, the success or failure of such projects depended on whether a toolchain existed to support a potentially successful idea.

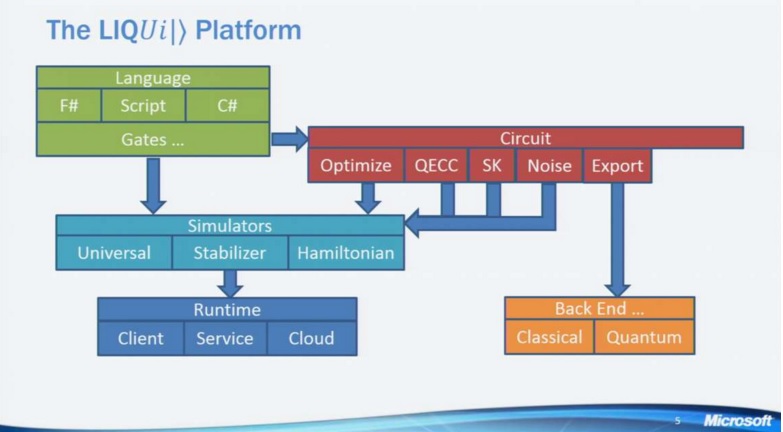

The goal of the LIQUID platform Microsoft Research is developing is to provide a full simulation environment that will make it easier to create complex quantum circuits. “To build a platform, what I did was start in the middle, after gathering a base sense, start at the abstract idea of what a QC is at the logic/gate level of QC, then worked my way up into circuits to assembly code equivalents, then moving up at the top a language that you could actually express in and compile down into that abstract model.”

Wecker says that the LIQUID platform was conceptualized in the same way many other computer science projects are, by starting in the middle of the stack. In this case, it began with understanding what a quantum computer is at the logic and gate level, then working up into circuits and, higher still, the assembly code equivalents. Sitting at the top of that model is a language that developers can express problems in, along with a sense of what is required to compile down into that abstract model.

Along the way, two main goals set the pace: providing a compilation approach and providing a broader range of simulation and computer-aided design tools. The difficulty is that it is not yet known exactly what such a large group of potential application end users will need. Ultimately, Wecker says, the problem boils down to efficiency. As part of this, the final platform should provide circuits that can be re-targeted for many purposes, including rendering, optimization, and exporting.

Before we get too far ahead of ourselves, the work Wecker and his team are doing is for quantum systems that are 10 to 20 years away. He says that pieces of real quantum devices exist now, but he believes that what we are seeing in the form of D-Wave machines is not yet at the point where it qualifies as a true quantum system that can be, say, connected via Azure and delivered as a service. Still, the work on developing a front-end system to program such machines is captured in LIQUID.

As seen below, there are a few ways to get into the system, but Microsoft is pushing F#. Wecker, a long-time functional programmer, says that this has its advantages, but he also shows a few other ways in the graphic below. The program compiles into gates and is sent off to one of three types of simulators: universal, Hamiltonian, and stabilizer. The universal and Hamiltonian approaches can perform a wide range of functions, and the Hamiltonian in particular is one of the most valuable for future large-scale machine learning applications because it does not abstract away much of the physics. However, both of these approaches are hugely memory intensive, and the Hamiltonian simulators are very slow. Wecker says that the team is working on a 30-qubit experiment now on a one-terabyte machine, but every added qubit doubles the memory requirements. This brings us to stabilizers, which can operate in the tens of thousands of qubits range and have less complex gates, but their application end use is somewhat limited.
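The doubling Wecker describes follows from how a dense state-vector simulator stores an n-qubit state: one complex amplitude per basis state, 2^n in total. The following is a minimal Python sketch of that arithmetic, assuming 16 bytes per amplitude (two 64-bit floats). The function name is hypothetical, and this is not LIQUID's own accounting; a real simulator may hold considerably more than a single state vector in memory.

```python
def state_vector_bytes(num_qubits: int, bytes_per_amplitude: int = 16) -> int:
    """Bytes needed to hold one dense state vector of 2**num_qubits amplitudes.

    Assumption: each amplitude is a complex number stored as two 64-bit
    floats (16 bytes). Workspace and any extra copies are not counted.
    """
    if num_qubits < 0:
        raise ValueError("num_qubits must be non-negative")
    return (2 ** num_qubits) * bytes_per_amplitude


if __name__ == "__main__":
    for n in (28, 29, 30, 31):
        gib = state_vector_bytes(n) / 2**30
        print(f"{n} qubits: {gib:.0f} GiB")
    # Prints 4, 8, 16 and 32 GiB: each extra qubit doubles the footprint.
```

Under these assumptions a single 30-qubit state vector takes 16 GiB, and ten more qubits would multiply that by 1,024, which is why dense simulation runs out of room so quickly.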

The main point of the above breakdown of the different simulators is that Microsoft Research is developing a “write once” approach that will let users do the tough work of encoding their problem once, with the option of sending that problem to any one of these three simulators, or of testing it on each. That is a big deal for efficiency and flexibility, Wecker explains.
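To make the “write once” idea concrete, here is a small Python sketch in which a circuit is described once and a helper decides which simulator families could accept it. Everything here is an illustrative assumption rather than LIQUID's API: the `Circuit` type, the gate names, the `eligible_backends` function, and the 30-qubit cutoff for dense simulation are all hypothetical. The one grounded rule is that stabilizer simulation handles only Clifford gates (such as H, S, CNOT and the Pauli gates), which is why it scales to many qubits but covers fewer applications. The Hamiltonian simulator is not modelled.

```python
from dataclasses import dataclass
from typing import List, Tuple

# A gate is (name, qubits it acts on). Names are illustrative only.
Gate = Tuple[str, Tuple[int, ...]]

# Gates a stabilizer simulator can handle (the Clifford group generators
# plus Paulis). A gate such as T falls outside this set.
CLIFFORD_GATES = {"H", "S", "X", "Y", "Z", "CNOT", "CZ"}


@dataclass
class Circuit:
    num_qubits: int
    gates: List[Gate]


def eligible_backends(circuit: Circuit, max_dense_qubits: int = 30) -> List[str]:
    """Return the simulator families that could run this circuit.

    Assumption: a dense "universal" simulator is limited by memory to
    max_dense_qubits; a stabilizer simulator is limited by gate set instead.
    """
    backends = []
    if all(name in CLIFFORD_GATES for name, _ in circuit.gates):
        backends.append("stabilizer")
    if circuit.num_qubits <= max_dense_qubits:
        backends.append("universal")
    return backends


if __name__ == "__main__":
    bell = Circuit(2, [("H", (0,)), ("CNOT", (0, 1))])
    with_t = Circuit(2, [("H", (0,)), ("T", (0,)), ("CNOT", (0, 1))])
    print(eligible_backends(bell))    # ['stabilizer', 'universal']
    print(eligible_backends(with_t))  # ['universal']
```

The same circuit description feeds every backend; only the eligibility check differs, which is the flexibility the article attributes to the platform.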

Under all of this sits the runtime, which eventually will include both individual clients and the company’s Azure service. In essence, this will allow the platform to run ensemble workloads without anything to tune. Of course, these are not workloads that are split up in traditional parallel fashion, but the team is working on a way to let such computations run on thousands of machines using all the threads. The circuits can be thought of as a data structure, one that can be optimized and run back through the simulators. At that circuit layer sit the capabilities for quantum error correction and the handling of noise (especially in simulations that by nature degrade over time). There too lies the possibility of shipping to a different backend, including classical supercomputers (they will still be necessary, at least for now), which can retrieve the circuit in a form that allows for linear algebra. Another key aspect of this output backend is rendering, a valuable service for researchers who spend a lot of time drawing circuits, and one that is now handled by LIQUID.
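Treating a circuit as a data structure is what makes optimization passes possible before anything is simulated. The Python sketch below shows one simple pass of that kind, under stated assumptions: a circuit is a plain list of (gate name, qubits) tuples, and two identical self-inverse gates that sit next to each other in the list cancel out. The function and gate names are hypothetical, and this is not a description of the optimizations LIQUID actually performs.

```python
from typing import List, Tuple

Gate = Tuple[str, Tuple[int, ...]]  # (name, qubits it acts on)

# Gates that undo themselves when applied twice to the same qubits.
SELF_INVERSE = {"H", "X", "Y", "Z", "CNOT"}


def cancel_adjacent_inverses(gates: List[Gate]) -> List[Gate]:
    """Remove back-to-back pairs of identical self-inverse gates.

    A stack is used so that cancellations cascade: once an inner pair is
    removed, the gates on either side become adjacent and may cancel too.
    """
    optimized: List[Gate] = []
    for gate in gates:
        name = gate[0]
        if optimized and optimized[-1] == gate and name in SELF_INVERSE:
            optimized.pop()          # the pair multiplies to the identity
        else:
            optimized.append(gate)
    return optimized


if __name__ == "__main__":
    circuit = [
        ("H", (0,)), ("X", (1,)), ("X", (1,)), ("H", (0,)), ("CNOT", (0, 1)),
    ]
    print(cancel_adjacent_inverses(circuit))  # [('CNOT', (0, 1))]
```

Because the pass takes a gate list and returns a gate list, its output can be fed straight back into a simulator or a renderer, which is the kind of re-targeting the article describes.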

It is, as one might imagine, impossible to list the functions and how they support the platform all in one place here, but there are some great talks on the subject for the more quantum-adept. And for those who do fall into that category, Microsoft Research is beefing up its quantum platform team.

Although the subject is around future systems (and likely not those that will find their way into our homes in our lifetimes) Microsoft’s goal is a familiar one: owning the platform but not the systems. Staking a claim to the operating system (used here as a general term) for a device is the key to dominating a market. If this effort is successful, D-Wave, and whoever else might crop up as a manufacturer of quantum machines, would own the device business while Microsoft would be the platform for development and for pushing quantum use to a broader base of users.

Of course, as with anything in the quantum computing space, it’s all conjecture. But early steps like this, not to mention the investments going into quantum computing’s future, are noteworthy: large companies are seeing enough of a future to put some of their leading minds behind development.

“Although the subject is around future systems (and likely not those that will find their way into our homes in our lifetimes)”

Careful, Nicole.

I think it is misleading to say “The work to create stable platforms for quantum computers, or more specifically, the requisite tooling to support actual applications, is often locked behind thesis research or kept a mostly guarded secret by the few users that are working with D-Wave machines.”

I think there are several issues with this statement.

1) I would put D-Wave machines in a category of “special case” quantum systems that, while interesting, are unlikely to be generally useful.

2) There are advancements from many university groups being reported every week. The literature is building up, while the possibilities for hardware are growing and look promising.

3) There isn’t a lot of evidence that industry has kept many technology secrets over a significant period of time. Of course everyone wants to get there first. But in complex subjects like this, it is unlikely that a single organization can discover something so universal as to take all the market. By this I mean the entire research ecosystem depends on many players making contributions. As a similar example of something nearly as complex, look at what has happened in Silicon Photonics over the last 10 years. The vast majority of the fundamentals are well published.

C# with Azure. Only Microsoft could make quantum computing uncool.

Well, there is probably increased effort on alternative computational structures, as most people in the know now understand that we are at the end of the silicon semiconductor lifetime. There is not much more to gain from further node shrinkage; at best there are only two (if we are lucky, maybe three) more shrinks left, and then it is pretty much game over. So innovation has to come from somewhere else.